Preparing to compile a Hadoop release

Installing the required software

1. Install gcc

yum install gcc

2. Install gcc-c++

yum install gcc-c++

This avoids the error: Cannot find appropriate C++ compiler on this system

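3. Java

See the author's related post on configuring Java on CentOS 6.5.

4. Install the other Linux dependency packages needed for the build

The following commands may require administrator privileges.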

yum install autoconf automake libtool cmake

yum install ncurses-devel

yum install openssl-devel

yum install lzo-devel zlib-devel

yum install ant make

Check that each tool installed successfully with xxx --version, for example:

autoconf (GNU Autoconf) 2.63

automake (GNU automake) 1.11.1

ltmain.sh (GNU libtool) 2.2.6b

5. Install Maven

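See the author's related post on installing Maven on CentOS 6.5.

One point worth stressing: when building from source, you sometimes have to take the drastic step of deleting ~/.m2/repository, which forces Maven to download every dependency again.

rm -rf ~/.m2/repository

6. Install protobuf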

- Download: wget https://protobuf.googlecode.com/files/protobuf-2.5.0.tar.gz, or get protobuf-2.5.0.tar.gz from the author's resources page

- Unpack the archive

tar -zxvf protobuf-2.5.0.tar.gz

- Enter the protobuf-2.5.0 directory and run the following commands

./configure

make

make check

make install

The make check step is very time-consuming.

- Verify: run protoc --version and check that it prints libprotoc 2.5.0
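The version check above can also be scripted. This is a minimal sketch, not part of the Hadoop build: protoc_version_of and protoc_version_ok are hypothetical helper names, and the code assumes that protoc --version prints a line of the form "libprotoc 2.5.0".

```shell
#!/bin/sh
# Hypothetical helper: extract the version number from a line such as
# "libprotoc 2.5.0" (the second whitespace-separated field).
protoc_version_of() {
    printf '%s\n' "$1" | awk '{print $2}'
}

# Hypothetical helper: succeed only if the reported version equals the wanted one.
# $1: output of `protoc --version`, $2: required version
protoc_version_ok() {
    [ "$(protoc_version_of "$1")" = "$2" ]
}

# Run the real check only when protoc is on the PATH.
if command -v protoc >/dev/null 2>&1; then
    if protoc_version_ok "$(protoc --version)" "2.5.0"; then
        echo "protoc 2.5.0 found"
    else
        echo "unexpected protoc version: $(protoc --version)" >&2
    fi
fi
```

Comparing the parsed version as a string keeps the check strict, which fits the requirement that protoc match the protobuf JAR version exactly.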

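7. Install cmake

- Download: wget http://www.cmake.org/files/v2.8/cmake-2.8.12.2.tar.gz, or get cmake-2.8.12.2.tar.gz from the author's resources page

- Unpack the archive

tar -zxvf cmake-2.8.12.2.tar.gz

- Enter the cmake-2.8.12.2 directory and run the following commands

./bootstrap

make

make install

- Run cmake --version to check the installation; if it reports cmake version 2.8.12.2, all is well.

8. Install the autotools

yum install autoconf automake libtool

9. Some sources online say FindBugs may also be needed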

- Download from

http://sourceforge.jp/projects/sfnet_findbugs/downloads/findbugs/3.0.0/findbugs-3.0.0-dev-20131204-e3cbbd5.tar.gz/

or from the author's resources page

- Set the environment variables:

vim /etc/profile

export FINDBUGS_HOME=/opt/softwares/findbugs-3.0.0

export PATH=$PATH:$FINDBUGS_HOME/bin

10. Make sure you have Internet access!

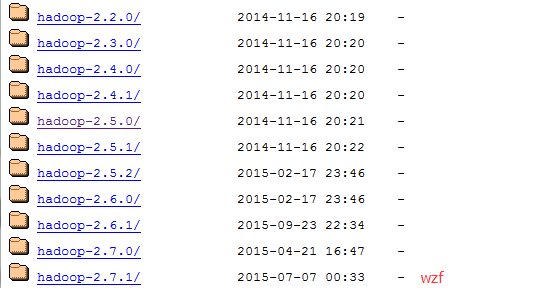
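Before moving on, the tools installed in the steps above can be checked in one pass. The sketch below is an illustration only: missing_tools is a hypothetical helper, and the command names (for example g++ for the gcc-c++ package) are assumptions about what each package puts on the PATH.

```shell
#!/bin/sh
# Print the names of the given commands that are NOT found on the PATH.
missing_tools() {
    for tool in "$@"; do
        command -v "$tool" >/dev/null 2>&1 || printf '%s\n' "$tool"
    done
}

# Tool list taken from the installation steps; adjust it to your own setup.
missing=$(missing_tools gcc g++ java mvn protoc cmake autoconf automake libtool ant make)
if [ -n "$missing" ]; then
    echo "Missing tools:" $missing
else
    echo "All listed tools were found."
fi
```

This only confirms that each command exists; it does not check versions, so the individual --version checks are still worth running.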

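Downloading the Hadoop source code

- For the Apache release of Hadoop, download from the official archive: http://archive.apache.org/dist/hadoop/common/

- For the CDH release of Hadoop, download from http://archive.cloudera.com/cdh5/cdh/5/

Some Hadoop versions have inherent defects and may need changes to certain configuration files, hadoop-2.2.0 for example.

For CDH5 versions, use the CDH 5 Maven Repository.

Unpack the downloaded Hadoop source and enter the unpacked directory. hadoop-2.5.0-cdh5.2.0 is used as the example here.

tar -zxvf hadoop-2.5.0-cdh5.2.0-src.tar.gz

Then edit the pom.xml file inside it:

cd hadoop-2.5.0-cdh5.2.0-src

vim pom.xml

Add the following: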

<repository>

<id>cloudera</id>

<url>https://repository.cloudera.com/artifactory/cloudera-repos/</url>

</repository>

Reference: Using the CDH 5 Maven Repository: http://www.cloudera.com/content/www/en-us/documentation/enterprise/latest/topics/cdh_vd_cdh5_maven_repo.html#concept_pjw_v3z_dr_unique_2
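After editing pom.xml, a quick check can confirm that the repository entry is actually present. This is a rough sketch under the assumption that the URL appears verbatim in the file; has_cloudera_repo is a hypothetical helper and does not validate the XML itself.

```shell
#!/bin/sh
# Hypothetical helper: succeed if the given POM file mentions the
# Cloudera repository URL (fixed-string match, no XML parsing).
has_cloudera_repo() {
    grep -qF 'repository.cloudera.com/artifactory/cloudera-repos' "$1"
}

# Run from the unpacked source directory, where pom.xml lives.
if [ -f pom.xml ]; then
    if has_cloudera_repo pom.xml; then
        echo "Cloudera repository is configured"
    else
        echo "Cloudera repository entry not found in pom.xml" >&2
    fi
fi
```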

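The most direct reference: the Hadoop build guide (BUILDING.txt)

The guide sits in the directory tree of the unpacked source; have you ever noticed it?

Allow the author, who only scraped through CET-6 English, to give a rough translation of it.

1. Requirements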

- Unix System

- JDK 1.6+

- Maven 3.0 or later

- Findbugs 1.3.9 (if running findbugs)

- ProtocolBuffer 2.5.0

- CMake 2.6 or newer (if compiling native code)

- Zlib devel (if compiling native code)

- openssl devel ( if compiling native hadoop-pipes )

- Internet connection for first build (to fetch all Maven and Hadoop dependencies)

2. Maven main modules

hadoop (Main Hadoop project)

- hadoop-project (Parent POM for all Hadoop Maven modules. All plugins & dependencies versions are defined here.)

- hadoop-project-dist (Parent POM for modules that generate distributions.)

- hadoop-annotations (Generates the Hadoop doclet used to generate the Javadocs)

- hadoop-assemblies (Maven assemblies used by the different modules)

- hadoop-common-project (Hadoop Common)

- hadoop-hdfs-project (Hadoop HDFS)

- hadoop-mapreduce-project (Hadoop MapReduce)

- hadoop-tools (Hadoop tools like Streaming, Distcp, etc.)

- hadoop-dist (Hadoop distribution assembler)

3. Where to run Maven from

Maven can be run from any module. The only catch is that, if it is not run from utrunk, all modules that are not part of the build run must be installed in the local Maven cache or be available in a Maven repository.

4. Maven build goals

- Clean : mvn clean

- Compile : mvn compile [-Pnative]

- Run tests : mvn test [-Pnative]

- Create JAR : mvn package

- Run findbugs : mvn compile findbugs:findbugs

- Run checkstyle : mvn compile checkstyle:checkstyle

- Install JAR in M2 cache : mvn install

- Deploy JAR to Maven repo : mvn deploy

- Run clover : mvn test -Pclover [-DcloverLicenseLocation=${user.name}/.clover.license]

- Run Rat : mvn apache-rat:check

- Build javadocs : mvn javadoc:javadoc

- Build distribution : mvn package [-Pdist][-Pdocs][-Psrc][-Pnative][-Dtar]

- Change Hadoop version : mvn versions:set -DnewVersion=NEWVERSION

Build options

- Use -Pnative to compile/bundle native code

- Use -Pdocs to generate & bundle the documentation in the distribution (using -Pdist)

- Use -Psrc to create a project source TAR.GZ

- Use -Dtar to create a TAR with the distribution (using -Pdist)
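The goals and options above combine on a single command line. As a minimal sketch, the hypothetical helper mvn_package_cmd below (not part of Hadoop or Maven) only prints the command for a comma-separated list of profiles, so you can inspect it before running it; adding -DskipTests and -Dtar is an assumption about a typical distribution build.

```shell
#!/bin/sh
# Hypothetical helper: print a `mvn package` command line for a
# comma-separated list of profiles, skipping tests and creating a TAR.
mvn_package_cmd() {
    printf 'mvn package -P%s -DskipTests -Dtar\n' "$1"
}

mvn_package_cmd dist              # prints: mvn package -Pdist -DskipTests -Dtar
mvn_package_cmd dist,native,docs  # prints: mvn package -Pdist,native,docs -DskipTests -Dtar
```

To run a printed command directly, wrap the call in eval, for example eval "$(mvn_package_cmd dist,native)".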

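Snappy build options

Snappy is a compression library that can be used by the native code. It is currently an optional component, meaning that Hadoop can be built with or without this dependency.

Use -Drequire.snappy to fail the build if libsnappy.so is not found. If this option is not specified and the snappy library is missing, a version of libhadoop.so that cannot make use of snappy is silently built. This option is recommended if you plan to use snappy and want more repeatable builds.

Use -Dsnappy.prefix to specify a nonstandard location for the libsnappy header files and library files. You do not need this option if you installed snappy using a package manager.

Use -Dsnappy.lib to specify a nonstandard location for the libsnappy library files. As with snappy.prefix, you do not need this option if you installed snappy using a package manager.

Use -Dbundle.snappy to copy the contents of the snappy.lib directory into the final tar file. This option requires that -Dsnappy.lib is also given, and it ignores the -Dsnappy.prefix option.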
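Because -Dbundle.snappy depends on -Dsnappy.lib, it can help to generate the Snappy flags together. The sketch below is illustrative only: snappy_flags is a hypothetical helper, and /usr/local/lib is an example location rather than a recommendation.

```shell
#!/bin/sh
# Hypothetical helper: print the Snappy-related -D flags for a library directory.
# -Dbundle.snappy needs -Dsnappy.lib, so the two are always emitted together.
snappy_flags() {
    printf '%s\n' "-Drequire.snappy -Dsnappy.lib=$1 -Dbundle.snappy"
}

# Print (not run) a full build command that bundles Snappy into the tar file.
echo "mvn package -Pdist,native -DskipTests -Dtar $(snappy_flags /usr/local/lib)"
```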

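OpenSSL build options

OpenSSL includes a crypto library that can be used by the native code. It is currently an optional component, meaning that Hadoop can be built with or without this dependency.

Use -Drequire.openssl to fail the build if libcrypto.so is not found. If this option is not specified and the openssl library is missing, a version of libhadoop.so that cannot make use of openssl is silently built. This option is recommended if you plan to use openssl and want more repeatable builds.

Use -Dopenssl.prefix to specify a nonstandard location for the libcrypto header files and library files. You do not need this option if you installed openssl using a package manager.

Use -Dopenssl.lib to specify a nonstandard location for the libcrypto library files. As with openssl.prefix, you do not need this option if you installed openssl using a package manager.

Use -Dbundle.openssl to copy the contents of the openssl.lib directory into the final tar file. This option requires that -Dopenssl.lib is also given, and it ignores the -Dopenssl.prefix option.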

Test options

- Use -DskipTests to skip tests when running the following Maven goals: 'package', 'install', 'deploy' or 'verify'

- -Dtest=<TESTCLASSNAME>,<TESTCLASSNAME#METHODNAME>,....

- -Dtest.exclude=<TESTCLASSNAME>

- -Dtest.exclude.pattern=**/<TESTCLASSNAME1>.java,**/<TESTCLASSNAME2>.java

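Building components separately

If you are building a submodule directory, all the Hadoop dependencies of that submodule are resolved like any other third-party dependency, that is, from the Maven cache or from a Maven repository (if not available in the cache or if the SNAPSHOT has 'timed out').

Protocol Buffer compiler

The version of the Protocol Buffer compiler, protoc, must match the version of the protobuf JAR.

If you have several versions of protoc on your system, you can set the HADOOP_PROTOC_CDH5_PATH environment variable in your build shell to point to the one you want to use for the Hadoop build. If you do not define this environment variable, protoc is looked up in the PATH.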
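The lookup order described above can be mimicked in the shell to see which protoc a build would pick up. This is a sketch of the described behaviour, not the code Hadoop itself uses; resolve_protoc is a hypothetical helper and /opt/protobuf-2.5.0/bin/protoc is a hypothetical install location.

```shell
#!/bin/sh
# Prefer HADOOP_PROTOC_CDH5_PATH when it is set and non-empty;
# otherwise fall back to whatever `protoc` the PATH provides.
resolve_protoc() {
    if [ -n "${HADOOP_PROTOC_CDH5_PATH:-}" ]; then
        printf '%s\n' "$HADOOP_PROTOC_CDH5_PATH"
    else
        command -v protoc || echo "protoc not found on PATH" >&2
    fi
}

# Point the build at one specific protoc (hypothetical path), then show the result.
HADOOP_PROTOC_CDH5_PATH=/opt/protobuf-2.5.0/bin/protoc
export HADOOP_PROTOC_CDH5_PATH
resolve_protoc   # prints: /opt/protobuf-2.5.0/bin/protoc
```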

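Importing the projects into Eclipse

Before importing the projects into Eclipse, first install hadoop-maven-plugins:

cd hadoop-maven-plugins

mvn install

Then generate the Eclipse project files:

mvn eclipse:eclipse -DskipTests

Finally, import into Eclipse by specifying the root directory of the project via

[File] > [Import] > [Existing Projects into Workspace].

Building distributions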

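- Create a binary distribution without native code and without documentation:

mvn package -Pdist -DskipTests -Dtar

- Create a binary distribution with native code and with documentation:

mvn package -Pdist,native,docs -DskipTests -Dtar

- Create a source distribution:

mvn package -Psrc -DskipTests

- Create source and binary distributions with native code and documentation:

mvn package -Pdist,native,docs,src -DskipTests -Dtar

- Create a local staging version of the website (in /tmp/hadoop-site):

mvn clean site; mvn site:stage -DstagingDirectory=/tmp/hadoop-site

Author's note: package is a lifecycle phase that packages the build. -D passes in a property and -P activates a profile; these two are the ones used most often. The name after -P is the id of a profile defined in your POM file.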

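Handling out of memory errors in builds

If the build fails with an out of memory error, you should be able to fix it by increasing the memory available to Maven, which is done through the MAVEN_OPTS environment variable. Here is an example:

export MAVEN_OPTS="-Xms256m -Xmx512m"

Appendix: original text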

Build instructions for Hadoop

----------------------------------------------------------------------------------

Requirements:

* Unix System

* JDK 1.6+

* Maven 3.0 or later

* Findbugs 1.3.9 (if running findbugs)

* ProtocolBuffer 2.5.0

* CMake 2.6 or newer (if compiling native code)

* Zlib devel (if compiling native code)

* openssl devel ( if compiling native hadoop-pipes )

* Internet connection for first build (to fetch all Maven and Hadoop dependencies)

----------------------------------------------------------------------------------

Maven main modules:

hadoop (Main Hadoop project)

- hadoop-project (Parent POM for all Hadoop Maven modules. )

(All plugins & dependencies versions are defined here.)

- hadoop-project-dist (Parent POM for modules that generate distributions.)

- hadoop-annotations (Generates the Hadoop doclet used to generated the Javadocs)

- hadoop-assemblies (Maven assemblies used by the different modules)

- hadoop-common-project (Hadoop Common)

- hadoop-hdfs-project (Hadoop HDFS)

- hadoop-mapreduce-project (Hadoop MapReduce)

- hadoop-tools (Hadoop tools like Streaming, Distcp, etc.)

- hadoop-dist (Hadoop distribution assembler)

----------------------------------------------------------------------------------

Where to run Maven from?

It can be run from any module. The only catch is that if not run from utrunk

all modules that are not part of the build run must be installed in the local

Maven cache or available in a Maven repository.

----------------------------------------------------------------------------------

Maven build goals:

* Clean : mvn clean

* Compile : mvn compile [-Pnative]

* Run tests : mvn test [-Pnative]

* Create JAR : mvn package

* Run findbugs : mvn compile findbugs:findbugs

* Run checkstyle : mvn compile checkstyle:checkstyle

* Install JAR in M2 cache : mvn install

* Deploy JAR to Maven repo : mvn deploy

* Run clover : mvn test -Pclover [-DcloverLicenseLocation=${user.name}/.clover.license]

* Run Rat : mvn apache-rat:check

* Build javadocs : mvn javadoc:javadoc

* Build distribution : mvn package [-Pdist][-Pdocs][-Psrc][-Pnative][-Dtar]

* Change Hadoop version : mvn versions:set -DnewVersion=NEWVERSION

Build options:

* Use -Pnative to compile/bundle native code

* Use -Pdocs to generate & bundle the documentation in the distribution (using -Pdist)

* Use -Psrc to create a project source TAR.GZ

* Use -Dtar to create a TAR with the distribution (using -Pdist)

Snappy build options:

Snappy is a compression library that can be utilized by the native code.

It is currently an optional component, meaning that Hadoop can be built with

or without this dependency.

* Use -Drequire.snappy to fail the build if libsnappy.so is not found.

If this option is not specified and the snappy library is missing,

we silently build a version of libhadoop.so that cannot make use of snappy.

This option is recommended if you plan on making use of snappy and want

to get more repeatable builds.

* Use -Dsnappy.prefix to specify a nonstandard location for the libsnappy

header files and library files. You do not need this option if you have

installed snappy using a package manager.

* Use -Dsnappy.lib to specify a nonstandard location for the libsnappy library

files. Similarly to snappy.prefix, you do not need this option if you have

installed snappy using a package manager.

* Use -Dbundle.snappy to copy the contents of the snappy.lib directory into

the final tar file. This option requires that -Dsnappy.lib is also given,

and it ignores the -Dsnappy.prefix option.

OpenSSL build options:

OpenSSL includes a crypto library that can be utilized by the native code.

It is currently an optional component, meaning that Hadoop can be built with

or without this dependency.

* Use -Drequire.openssl to fail the build if libcrypto.so is not found.

If this option is not specified and the openssl library is missing,

we silently build a version of libhadoop.so that cannot make use of

openssl. This option is recommended if you plan on making use of openssl

and want to get more repeatable builds.

* Use -Dopenssl.prefix to specify a nonstandard location for the libcrypto

header files and library files. You do not need this option if you have

installed openssl using a package manager.

* Use -Dopenssl.lib to specify a nonstandard location for the libcrypto library

files. Similarly to openssl.prefix, you do not need this option if you have

installed openssl using a package manager.

* Use -Dbundle.openssl to copy the contents of the openssl.lib directory into

the final tar file. This option requires that -Dopenssl.lib is also given,

and it ignores the -Dopenssl.prefix option.

Tests options:

* Use -DskipTests to skip tests when running the following Maven goals:

'package', 'install', 'deploy' or 'verify'

* -Dtest=<TESTCLASSNAME>,<TESTCLASSNAME#METHODNAME>,....

* -Dtest.exclude=<TESTCLASSNAME>

* -Dtest.exclude.pattern=**/<TESTCLASSNAME1>.java,**/<TESTCLASSNAME2>.java

----------------------------------------------------------------------------------

Building components separately

If you are building a submodule directory, all the hadoop dependencies this

submodule has will be resolved as all other 3rd party dependencies. This is,

from the Maven cache or from a Maven repository (if not available in the cache

or the SNAPSHOT 'timed out').

An alternative is to run 'mvn install -DskipTests' from Hadoop source top

level once; and then work from the submodule. Keep in mind that SNAPSHOTs

time out after a while, using the Maven '-nsu' will stop Maven from trying

to update SNAPSHOTs from external repos.

----------------------------------------------------------------------------------

Protocol Buffer compiler

The version of Protocol Buffer compiler, protoc, must match the version of the

protobuf JAR.

If you have multiple versions of protoc in your system, you can set in your

build shell the HADOOP_PROTOC_CDH5_PATH environment variable to point to the one you

want to use for the Hadoop build. If you don't define this environment variable,

protoc is looked up in the PATH.

----------------------------------------------------------------------------------

Importing projects to eclipse

When you import the project to eclipse, install hadoop-maven-plugins at first.

$ cd hadoop-maven-plugins

$ mvn install

Then, generate eclipse project files.

$ mvn eclipse:eclipse -DskipTests

At last, import to eclipse by specifying the root directory of the project via

[File] > [Import] > [Existing Projects into Workspace].

----------------------------------------------------------------------------------

Building distributions:

Create binary distribution without native code and without documentation:

$ mvn package -Pdist -DskipTests -Dtar

Create binary distribution with native code and with documentation:

$ mvn package -Pdist,native,docs -DskipTests -Dtar

Create source distribution:

$ mvn package -Psrc -DskipTests

Create source and binary distributions with native code and documentation:

$ mvn package -Pdist,native,docs,src -DskipTests -Dtar

Create a local staging version of the website (in /tmp/hadoop-site)

$ mvn clean site; mvn site:stage -DstagingDirectory=/tmp/hadoop-site

----------------------------------------------------------------------------------

Handling out of memory errors in builds

----------------------------------------------------------------------------------

If the build process fails with an out of memory error, you should be able to fix

it by increasing the memory used by maven -which can be done via the environment

variable MAVEN_OPTS.

Here is an example setting to allocate between 256 and 512 MB of heap space to

Maven

export MAVEN_OPTS="-Xms256m -Xmx512m"

----------------------------------------------------------------------------------

Building on OS/X

----------------------------------------------------------------------------------

A one-time manual step is required to enable building Hadoop OS X with Java 7

every time the JDK is updated.

see: https://issues.apache.org/jira/browse/HADOOP-9350

$ sudo mkdir `/usr/libexec/java_home`/Classes

$ sudo ln -s `/usr/libexec/java_home`/lib/tools.jar `/usr/libexec/java_home`/Classes/classes.jar

----------------------------------------------------------------------------------

Building on Windows

----------------------------------------------------------------------------------

Requirements:

* Windows System

* JDK 1.6+

* Maven 3.0 or later

* Findbugs 1.3.9 (if running findbugs)

* ProtocolBuffer 2.5.0

* CMake 2.6 or newer

* Windows SDK or Visual Studio 2010 Professional

* Unix command-line tools from GnuWin32 or Cygwin: sh, mkdir, rm, cp, tar, gzip

* zlib headers (if building native code bindings for zlib)

* Internet connection for first build (to fetch all Maven and Hadoop dependencies)

If using Visual Studio, it must be Visual Studio 2010 Professional (not 2012).

Do not use Visual Studio Express. It does not support compiling for 64-bit,

which is problematic if running a 64-bit system. The Windows SDK is free to

download here:

http://www.microsoft.com/en-us/download/details.aspx?id=8279

----------------------------------------------------------------------------------

Building:

Keep the source code tree in a short path to avoid running into problems related

to Windows maximum path length limitation. (For example, C:\hdc).

Run builds from a Windows SDK Command Prompt. (Start, All Programs,

Microsoft Windows SDK v7.1, Windows SDK 7.1 Command Prompt.)

JAVA_HOME must be set, and the path must not contain spaces. If the full path

would contain spaces, then use the Windows short path instead.

You must set the Platform environment variable to either x64 or Win32 depending

on whether you're running a 64-bit or 32-bit system. Note that this is

case-sensitive. It must be "Platform", not "PLATFORM" or "platform".

Environment variables on Windows are usually case-insensitive, but Maven treats

them as case-sensitive. Failure to set this environment variable correctly will

cause msbuild to fail while building the native code in hadoop-common.

set Platform=x64 (when building on a 64-bit system)

set Platform=Win32 (when building on a 32-bit system)

Several tests require that the user must have the Create Symbolic Links

privilege.

All Maven goals are the same as described above with the exception that

native code is built by enabling the 'native-win' Maven profile. -Pnative-win

is enabled by default when building on Windows since the native components

are required (not optional) on Windows.

If native code bindings for zlib are required, then the zlib headers must be

deployed on the build machine. Set the ZLIB_HOME environment variable to the

directory containing the headers.

set ZLIB_HOME=C:\zlib-1.2.7

At runtime, zlib1.dll must be accessible on the PATH. Hadoop has been tested

with zlib 1.2.7, built using Visual Studio 2010 out of contrib\vstudio\vc10 in

the zlib 1.2.7 source tree.

http://www.zlib.net/

----------------------------------------------------------------------------------

Building distributions:

* Build distribution with native code : mvn package [-Pdist][-Pdocs][-Psrc][-Dtar]