Python Computer Vision Programming (9)

- kNN visualization
- kNN
- Algorithm steps
- kNN visualization of 2D points
- Dense SIFT principle
- Gesture recognition

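kNN visualization

kNN

The k-nearest-neighbor (kNN, k-NearestNeighbor) classification algorithm is one of the simplest classification techniques in data mining. Its core idea is that if most of a sample's k nearest neighbors in feature space belong to a certain class, the sample is assigned to that class as well and shares the characteristics of the samples in it.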
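The classification rule above fits in a few lines. The following is a minimal brute-force sketch that assumes Euclidean distance and a plain majority vote. The function name `knn_classify` and the toy data are made up for illustration and are not PCV's API. Ties in the vote go to whichever label `Counter.most_common` lists first, which is an arbitrary choice.

```python
from collections import Counter

import numpy as np


def knn_classify(point, samples, labels, k=3):
    """Return the majority label among the k training samples closest to `point`."""
    dists = np.linalg.norm(samples - point, axis=1)  # Euclidean distance to every sample
    nearest = np.argsort(dists)[:k]                  # indices of the k closest samples
    votes = Counter(labels[i] for i in nearest)      # count the labels of those neighbors
    return votes.most_common(1)[0][0]                # most frequent label wins


# toy data: three points of class 1 near the origin, two of class -1 near (5, 5)
samples = np.array([[0.0, 0.0], [0.1, 0.2], [0.2, 0.1], [5.0, 5.0], [5.1, 4.9]])
labels = np.array([1, 1, 1, -1, -1])

print(knn_classify(np.array([0.3, 0.3]), samples, labels, k=3))  # → 1
print(knn_classify(np.array([4.8, 5.2]), samples, labels, k=3))  # → -1
```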

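Algorithm steps

1. Prepare the data and preprocess it.
2. Choose suitable data structures to store the training data and the test tuples.
3. Set the parameters, such as k.
4. Maintain a priority queue of size k, ordered by distance from largest to smallest, to hold the nearest-neighbor training tuples. Randomly pick k training tuples as the initial nearest neighbors, compute the distance from the test tuple to each of them, and store their labels and distances in the queue.
5. Traverse the training set. For each training tuple, compute its distance L to the test tuple and compare it with the largest distance Lmax in the queue. If L >= Lmax, discard the tuple and move on to the next one. If L < Lmax, remove the tuple with the largest distance from the queue and insert the current training tuple.
6. After the traversal, take the majority class of the k tuples in the queue as the class of the test tuple.
7. Once the whole test set has been classified, compute the error rate, repeat the procedure with different values of k, and keep the k with the lowest error rate.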

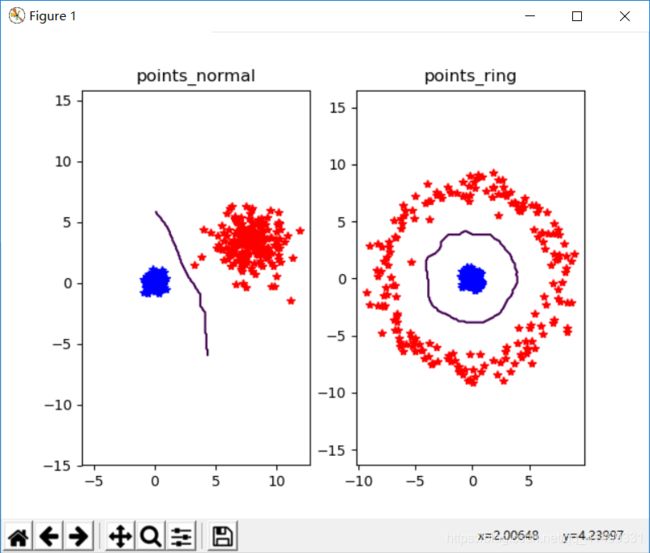
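Steps 4 to 7 can be sketched with Python's `heapq` module. Because `heapq` is a min-heap, the sketch stores negated distances so that the largest distance Lmax sits at `heap[0]`. The sketch makes two assumptions. First, the queue is filled with the first k training tuples rather than k random ones. This yields the same neighbors up to ties and avoids visiting the initial tuples twice. Second, the error rate in step 7 is measured on a small labeled test set. `knn_heap`, `choose_k` and the toy data are hypothetical.

```python
import heapq
from collections import Counter

import numpy as np


def knn_heap(test, train, train_labels, k):
    """Steps 4-6: keep the k nearest training tuples in a bounded max-priority queue."""
    heap = []  # entries (-L, index): heap[0] holds the tuple with the largest distance Lmax
    for i, x in enumerate(train):
        L = float(np.linalg.norm(x - test))
        if len(heap) < k:
            heapq.heappush(heap, (-L, i))      # fill the queue with the first k tuples
        elif L < -heap[0][0]:
            heapq.heapreplace(heap, (-L, i))   # L < Lmax: drop the farthest, keep this one
        # otherwise L >= Lmax: discard this tuple
    votes = Counter(train_labels[i] for _, i in heap)
    return votes.most_common(1)[0][0]          # step 6: majority class of the queue


def choose_k(train, train_labels, test, test_labels, ks):
    """Step 7: error rate for each k on the test set, and the k with the lowest one."""
    errors = {}
    for k in ks:
        pred = np.array([knn_heap(t, train, train_labels, k) for t in test])
        errors[k] = float(np.mean(pred != test_labels))
    best = min(errors, key=errors.get)         # first k with the lowest error rate
    return best, errors


# toy data: the class -1 point at (0.5, 0.6) is an outlier inside the class 1 cluster
train = np.array([[0, 0], [0, 1], [1, 0], [0.5, 0.6], [5, 5], [5, 6], [6, 5]], dtype=float)
train_labels = np.array([1, 1, 1, -1, -1, -1, -1])
test = np.array([[0.5, 0.5], [5.5, 5.5]])
test_labels = np.array([1, -1])

best, errors = choose_k(train, train_labels, test, test_labels, [1, 3, 5])
print(best, errors)
```

In this toy set the outlier is the single nearest neighbor of (0.5, 0.5), so k = 1 misclassifies it, while k = 3 and k = 5 outvote it.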

kNN visualization of 2D points

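```python
# -*- coding: utf-8 -*-
# Generate two 2D point sets (normal and ring), each with a training and a test file
from __future__ import print_function
import pickle
from numpy import array, hstack, ones, pi, cos, sin
from numpy.random import randn

n = 200

def save_points(filename, class_1, class_2, labels):
    # pickle writes bytes, so the file must be opened in binary mode
    with open(filename, 'wb') as f:
        pickle.dump(class_1, f)
        pickle.dump(class_2, f)
        pickle.dump(labels, f)
    print("save OK!")

def normal_points():
    # class 1: tight cluster at the origin; class 2: wider cluster centred at (8, 3)
    class_1 = 0.4 * randn(n, 2)
    class_2 = 1.5 * randn(n, 2) + array([8, 3])
    return class_1, class_2

def ring_points():
    # class 1: cluster at the origin; class 2: ring of radius about 8 around it
    class_1 = 0.4 * randn(n, 2)
    r = 0.8 * randn(n, 1) + 8
    angle = 3 * pi * randn(n, 1)
    class_2 = hstack((r * cos(angle), r * sin(angle)))
    return class_1, class_2

labels = hstack((ones(n), -ones(n)))  # +1 for class 1, -1 for class 2

# draw fresh samples for every file so that the test data differ from the training data
for name, make_points in [('points_normal', normal_points), ('points_ring', ring_points)]:
    for suffix in ['.pkl', '_test.pkl']:
        class_1, class_2 = make_points()
        save_points(name + suffix, class_1, class_2, labels)
```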

Visualization

```python
# -*- coding: utf-8 -*-
from __future__ import print_function
import pickle
from pylab import *
from PCV.classifiers import knn
from PCV.tools import imtools

pklist = ['points_normal.pkl', 'points_ring.pkl']

figure()
for i, pklfile in enumerate(pklist):
    # load the training data and build the kNN model from it
    with open(pklfile, 'rb') as f:
        class_1 = pickle.load(f)
        class_2 = pickle.load(f)
        labels = pickle.load(f)
    model = knn.KnnClassifier(labels, vstack((class_1, class_2)))

    # load the test data that will be classified and plotted
    with open(pklfile[:-4] + '_test.pkl', 'rb') as f:
        class_1 = pickle.load(f)
        class_2 = pickle.load(f)
        labels = pickle.load(f)

    # classify the first test point of class 1
    print(model.classify(class_1[0]))

    # decision function for plot_2D_boundary: classify each (x, y) pair
    def classify(x, y, model=model):
        return array([model.classify([xx, yy]) for (xx, yy) in zip(x, y)])

    # plot the decision boundary and the test points in their own subplot
    subplot(1, 2, i + 1)
    imtools.plot_2D_boundary([-6, 6, -6, 6], [class_1, class_2], classify, [1, -1])
    title(pklfile[:-4])

show()
```

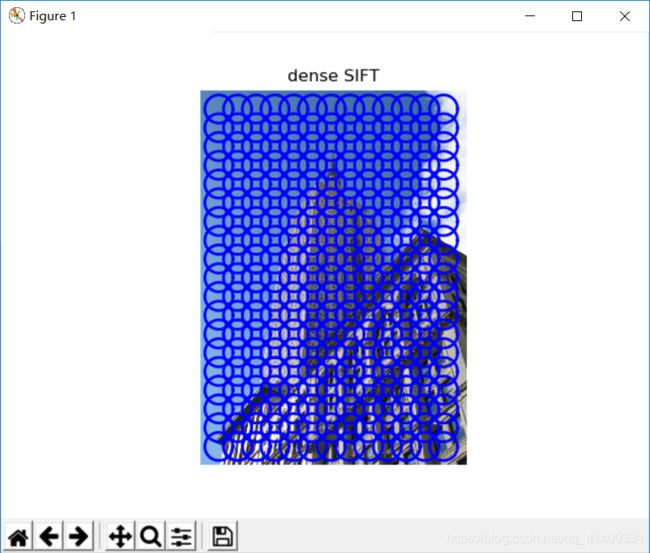
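`imtools.plot_2D_boundary` receives the `classify(x, y)` function defined in the script above and draws the boundary between the two labels. The sketch below illustrates the grid-evaluation idea behind such a plot. It is not PCV's source. The 0.1 default grid step, the circular stand-in classifier, and the names `decision_grid` and `circle_classify` are all assumptions.

```python
import numpy as np


def decision_grid(classify, plot_range, step=0.1):
    """Evaluate classify(x, y) on a regular grid over plot_range = [xmin, xmax, ymin, ymax]."""
    xs = np.arange(plot_range[0], plot_range[1], step)
    ys = np.arange(plot_range[2], plot_range[3], step)
    xx, yy = np.meshgrid(xs, ys)                       # grid coordinates, shape (len(ys), len(xs))
    zz = np.asarray(classify(xx.ravel(), yy.ravel()))  # one predicted label per grid point
    return xx, yy, zz.reshape(xx.shape)


# hypothetical stand-in classifier: label 1 inside a circle of radius 3, -1 outside
def circle_classify(x, y):
    return np.where(x ** 2 + y ** 2 < 9, 1, -1)


xx, yy, zz = decision_grid(circle_classify, [-6, 6, -6, 6], step=0.5)
print(zz.shape)  # → (24, 24)
```

If matplotlib is available, `contour(xx, yy, zz, [0])` would then trace the line between the +1 and -1 regions.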

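Dense SIFT principle

Dense SIFT computes SIFT descriptors at every point of a regular grid, with a fixed descriptor size and step, instead of only at detected interest points. One application is an object-tracking algorithm based on dense SIFT features. The algorithm first divides the rectangular region that represents the target into blocks of equal size and computes a SIFT feature for each block. It then samples the dense SIFT feature of each block at the block's center to model the target's appearance. To measure how dissimilar two image regions are, it computes the Bhattacharyya distance between corresponding blocks of the two regions and takes a weighted sum of these distances as the distance between the regions. The part of the target region near its border may be affected by background pixels, while the interior is more consistent, so the weighting function grows larger toward the center of the region. Finally, the authors propose a tracking algorithm that adapts to changes in target scale. Their experiments show that the algorithm performs well.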
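The weighted region distance described above can be sketched in NumPy. The paragraph does not give the exact formulas, so this sketch rests on three assumptions:

- Each block descriptor is normalized to sum to 1 and treated as a distribution, so it must not be all zeros.
- The Bhattacharyya distance takes the form `sqrt(1 - sum_i sqrt(p_i * q_i))`. This is one common form; another is `-ln(sum_i sqrt(p_i * q_i))`.
- The center weighting is a Gaussian over block positions.

The function names and `sigma` are hypothetical.

```python
import numpy as np


def bhattacharyya(p, q):
    """Distance sqrt(1 - sum_i sqrt(p_i * q_i)) between two non-negative, non-zero descriptors."""
    p = p / p.sum()                            # normalize so each descriptor sums to 1
    q = q / q.sum()
    bc = np.sum(np.sqrt(p * q))                # Bhattacharyya coefficient, 1 for identical inputs
    return float(np.sqrt(max(0.0, 1.0 - bc)))  # clamp tiny negative rounding errors


def center_weights(rows, cols, sigma=1.0):
    """Gaussian weights over a rows x cols grid of blocks, largest at the center, summing to 1."""
    r = np.arange(rows) - (rows - 1) / 2.0
    c = np.arange(cols) - (cols - 1) / 2.0
    rr, cc = np.meshgrid(r, c, indexing='ij')
    w = np.exp(-(rr ** 2 + cc ** 2) / (2.0 * sigma ** 2))
    return w / w.sum()


def region_distance(blocks_a, blocks_b, sigma=1.0):
    """Weighted sum of per-block distances; blocks_* have shape (rows, cols, descriptor_dim)."""
    rows, cols, _ = blocks_a.shape
    w = center_weights(rows, cols, sigma)
    d = np.array([[bhattacharyya(blocks_a[i, j], blocks_b[i, j]) for j in range(cols)]
                  for i in range(rows)])
    return float(np.sum(w * d))


# two 3 x 3 regions that differ in a single block, either at the center or in a corner
a = np.ones((3, 3, 2))
b_center = a.copy()
b_center[1, 1] = [1.0, 0.0]
b_corner = a.copy()
b_corner[0, 0] = [1.0, 0.0]
print(region_distance(a, b_center) > region_distance(a, b_corner))  # → True
```

The same change in appearance costs more when it happens at the center than at the border, which matches the reasoning in the paragraph above.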

Dense SIFT for gestures

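```python
# -*- coding: utf-8 -*-
from PCV.localdescriptors import sift, dsift
from pylab import *
from PIL import Image

# dense SIFT on a regular grid: size=90, steps=40, force_orientation=True
dsift.process_image_dsift('gesture/empire.jpg', 'empire.dsift', 90, 40, True)

# read back the descriptor locations l and descriptors d, then draw them as circles
l, d = sift.read_features_from_file('empire.dsift')
im = array(Image.open('gesture/empire.jpg'))
sift.plot_features(im, l, True)
title('dense SIFT')
show()
```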

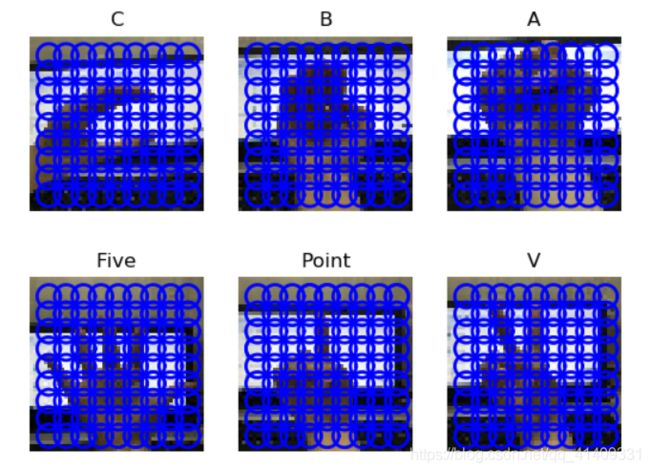
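Dense SIFT places its descriptors on a regular grid. Later in this post every gesture image is resized to 50×50 and processed with a step of 5, so the grid fixes how long each flattened feature vector is. The sketch below computes grid centers under one assumed layout: the first point lies one step in from the corner, and no point lies on the far border. PCV's exact offsets may differ, so treat the counts as illustrative. `dense_grid` is a hypothetical helper.

```python
import numpy as np


def dense_grid(width, height, step):
    """Keypoint centers every `step` pixels, starting one step in from the top-left corner."""
    xs = np.arange(step, width, step)
    ys = np.arange(step, height, step)
    xx, yy = np.meshgrid(xs, ys)
    return np.column_stack((xx.ravel(), yy.ravel()))  # one (x, y) row per keypoint


pts = dense_grid(50, 50, 5)
print(len(pts))  # → 81
```

Under this layout a 50×50 image with step 5 gives 81 keypoints. Each SIFT descriptor has 128 values, so flattening them produces a 10368-dimensional vector per image. Images of different sizes would give vectors of different lengths, which is why the images are resized first.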

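Gesture recognition

We represent the gesture images with dense SIFT descriptors and build a simple gesture recognition system. A classifier object is created from the training data and its labels. We then loop over the test set, classify each image, and count how many images are classified correctly.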
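Counting correct classifications is the same as summing the diagonal of a confusion matrix. The minimal sketch below uses the same convention as `print_confusion` in the script that follows: rows are predicted classes and columns are true classes. The function name, class names and label lists are made up.

```python
import numpy as np


def accuracy_and_confusion(pred, true, classnames):
    """Return accuracy and a confusion matrix (rows: predicted class, columns: true class)."""
    index = {c: i for i, c in enumerate(classnames)}
    confuse = np.zeros((len(classnames), len(classnames)), dtype=int)
    for p, t in zip(pred, true):
        confuse[index[p], index[t]] += 1
    acc = np.trace(confuse) / float(len(true))  # correct predictions lie on the diagonal
    return acc, confuse


classnames = ['A', 'B', 'C']                    # hypothetical gesture class names
true = ['A', 'A', 'B', 'C', 'C']
pred = ['A', 'B', 'B', 'C', 'A']
acc, confuse = accuracy_and_confusion(pred, true, classnames)
print(acc)  # → 0.6
print(confuse)
```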

Gesture recognition results on the book's image set

Using gesture photos I took myself, with one image drawn from each class for testing

```python
# -*- coding: utf-8 -*-
from __future__ import print_function
import os
from pylab import *
from PCV.localdescriptors import sift, dsift
from PCV.classifiers import knn

def get_imagelist(path):
    """Return the paths of all .ppm images in a directory."""
    return [os.path.join(path, f) for f in os.listdir(path) if f.endswith('.ppm')]

def read_gesture_features_labels(path):
    """Read every .dsift file in path; the label is the first letter of the file name."""
    featlist = [os.path.join(path, f) for f in os.listdir(path) if f.endswith('.dsift')]
    features = []
    for featfile in featlist:
        l, d = sift.read_features_from_file(featfile)
        features.append(d.flatten())  # concatenate all descriptors into one vector
    features = array(features)
    labels = [os.path.basename(featfile)[0] for featfile in featlist]
    return features, array(labels)

def print_confusion(res, labels, classnames):
    """Print a confusion matrix: rows are predicted classes, columns are true classes."""
    n = len(classnames)
    class_ind = dict([(classnames[i], i) for i in range(n)])
    confuse = zeros((n, n))
    for i in range(len(labels)):
        confuse[class_ind[res[i]], class_ind[labels[i]]] += 1
    print('Confusion matrix for')
    print(classnames)
    print(confuse)

# compute dense SIFT for every training and test image (size 10, step 5);
# resizing everything to 50x50 gives all feature vectors the same length
filelist_train = get_imagelist('gesture/train')
filelist_test = get_imagelist('gesture/test')
imlist = filelist_train + filelist_test
for filename in imlist:
    featfile = os.path.splitext(filename)[0] + '.dsift'
    dsift.process_image_dsift(filename, featfile, 10, 5, resize=(50, 50))

features, labels = read_gesture_features_labels('gesture/train/')
test_features, test_labels = read_gesture_features_labels('gesture/test/')
classnames = unique(labels)

# test kNN with k = 1
k = 1
knn_classifier = knn.KnnClassifier(labels, features)
res = array([knn_classifier.classify(test_features[i], k)
             for i in range(len(test_labels))])

# accuracy: fraction of test images classified correctly
acc = sum(1.0 * (res == test_labels)) / len(test_labels)
print('Accuracy:', acc)
print_confusion(res, test_labels, classnames)
```