【莫凡Python】TensorFlow Basic Architecture

1 Processing Structure

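- TensorFlow:

You first define the structure of the neural network (a data flow graph), then feed data, which takes the form of tensors, into that structure for computation and training. In other words, tensors keep flowing from one node of the graph to the next.

- Tensor:

  - Rank-0 (zeroth-order) tensor = scalar = a single value, e.g. 1

  - Rank-1 (one-dimensional) tensor = vector, e.g. [1, 2, 3]

  - Rank-2 (two-dimensional) tensor = matrix, e.g. [[1, 2, 3], [4, 5, 6], [7, 8, 9]]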
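As a quick check of the three ranks listed above, the sketch below uses NumPy arrays as a stand-in for tensors. This is an assumption made for illustration only; the variable names are invented for this example.

```python
import numpy as np

scalar = np.array(1)                 # rank 0: a single value, shape ()
vector = np.array([1, 2, 3])         # rank 1: shape (3,)
matrix = np.array([[1, 2, 3],
                   [4, 5, 6],
                   [7, 8, 9]])       # rank 2: shape (3, 3)
one_element = np.array([1])          # note: [1] is rank 1, not a scalar

for name, t in [("scalar", scalar), ("vector", vector),
                ("matrix", matrix), ("one_element", one_element)]:
    print(name, "rank =", t.ndim, "shape =", t.shape)
```

The rank is simply the number of indices needed to address one element, which is why the bracketed value [1] counts as a vector of length one.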

2 Example 2

Goal: fit the line y = 0.1x + 0.3 by gradient descent and print w and b every 20 training steps.

    import numpy as np
    # TF 1.x graph-mode API; under TF 2.x it is reached through compat.v1
    import tensorflow.compat.v1 as tf

    tf.disable_v2_behavior()

    # create data
    x_data = np.random.rand(100).astype(np.float32)
    y_data = x_data * 0.1 + 0.3

    # create model
    Weights = tf.Variable(tf.random_uniform([1], -1.0, 1.0))
    biases = tf.Variable(tf.zeros([1]))
    y = Weights * x_data + biases

    # calculate loss
    loss = tf.reduce_mean(tf.square(y - y_data))
    optimizer = tf.train.GradientDescentOptimizer(0.5)  # learning rate 0.5
    train = optimizer.minimize(loss)

    # use model
    init = tf.global_variables_initializer()  # initializes the Variables defined above
    sess = tf.Session()  # create a session and use it to run the init step
    sess.run(init)

    # train
    for step in range(200):
        sess.run(train)
        if step % 20 == 0:
            print(step, sess.run(Weights), sess.run(biases))
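To make the training step less of a black box, here is a minimal NumPy sketch of the same fit with the gradients written out by hand. It assumes plain gradient descent on the mean squared error, which is what the example above configures; the fixed seed and the names `w`, `b`, `grad_w`, `grad_b` are choices made for this illustration only.

```python
import numpy as np

rng = np.random.default_rng(0)       # fixed seed, chosen only for reproducibility
x_data = rng.random(100)
y_data = x_data * 0.1 + 0.3

w = rng.uniform(-1.0, 1.0)           # same initial range as Weights above
b = 0.0                              # same initial value as biases above
learning_rate = 0.5

for step in range(200):
    error = w * x_data + b - y_data          # prediction minus target
    grad_w = 2.0 * np.mean(error * x_data)   # d(loss)/dw for loss = mean(error**2)
    grad_b = 2.0 * np.mean(error)            # d(loss)/db
    w -= learning_rate * grad_w
    b -= learning_rate * grad_b
    if step % 20 == 0:
        print(step, w, b)
```

After 200 steps `w` and `b` should sit close to 0.1 and 0.3, mirroring what the TensorFlow version prints.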

3 Session Control

What this example does: load TensorFlow, build two matrices, and output the result of multiplying them.

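    # TF 1.x graph-mode API; under TF 2.x it is reached through compat.v1
    import tensorflow.compat.v1 as tf

    tf.disable_v2_behavior()

    # create two matrices
    matrix1 = tf.constant([[3, 3]])        # shape (1, 2)
    matrix2 = tf.constant([[2], [2]])      # shape (2, 1)
    product = tf.matmul(matrix1, matrix2)  # matrix multiplication

    # open the Session as a context manager so it is closed automatically
    with tf.Session() as sess:
        result = sess.run(product)
        print(result)  # [[12]]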
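The reason `product` holds no numbers until the session runs it can be illustrated with a toy graph. The classes `Constant` and `MatMul` and the function `run` below are hypothetical, written only for this sketch; they are not TensorFlow's implementation.

```python
import numpy as np

class Constant:
    """Graph node that holds a fixed value."""
    def __init__(self, value):
        self.value = np.array(value)

    def evaluate(self):
        return self.value

class MatMul:
    """Graph node that records its inputs; nothing is computed at construction."""
    def __init__(self, left, right):
        self.left, self.right = left, right

    def evaluate(self):
        return self.left.evaluate() @ self.right.evaluate()

def run(node):
    """Toy counterpart of sess.run: evaluate a node on demand."""
    return node.evaluate()

matrix1 = Constant([[3, 3]])
matrix2 = Constant([[2], [2]])
product = MatMul(matrix1, matrix2)   # only describes the computation

result = run(product)                # the multiplication happens here
print(result)
```

Building the graph and executing it are separate steps, which is the role the Session plays in the example above.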

4 Variable

In TensorFlow, a variable must be declared explicitly with tf.Variable.

    import tensorflow.compat.v1 as tf

    tf.disable_v2_behavior()

    v1 = tf.Variable(0, name='age')  # define a variable with value 0 and name "age"
    c1 = tf.constant(1)              # define a constant
    v2 = tf.add(v1, c1)
    update = tf.assign(v1, v2)       # op that writes v2 back into v1

    # once a Variable is defined, it must be initialized
    init = tf.global_variables_initializer()

    # launch with a Session
    with tf.Session() as sess:
        sess.run(init)
        for _ in range(3):
            sess.run(update)
            print(sess.run(v1))

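5 Placeholder: Feeding Values

A placeholder reserves a slot in the TensorFlow graph for a value that is supplied later; it holds no data of its own.

To pass data in from outside, declare the input with tf.placeholder() and then supply the data in this form: sess.run(**, feed_dict={input: **}).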

    import tensorflow.compat.v1 as tf

    tf.disable_v2_behavior()

    # define two "bowls" (placeholders) to be filled at run time
    input1 = tf.placeholder(tf.float32)
    input2 = tf.placeholder(tf.float32)
    output = tf.multiply(input1, input2)  # element-wise product

    with tf.Session() as sess:
        print(sess.run(output, feed_dict={input1: [3.], input2: [8.]}))  # [24.]
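Because the graph is separate from the data, the same `output` op can be run with different feeds. The sketch below assumes the same compat.v1 setup as the example above; the `shape=[None]` argument and the names `first` and `second` are additions for illustration.

```python
import tensorflow.compat.v1 as tf

tf.disable_v2_behavior()

# shape=[None] leaves the length open, so the graph accepts any 1-D input
input1 = tf.placeholder(tf.float32, shape=[None])
input2 = tf.placeholder(tf.float32, shape=[None])
output = tf.multiply(input1, input2)

with tf.Session() as sess:
    # the graph is built once and fed twice with different data
    first = sess.run(output, feed_dict={input1: [3.], input2: [8.]})
    second = sess.run(output, feed_dict={input1: [1., 2., 3.],
                                         input2: [4., 5., 6.]})

print(first)
print(second)
```

Each `sess.run` call returns a NumPy array, so the results can be used outside the session once it has closed.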

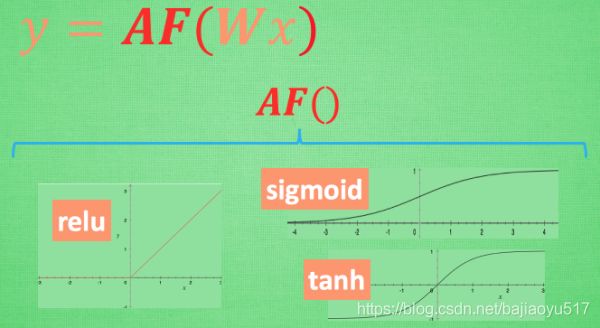

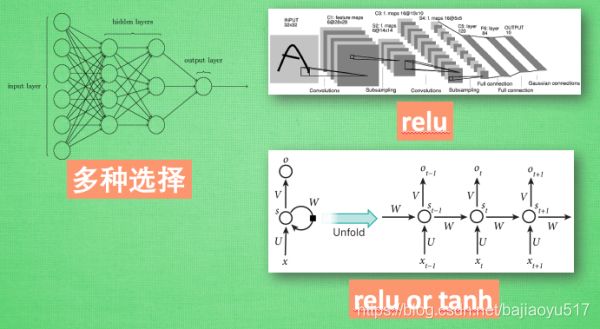

6 What Is an Activation Function

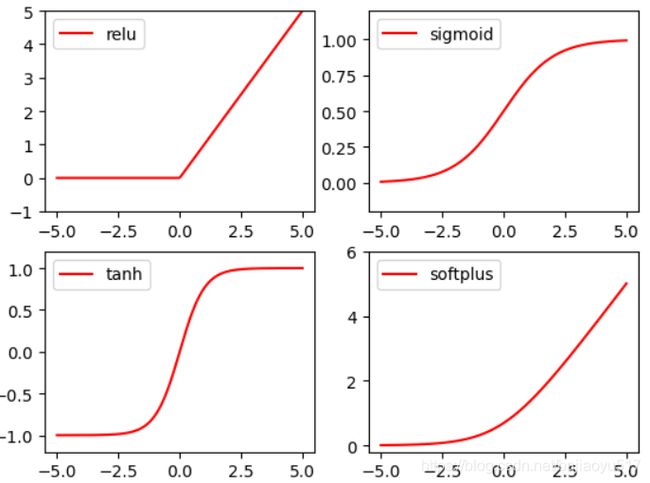

7 Activation Functions

    import numpy as np
    import matplotlib.pyplot as plt
    # TF 1.x graph-mode API; under TF 2.x it is reached through compat.v1
    import tensorflow.compat.v1 as tf

    tf.disable_v2_behavior()

    # fake data
    x = np.linspace(-5, 5, 200)  # x data, shape=(200,)

    # following are popular activation functions
    y_relu = tf.nn.relu(x)
    y_sigmoid = tf.nn.sigmoid(x)
    y_tanh = tf.nn.tanh(x)
    y_softplus = tf.nn.softplus(x)
    # y_softmax = tf.nn.softmax(x)  softmax is a special kind of activation function; it outputs probabilities

    sess = tf.Session()
    y_relu, y_sigmoid, y_tanh, y_softplus = sess.run([y_relu, y_sigmoid, y_tanh, y_softplus])

    # plot each activation function in its own panel
    plt.figure(1, figsize=(8, 6))

    plt.subplot(221)
    plt.plot(x, y_relu, c='red', label='relu')
    plt.ylim((-1, 5))
    plt.legend(loc='best')

    plt.subplot(222)
    plt.plot(x, y_sigmoid, c='red', label='sigmoid')
    plt.ylim((-0.2, 1.2))
    plt.legend(loc='best')

    plt.subplot(223)
    plt.plot(x, y_tanh, c='red', label='tanh')
    plt.ylim((-1.2, 1.2))
    plt.legend(loc='best')

    plt.subplot(224)
    plt.plot(x, y_softplus, c='red', label='softplus')
    plt.ylim((-0.2, 6))
    plt.legend(loc='best')

    plt.show()
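For reference, the functions plotted above have short closed forms. The NumPy sketch below writes them out using the standard textbook definitions; the helper names are invented for this example and it is not meant to reproduce TensorFlow's internals.

```python
import numpy as np

def relu(x):
    return np.maximum(0.0, x)            # max(0, x)

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))      # squashes values into (0, 1)

def softplus(x):
    return np.log1p(np.exp(x))           # log(1 + e^x), a smooth version of relu

def softmax(x):
    shifted = np.exp(x - np.max(x))      # subtract the max for numerical stability
    return shifted / np.sum(shifted)     # non-negative entries that sum to 1

x = np.linspace(-5, 5, 200)
y_relu, y_sigmoid, y_tanh, y_softplus = relu(x), sigmoid(x), np.tanh(x), softplus(x)

probs = softmax(np.array([1.0, 2.0, 3.0]))
print(probs, probs.sum())
```

Softmax differs from the other four in that it acts on a whole vector at once and returns a probability distribution, which is why the plotting code leaves it out.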