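Understanding SVM (with Code)

Reference: https://blog.csdn.net/weixin_39605679/article/details/81170300

A support vector machine (SVM) is a binary classification model. Its basic form is the maximum-margin linear classifier defined on the feature space. SVMs also support the kernel trick, which lets them act as nonlinear classifiers. The learning strategy of an SVM is margin maximization.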

How the algorithm works

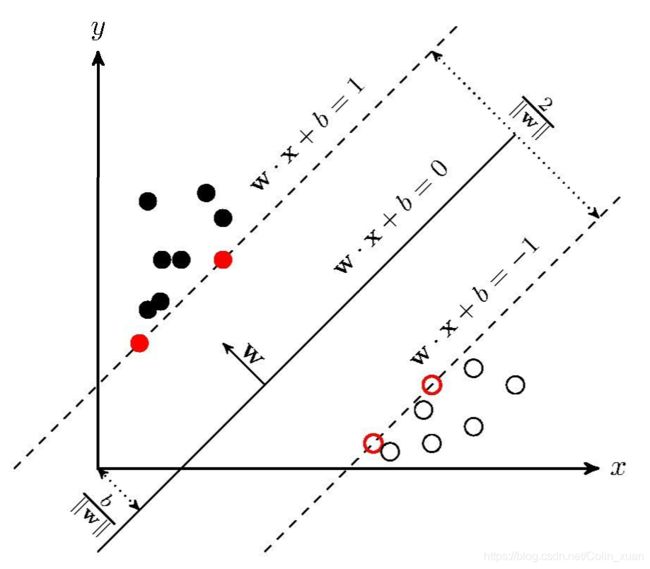

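The basic idea of SVM learning is to find the separating hyperplane that classifies the training set correctly and has the largest geometric margin.

For linearly separable data there are infinitely many separating hyperplanes (w·x + b = 0), but the one with the largest geometric margin is unique.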

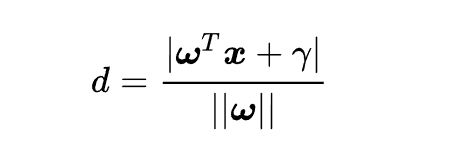

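Geometric margin: for a given data set and hyperplane, the geometric margin of the hyperplane with respect to a sample point $(x_i, y_i)$ is defined as

$$\gamma_i = y_i\left(\frac{w}{\|w\|}\cdot x_i + \frac{b}{\|w\|}\right)$$

This comes from the distance between a point $(x_0, y_0)$ and a line $Ax + By + C = 0$:

$$d = \frac{|Ax_0 + By_0 + C|}{\sqrt{A^2 + B^2}}$$

Extended to a hyperplane, the distance is:

$$d = \frac{|w^T x + b|}{\|w\|}$$

This d is the classification margin.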
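As a minimal sketch of the distance computation, the C++ helpers below evaluate |w·x + b| / ||w|| for dense vectors. The names `l2_norm` and `distance_to_hyperplane` are illustrative and do not appear in the original code; the sketch assumes w and x have the same length and that w is not the zero vector.

```cpp
#include <cmath>
#include <cstddef>
#include <vector>

// Euclidean (L2) norm: square root of the sum of squared components.
double l2_norm(const std::vector<double>& w) {
    double sum = 0.0;
    for (double wi : w) sum += wi * wi;
    return std::sqrt(sum);
}

// Distance from point x to the hyperplane w.x + b = 0.
// Assumption: w and x have the same dimension and w is nonzero.
double distance_to_hyperplane(const std::vector<double>& w, double b,
                              const std::vector<double>& x) {
    double dot = 0.0;
    for (std::size_t i = 0; i < w.size(); ++i) dot += w[i] * x[i];
    return std::fabs(dot + b) / l2_norm(w);
}
```

For example, with w = (3, 4), b = -5 and x = (3, 4), the numerator is |25 - 5| = 20 and the norm is 5, so the distance is 4.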

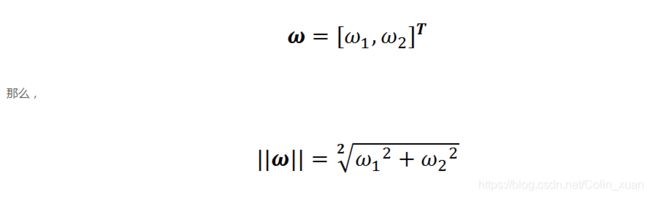

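||w|| denotes the 2-norm of w: the square root of the sum of the squares of all its elements. In the two-dimensional plane, for example:

$$\|w\| = \sqrt{w_1^2 + w_2^2}$$

A classifier is judged by the width of its margin, W = 2d (here W is the margin width, not the weight vector w). The larger W is, the better we consider the hyperplane to separate the classes, so the task becomes a maximization problem:

$$\max_{w,\,b}\; W = 2d$$

Constraints:

We now have the mathematical form of the objective. To actually maximize W, however, we still have to answer two questions: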

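1. How do we tell whether the hyperplane classifies every sample point correctly?

2. Maximizing the distance d first requires the points that lie on the support vectors. How do we pick those points out of all the samples?

These two questions are the constraints: they restrict the values that d, the quantity we are optimizing, is allowed to take. SVM uses a neat trick to fold both constraints into a single inequality.

That inequality is derived below.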

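1. The first constraint: correct classification

Every sample point has a class label. If the hyperplane classifies all the sample points in the figure above correctly, it satisfies:

$$\begin{cases} w^T x_i + b > 0, & y_i = 1 \\ w^T x_i + b < 0, & y_i = -1 \end{cases}$$

In other words, all positive samples lie above the hyperplane and all negative samples lie below it.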

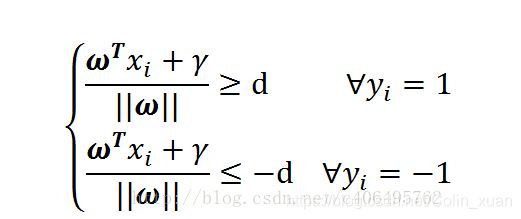

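If we ask for more, namely that the hyperplane lies on the center line of the margin and that the sample points on the support vectors are at distance d from the decision surface, the condition tightens to:

$$\begin{cases} \dfrac{w^T x_i + b}{\|w\|} \ge d, & y_i = 1 \\ \dfrac{w^T x_i + b}{\|w\|} \le -d, & y_i = -1 \end{cases}$$

Dividing both sides by d:

$$\begin{cases} w_d^T x_i + b_d \ge 1, & y_i = 1 \\ w_d^T x_i + b_d \le -1, & y_i = -1 \end{cases} \qquad w_d = \frac{w}{\|w\|\,d},\quad b_d = \frac{b}{\|w\|\,d}$$

Renaming $w_d$ and $b_d$ back to $w$ and $b$ gives:

$$\begin{cases} w^T x_i + b \ge 1, & y_i = 1 \\ w^T x_i + b \le -1, & y_i = -1 \end{cases}$$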

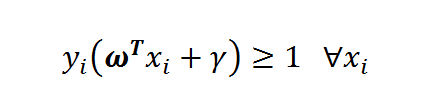

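Combining the two cases into a single expression gives:

$$y_i\,(w^T x_i + b) \ge 1, \qquad i = 1, \dots, n$$

Choosing the labels +1 and -1 earlier is what makes it possible to merge the two inequalities into this single form.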
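To make the merged constraint concrete, here is a small C++ sketch that checks whether a candidate (w, b) satisfies y_i (w·x_i + b) ≥ 1 for every sample. The `Sample` struct and the function name `satisfies_margin_constraints` are hypothetical helpers introduced only for this illustration; labels are assumed to be +1 or -1.

```cpp
#include <cstddef>
#include <vector>

// One training sample: feature vector x and label y (assumed to be +1 or -1).
struct Sample {
    std::vector<double> x;
    int y;
};

// Returns true when every sample satisfies y_i * (w . x_i + b) >= 1.
// Assumption: w has the same dimension as every x.
bool satisfies_margin_constraints(const std::vector<Sample>& data,
                                  const std::vector<double>& w, double b) {
    for (const Sample& s : data) {
        double score = b;
        for (std::size_t i = 0; i < w.size(); ++i) score += w[i] * s.x[i];
        if (s.y * score < 1.0) return false;  // constraint violated
    }
    return true;
}
```

With the two samples (2, 0) labelled +1 and (-2, 0) labelled -1, the pair w = (0.5, 0), b = 0 meets the constraint with equality, while w = (0.25, 0), b = 0 separates the points correctly but violates the margin requirement.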

Solution:

1. Objective: maximize d

Every sample point on the support vectors satisfies:

$$|w^T x_i + b| = 1$$

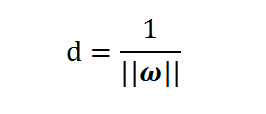

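The objective can therefore be simplified further. The line through the support vectors is parallel to the decision line, so the distance between two parallel lines gives:

$$d = \frac{|w^T x_i + b|}{\|w\|} = \frac{1}{\|w\|}$$

Maximizing d is equivalent to minimizing $\frac{1}{2}\|w\|^2$.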

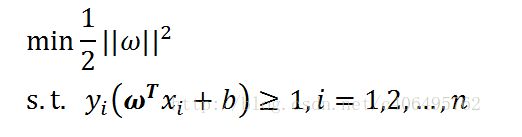

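Putting the objective and the constraint together:

$$\min_{w,\,b}\ \frac{1}{2}\|w\|^2 \qquad \text{s.t.}\quad y_i\,(w^T x_i + b) \ge 1,\quad i = 1, \dots, n$$

Here s.t. ("subject to") means the minimization has to obey the listed condition. This is the basic mathematical model of the support vector machine.

Solving: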

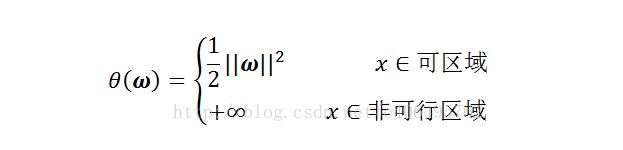

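Note: for a non-convex function we generally cannot guarantee finding the global optimum, only a local one, so we first make sure the objective is a convex function (the form $\frac{1}{2}\|w\|^2$ is convex).

Checking whether a function is convex: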

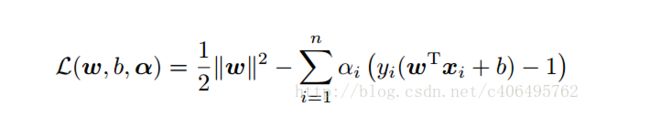

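This reduces our problem to a convex optimization problem. The tool for solving it is the Lagrangian function.

Two steps are needed:

1. Convert the constrained original objective into a newly constructed, unconstrained Lagrangian objective.

To that end, we construct the following function: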

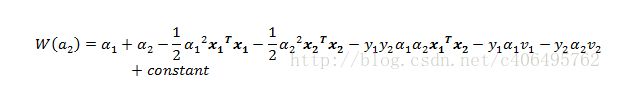

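Next, we apply the KKT conditions and Lagrangian duality, and finally use the SMO algorithm to solve for the Lagrange multipliers.

The final objective becomes:

$$\max_{\alpha}\ \sum_{i=1}^{n}\alpha_i - \frac{1}{2}\sum_{i=1}^{n}\sum_{j=1}^{n}\alpha_i\alpha_j y_i y_j\, x_i^T x_j \qquad \text{s.t.}\quad \sum_{i=1}^{n}\alpha_i y_i = 0,\quad \alpha_i \ge 0$$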

Wrapper class: include this header in your main program and you can train an SVM and predict with it.

#include <iostream>

#include <list>

#include <string>

#include <vector>

#include"svm.h"

using namespace std;

class ClassificationSVM

{

public:

void train(const std::string& modelFileName);

double predict(const vector<double>& vec, const std::string& modelFileName, vector<double>& prob);

void setParam();

void AddTrainData(const vector<double>& vec, int dim, double label);

svm_parameter param;

svm_problem prob; // all the data for training

std::list<svm_node*> dataList; // feature arrays of all the samples

std::list<double> typeList; // labels of all the samples

int sampleNum = 0; // number of samples added so far

svm_model* svm_load = NULL; // cached model, loaded lazily in predict()

//bool* judgeRight;

};

void ClassificationSVM::setParam()

{

param.svm_type = C_SVC;

param.kernel_type = RBF;

param.degree = 3;

param.gamma = 2.8;

param.coef0 = 0;

param.nu = 0.5;

// the following parameters are used only during training

param.cache_size = 400;

param.C = 50; // penalty factor

param.eps = 1e-4;

param.p = 0.1;

param.shrinking = 1;

param.nr_weight = 0;

param.weight = NULL;

param.weight_label = NULL;

param.probability = 1;

}

void ClassificationSVM::AddTrainData(const vector<double>& vec, int dim, double label) // copy one feature vector into an svm_node array

{

svm_node* features = new svm_node[dim + 1];

for (int k = 0; k < dim; k++)

{

features[k].index = k + 1; // feature index, starting from 1

features[k].value = vec[k]; // feature value

}

features[dim].index = -1; // end marker

double type = label; // class label

dataList.push_back(features);

typeList.push_back(type);

sampleNum++; // increment the sample count

}

void ClassificationSVM::train(const string& modelFileName)

{

cout << sampleNum << endl;

prob.l = sampleNum;//number of training samples

prob.x = new svm_node *[prob.l];//features of all the training samples

prob.y = new double[prob.l]; // label of each sample

int index = 0;

while (!dataList.empty())

{

prob.x[index] = dataList.front();

prob.y[index] = typeList.front();

dataList.pop_front();

typeList.pop_front();

index++;

}

cout << "start training" << endl;

setParam();

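svm_model *svmModel = svm_train(&prob, &param);

cout << "save model" << endl;

svm_save_model(modelFileName.c_str(), svmModel);

cout << "done!" << endl;

svm_free_and_destroy_model(&svmModel); // the model is on disk now; release it

for (int i = 0; i < prob.l; i++)

delete[] prob.x[i]; // feature arrays allocated in AddTrainData

delete[] prob.x;

delete[] prob.y;

sampleNum = 0; // the sample lists were emptied above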

}

double ClassificationSVM::predict(const vector<double>& vec, const string& modelFileName, vector<double>& prob)

{

double probability[2] = {0, 0}; // per-class probabilities; assumes a two-class model

if (svm_load == NULL)

svm_load = svm_load_model(modelFileName.c_str());

int dim = static_cast<int>(vec.size());

svm_node* input = new svm_node[dim + 1];

for (int k = 0; k < dim; k++)

{

input[k].index = k + 1; // feature index, starting from 1

input[k].value = vec[k]; // feature value

}

input[dim].index = -1; // end marker

double predictValue;

if (svm_check_probability_model(svm_load) == 1)

predictValue = svm_predict_probability(svm_load, input, probability);

else

predictValue = svm_predict(svm_load, input); // no probability info in the model; probabilities stay 0

prob.push_back(probability[0]);

prob.push_back(probability[1]);

delete[] input;

return predictValue;

}
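The wrapper above stores each sample as an array of (index, value) pairs terminated by index -1, and `setParam` selects the RBF kernel with `gamma = 2.8`. The sketch below shows both ideas without depending on svm.h: `Node` is a stand-in for LIBSVM's `svm_node`, and `to_sparse` and `rbf_kernel` are illustrative names, not LIBSVM functions. The kernel computed is K(x, y) = exp(-gamma * ||x - y||^2), treating indices missing from one vector as zeros.

```cpp
#include <cmath>
#include <cstddef>
#include <vector>

// Stand-in for LIBSVM's svm_node so the sketch compiles without svm.h.
struct Node {
    int index;     // 1-based feature index, -1 marks the end of the array
    double value;  // feature value
};

// Convert a dense feature vector into a -1 terminated node array.
std::vector<Node> to_sparse(const std::vector<double>& dense) {
    std::vector<Node> nodes;
    for (std::size_t k = 0; k < dense.size(); ++k)
        nodes.push_back({static_cast<int>(k) + 1, dense[k]});
    nodes.push_back({-1, 0.0});  // end marker
    return nodes;
}

// RBF kernel exp(-gamma * ||x - y||^2) on two index-sorted node arrays.
double rbf_kernel(const Node* x, const Node* y, double gamma) {
    double sum = 0.0;
    while (x->index != -1 && y->index != -1) {
        if (x->index == y->index) {
            double d = x->value - y->value;
            sum += d * d;
            ++x;
            ++y;
        } else if (x->index > y->index) {
            sum += y->value * y->value;  // feature present only in y
            ++y;
        } else {
            sum += x->value * x->value;  // feature present only in x
            ++x;
        }
    }
    for (; x->index != -1; ++x) sum += x->value * x->value;
    for (; y->index != -1; ++y) sum += y->value * y->value;
    return std::exp(-gamma * sum);
}
```

A larger gamma makes the kernel value fall off faster with distance, so each support vector influences a smaller neighbourhood; identical inputs always give a kernel value of 1.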

svm.h and svm.cpp (the LIBSVM sources; svm.cpp is listed below):

#include "stdafx.h"

#include <math.h>

#include <stdio.h>

#include <stdlib.h>

#include <ctype.h>

#include <float.h>

#include <string.h>

#include <stdarg.h>

#include <limits.h>

#include <locale.h>

#include "svm.h"

int libsvm_version = LIBSVM_VERSION;

typedef float Qfloat;

typedef signed char schar;

#ifndef min

template <class T> static inline T min(T x,T y) { return (x<y)?x:y; }

#endif

#ifndef max

template <class T> static inline T max(T x,T y) { return (x>y)?x:y; }

#endif

template <class T> static inline void swap(T& x, T& y) { T t=x; x=y; y=t; }

template <class S, class T> static inline void clone(T*& dst, S* src, int n)

{

dst = new T[n];

memcpy((void *)dst,(void *)src,sizeof(T)*n);

}

static inline double powi(double base, int times)

{

double tmp = base, ret = 1.0;

for(int t=times; t>0; t/=2)

{

if(t%2==1) ret*=tmp;

tmp = tmp * tmp;

}

return ret;

}

#define INF HUGE_VAL

#define TAU 1e-12

#define Malloc(type,n) (type *)malloc((n)*sizeof(type))

static void print_string_stdout(const char *s)

{

fputs(s,stdout);

fflush(stdout);

}

static void (*svm_print_string) (const char *) = &print_string_stdout;

#if 1

static void info(const char *fmt,...)

{

char buf[BUFSIZ];

va_list ap;

va_start(ap,fmt);

vsprintf(buf,fmt,ap);

va_end(ap);

(*svm_print_string)(buf);

}

#else

static void info(const char *fmt,...) {}

#endif

//

// Kernel Cache

//

// l is the number of total data items

// size is the cache size limit in bytes

//

class Cache

{

public:

Cache(int l,long int size);

~Cache();

// request data [0,len)

// return some position p where [p,len) need to be filled

// (p >= len if nothing needs to be filled)

int get_data(const int index, Qfloat **data, int len);

void swap_index(int i, int j);

private:

int l;

long int size;

struct head_t

{

head_t *prev, *next; // a circular list

Qfloat *data;

int len; // data[0,len) is cached in this entry

};

head_t *head;

head_t lru_head;

void lru_delete(head_t *h);

void lru_insert(head_t *h);

};

Cache::Cache(int l_,long int size_):l(l_),size(size_)

{

head = (head_t *)calloc(l,sizeof(head_t)); // initialized to 0

size /= sizeof(Qfloat);

size -= l * sizeof(head_t) / sizeof(Qfloat);

size = max(size, 2 * (long int) l); // cache must be large enough for two columns

lru_head.next = lru_head.prev = &lru_head;

}

Cache::~Cache()

{

for(head_t *h = lru_head.next; h != &lru_head; h=h->next)

free(h->data);

free(head);

}

void Cache::lru_delete(head_t *h)

{

// delete from current location

h->prev->next = h->next;

h->next->prev = h->prev;

}

void Cache::lru_insert(head_t *h)

{

// insert to last position

h->next = &lru_head;

h->prev = lru_head.prev;

h->prev->next = h;

h->next->prev = h;

}

int Cache::get_data(const int index, Qfloat **data, int len)

{

head_t *h = &head[index];

if(h->len) lru_delete(h);

int more = len - h->len;

if(more > 0)

{

// free old space

while(size < more)

{

head_t *old = lru_head.next;

lru_delete(old);

free(old->data);

size += old->len;

old->data = 0;

old->len = 0;

}

// allocate new space

h->data = (Qfloat *)realloc(h->data,sizeof(Qfloat)*len);

size -= more;

swap(h->len,len);

}

lru_insert(h);

*data = h->data;

return len;

}

void Cache::swap_index(int i, int j)

{

if(i==j) return;

if(head[i].len) lru_delete(&head[i]);

if(head[j].len) lru_delete(&head[j]);

swap(head[i].data,head[j].data);

swap(head[i].len,head[j].len);

if(head[i].len) lru_insert(&head[i]);

if(head[j].len) lru_insert(&head[j]);

if(i>j) swap(i,j);

for(head_t *h = lru_head.next; h!=&lru_head; h=h->next)

{

if(h->len > i)

{

if(h->len > j)

swap(h->data[i],h->data[j]);

else

{

// give up

lru_delete(h);

free(h->data);

size += h->len;

h->data = 0;

h->len = 0;

}

}

}

}

//

// Kernel evaluation

//

// the static method k_function is for doing single kernel evaluation

// the constructor of Kernel prepares to calculate the l*l kernel matrix

// the member function get_Q is for getting one column from the Q Matrix

//

class QMatrix {

public:

virtual Qfloat *get_Q(int column, int len) const = 0;

virtual double *get_QD() const = 0;

virtual void swap_index(int i, int j) const = 0;

virtual ~QMatrix() {}

};

class Kernel: public QMatrix {

public:

Kernel(int l, svm_node * const * x, const svm_parameter& param);

virtual ~Kernel();

static double k_function(const svm_node *x, const svm_node *y,

const svm_parameter& param);

virtual Qfloat *get_Q(int column, int len) const = 0;

virtual double *get_QD() const = 0;

virtual void swap_index(int i, int j) const // no so const...

{

swap(x[i],x[j]);

if(x_square) swap(x_square[i],x_square[j]);

}

protected:

double (Kernel::*kernel_function)(int i, int j) const;

private:

const svm_node **x;

double *x_square;

// svm_parameter

const int kernel_type;

const int degree;

const double gamma;

const double coef0;

static double dot(const svm_node *px, const svm_node *py);

double kernel_linear(int i, int j) const

{

return dot(x[i],x[j]);

}

double kernel_poly(int i, int j) const

{

return powi(gamma*dot(x[i],x[j])+coef0,degree);

}

double kernel_rbf(int i, int j) const

{

return exp(-gamma*(x_square[i]+x_square[j]-2*dot(x[i],x[j])));

}

double kernel_sigmoid(int i, int j) const

{

return tanh(gamma*dot(x[i],x[j])+coef0);

}

double kernel_precomputed(int i, int j) const

{

return x[i][(int)(x[j][0].value)].value;

}

};

Kernel::Kernel(int l, svm_node * const * x_, const svm_parameter& param)

:kernel_type(param.kernel_type), degree(param.degree),

gamma(param.gamma), coef0(param.coef0)

{

switch(kernel_type)

{

case LINEAR:

kernel_function = &Kernel::kernel_linear;

break;

case POLY:

kernel_function = &Kernel::kernel_poly;

break;

case RBF:

kernel_function = &Kernel::kernel_rbf;

break;

case SIGMOID:

kernel_function = &Kernel::kernel_sigmoid;

break;

case PRECOMPUTED:

kernel_function = &Kernel::kernel_precomputed;

break;

}

clone(x,x_,l);

if(kernel_type == RBF)

{

x_square = new double[l];

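for(int i=0;i<l;i++)

x_square[i] = dot(x[i],x[i]);

}

else

x_square = 0;

}

Kernel::~Kernel()

{

delete[] x;

delete[] x_square;

}

double Kernel::dot(const svm_node *px, const svm_node *py)

{

double sum = 0;

while(px->index != -1 && py->index != -1)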

{

if(px->index == py->index)

{

sum += px->value * py->value;

++px;

++py;

}

else

{

if(px->index > py->index)

++py;

else

++px;

}

}

return sum;

}

double Kernel::k_function(const svm_node *x, const svm_node *y,

const svm_parameter& param)

{

switch(param.kernel_type)

{

case LINEAR:

return dot(x,y);

case POLY:

return powi(param.gamma*dot(x,y)+param.coef0,param.degree);

case RBF:

{

double sum = 0;

while(x->index != -1 && y->index !=-1)

{

if(x->index == y->index)

{

double d = x->value - y->value;

sum += d*d;

++x;

++y;

}

else

{

if(x->index > y->index)

{

sum += y->value * y->value;

++y;

}

else

{

sum += x->value * x->value;

++x;

}

}

}

while(x->index != -1)

{

sum += x->value * x->value;

++x;

}

while(y->index != -1)

{

sum += y->value * y->value;

++y;

}

return exp(-param.gamma*sum);

}

case SIGMOID:

return tanh(param.gamma*dot(x,y)+param.coef0);

case PRECOMPUTED: //x: test (validation), y: SV

return x[(int)(y->value)].value;

default:

return 0; // Unreachable

}

}

// An SMO algorithm in Fan et al., JMLR 6(2005), p. 1889--1918

// Solves:

//

// min 0.5(\alpha^T Q \alpha) + p^T \alpha

//

// y^T \alpha = \delta

// y_i = +1 or -1

// 0 <= alpha_i <= Cp for y_i = 1

// 0 <= alpha_i <= Cn for y_i = -1

//

// Given:

//

// Q, p, y, Cp, Cn, and an initial feasible point \alpha

// l is the size of vectors and matrices

// eps is the stopping tolerance

//

// solution will be put in \alpha, objective value will be put in obj

//

class Solver {

public:

Solver() {};

virtual ~Solver() {};

struct SolutionInfo {

double obj;

double rho;

double upper_bound_p;

double upper_bound_n;

double r; // for Solver_NU

};

void Solve(int l, const QMatrix& Q, const double *p_, const schar *y_,

double *alpha_, double Cp, double Cn, double eps,

SolutionInfo* si, int shrinking);

protected:

int active_size;

schar *y;

double *G; // gradient of objective function

enum { LOWER_BOUND, UPPER_BOUND, FREE };

char *alpha_status; // LOWER_BOUND, UPPER_BOUND, FREE

double *alpha;

const QMatrix *Q;

const double *QD;

double eps;

double Cp,Cn;

double *p;

int *active_set;

double *G_bar; // gradient, if we treat free variables as 0

int l;

bool unshrink; // XXX

double get_C(int i)

{

return (y[i] > 0)? Cp : Cn;

}

void update_alpha_status(int i)

{

if(alpha[i] >= get_C(i))

alpha_status[i] = UPPER_BOUND;

else if(alpha[i] <= 0)

alpha_status[i] = LOWER_BOUND;

else alpha_status[i] = FREE;

}

bool is_upper_bound(int i) { return alpha_status[i] == UPPER_BOUND; }

bool is_lower_bound(int i) { return alpha_status[i] == LOWER_BOUND; }

bool is_free(int i) { return alpha_status[i] == FREE; }

void swap_index(int i, int j);

void reconstruct_gradient();

virtual int select_working_set(int &i, int &j);

virtual double calculate_rho();

virtual void do_shrinking();

private:

bool be_shrunk(int i, double Gmax1, double Gmax2);

};

void Solver::swap_index(int i, int j)

{

Q->swap_index(i,j);

swap(y[i],y[j]);

swap(G[i],G[j]);

swap(alpha_status[i],alpha_status[j]);

swap(alpha[i],alpha[j]);

swap(p[i],p[j]);

swap(active_set[i],active_set[j]);

swap(G_bar[i],G_bar[j]);

}

void Solver::reconstruct_gradient()

{

// reconstruct inactive elements of G from G_bar and free variables

if(active_size == l) return;

int i,j;

int nr_free = 0;

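for(j=active_size;j<l;j++)

G[j] = G_bar[j] + p[j];

for(j=0;j<active_size;j++)

if(is_free(j))

nr_free++;

if(2*nr_free < active_size)

info("\nWARNING: using -h 0 may be faster\n");

if (nr_free*l > 2*active_size*(l-active_size))

{

for(i=active_size;i<l;i++)

{

const Qfloat *Q_i = Q->get_Q(i,active_size);

for(j=0;j<active_size;j++)

if(is_free(j))

G[i] += alpha[j] * Q_i[j];

}

}

else

{

for(i=0;i<active_size;i++)

if(is_free(i))

{

const Qfloat *Q_i = Q->get_Q(i,l);

double alpha_i = alpha[i];

for(j=active_size;j<l;j++)

G[j] += alpha_i * Q_i[j];

}

}

}

void Solver::Solve(int l, const QMatrix& Q, const double *p_, const schar *y_,

double *alpha_, double Cp, double Cn, double eps,

SolutionInfo* si, int shrinking)

{

this->l = l;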

this->Q = &Q;

QD=Q.get_QD();

clone(p, p_,l);

clone(y, y_,l);

clone(alpha,alpha_,l);

this->Cp = Cp;

this->Cn = Cn;

this->eps = eps;

unshrink = false;

// initialize alpha_status

{

alpha_status = new char[l];

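for(int i=0;i<l;i++)

update_alpha_status(i);

}

// initialize active set (for shrinking)

{

active_set = new int[l];

for(int i=0;i<l;i++)

active_set[i] = i;

active_size = l;

}

// initialize gradient

{

G = new double[l];

G_bar = new double[l];

int i;

for(i=0;i<l;i++)

{

G[i] = p[i];

G_bar[i] = 0;

}

for(i=0;i<l;i++)

if(!is_lower_bound(i))

{

const Qfloat *Q_i = Q.get_Q(i,l);

double alpha_i = alpha[i];

int j;

for(j=0;j<l;j++)

G[j] += alpha_i*Q_i[j];

if(is_upper_bound(i))

for(j=0;j<l;j++)

G_bar[j] += get_C(i) * Q_i[j];

}

}

// optimization step

int iter = 0;

int max_iter = max(10000000, l>INT_MAX/100 ? INT_MAX : 100*l);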

int counter = min(l,1000)+1;

while(iter < max_iter)

{

// show progress and do shrinking

if(--counter == 0)

{

counter = min(l,1000);

if(shrinking) do_shrinking();

info(".");

}

int i,j;

if(select_working_set(i,j)!=0)

{

// reconstruct the whole gradient

reconstruct_gradient();

// reset active set size and check

active_size = l;

info("*");

if(select_working_set(i,j)!=0)

break;

else

counter = 1; // do shrinking next iteration

}

++iter;

// update alpha[i] and alpha[j], handle bounds carefully

const Qfloat *Q_i = Q.get_Q(i,active_size);

const Qfloat *Q_j = Q.get_Q(j,active_size);

double C_i = get_C(i);

double C_j = get_C(j);

double old_alpha_i = alpha[i];

double old_alpha_j = alpha[j];

if(y[i]!=y[j])

{

double quad_coef = QD[i]+QD[j]+2*Q_i[j];

if (quad_coef <= 0)

quad_coef = TAU;

double delta = (-G[i]-G[j])/quad_coef;

double diff = alpha[i] - alpha[j];

alpha[i] += delta;

alpha[j] += delta;

if(diff > 0)

{

if(alpha[j] < 0)

{

alpha[j] = 0;

alpha[i] = diff;

}

}

else

{

if(alpha[i] < 0)

{

alpha[i] = 0;

alpha[j] = -diff;

}

}

if(diff > C_i - C_j)

{

if(alpha[i] > C_i)

{

alpha[i] = C_i;

alpha[j] = C_i - diff;

}

}

else

{

if(alpha[j] > C_j)

{

alpha[j] = C_j;

alpha[i] = C_j + diff;

}

}

}

else

{

double quad_coef = QD[i]+QD[j]-2*Q_i[j];

if (quad_coef <= 0)

quad_coef = TAU;

double delta = (G[i]-G[j])/quad_coef;

double sum = alpha[i] + alpha[j];

alpha[i] -= delta;

alpha[j] += delta;

if(sum > C_i)

{

if(alpha[i] > C_i)

{

alpha[i] = C_i;

alpha[j] = sum - C_i;

}

}

else

{

if(alpha[j] < 0)

{

alpha[j] = 0;

alpha[i] = sum;

}

}

if(sum > C_j)

{

if(alpha[j] > C_j)

{

alpha[j] = C_j;

alpha[i] = sum - C_j;

}

}

else

{

if(alpha[i] < 0)

{

alpha[i] = 0;

alpha[j] = sum;

}

}

}

// update G

double delta_alpha_i = alpha[i] - old_alpha_i;

double delta_alpha_j = alpha[j] - old_alpha_j;

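for(int k=0;k<active_size;k++)

{

G[k] += Q_i[k]*delta_alpha_i + Q_j[k]*delta_alpha_j;

}

// update alpha_status and G_bar

{

bool ui = is_upper_bound(i);

bool uj = is_upper_bound(j);

update_alpha_status(i);

update_alpha_status(j);

int k;

if(ui != is_upper_bound(i))

{

Q_i = Q.get_Q(i,l);

if(ui)

for(k=0;k<l;k++)

G_bar[k] -= C_i * Q_i[k];

else

for(k=0;k<l;k++)

G_bar[k] += C_i * Q_i[k];

}

if(uj != is_upper_bound(j))

{

Q_j = Q.get_Q(j,l);

if(uj)

for(k=0;k<l;k++)

G_bar[k] -= C_j * Q_j[k];

else

for(k=0;k<l;k++)

G_bar[k] += C_j * Q_j[k];

}

}

}

if(iter >= max_iter)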

{

if(active_size < l)

{

// reconstruct the whole gradient to calculate objective value

reconstruct_gradient();

active_size = l;

info("*");

}

fprintf(stderr,"\nWARNING: reaching max number of iterations\n");

}

// calculate rho

si->rho = calculate_rho();

// calculate objective value

{

double v = 0;

int i;

for(i=0;i<l;i++)

v += alpha[i] * (G[i] + p[i]);

si->obj = v/2;

}

// put back the solution

{

for(int i=0;i<l;i++)

alpha_[active_set[i]] = alpha[i];

}

si->upper_bound_p = Cp;

si->upper_bound_n = Cn;

info("\noptimization finished, #iter = %d\n",iter);

delete[] p;

delete[] y;

delete[] alpha;

delete[] alpha_status;

delete[] active_set;

delete[] G;

delete[] G_bar;

}

// return 1 if already optimal, return 0 otherwise

int Solver::select_working_set(int &out_i, int &out_j)

{

// return i,j such that

// i: maximizes -y_i * grad(f)_i, i in I_up(\alpha)

// j: minimizes the decrease of obj value

// (if quadratic coefficeint <= 0, replace it with tau)

// -y_j*grad(f)_j < -y_i*grad(f)_i, j in I_low(\alpha)

double Gmax = -INF;

double Gmax2 = -INF;

int Gmax_idx = -1;

int Gmin_idx = -1;

double obj_diff_min = INF;

for(int t=0;t<active_size;t++)

if(y[t]==+1)

{

if(!is_upper_bound(t))

if(-G[t] >= Gmax)

{

Gmax = -G[t];

Gmax_idx = t;

}

}

else

{

if(!is_lower_bound(t))

if(G[t] >= Gmax)

{

Gmax = G[t];

Gmax_idx = t;

}

}

int i = Gmax_idx;

const Qfloat *Q_i = NULL;

if(i != -1) // NULL Q_i not accessed: Gmax=-INF if i=-1

Q_i = Q->get_Q(i,active_size);

for(int j=0;j<active_size;j++)

{

if(y[j]==+1)

{

if (!is_lower_bound(j))

{

double grad_diff=Gmax+G[j];

if (G[j] >= Gmax2)

Gmax2 = G[j];

if (grad_diff > 0)

{

double obj_diff;

double quad_coef = QD[i]+QD[j]-2.0*y[i]*Q_i[j];

if (quad_coef > 0)

obj_diff = -(grad_diff*grad_diff)/quad_coef;

else

obj_diff = -(grad_diff*grad_diff)/TAU;

if (obj_diff <= obj_diff_min)

{

Gmin_idx=j;

obj_diff_min = obj_diff;

}

}

}

}

else

{

if (!is_upper_bound(j))

{

double grad_diff= Gmax-G[j];

if (-G[j] >= Gmax2)

Gmax2 = -G[j];

if (grad_diff > 0)

{

double obj_diff;

double quad_coef = QD[i]+QD[j]+2.0*y[i]*Q_i[j];

if (quad_coef > 0)

obj_diff = -(grad_diff*grad_diff)/quad_coef;

else

obj_diff = -(grad_diff*grad_diff)/TAU;

if (obj_diff <= obj_diff_min)

{

Gmin_idx=j;

obj_diff_min = obj_diff;

}

}

}

}

}

if(Gmax+Gmax2 < eps || Gmin_idx == -1)

return 1;

out_i = Gmax_idx;

out_j = Gmin_idx;

return 0;

}

bool Solver::be_shrunk(int i, double Gmax1, double Gmax2)

{

if(is_upper_bound(i))

{

if(y[i]==+1)

return(-G[i] > Gmax1);

else

return(-G[i] > Gmax2);

}

else if(is_lower_bound(i))

{

if(y[i]==+1)

return(G[i] > Gmax2);

else

return(G[i] > Gmax1);

}

else

return(false);

}

void Solver::do_shrinking()

{

int i;

double Gmax1 = -INF; // max { -y_i * grad(f)_i | i in I_up(\alpha) }

double Gmax2 = -INF; // max { y_i * grad(f)_i | i in I_low(\alpha) }

// find maximal violating pair first

for(i=0;i<active_size;i++)

{

if(y[i]==+1)

{

if(!is_upper_bound(i))

{

if(-G[i] >= Gmax1)

Gmax1 = -G[i];

}

if(!is_lower_bound(i))

{

if(G[i] >= Gmax2)

Gmax2 = G[i];

}

}

else

{

if(!is_upper_bound(i))

{

if(-G[i] >= Gmax2)

Gmax2 = -G[i];

}

if(!is_lower_bound(i))

{

if(G[i] >= Gmax1)

Gmax1 = G[i];

}

}

}

if(unshrink == false && Gmax1 + Gmax2 <= eps*10)

{

unshrink = true;

reconstruct_gradient();

active_size = l;

info("*");

}

for(i=0;i<active_size;i++)

if (be_shrunk(i, Gmax1, Gmax2))

{

active_size--;

while (active_size > i)

{

if (!be_shrunk(active_size, Gmax1, Gmax2))

{

swap_index(i,active_size);

break;

}

active_size--;

}

}

}

double Solver::calculate_rho()

{

double r;

int nr_free = 0;

double ub = INF, lb = -INF, sum_free = 0;

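for(int i=0;i<active_size;i++)

{

double yG = y[i]*G[i];

if(is_upper_bound(i))

{

if(y[i]==-1)

ub = min(ub,yG);

else

lb = max(lb,yG);

}

else if(is_lower_bound(i))

{

if(y[i]==+1)

ub = min(ub,yG);

else

lb = max(lb,yG);

}

else

{

++nr_free;

sum_free += yG;

}

}

if(nr_free>0)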

r = sum_free/nr_free;

else

r = (ub+lb)/2;

return r;

}

//

// Solver for nu-svm classification and regression

//

// additional constraint: e^T \alpha = constant

//

class Solver_NU: public Solver

{

public:

Solver_NU() {}

void Solve(int l, const QMatrix& Q, const double *p, const schar *y,

double *alpha, double Cp, double Cn, double eps,

SolutionInfo* si, int shrinking)

{

this->si = si;

Solver::Solve(l,Q,p,y,alpha,Cp,Cn,eps,si,shrinking);

}

private:

SolutionInfo *si;

int select_working_set(int &i, int &j);

double calculate_rho();

bool be_shrunk(int i, double Gmax1, double Gmax2, double Gmax3, double Gmax4);

void do_shrinking();

};

// return 1 if already optimal, return 0 otherwise

int Solver_NU::select_working_set(int &out_i, int &out_j)

{

// return i,j such that y_i = y_j and

// i: maximizes -y_i * grad(f)_i, i in I_up(\alpha)

// j: minimizes the decrease of obj value

// (if quadratic coefficeint <= 0, replace it with tau)

// -y_j*grad(f)_j < -y_i*grad(f)_i, j in I_low(\alpha)

double Gmaxp = -INF;

double Gmaxp2 = -INF;

int Gmaxp_idx = -1;

double Gmaxn = -INF;

double Gmaxn2 = -INF;

int Gmaxn_idx = -1;

int Gmin_idx = -1;

double obj_diff_min = INF;

for(int t=0;t<active_size;t++)

if(y[t]==+1)

{

if(!is_upper_bound(t))

if(-G[t] >= Gmaxp)

{

Gmaxp = -G[t];

Gmaxp_idx = t;

}

}

else

{

if(!is_lower_bound(t))

if(G[t] >= Gmaxn)

{

Gmaxn = G[t];

Gmaxn_idx = t;

}

}

int ip = Gmaxp_idx;

int in = Gmaxn_idx;

const Qfloat *Q_ip = NULL;

const Qfloat *Q_in = NULL;

if(ip != -1) // NULL Q_ip not accessed: Gmaxp=-INF if ip=-1

Q_ip = Q->get_Q(ip,active_size);

if(in != -1)

Q_in = Q->get_Q(in,active_size);

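for(int j=0;j<active_size;j++)

{

if(y[j]==+1)

{

if (!is_lower_bound(j))

{

double grad_diff=Gmaxp+G[j];

if (G[j] >= Gmaxp2)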

Gmaxp2 = G[j];

if (grad_diff > 0)

{

double obj_diff;

double quad_coef = QD[ip]+QD[j]-2*Q_ip[j];

if (quad_coef > 0)

obj_diff = -(grad_diff*grad_diff)/quad_coef;

else

obj_diff = -(grad_diff*grad_diff)/TAU;

if (obj_diff <= obj_diff_min)

{

Gmin_idx=j;

obj_diff_min = obj_diff;

}

}

}

}

else

{

if (!is_upper_bound(j))

{

double grad_diff=Gmaxn-G[j];

if (-G[j] >= Gmaxn2)

Gmaxn2 = -G[j];

if (grad_diff > 0)

{

double obj_diff;

double quad_coef = QD[in]+QD[j]-2*Q_in[j];

if (quad_coef > 0)

obj_diff = -(grad_diff*grad_diff)/quad_coef;

else

obj_diff = -(grad_diff*grad_diff)/TAU;

if (obj_diff <= obj_diff_min)

{

Gmin_idx=j;

obj_diff_min = obj_diff;

}

}

}

}

}

if(max(Gmaxp+Gmaxp2,Gmaxn+Gmaxn2) < eps || Gmin_idx == -1)

return 1;

if (y[Gmin_idx] == +1)

out_i = Gmaxp_idx;

else

out_i = Gmaxn_idx;

out_j = Gmin_idx;

return 0;

}

bool Solver_NU::be_shrunk(int i, double Gmax1, double Gmax2, double Gmax3, double Gmax4)

{

if(is_upper_bound(i))

{

if(y[i]==+1)

return(-G[i] > Gmax1);

else

return(-G[i] > Gmax4);

}

else if(is_lower_bound(i))

{

if(y[i]==+1)

return(G[i] > Gmax2);

else

return(G[i] > Gmax3);

}

else

return(false);

}

void Solver_NU::do_shrinking()

{

double Gmax1 = -INF; // max { -y_i * grad(f)_i | y_i = +1, i in I_up(\alpha) }

double Gmax2 = -INF; // max { y_i * grad(f)_i | y_i = +1, i in I_low(\alpha) }

double Gmax3 = -INF; // max { -y_i * grad(f)_i | y_i = -1, i in I_up(\alpha) }

double Gmax4 = -INF; // max { y_i * grad(f)_i | y_i = -1, i in I_low(\alpha) }

// find maximal violating pair first

int i;

for(i=0;i<active_size;i++)

{

if(!is_upper_bound(i))

{

if(y[i]==+1)

{

if(-G[i] > Gmax1) Gmax1 = -G[i];

}

else if(-G[i] > Gmax4) Gmax4 = -G[i];

}

if(!is_lower_bound(i))

{

if(y[i]==+1)

{

if(G[i] > Gmax2) Gmax2 = G[i];

}

else if(G[i] > Gmax3) Gmax3 = G[i];

}

}

if(unshrink == false && max(Gmax1+Gmax2,Gmax3+Gmax4) <= eps*10)

{

unshrink = true;

reconstruct_gradient();

active_size = l;

}

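for(i=0;i<active_size;i++)

if (be_shrunk(i, Gmax1, Gmax2, Gmax3, Gmax4))

{

active_size--;

while (active_size > i)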

{

if (!be_shrunk(active_size, Gmax1, Gmax2, Gmax3, Gmax4))

{

swap_index(i,active_size);

break;

}

active_size--;

}

}

}

double Solver_NU::calculate_rho()

{

int nr_free1 = 0,nr_free2 = 0;

double ub1 = INF, ub2 = INF;

double lb1 = -INF, lb2 = -INF;

double sum_free1 = 0, sum_free2 = 0;

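for(int i=0;i<active_size;i++)

{

if(y[i]==+1)

{

if(is_upper_bound(i))

lb1 = max(lb1,G[i]);

else if(is_lower_bound(i))

ub1 = min(ub1,G[i]);

else

{

++nr_free1;

sum_free1 += G[i];

}

}

else

{

if(is_upper_bound(i))

lb2 = max(lb2,G[i]);

else if(is_lower_bound(i))

ub2 = min(ub2,G[i]);

else

{

++nr_free2;

sum_free2 += G[i];

}

}

}

double r1,r2;

if(nr_free1 > 0)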

r1 = sum_free1/nr_free1;

else

r1 = (ub1+lb1)/2;

if(nr_free2 > 0)

r2 = sum_free2/nr_free2;

else

r2 = (ub2+lb2)/2;

si->r = (r1+r2)/2;

return (r1-r2)/2;

}

//

// Q matrices for various formulations

//

class SVC_Q: public Kernel

{

public:

SVC_Q(const svm_problem& prob, const svm_parameter& param, const schar *y_)

:Kernel(prob.l, prob.x, param)

{

clone(y,y_,prob.l);

cache = new Cache(prob.l,(long int)(param.cache_size*(1<<20)));

QD = new double[prob.l];

for(int i=0;i<prob.l;i++)

QD[i] = (this->*kernel_function)(i,i);

}

Qfloat *get_Q(int i, int len) const

{

Qfloat *data;

int start, j;

if((start = cache->get_data(i,&data,len)) < len)

{

for(j=start;j<len;j++)

data[j] = (Qfloat)(y[i]*y[j]*(this->*kernel_function)(i,j));

}

return data;

}

double *get_QD() const

{

return QD;

}

void swap_index(int i, int j) const

{

cache->swap_index(i,j);

Kernel::swap_index(i,j);

swap(y[i],y[j]);

swap(QD[i],QD[j]);

}

~SVC_Q()

{

delete[] y;

delete cache;

delete[] QD;

}

private:

schar *y;

Cache *cache;

double *QD;

};

class ONE_CLASS_Q: public Kernel

{

public:

ONE_CLASS_Q(const svm_problem& prob, const svm_parameter& param)

:Kernel(prob.l, prob.x, param)

{

cache = new Cache(prob.l,(long int)(param.cache_size*(1<<20)));

QD = new double[prob.l];

for(int i=0;i<prob.l;i++)

QD[i] = (this->*kernel_function)(i,i);

}

Qfloat *get_Q(int i, int len) const

{

Qfloat *data;

int start, j;

if((start = cache->get_data(i,&data,len)) < len)

{

for(j=start;j<len;j++)

data[j] = (Qfloat)(this->*kernel_function)(i,j);

}

return data;

}

double *get_QD() const

{

return QD;

}

void swap_index(int i, int j) const

{

cache->swap_index(i,j);

Kernel::swap_index(i,j);

swap(QD[i],QD[j]);

}

~ONE_CLASS_Q()

{

delete cache;

delete[] QD;

}

private:

Cache *cache;

double *QD;

};

class SVR_Q: public Kernel

{

public:

SVR_Q(const svm_problem& prob, const svm_parameter& param)

:Kernel(prob.l, prob.x, param)

{

l = prob.l;

cache = new Cache(l,(long int)(param.cache_size*(1<<20)));

QD = new double[2*l];

sign = new schar[2*l];

index = new int[2*l];

for(int k=0;k<l;k++)

{

sign[k] = 1;

sign[k+l] = -1;

index[k] = k;

index[k+l] = k;

QD[k] = (this->*kernel_function)(k,k);

QD[k+l] = QD[k];

}

buffer[0] = new Qfloat[2*l];

buffer[1] = new Qfloat[2*l];

next_buffer = 0;

}

void swap_index(int i, int j) const

{

swap(sign[i],sign[j]);

swap(index[i],index[j]);

swap(QD[i],QD[j]);

}

Qfloat *get_Q(int i, int len) const

{

Qfloat *data;

int j, real_i = index[i];

if(cache->get_data(real_i,&data,l) < l)

{

for(j=0;j<l;j++)

data[j] = (Qfloat)(this->*kernel_function)(real_i,j);

}

// reorder and copy

Qfloat *buf = buffer[next_buffer];

next_buffer = 1 - next_buffer;

schar si = sign[i];

for(j=0;jl;

double *minus_ones = new double[l];

schar *y = new schar[l];

int i;

for(i=0;iy[i] > 0) y[i] = +1; else y[i] = -1;

}

Solver s;

s.Solve(l, SVC_Q(*prob,*param,y), minus_ones, y,

alpha, Cp, Cn, param->eps, si, param->shrinking);

double sum_alpha=0;

for(i=0;il));

for(i=0;il;

double nu = param->nu;

schar *y = new schar[l];

for(i=0;iy[i]>0)

y[i] = +1;

else

y[i] = -1;

double sum_pos = nu*l/2;

double sum_neg = nu*l/2;

for(i=0;ieps, si, param->shrinking);

double r = si->r;

info("C = %f\n",1/r);

for(i=0;irho /= r;

si->obj /= (r*r);

si->upper_bound_p = 1/r;

si->upper_bound_n = 1/r;

delete[] y;

delete[] zeros;

}

static void solve_one_class(

const svm_problem *prob, const svm_parameter *param,

double *alpha, Solver::SolutionInfo* si)

{

int l = prob->l;

double *zeros = new double[l];

schar *ones = new schar[l];

int i;

int n = (int)(param->nu*prob->l); // # of alpha's at upper bound

for(i=0;il)

alpha[n] = param->nu * prob->l - n;

for(i=n+1;ieps, si, param->shrinking);

delete[] zeros;

delete[] ones;

}

static void solve_epsilon_svr(

const svm_problem *prob, const svm_parameter *param,

double *alpha, Solver::SolutionInfo* si)

{

int l = prob->l;

double *alpha2 = new double[2*l];

double *linear_term = new double[2*l];

schar *y = new schar[2*l];

int i;

for(i=0;ip - prob->y[i];

y[i] = 1;

alpha2[i+l] = 0;

linear_term[i+l] = param->p + prob->y[i];

y[i+l] = -1;

}

Solver s;

s.Solve(2*l, SVR_Q(*prob,*param), linear_term, y,

alpha2, param->C, param->C, param->eps, si, param->shrinking);

double sum_alpha = 0;

for(i=0;iC*l));

delete[] alpha2;

delete[] linear_term;

delete[] y;

}

static void solve_nu_svr(

const svm_problem *prob, const svm_parameter *param,

double *alpha, Solver::SolutionInfo* si)

{

int l = prob->l;

double C = param->C;

double *alpha2 = new double[2*l];

double *linear_term = new double[2*l];

schar *y = new schar[2*l];

int i;

double sum = C * param->nu * l / 2;

for(i=0;iy[i];

y[i] = 1;

linear_term[i+l] = prob->y[i];

y[i+l] = -1;

}

Solver_NU s;

s.Solve(2*l, SVR_Q(*prob,*param), linear_term, y,

alpha2, C, C, param->eps, si, param->shrinking);

info("epsilon = %f\n",-si->r);

for(i=0;il);

Solver::SolutionInfo si;

switch(param->svm_type)

{

case C_SVC:

solve_c_svc(prob,param,alpha,&si,Cp,Cn);

break;

case NU_SVC:

solve_nu_svc(prob,param,alpha,&si);

break;

case ONE_CLASS:

solve_one_class(prob,param,alpha,&si);

break;

case EPSILON_SVR:

solve_epsilon_svr(prob,param,alpha,&si);

break;

case NU_SVR:

solve_nu_svr(prob,param,alpha,&si);

break;

}

info("obj = %f, rho = %f\n",si.obj,si.rho);

// output SVs

int nSV = 0;

int nBSV = 0;

for(int i=0;i<prob->l;i++)

{

if(fabs(alpha[i]) > 0)

{

++nSV;

if(prob->y[i] > 0)

{

if(fabs(alpha[i]) >= si.upper_bound_p)

++nBSV;

}

else

{

if(fabs(alpha[i]) >= si.upper_bound_n)

++nBSV;

}

}

}

info("nSV = %d, nBSV = %d\n",nSV,nBSV);

decision_function f;

f.alpha = alpha;

f.rho = si.rho;

return f;

}

// Platt's binary SVM Probablistic Output: an improvement from Lin et al.

static void sigmoid_train(

int l, const double *dec_values, const double *labels,

double& A, double& B)

{

double prior1=0, prior0 = 0;

int i;

for (i=0;i 0) prior1+=1;

else prior0+=1;

int max_iter=100; // Maximal number of iterations

double min_step=1e-10; // Minimal step taken in line search

double sigma=1e-12; // For numerically strict PD of Hessian

double eps=1e-5;

double hiTarget=(prior1+1.0)/(prior1+2.0);

double loTarget=1/(prior0+2.0);

double *t=Malloc(double,l);

double fApB,p,q,h11,h22,h21,g1,g2,det,dA,dB,gd,stepsize;

double newA,newB,newf,d1,d2;

int iter;

// Initial Point and Initial Fun Value

A=0.0; B=log((prior0+1.0)/(prior1+1.0));

double fval = 0.0;

for (i=0;i0) t[i]=hiTarget;

else t[i]=loTarget;

fApB = dec_values[i]*A+B;

if (fApB>=0)

fval += t[i]*fApB + log(1+exp(-fApB));

else

fval += (t[i] - 1)*fApB +log(1+exp(fApB));

}

for (iter=0;iter= 0)

{

p=exp(-fApB)/(1.0+exp(-fApB));

q=1.0/(1.0+exp(-fApB));

}

else

{

p=1.0/(1.0+exp(fApB));

q=exp(fApB)/(1.0+exp(fApB));

}

d2=p*q;

h11+=dec_values[i]*dec_values[i]*d2;

h22+=d2;

h21+=dec_values[i]*d2;

d1=t[i]-p;

g1+=dec_values[i]*d1;

g2+=d1;

}

// Stopping Criteria

if (fabs(g1)= min_step)

{

newA = A + stepsize * dA;

newB = B + stepsize * dB;

// New function value

newf = 0.0;

for (i=0;i= 0)

newf += t[i]*fApB + log(1+exp(-fApB));

else

newf += (t[i] - 1)*fApB +log(1+exp(fApB));

}

// Check sufficient decrease

if (newf=max_iter)

info("Reaching maximal iterations in two-class probability estimates\n");

free(t);

}

static double sigmoid_predict(double decision_value, double A, double B)

{

double fApB = decision_value*A+B;

// 1-p used later; avoid catastrophic cancellation

if (fApB >= 0)

return exp(-fApB)/(1.0+exp(-fApB));

else

return 1.0/(1+exp(fApB)) ;

}

// Method 2 from the multiclass_prob paper by Wu, Lin, and Weng

static void multiclass_probability(int k, double **r, double *p)

{

int t,j;

int iter = 0, max_iter=max(100,k);

double **Q=Malloc(double *,k);

double *Qp=Malloc(double,k);

double pQp, eps=0.005/k;

for (t=0;tmax_error)

max_error=error;

}

if (max_error=max_iter)

info("Exceeds max_iter in multiclass_prob\n");

for(t=0;tl);

double *dec_values = Malloc(double,prob->l);

// random shuffle

for(i=0;i<prob->l;i++) perm[i]=i;

for(i=0;i<prob->l;i++)

{

int j = i+rand()%(prob->l-i);

swap(perm[i],perm[j]);

}

for(i=0;il/nr_fold;

int end = (i+1)*prob->l/nr_fold;

int j,k;

struct svm_problem subprob;

subprob.l = prob->l-(end-begin);

subprob.x = Malloc(struct svm_node*,subprob.l);

subprob.y = Malloc(double,subprob.l);

k=0;

for(j=0;jx[perm[j]];

subprob.y[k] = prob->y[perm[j]];

++k;

}

for(j=end;j<prob->l;j++)

{

subprob.x[k] = prob->x[perm[j]];

subprob.y[k] = prob->y[perm[j]];

++k;

}

int p_count=0,n_count=0;

for(j=0;j0)

p_count++;

else

n_count++;

if(p_count==0 && n_count==0)

for(j=begin;j 0 && n_count == 0)

for(j=begin;j 0)

for(j=begin;jx[perm[j]],&(dec_values[perm[j]]));

// ensure +1 -1 order; reason not using CV subroutine

dec_values[perm[j]] *= submodel->label[0];

}

svm_free_and_destroy_model(&submodel);

svm_destroy_param(&subparam);

}

free(subprob.x);

free(subprob.y);

}

sigmoid_train(prob->l,dec_values,prob->y,probA,probB);

free(dec_values);

free(perm);

}

// Return parameter of a Laplace distribution

static double svm_svr_probability(

const svm_problem *prob, const svm_parameter *param)

{

int i;

int nr_fold = 5;

double *ymv = Malloc(double,prob->l);

double mae = 0;

svm_parameter newparam = *param;

newparam.probability = 0;

svm_cross_validation(prob,&newparam,nr_fold,ymv);

for(i=0;i<prob->l;i++)

{

ymv[i]=prob->y[i]-ymv[i];

mae += fabs(ymv[i]);

}

mae /= prob->l;

double std=sqrt(2*mae*mae);

int count=0;

mae=0;

for(i=0;i<prob->l;i++)

if (fabs(ymv[i]) > 5*std)

count=count+1;

else

mae+=fabs(ymv[i]);

mae /= (prob->l-count);

info("Prob. model for test data: target value = predicted value + z,\nz: Laplace distribution e^(-|z|/sigma)/(2sigma),sigma= %g\n",mae);

free(ymv);

return mae;

}
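
`svm_svr_probability` estimates the scale of a Laplace noise model from cross-validation residuals: it takes the mean absolute error, discards residuals larger than 5 standard deviations (with std = sqrt(2)·mae for a Laplace distribution), and averages the rest. The sketch below reproduces only that arithmetic on a plain vector of residuals. The function name `laplace_scale` is hypothetical.

```cpp
#include <cassert>
#include <cmath>
#include <vector>

// Hypothetical helper mirroring the outlier-trimmed mean absolute error
// computed at the end of svm_svr_probability.
double laplace_scale(const std::vector<double>& residuals)
{
    assert(!residuals.empty());
    double mae = 0;
    for (double r : residuals) mae += std::fabs(r);
    mae /= residuals.size();

    // standard deviation of a Laplace distribution with scale mae
    double std_dev = std::sqrt(2 * mae * mae);

    int dropped = 0;
    double sum = 0;
    for (double r : residuals)
    {
        if (std::fabs(r) > 5 * std_dev)
            ++dropped;            // treat as an outlier
        else
            sum += std::fabs(r);
    }
    return sum / static_cast<double>(residuals.size() - dropped);
}
```

At least one residual is never larger than the mean, so the divisor cannot reach zero.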

// label: label name, start: begin of each class, count: #data of classes, perm: indices to the original data

// perm, length l, must be allocated before calling this subroutine

static void svm_group_classes(const svm_problem *prob, int *nr_class_ret, int **label_ret, int **start_ret, int **count_ret, int *perm)

{

int l = prob->l;

int max_nr_class = 16;

int nr_class = 0;

int *label = Malloc(int,max_nr_class);

int *count = Malloc(int,max_nr_class);

int *data_label = Malloc(int,l);

int i;

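for(i=0;i<l;i++)

{

int this_label = (int)prob->y[i];

int j;

for(j=0;j<nr_class;j++)

{

if(this_label == label[j])

{

++count[j];

break;

}

}

data_label[i] = j;

if(j == nr_class)

{

if(nr_class == max_nr_class)

{

max_nr_class *= 2;

label = (int *)realloc(label,max_nr_class*sizeof(int));

count = (int *)realloc(count,max_nr_class*sizeof(int));

}

label[nr_class] = this_label;

count[nr_class] = 1;

++nr_class;

}

}

//

// Labels are ordered by their first occurrence in the training set.

// However, for two-class sets with -1/+1 labels and -1 appears first,

// we swap labels to ensure that internally the binary SVM has positive data corresponding to the +1 instances.

//

if (nr_class == 2 && label[0] == -1 && label[1] == 1)

{

swap(label[0],label[1]);

swap(count[0],count[1]);

for(i=0;i<l;i++)

{

if(data_label[i] == 0)

data_label[i] = 1;

else

data_label[i] = 0;

}

}

int *start = Malloc(int,nr_class);

start[0] = 0;

for(i=1;i<nr_class;i++)

start[i] = start[i-1]+count[i-1];

for(i=0;i<l;i++)

{

perm[start[data_label[i]]] = i;

++start[data_label[i]];

}

start[0] = 0;

for(i=1;i<nr_class;i++)

start[i] = start[i-1]+count[i-1];

*nr_class_ret = nr_class;

*label_ret = label;

*start_ret = start;

*count_ret = count;

free(data_label);

}

//

// Interface functions

//

svm_model *svm_train(const svm_problem *prob, const svm_parameter *param)

{

svm_model *model = Malloc(svm_model,1);

model->param = *param;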

model->free_sv = 0; // XXX

if(param->svm_type == ONE_CLASS ||

param->svm_type == EPSILON_SVR ||

param->svm_type == NU_SVR)

{

// regression or one-class-svm

model->nr_class = 2;

model->label = NULL;

model->nSV = NULL;

model->probA = NULL; model->probB = NULL;

model->sv_coef = Malloc(double *,1);

if(param->probability &&

(param->svm_type == EPSILON_SVR ||

param->svm_type == NU_SVR))

{

model->probA = Malloc(double,1);

model->probA[0] = svm_svr_probability(prob,param);

}

decision_function f = svm_train_one(prob,param,0,0);

model->rho = Malloc(double,1);

model->rho[0] = f.rho;

int nSV = 0;

int i;

for(i=0;i<prob->l;i++)

if(fabs(f.alpha[i]) > 0) ++nSV;

model->l = nSV;

model->SV = Malloc(svm_node *,nSV);

model->sv_coef[0] = Malloc(double,nSV);

model->sv_indices = Malloc(int,nSV);

int j = 0;

for(i=0;i<prob->l;i++)

if(fabs(f.alpha[i]) > 0)

{

model->SV[j] = prob->x[i];

model->sv_coef[0][j] = f.alpha[i];

model->sv_indices[j] = i+1;

++j;

}

free(f.alpha);

}

else

{

// classification

int l = prob->l;

int nr_class;

int *label = NULL;

int *start = NULL;

int *count = NULL;

int *perm = Malloc(int,l);

// group the training samples that share the same label

svm_group_classes(prob,&nr_class,&label,&start,&count,perm);

if(nr_class == 1)

info("WARNING: training data in only one class. See README for details.\n");

svm_node **x = Malloc(svm_node *,l);

int i;

for(i=0;i<l;i++)

x[i] = prob->x[perm[i]];

// calculate weighted C

double *weighted_C = Malloc(double, nr_class);

for(i=0;i<nr_class;i++)

weighted_C[i] = param->C;

for(i=0;i<param->nr_weight;i++)

{

int j;

for(j=0;j<nr_class;j++)

if(param->weight_label[i] == label[j])

break;

if(j == nr_class)

fprintf(stderr,"WARNING: class label %d specified in weight is not found\n", param->weight_label[i]);

else

weighted_C[j] *= param->weight[i];

}

// train k*(k-1)/2 models

bool *nonzero = Malloc(bool,l);

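for(i=0;i<l;i++)

nonzero[i] = false;

decision_function *f = Malloc(decision_function,nr_class*(nr_class-1)/2);

double *probA=NULL,*probB=NULL;

if (param->probability)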

{

probA=Malloc(double,nr_class*(nr_class-1)/2);

probB=Malloc(double,nr_class*(nr_class-1)/2);

}

int p = 0;

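for(i=0;i<nr_class;i++)

for(int j=i+1;j<nr_class;j++)

{

svm_problem sub_prob;

int si = start[i], sj = start[j];

int ci = count[i], cj = count[j];

sub_prob.l = ci+cj;

sub_prob.x = Malloc(svm_node *,sub_prob.l);

sub_prob.y = Malloc(double,sub_prob.l);

int k;

for(k=0;k<ci;k++)

{

sub_prob.x[k] = x[si+k];

sub_prob.y[k] = +1;

}

for(k=0;k<cj;k++)

{

sub_prob.x[ci+k] = x[sj+k];

sub_prob.y[ci+k] = -1;

}

if(param->probability)

// fit the sigmoid parameters probA[p] and probB[p] for this class pair from the given parameters and data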

svm_binary_svc_probability(&sub_prob,param,weighted_C[i],weighted_C[j],probA[p],probB[p]);

f[p] = svm_train_one(&sub_prob,param,weighted_C[i],weighted_C[j]);

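for(k=0;k<ci;k++)

if(!nonzero[si+k] && fabs(f[p].alpha[k]) > 0)

nonzero[si+k] = true;

for(k=0;k<cj;k++)

if(!nonzero[sj+k] && fabs(f[p].alpha[ci+k]) > 0)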

nonzero[sj+k] = true;

free(sub_prob.x);

free(sub_prob.y);

++p;

}

// build output

model->nr_class = nr_class;

model->label = Malloc(int,nr_class);

for(i=0;i<nr_class;i++)

model->label[i] = label[i];

model->rho = Malloc(double,nr_class*(nr_class-1)/2);

for(i=0;i<nr_class*(nr_class-1)/2;i++)

model->rho[i] = f[i].rho;

if(param->probability)

{

model->probA = Malloc(double,nr_class*(nr_class-1)/2);

model->probB = Malloc(double,nr_class*(nr_class-1)/2);

for(i=0;i<nr_class*(nr_class-1)/2;i++)

{

model->probA[i] = probA[i];

model->probB[i] = probB[i];

}

}

else

{

model->probA=NULL;

model->probB=NULL;

}

int total_sv = 0;

int *nz_count = Malloc(int,nr_class);

model->nSV = Malloc(int,nr_class);

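for(i=0;i<nr_class;i++)

{

int nSV = 0;

for(int j=0;j<count[i];j++)

if(nonzero[start[i]+j])

{

++nSV;

++total_sv;

}

model->nSV[i] = nSV;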

nz_count[i] = nSV;

}

info("Total nSV = %d\n",total_sv);

model->l = total_sv;

model->SV = Malloc(svm_node *,total_sv);

model->sv_indices = Malloc(int,total_sv);

p = 0;

for(i=0;i<l;i++)

if(nonzero[i])

{

model->SV[p] = x[i];

model->sv_indices[p++] = perm[i] + 1;

}

int *nz_start = Malloc(int,nr_class);

nz_start[0] = 0;

for(i=1;i<nr_class;i++)

nz_start[i] = nz_start[i-1]+nz_count[i-1];

model->sv_coef = Malloc(double *,nr_class-1);

for(i=0;i<nr_class-1;i++)

model->sv_coef[i] = Malloc(double,total_sv);

p = 0;

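for(i=0;i<nr_class;i++)

for(int j=i+1;j<nr_class;j++)

{

// classifier (i,j): coefficients with

// i are in sv_coef[j-1][nz_start[i]...],

// j are in sv_coef[i][nz_start[j]...]

int si = start[i];

int sj = start[j];

int ci = count[i];

int cj = count[j];

int q = nz_start[i];

int k;

for(k=0;k<ci;k++)

if(nonzero[si+k])

model->sv_coef[j-1][q++] = f[p].alpha[k];

q = nz_start[j];

for(k=0;k<cj;k++)

if(nonzero[sj+k])

model->sv_coef[i][q++] = f[p].alpha[ci+k];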

++p;

}

free(label);

free(probA);

free(probB);

free(count);

free(perm);

free(start);

free(x);

free(weighted_C);

free(nonzero);

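for(i=0;i<nr_class*(nr_class-1)/2;i++)

free(f[i].alpha);

free(f);

free(nz_count);

free(nz_start);

}

return model;

}

// Stratified cross validation

void svm_cross_validation(const svm_problem *prob, const svm_parameter *param, int nr_fold, double *target)

{

int i;

int *fold_start;

int l = prob->l;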

int *perm = Malloc(int,l);

int nr_class;

if (nr_fold > l)

{

nr_fold = l;

fprintf(stderr,"WARNING: # folds > # data. Will use # folds = # data instead (i.e., leave-one-out cross validation)\n");

}

fold_start = Malloc(int,nr_fold+1);

// stratified cv may not give leave-one-out rate

// Each class to l folds -> some folds may have zero elements

if((param->svm_type == C_SVC ||

param->svm_type == NU_SVC) && nr_fold < l)

{

int *start = NULL;

int *label = NULL;

int *count = NULL;

svm_group_classes(prob,&nr_class,&label,&start,&count,perm);

// random shuffle and then data grouped by fold using the array perm

int *fold_count = Malloc(int,nr_fold);

int c;

int *index = Malloc(int,l);

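for(i=0;i<l;i++)

index[i]=perm[i];

for (c=0; c<nr_class; c++)

for(i=0;i<count[c];i++)

{

int j = i+rand()%(count[c]-i);

swap(index[start[c]+j],index[start[c]+i]);

}

for(i=0;i<nr_fold;i++)

{

fold_count[i] = 0;

for (c=0; c<nr_class;c++)

fold_count[i]+=(i+1)*count[c]/nr_fold-i*count[c]/nr_fold;

}

fold_start[0]=0;

for (i=1;i<=nr_fold;i++)

fold_start[i] = fold_start[i-1]+fold_count[i-1];

for (c=0; c<nr_class;c++)

for(i=0;i<nr_fold;i++)

{

int begin = start[c]+i*count[c]/nr_fold;

int end = start[c]+(i+1)*count[c]/nr_fold;

for(int j=begin;j<end;j++)

{

perm[fold_start[i]] = index[j];

fold_start[i]++;

}

}

fold_start[0]=0;

for (i=1;i<=nr_fold;i++)

fold_start[i] = fold_start[i-1]+fold_count[i-1];

free(start);

free(label);

free(count);

free(index);

free(fold_count);

}

else

{

for(i=0;i<l;i++) perm[i]=i;

for(i=0;i<l;i++)

{

int j = i+rand()%(l-i);

swap(perm[i],perm[j]);

}

for(i=0;i<=nr_fold;i++)

fold_start[i]=i*l/nr_fold;

}

for(i=0;i<nr_fold;i++)

{

int begin = fold_start[i];

int end = fold_start[i+1];

int j,k;

struct svm_problem subprob;

subprob.l = l-(end-begin);

subprob.x = Malloc(struct svm_node*,subprob.l);

subprob.y = Malloc(double,subprob.l);

k=0;

for(j=0;j<begin;j++)

{

subprob.x[k] = prob->x[perm[j]];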

subprob.y[k] = prob->y[perm[j]];

++k;

}

for(j=end;j<l;j++)

{

subprob.x[k] = prob->x[perm[j]];

subprob.y[k] = prob->y[perm[j]];

++k;

}

struct svm_model *submodel = svm_train(&subprob,param);

if(param->probability &&

(param->svm_type == C_SVC || param->svm_type == NU_SVC))

{

double *prob_estimates=Malloc(double,svm_get_nr_class(submodel));

for(j=begin;j<end;j++)

target[perm[j]] = svm_predict_probability(submodel,prob->x[perm[j]],prob_estimates);

free(prob_estimates);

}

else

for(j=begin;j<end;j++)

target[perm[j]] = svm_predict(submodel,prob->x[perm[j]]);

svm_free_and_destroy_model(&submodel);

free(subprob.x);

free(subprob.y);

}

free(fold_start);

free(perm);

}

int svm_get_svm_type(const svm_model *model)

{

return model->param.svm_type;

}

int svm_get_nr_class(const svm_model *model)

{

return model->nr_class;

}

void svm_get_labels(const svm_model *model, int* label)

{

if (model->label != NULL)

for(int i=0;i<model->nr_class;i++)

label[i] = model->label[i];

}

void svm_get_sv_indices(const svm_model *model, int* indices)

{

if (model->sv_indices != NULL)

for(int i=0;i<model->l;i++)

indices[i] = model->sv_indices[i];

}

int svm_get_nr_sv(const svm_model *model)

{

return model->l;

}

double svm_get_svr_probability(const svm_model *model)

{

if ((model->param.svm_type == EPSILON_SVR || model->param.svm_type == NU_SVR) &&

model->probA!=NULL)

return model->probA[0];

else

{

fprintf(stderr,"Model doesn't contain information for SVR probability inference\n");

return 0;

}

}

double svm_predict_values(const svm_model *model, const svm_node *x, double* dec_values)

{

int i;

if(model->param.svm_type == ONE_CLASS ||

model->param.svm_type == EPSILON_SVR ||

model->param.svm_type == NU_SVR)

{

double *sv_coef = model->sv_coef[0];

double sum = 0;

for(i=0;i<model->l;i++)

sum += sv_coef[i] * Kernel::k_function(x,model->SV[i],model->param);

sum -= model->rho[0];

*dec_values = sum;

if(model->param.svm_type == ONE_CLASS)

return (sum>0)?1:-1;

else

return sum;

}

else

{

int nr_class = model->nr_class;

int l = model->l;

double *kvalue = Malloc(double,l);

for(i=0;i<l;i++)

kvalue[i] = Kernel::k_function(x,model->SV[i],model->param);

int *start = Malloc(int,nr_class);

start[0] = 0;

for(i=1;i<nr_class;i++)

start[i] = start[i-1]+model->nSV[i-1];

int *vote = Malloc(int,nr_class);

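for(i=0;i<nr_class;i++)

vote[i] = 0;

int p=0;

for(i=0;i<nr_class;i++)

for(int j=i+1;j<nr_class;j++)

{

double sum = 0;

int si = start[i];

int sj = start[j];

int ci = model->nSV[i];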

int cj = model->nSV[j];

int k;

double *coef1 = model->sv_coef[j-1];

double *coef2 = model->sv_coef[i];

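for(k=0;k<ci;k++)

sum += coef1[si+k] * kvalue[si+k];

for(k=0;k<cj;k++)

sum += coef2[sj+k] * kvalue[sj+k];

sum -= model->rho[p];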

dec_values[p] = sum;

if(dec_values[p] > 0)

++vote[i];

else

++vote[j];

p++;

}

int vote_max_idx = 0;

for(i=1;i<nr_class;i++)

if(vote[i] > vote[vote_max_idx])

vote_max_idx = i;

free(kvalue);

free(start);

free(vote);

return model->label[vote_max_idx];

}

}
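
For classification, `svm_predict_values` combines the k*(k-1)/2 pairwise decision values by voting: a positive value for the pair (i, j) is a vote for class i, otherwise for class j, and the class with the most votes wins. The sketch below isolates that voting step. The function name `vote_winner` is hypothetical, and the decision values are assumed to be ordered (0,1), (0,2), ..., (k-2,k-1) as in the loop above.

```cpp
#include <cassert>
#include <vector>

// Hypothetical helper: returns the index of the winning class given one
// decision value per class pair, ordered (0,1),(0,2),...,(k-2,k-1).
int vote_winner(int nr_class, const std::vector<double>& dec_values)
{
    assert(static_cast<int>(dec_values.size()) == nr_class * (nr_class - 1) / 2);
    std::vector<int> vote(nr_class, 0);
    int p = 0;
    for (int i = 0; i < nr_class; i++)
        for (int j = i + 1; j < nr_class; j++)
        {
            if (dec_values[p] > 0)
                ++vote[i];   // classifier (i,j) prefers class i
            else
                ++vote[j];   // classifier (i,j) prefers class j
            p++;
        }
    int best = 0;
    for (int i = 1; i < nr_class; i++)
        if (vote[i] > vote[best])   // strict '>' keeps the lowest index on ties
            best = i;
    return best;
}
```

The returned value is an index into the class list; the library maps it back to the user's label through `model->label[...]`.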

double svm_predict(const svm_model *model, const svm_node *x)

{

int nr_class = model->nr_class;

double *dec_values;

if(model->param.svm_type == ONE_CLASS ||

model->param.svm_type == EPSILON_SVR ||

model->param.svm_type == NU_SVR)

dec_values = Malloc(double, 1);

else

dec_values = Malloc(double, nr_class*(nr_class-1)/2);

double pred_result = svm_predict_values(model, x, dec_values);

free(dec_values);

return pred_result;

}

double svm_predict_probability(

const svm_model *model, const svm_node *x, double *prob_estimates)

{

if ((model->param.svm_type == C_SVC || model->param.svm_type == NU_SVC) &&

model->probA!=NULL && model->probB!=NULL)

{

int i;

int nr_class = model->nr_class;

double *dec_values = Malloc(double, nr_class*(nr_class-1)/2);

svm_predict_values(model, x, dec_values);

double min_prob=1e-7;

double **pairwise_prob=Malloc(double *,nr_class);

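for(i=0;i<nr_class;i++)

pairwise_prob[i]=Malloc(double,nr_class);

int k=0;

for(i=0;i<nr_class;i++)

for(int j=i+1;j<nr_class;j++)

{

pairwise_prob[i][j]=min(max(sigmoid_predict(dec_values[k],model->probA[k],model->probB[k]),min_prob),1-min_prob);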

pairwise_prob[j][i]=1-pairwise_prob[i][j];

k++;

}

if (nr_class == 2)

{

prob_estimates[0] = pairwise_prob[0][1];

prob_estimates[1] = pairwise_prob[1][0];

}

else

multiclass_probability(nr_class,pairwise_prob,prob_estimates);

int prob_max_idx = 0;

for(i=1;i<nr_class;i++)

if(prob_estimates[i] > prob_estimates[prob_max_idx])

prob_max_idx = i;

for(i=0;i<nr_class;i++)

free(pairwise_prob[i]);

free(dec_values);

free(pairwise_prob);

return model->label[prob_max_idx];

}

else

return svm_predict(model, x);

}

static const char *svm_type_table[] =

{

"c_svc","nu_svc","one_class","epsilon_svr","nu_svr",NULL

};

static const char *kernel_type_table[]=

{

"linear","polynomial","rbf","sigmoid","precomputed",NULL

};

int svm_save_model(const char *model_file_name, const svm_model *model)

{

FILE *fp = fopen(model_file_name,"w");

if(fp==NULL) return -1;

char *old_locale = setlocale(LC_ALL, NULL);

if (old_locale) {

old_locale = _strdup(old_locale);

}

setlocale(LC_ALL, "C");

const svm_parameter& param = model->param;

fprintf(fp,"svm_type %s\n", svm_type_table[param.svm_type]);

fprintf(fp,"kernel_type %s\n", kernel_type_table[param.kernel_type]);

if(param.kernel_type == POLY)

fprintf(fp,"degree %d\n", param.degree);

if(param.kernel_type == POLY || param.kernel_type == RBF || param.kernel_type == SIGMOID)

fprintf(fp,"gamma %.17g\n", param.gamma);

if(param.kernel_type == POLY || param.kernel_type == SIGMOID)

fprintf(fp,"coef0 %.17g\n", param.coef0);

int nr_class = model->nr_class;

int l = model->l;

fprintf(fp, "nr_class %d\n", nr_class);

fprintf(fp, "total_sv %d\n",l);

{

fprintf(fp, "rho");

for(int i=0;i<nr_class*(nr_class-1)/2;i++)

fprintf(fp," %.17g",model->rho[i]);

fprintf(fp, "\n");

}

if(model->label)

{

fprintf(fp, "label");

for(int i=0;i<nr_class;i++)

fprintf(fp," %d",model->label[i]);

fprintf(fp, "\n");

}

if(model->probA) // regression has probA only

{

fprintf(fp, "probA");

for(int i=0;i<nr_class*(nr_class-1)/2;i++)

fprintf(fp," %.17g",model->probA[i]);

fprintf(fp, "\n");

}

if(model->probB)

{

fprintf(fp, "probB");

for(int i=0;i<nr_class*(nr_class-1)/2;i++)

fprintf(fp," %.17g",model->probB[i]);

fprintf(fp, "\n");

}

if(model->nSV)

{

fprintf(fp, "nr_sv");

for(int i=0;i<nr_class;i++)

fprintf(fp," %d",model->nSV[i]);

fprintf(fp, "\n");

}

fprintf(fp, "SV\n");

const double * const *sv_coef = model->sv_coef;

const svm_node * const *SV = model->SV;

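for(int i=0;i<l;i++)

{

for(int j=0;j<nr_class-1;j++)

fprintf(fp, "%.17g ",sv_coef[j][i]);

const svm_node *p = SV[i];

if(param.kernel_type == PRECOMPUTED)

fprintf(fp,"0:%d ",(int)(p->value));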

else

while(p->index != -1)

{

fprintf(fp,"%d:%.8g ",p->index,p->value);

p++;

}

fprintf(fp, "\n");

}

setlocale(LC_ALL, old_locale);

free(old_locale);

if (ferror(fp) != 0 || fclose(fp) != 0) return -1;

else return 0;

}

static char *line = NULL;

static int max_line_len;

static char* readline(FILE *input)

{

int len;

if(fgets(line,max_line_len,input) == NULL)

return NULL;

while(strrchr(line,'\n') == NULL)

{

max_line_len *= 2;

line = (char *) realloc(line,max_line_len);

len = (int) strlen(line);

if(fgets(line+len,max_line_len-len,input) == NULL)

break;

}

return line;

}

//

// FSCANF helps to handle fscanf failures.

// Its do-while block avoids the ambiguity when

// if (...)

// FSCANF();

// is used

//

#define FSCANF(_stream, _format, _var) do{ if (fscanf(_stream, _format, _var) != 1) return false; }while(0)

bool read_model_header(FILE *fp, svm_model* model)

{

svm_parameter& param = model->param;

// parameters for training only won't be assigned, but arrays are assigned as NULL for safety

param.nr_weight = 0;

param.weight_label = NULL;

param.weight = NULL;

char cmd[81];

while(1)

{

FSCANF(fp,"%80s",cmd);

if(strcmp(cmd,"svm_type")==0)

{

FSCANF(fp,"%80s",cmd);

int i;

for(i=0;svm_type_table[i];i++)

{

if(strcmp(svm_type_table[i],cmd)==0)

{

param.svm_type=i;

break;

}

}

if(svm_type_table[i] == NULL)

{

fprintf(stderr,"unknown svm type.\n");

return false;

}

}

else if(strcmp(cmd,"kernel_type")==0)

{

FSCANF(fp,"%80s",cmd);

int i;

for(i=0;kernel_type_table[i];i++)

{

if(strcmp(kernel_type_table[i],cmd)==0)

{

param.kernel_type=i;

break;

}

}

if(kernel_type_table[i] == NULL)

{

fprintf(stderr,"unknown kernel function.\n");

return false;

}

}

else if(strcmp(cmd,"degree")==0)

FSCANF(fp,"%d",&param.degree);

else if(strcmp(cmd,"gamma")==0)

FSCANF(fp,"%lf",&param.gamma);

else if(strcmp(cmd,"coef0")==0)

FSCANF(fp,"%lf",&param.coef0);

else if(strcmp(cmd,"nr_class")==0)

FSCANF(fp,"%d",&model->nr_class);

else if(strcmp(cmd,"total_sv")==0)

FSCANF(fp,"%d",&model->l);

else if(strcmp(cmd,"rho")==0)

{

int n = model->nr_class * (model->nr_class-1)/2;

model->rho = Malloc(double,n);

for(int i=0;i<n;i++)

FSCANF(fp,"%lf",&model->rho[i]);

}

else if(strcmp(cmd,"label")==0)

{

int n = model->nr_class;

model->label = Malloc(int,n);

for(int i=0;i<n;i++)

FSCANF(fp,"%d",&model->label[i]);

}

else if(strcmp(cmd,"probA")==0)

{

int n = model->nr_class * (model->nr_class-1)/2;

model->probA = Malloc(double,n);

for(int i=0;i<n;i++)

FSCANF(fp,"%lf",&model->probA[i]);

}

else if(strcmp(cmd,"probB")==0)

{

int n = model->nr_class * (model->nr_class-1)/2;

model->probB = Malloc(double,n);

for(int i=0;i<n;i++)

FSCANF(fp,"%lf",&model->probB[i]);

}

else if(strcmp(cmd,"nr_sv")==0)

{

int n = model->nr_class;

model->nSV = Malloc(int,n);

for(int i=0;i<n;i++)

FSCANF(fp,"%d",&model->nSV[i]);

}

else if(strcmp(cmd,"SV")==0)

{

while(1)

{

int c = getc(fp);

if(c==EOF || c=='\n') break;

}

break;

}

else

{

fprintf(stderr,"unknown text in model file: [%s]\n",cmd);

return false;

}

}

return true;

}

svm_model *svm_load_model(const char *model_file_name)

{

FILE *fp = fopen(model_file_name,"rb");

if(fp==NULL) return NULL;

char *old_locale = setlocale(LC_ALL, NULL);

if (old_locale) {

old_locale = _strdup(old_locale);

}

setlocale(LC_ALL, "C");

// read parameters

svm_model *model = Malloc(svm_model,1);

model->rho = NULL;

model->probA = NULL;

model->probB = NULL;

model->sv_indices = NULL;

model->label = NULL;

model->nSV = NULL;

// read header

if (!read_model_header(fp, model))

{

fprintf(stderr, "ERROR: fscanf failed to read model\n");

setlocale(LC_ALL, old_locale);

free(old_locale);

free(model->rho);

free(model->label);

free(model->nSV);

free(model);

return NULL;

}

// read sv_coef and SV

int elements = 0;

long pos = ftell(fp);

max_line_len = 1024;

line = Malloc(char,max_line_len);

char *p,*endptr,*idx,*val;

while(readline(fp)!=NULL)

{

p = strtok(line,":");

while(1)

{

p = strtok(NULL,":");

if(p == NULL)

break;

++elements;

}

}

elements += model->l;

fseek(fp,pos,SEEK_SET);

int m = model->nr_class - 1;

int l = model->l;

model->sv_coef = Malloc(double *,m);

int i;

for(i=0;i<m;i++)

model->sv_coef[i] = Malloc(double,l);

model->SV = Malloc(svm_node*,l);

svm_node *x_space = NULL;

if(l>0) x_space = Malloc(svm_node,elements);

int j=0;

for(i=0;i<l;i++)

{

readline(fp);

model->SV[i] = &x_space[j];

p = strtok(line, " \t");

model->sv_coef[0][i] = strtod(p,&endptr);

for(int k=1;k<m;k++)

{

p = strtok(NULL, " \t");

model->sv_coef[k][i] = strtod(p,&endptr);

}

while(1)

{

idx = strtok(NULL, ":");

val = strtok(NULL, " \t");

if(val == NULL)

break;

x_space[j].index = (int) strtol(idx,&endptr,10);

x_space[j].value = strtod(val,&endptr);

++j;

}

x_space[j++].index = -1;

}

free(line);

setlocale(LC_ALL, old_locale);

free(old_locale);

if (ferror(fp) != 0 || fclose(fp) != 0)

return NULL;

model->free_sv = 1; // XXX

return model;

}

void svm_free_model_content(svm_model* model_ptr)

{

if(model_ptr->free_sv && model_ptr->l > 0 && model_ptr->SV != NULL)

free((void *)(model_ptr->SV[0]));

if(model_ptr->sv_coef)

{

for(int i=0;i<model_ptr->nr_class-1;i++)

free(model_ptr->sv_coef[i]);

}

free(model_ptr->SV);

model_ptr->SV = NULL;

free(model_ptr->sv_coef);

model_ptr->sv_coef = NULL;

free(model_ptr->rho);

model_ptr->rho = NULL;

free(model_ptr->label);

model_ptr->label= NULL;

free(model_ptr->probA);

model_ptr->probA = NULL;

free(model_ptr->probB);

model_ptr->probB= NULL;

free(model_ptr->sv_indices);

model_ptr->sv_indices = NULL;

free(model_ptr->nSV);

model_ptr->nSV = NULL;

}

void svm_free_and_destroy_model(svm_model** model_ptr_ptr)

{

if(model_ptr_ptr != NULL && *model_ptr_ptr != NULL)

{

svm_free_model_content(*model_ptr_ptr);

free(*model_ptr_ptr);

*model_ptr_ptr = NULL;

}

}

void svm_destroy_param(svm_parameter* param)

{

free(param->weight_label);

free(param->weight);

}

const char *svm_check_parameter(const svm_problem *prob, const svm_parameter *param)

{

// svm_type

int svm_type = param->svm_type;

if(svm_type != C_SVC &&

svm_type != NU_SVC &&

svm_type != ONE_CLASS &&

svm_type != EPSILON_SVR &&

svm_type != NU_SVR)

return "unknown svm type";

// kernel_type, degree

int kernel_type = param->kernel_type;

if(kernel_type != LINEAR &&

kernel_type != POLY &&

kernel_type != RBF &&

kernel_type != SIGMOID &&

kernel_type != PRECOMPUTED)

return "unknown kernel type";

if(param->gamma < 0)

return "gamma < 0";

if(param->degree < 0)

return "degree of polynomial kernel < 0";

// cache_size,eps,C,nu,p,shrinking

if(param->cache_size <= 0)

return "cache_size <= 0";

if(param->eps <= 0)

return "eps <= 0";

if(svm_type == C_SVC ||

svm_type == EPSILON_SVR ||

svm_type == NU_SVR)

if(param->C <= 0)

return "C <= 0";

if(svm_type == NU_SVC ||

svm_type == ONE_CLASS ||

svm_type == NU_SVR)

if(param->nu <= 0 || param->nu > 1)

return "nu <= 0 or nu > 1";

if(svm_type == EPSILON_SVR)

if(param->p < 0)

return "p < 0";

if(param->shrinking != 0 &&

param->shrinking != 1)

return "shrinking != 0 and shrinking != 1";

if(param->probability != 0 &&

param->probability != 1)

return "probability != 0 and probability != 1";

if(param->probability == 1 &&

svm_type == ONE_CLASS)

return "one-class SVM probability output not supported yet";

// check whether nu-svc is feasible

if(svm_type == NU_SVC)

{

int l = prob->l;

int max_nr_class = 16;

int nr_class = 0;

int *label = Malloc(int,max_nr_class);

int *count = Malloc(int,max_nr_class);

int i;

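for(i=0;i<l;i++)

{

int this_label = (int)prob->y[i];

int j;

for(j=0;j<nr_class;j++)

if(this_label == label[j])

{

++count[j];

break;

}

if(j == nr_class)

{

if(nr_class == max_nr_class)

{

max_nr_class *= 2;

label = (int *)realloc(label,max_nr_class*sizeof(int));

count = (int *)realloc(count,max_nr_class*sizeof(int));

}

label[nr_class] = this_label;

count[nr_class] = 1;

++nr_class;

}

}

for(i=0;i<nr_class;i++)

{

int n1 = count[i];

for(int j=i+1;j<nr_class;j++)

{

int n2 = count[j];

if(param->nu*(n1+n2)/2 > min(n1,n2))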

{

free(label);

free(count);

return "specified nu is infeasible";

}

}

}

free(label);

free(count);

}

return NULL;

}
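
The nu-SVC feasibility test in `svm_check_parameter` compares, for every pair of classes with sizes n1 and n2, the quantity nu*(n1+n2)/2 against min(n1, n2), and rejects the parameters if it is larger. A minimal sketch of that single comparison follows. The function name `nu_is_feasible` is hypothetical.

```cpp
#include <algorithm>
#include <cassert>

// Hypothetical helper: true when nu passes the pairwise check used above
// for two classes containing n1 and n2 training samples.
bool nu_is_feasible(double nu, int n1, int n2)
{
    assert(n1 >= 0 && n2 >= 0);
    return nu * (n1 + n2) / 2 <= std::min(n1, n2);
}
```

With strongly unbalanced classes, for example 1 sample against 100, even a moderate nu fails the check, which is why the library reports "specified nu is infeasible" before training starts.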

int svm_check_probability_model(const svm_model *model)

{

return ((model->param.svm_type == C_SVC || model->param.svm_type == NU_SVC) &&

model->probA!=NULL && model->probB!=NULL) ||

((model->param.svm_type == EPSILON_SVR || model->param.svm_type == NU_SVR) &&

model->probA!=NULL);

}

void svm_set_print_string_function(void (*print_func)(const char *))

{

if(print_func == NULL)

svm_print_string = &print_string_stdout;

else

svm_print_string = print_func;

}

#ifndef _LIBSVM_H

#define _LIBSVM_H

#define LIBSVM_VERSION 323

#ifdef __cplusplus

extern "C" {

#endif

extern int libsvm_version;

struct svm_node // one sparse feature entry

{

int index; // feature index

double value; // feature value

};

struct svm_problem

{

int l; // number of samples

double *y; // label (target value) of each sample

struct svm_node **x; // x[i] points to the feature nodes of sample i

};

enum { C_SVC, NU_SVC, ONE_CLASS, EPSILON_SVR, NU_SVR }; /* svm_type */

enum { LINEAR, POLY, RBF, SIGMOID, PRECOMPUTED }; /* kernel_type */

struct svm_parameter

{

int svm_type;

int kernel_type;

int degree; /* for poly */

double gamma; /* for poly/rbf/sigmoid */

double coef0; /* for poly/sigmoid */

/* these are for training only */

double cache_size; /* in MB */

double eps; /* stopping criteria */

double C; /* for C_SVC, EPSILON_SVR and NU_SVR */

int nr_weight; /* for C_SVC */

int *weight_label; /* for C_SVC */

double* weight; /* for C_SVC */

double nu; /* for NU_SVC, ONE_CLASS, and NU_SVR */

double p; /* for EPSILON_SVR */

int shrinking; /* use the shrinking heuristics */

int probability; /* do probability estimates */

};

//

// svm_model

//

struct svm_model

{

struct svm_parameter param; /* parameter */

int nr_class; /* number of classes, = 2 in regression/one class svm */

int l; /* total #SV */

struct svm_node **SV; /* pointers to the support vectors */

double **sv_coef; /* coefficients for SVs in decision functions (sv_coef[k-1][l]) */

double *rho; /* constants in decision functions (rho[k*(k-1)/2]) */

double *probA; /* pairwise probability information */

double *probB;

int *sv_indices; /* sv_indices[0,...,nSV-1] are values in [1,...,num_training_data] to indicate SVs in the training set */

/* for classification only */

int *label; /* label of each class (label[k]) */

int *nSV; /* number of SVs for each class (nSV[k]) */

/* nSV[0] + nSV[1] + ... + nSV[k-1] = l */

/* XXX */

int free_sv; /* 1 if svm_model is created by svm_load_model*/

/* 0 if svm_model is created by svm_train */

};

struct svm_model *svm_train(const struct svm_problem *prob, const struct svm_parameter *param);

void svm_cross_validation(const struct svm_problem *prob, const struct svm_parameter *param, int nr_fold, double *target);

int svm_save_model(const char *model_file_name, const struct svm_model *model);

struct svm_model *svm_load_model(const char *model_file_name);

int svm_get_svm_type(const struct svm_model *model);

int svm_get_nr_class(const struct svm_model *model);

void svm_get_labels(const struct svm_model *model, int *label);

void svm_get_sv_indices(const struct svm_model *model, int *sv_indices);

int svm_get_nr_sv(const struct svm_model *model);

double svm_get_svr_probability(const struct svm_model *model);

double svm_predict_values(const struct svm_model *model, const struct svm_node *x, double* dec_values);

double svm_predict(const struct svm_model *model, const struct svm_node *x);

double svm_predict_probability(const struct svm_model *model, const struct svm_node *x, double* prob_estimates);

void svm_free_model_content(struct svm_model *model_ptr);

void svm_free_and_destroy_model(struct svm_model **model_ptr_ptr);

void svm_destroy_param(struct svm_parameter *param);

const char *svm_check_parameter(const struct svm_problem *prob, const struct svm_parameter *param);

int svm_check_probability_model(const struct svm_model *model);

void svm_set_print_string_function(void (*print_func)(const char *));

#ifdef __cplusplus

}

#endif

#endif /* _LIBSVM_H */
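
Each sample in `svm_problem::x` is an array of `svm_node` entries that ends with an entry whose `index` is -1, which is the terminator the model-saving loop above checks for. The sketch below converts a dense feature vector into that layout. It uses a local `Node` struct that mirrors `svm_node` so it compiles without `svm.h`. The function name `to_sparse`, the choice to skip zero values, and the 1-based feature indices are assumptions made for illustration.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Local mirror of svm_node (same two fields), so the sketch is self-contained.
struct Node
{
    int index;
    double value;
};

// Hypothetical helper: converts a dense feature vector to a sparse node array.
// Assumptions: zero features are omitted and indices start at 1.
std::vector<Node> to_sparse(const std::vector<double>& dense)
{
    std::vector<Node> nodes;
    for (std::size_t i = 0; i < dense.size(); i++)
        if (dense[i] != 0.0)
            nodes.push_back({static_cast<int>(i) + 1, dense[i]});
    nodes.push_back({-1, 0.0}); // terminator expected by the library loops
    return nodes;
}
```

When the real header is used, the same layout can be written into an array of `svm_node` and a pointer to its first element stored in `svm_problem::x[i]`.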