Caffe Image Training and Classification: Deep Learning Image Classification

Please credit the source when reposting: http://blog.csdn.net/forest_world

I. NSFW Research

1. Install Docker

http://www.linuxidc.com/Linux/2014-08/105656.htm

Install Docker with the apt-get command:

$ sudo apt-get install docker.io

Create a symbolic link:

$ sudo ln -sf /usr/bin/docker.io /usr/local/bin/docker

sudo service docker stop

sudo service docker start

2. Build the Caffe CPU image

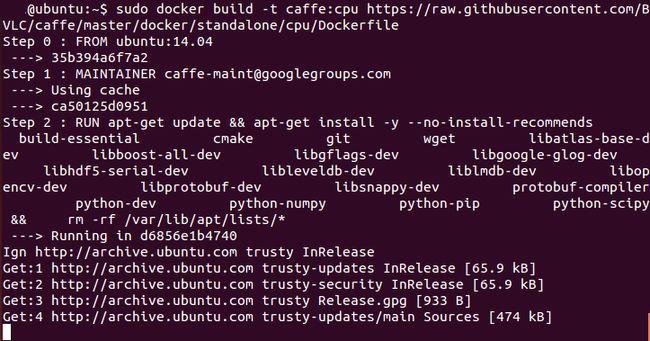

sudo docker build -t caffe:cpu https://raw.githubusercontent.com/BVLC/caffe/master/docker/standalone/cpu/Dockerfile

Step 0 : FROM ubuntu:14.04

---> 35b394a6f7a2

Step 1 : MAINTAINER [email protected]

---> Using cache

---> ca50125d0951

Step 2 : RUN apt-get update && apt-get install -y --no-install-recommends build-essential cmake git wget libatlas-base-dev libboost-all-dev libgflags-dev libgoogle-glog-dev libhdf5-serial-dev libleveldb-dev liblmdb-dev libopencv-dev libprotobuf-dev libsnappy-dev protobuf-compiler python-dev python-numpy python-pip python-scipy && rm -rf /var/lib/apt/lists/*

---> Running in d6856e1b4740

Ign http://archive.ubuntu.com trusty InRelease

Get:1 http://archive.ubuntu.com trusty-updates InRelease [65.9 kB]

Get:2 http://archive.ubuntu.com trusty-security InRelease [65.9 kB]

Get:3 http://archive.ubuntu.com trusty Release.gpg [933 B]

Get:4 http://archive.ubuntu.com trusty-updates/main Sources [474 kB]

Get:5 http://archive.ubuntu.com trusty-updates/main Sources [474 kB]

Get:6 http://archive.ubuntu.com trusty-updates/restricted Sources [5247 B]

Get:7 http://archive.ubuntu.com trusty-updates/universe Sources [209 kB]

Get:8 http://archive.ubuntu.com trusty-updates/main amd64 Packages [1131 kB]

......

Removing intermediate container d3643cce1d7e

Step 7 : ENV PYCAFFE_ROOT $CAFFE_ROOT/python

---> Running in e4e4019889f8

---> e982c669b99b

Removing intermediate container e4e4019889f8

Step 8 : ENV PYTHONPATH $PYCAFFE_ROOT:$PYTHONPATH

---> Running in a9ee4331bbe8

---> 8a1867b64b5c

Removing intermediate container a9ee4331bbe8

Step 9 : ENV PATH $CAFFE_ROOT/build/tools:$PYCAFFE_ROOT:$PATH

---> Running in bc2a271a95bd

---> 864daab5c633

Removing intermediate container bc2a271a95bd

Step 10 : RUN echo "$CAFFE_ROOT/build/lib" >> /etc/ld.so.conf.d/caffe.conf && ldconfig

---> Running in d0af6f3e69ea

---> fa8b1e810492

Removing intermediate container d0af6f3e69ea

Step 11 : WORKDIR /workspace

---> Running in ab94152a0a18

---> 49ffbf2d8fef

Removing intermediate container ab94152a0a18

Successfully built 49ffbf2d8fef

3. Clone open_nsfw

git clone https://github.com/yahoo/open_nsfw

$ cd open_nsfw

@ubuntu:~$ git clone https://github.com/yahoo/open_nsfw

Cloning into 'open_nsfw'...

remote: Counting objects: 31, done.

remote: Compressing objects: 100% (20/20), done.

Unpacking objects: 32% (10/31)

4. Run the classifier

I1012 05:17:23.226325 1 net.cpp:228] relu_stage0_block0 does not need backward computation.

I1012 05:17:23.226327 1 net.cpp:228] eltwise_stage0_block0 does not need backward computation.

I1012 05:17:23.226331 1 net.cpp:228] scale_stage0_block0_branch2c does not need backward computation.

I1012 05:17:23.226333 1 net.cpp:228] bn_stage0_block0_branch2c does not need backward computation.

I1012 05:17:23.226336 1 net.cpp:228] conv_stage0_block0_branch2c does not need backward computation.

I1012 05:17:23.226339 1 net.cpp:228] relu_stage0_block0_branch2b does not need backward computation.

I1012 05:17:23.226342 1 net.cpp:228] scale_stage0_block0_branch2b does not need backward computation.

I1012 05:17:23.226346 1 net.cpp:228] bn_stage0_block0_branch2b does not need backward computation.

I1012 05:17:23.226348 1 net.cpp:228] conv_stage0_block0_branch2b does not need backward computation.

I1012 05:17:23.226351 1 net.cpp:228] relu_stage0_block0_branch2a does not need backward computation.

I1012 05:17:23.226354 1 net.cpp:228] scale_stage0_block0_branch2a does not need backward computation.

I1012 05:17:23.226356 1 net.cpp:228] bn_stage0_block0_branch2a does not need backward computation.

I1012 05:17:23.226359 1 net.cpp:228] conv_stage0_block0_branch2a does not need backward computation.

I1012 05:17:23.226362 1 net.cpp:228] scale_stage0_block0_proj_shortcut does not need backward computation.

I1012 05:17:23.226366 1 net.cpp:228] bn_stage0_block0_proj_shortcut does not need backward computation.

I1012 05:17:23.226368 1 net.cpp:228] conv_stage0_block0_proj_shortcut does not need backward computation.

I1012 05:17:23.226372 1 net.cpp:228] pool1_pool1_0_split does not need backward computation.

I1012 05:17:23.226374 1 net.cpp:228] pool1 does not need backward computation.

I1012 05:17:23.226378 1 net.cpp:228] relu_1 does not need backward computation.

I1012 05:17:23.226380 1 net.cpp:228] scale_1 does not need backward computation.

I1012 05:17:23.226383 1 net.cpp:228] bn_1 does not need backward computation.

I1012 05:17:23.226387 1 net.cpp:228] conv_1 does not need backward computation.

I1012 05:17:23.226389 1 net.cpp:228] data does not need backward computation.

I1012 05:17:23.226392 1 net.cpp:270] This network produces output prob

I1012 05:17:23.226526 1 net.cpp:283] Network initialization done.

I1012 05:17:23.277700 1 upgrade_proto.cpp:77] Attempting to upgrade batch norm layers using deprecated params: nsfw_model/resnet_50_1by2_nsfw.caffemodel

I1012 05:17:23.277819 1 upgrade_proto.cpp:80] Successfully upgraded batch norm layers using deprecated params.

I1012 05:17:23.283418 1 net.cpp:761] Ignoring source layer loss

NSFW score: 0.000410715758335
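The score printed above is the model's estimated probability, between 0 and 1, that the image is NSFW. A minimal sketch of turning it into a coarse label follows. The 0.2 and 0.8 cut-offs are the rough guidance from the open_nsfw README, not something measured in this post, and the function name `BinNsfwScore` is hypothetical.

```cpp
#include <cassert>
#include <string>

// Map an NSFW probability in [0, 1] to a coarse label.
// The 0.2 / 0.8 thresholds are assumptions taken from the open_nsfw README;
// tune them for your own data.
std::string BinNsfwScore(double score,
                         double safe_below = 0.2,
                         double nsfw_above = 0.8) {
  assert(score >= 0.0 && score <= 1.0);
  if (score < safe_below) return "likely safe";
  if (score > nsfw_above) return "likely NSFW";
  return "uncertain";
}
```

For the run above, `BinNsfwScore(0.000410715758335)` falls in the "likely safe" bin.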

II. Classification with the Caffe C++ interface

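#include <caffe/caffe.hpp>

#include <opencv2/core/core.hpp>

#include <algorithm>

#include <fstream>

#include <string>

#include <utility>

#include <vector>

using namespace caffe;

using std::string;

......

class Classifier {

......

private:

shared_ptr<Net<float> > net_;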

cv::Size input_geometry_;

int num_channels_;

cv::Mat mean_;

std::vector<string> labels_;

};

Classifier::Classifier(const string& model_file,

const string& trained_file,

const string& mean_file,

const string& label_file) {

#ifdef CPU_ONLY

Caffe::set_mode(Caffe::CPU);

#else

Caffe::set_mode(Caffe::GPU);

#endif

/* Load the network. */

net_.reset(new Net<float>(model_file, TEST));

net_->CopyTrainedLayersFrom(trained_file);

CHECK_EQ(net_->num_inputs(), 1) << "Network should have exactly one input.";

CHECK_EQ(net_->num_outputs(), 1) << "Network should have exactly one output.";

Blob<float>* input_layer = net_->input_blobs()[0];

num_channels_ = input_layer->channels();

CHECK(num_channels_ == 3 || num_channels_ == 1)

<< "Input layer should have 1 or 3 channels.";

input_geometry_ = cv::Size(input_layer->width(), input_layer->height());

/* Load the binaryproto mean file. */

SetMean(mean_file);

/* Load labels. */

std::ifstream labels(label_file.c_str());

CHECK(labels) << "Unable to open labels file " << label_file;

string line;

while (std::getline(labels, line))

labels_.push_back(string(line));

Blob<float>* output_layer = net_->output_blobs()[0];

CHECK_EQ(labels_.size(), output_layer->channels())

<< "Number of labels is different from the output layer dimension.";

}

static bool PairCompare(const std::pair<float, int>& lhs,

const std::pair<float, int>& rhs) {

return lhs.first > rhs.first;

}

/* Return the indices of the top N values of vector v. */

static std::vector<int> Argmax(const std::vector<float>& v, int N) {

std::vector<std::pair<float, int> > pairs;

for (size_t i = 0; i < v.size(); ++i)

pairs.push_back(std::make_pair(v[i], static_cast<int>(i)));

std::partial_sort(pairs.begin(), pairs.begin() + N, pairs.end(), PairCompare);

std::vector<int> result;

for (int i = 0; i < N; ++i)

result.push_back(pairs[i].second);

return result;

}
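To see what Argmax returns without building Caffe, here is a standalone sketch of the same partial-sort idea. It differs from the code above in one respect, which is my own addition: N is clamped to the vector size, so that `pairs.begin() + n` can never pass `pairs.end()`. The name `TopIndices` is hypothetical.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <utility>
#include <vector>

// Return the indices of the n largest values in v, best first.
// Unlike the Argmax above, n is clamped to v.size().
std::vector<int> TopIndices(const std::vector<float>& v, int n) {
  assert(n >= 0);
  n = std::min<int>(n, static_cast<int>(v.size()));

  std::vector<std::pair<float, int> > pairs;
  for (std::size_t i = 0; i < v.size(); ++i)
    pairs.push_back(std::make_pair(v[i], static_cast<int>(i)));

  // Only the first n elements need to be ordered, so partial_sort suffices.
  std::partial_sort(pairs.begin(), pairs.begin() + n, pairs.end(),
                    [](const std::pair<float, int>& a,
                       const std::pair<float, int>& b) {
                      return a.first > b.first;
                    });

  std::vector<int> result;
  for (int i = 0; i < n; ++i) result.push_back(pairs[i].second);
  return result;
}
```

For the scores {0.1, 0.6, 0.05, 0.25}, `TopIndices(scores, 2)` yields the indices 1 and 3, in that order.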

/* Return the top N predictions. */

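std::vector<Prediction> Classifier::Classify(const cv::Mat& img, int N) {

......

}

/* Load the mean file in binaryproto format. */

void Classifier::SetMean(const string& mean_file) {

BlobProto blob_proto;

ReadProtoFromBinaryFileOrDie(mean_file.c_str(), &blob_proto);

/* Convert from BlobProto to Blob<float> */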

Blob<float> mean_blob;

mean_blob.FromProto(blob_proto);

CHECK_EQ(mean_blob.channels(), num_channels_)

<< "Number of channels of mean file doesn't match input layer.";

/* The format of the mean file is planar 32-bit float BGR or grayscale. */
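"Planar" here means the channel planes are stored one after another: all of channel 0, then all of channel 1, and so on. Caffe's reference classification example reduces such a mean image to one value per channel before subtracting it; the fragment here does not show whether this code does the same, so treat the following as a sketch under that assumption. It uses plain vectors instead of OpenCV, and the name `ChannelMeans` is hypothetical.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Compute one mean value per channel from planar float data laid out as
// channels x height x width (all of channel 0 first, then channel 1, ...).
std::vector<float> ChannelMeans(const std::vector<float>& planar,
                                int channels, int height, int width) {
  assert(channels > 0 && height > 0 && width > 0);
  const std::size_t plane = static_cast<std::size_t>(height) * width;
  assert(planar.size() == plane * channels);

  std::vector<float> means(channels, 0.0f);
  for (int c = 0; c < channels; ++c) {
    double sum = 0.0;  // accumulate in double to limit rounding error
    for (std::size_t i = 0; i < plane; ++i) sum += planar[c * plane + i];
    means[c] = static_cast<float>(sum / plane);
  }
  return means;
}
```

For a BGR mean file the result has three entries, in B, G, R order.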

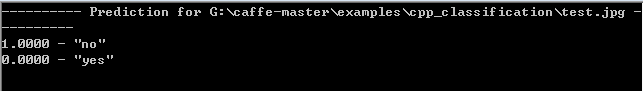

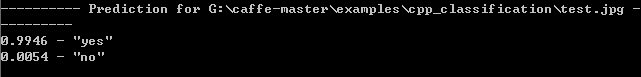

......

string model_file ("E:\\cpp_classification\\caffe.prototxt");

string trained_file ("E:\\cpp_classification\\caffe.caffemodel");

string mean_file ("E:\\cpp_classification\\mean.binaryproto");

string label_file ("E:\\cpp_classification\\labels.txt");

Classifier classifier(model_file, trained_file, mean_file, label_file);

string file ("E:\\cpp_classification\\test.jpg");

References and further reading:

http://m.blog.csdn.net/article/details?id=52443126 Face recognition system series based on deep learning (Caffe+OpenCV+Dlib), Part 1: how to use Caffe in Visual Studio the same way you use OpenCV

http://mp.weixin.qq.com/s?__biz=MzI1NTE4NTUwOQ==&mid=2650325557&idx=1&sn=362d476d3b3820ea56e4672369565e4f&chksm=f235a53fc5422c2939f76b7e8f5265333f3159b0ec4275fe733d27e7a03f17395b0460a318d2&mpshare=1&scene=1&srcid=1017Le0xZeDhioc9DxPIGNN9#wechat_redirect IJCAI16 paper quick read: selected Deep Learning papers (Part 1)

http://www.cnblogs.com/carle-09/p/5779304.html 4. caffe: train_val.prototxt, solver.prototxt, deploy.prototxt (creating the model and writing the configuration files)

http://blog.csdn.net/deeplearninglc007/article/details/40086503 A simple workflow for training on and classifying images with Caffe

http://blog.csdn.net/wang4959520/article/details/51841110 Converting train_val.prototxt to deploy.prototxt

http://blog.csdn.net/hyman_yx/article/details/51732656 Converting the Caffe mean file mean.binaryproto to mean.npy

http://blog.csdn.net/shakevincent/article/details/51694686 Compiling Microsoft's Caffe

http://www.cnblogs.com/alexcai/p/5469436.html A quick-start guide to caffe (2): training on our own data

http://www.aiuxian.com/article/p-1659539.html Deep learning: how to train an ImageNet network with Caffe

http://www.th7.cn/system/win/201602/153606.shtml caffe for windows: using a caffemodel to classify cifar10 images

http://blog.csdn.net/dcxhun3/article/details/52021296 Classifying with a trained caffemodel

http://neuralnetworksanddeeplearning.com/chap1.html CHAPTER 1 Using neural nets to recognize handwritten digits

http://www.cnblogs.com/shishupeng/p/5694775.html Deep convolutional networks (CNN) and semantic image segmentation

Article address: http://blog.csdn.net/forest_world