[How to Train a Chinese-to-English Translator] LSTM Machine Translation Model Deployment (Part 3)

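Series articles

[How to Train a Chinese-to-English Translator] LSTM Machine Translation: seq2seq Character Encoding (Part 1)

[How to Train a Chinese-to-English Translator] LSTM Machine Translation Model Training and Saving (Part 2)

[How to Train a Chinese-to-English Translator] LSTM Machine Translation Model Deployment (Part 3)

[How to Train a Chinese-to-English Translator] LSTM Machine Translation Model Deployment with ONNX (Python) (Part 4)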

Contents

- Series articles

- 1. Load the character files

- 2. Load the weight files

- 3. Build the inference model

- 4. Run inference

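Model deployment is an important part of the workflow. This article covers Python-based deployment first; later articles will deploy the model to mobile devices.

Attentive readers will have noticed that in the previous article, the inference step that followed saving the model read its input from encoder_input_data, the array prepared during preprocessing before training, rather than from text we typed in ourselves. This article addresses that gap: running inference on custom input.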

1. Load the character files

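First, following the steps in [How to Train a Chinese-to-English Translator] LSTM Machine Translation Model Training and Saving (Part 2), we end up with three files: input_words.txt, target_words.txt, and config.json.

Each of them needs to be loaded in turn, starting with the two character files: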

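# Load the character tables

# Read the input characters from input_words.txt (one character per line).
# encoding='utf-8' is an assumption; use whatever encoding the files were saved with in Part 2.
with open('input_words.txt', 'r', encoding='utf-8') as f:
    input_words = f.readlines()
    input_characters = [line.rstrip('\n') for line in input_words]

# Read the target characters from target_words.txt
with open('target_words.txt', 'r', newline='', encoding='utf-8') as f:
    target_words = [line.strip() for line in f.readlines()]
    # The start ('\t') and stop ('\n') characters are stored as the literal
    # text \t and \n, so convert them back to the real control characters.
    target_characters = [char.replace('\\t', '\t').replace('\\n', '\n') for char in target_words]

# Map each character to an index so that text can be one-hot encoded
input_token_index = {char: i for i, char in enumerate(input_characters)}
target_token_index = {char: i for i, char in enumerate(target_characters)}

# Reverse-lookup token index to decode sequences back to something readable.
reverse_input_char_index = {i: char for char, i in input_token_index.items()}
reverse_target_char_index = {i: char for char, i in target_token_index.items()}

num_encoder_tokens = len(input_characters)   # number of English characters
num_decoder_tokens = len(target_characters)  # number of Chinese characters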
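To see why the target characters need the extra `replace` step, here is a minimal, self-contained sketch of the save/load round trip. It assumes, based on the loading code above, that Part 2 wrote one character per line and stored the tab and newline markers as the literal text `\t` and `\n`. The helper names `save_vocab` and `load_vocab`, the demo file name, and the four-character vocabulary are hypothetical and not part of the series' code.

```python
import os
import tempfile

def save_vocab(path, characters):
    """Write one character per line, escaping tab and newline (assumed format)."""
    with open(path, 'w', encoding='utf-8') as f:
        for ch in characters:
            f.write(ch.replace('\t', '\\t').replace('\n', '\\n') + '\n')

def load_vocab(path):
    """Read the file back and restore the escaped tab and newline markers."""
    with open(path, 'r', encoding='utf-8') as f:
        lines = [line.rstrip('\n') for line in f]
    return [ch.replace('\\t', '\t').replace('\\n', '\n') for ch in lines]

# Hypothetical target vocabulary: start marker, stop marker, two characters.
vocab = ['\t', '\n', '你', '好']

with tempfile.TemporaryDirectory() as tmp:
    path = os.path.join(tmp, 'target_words_demo.txt')
    save_vocab(path, vocab)
    restored = load_vocab(path)

# Same index mapping as target_token_index, built from the restored list.
token_index = {ch: i for i, ch in enumerate(restored)}
print(restored == vocab)
print(token_index['\t'])
```

Without the escaping, a raw newline in the vocabulary would be indistinguishable from the line separator, and the start and stop markers could not be recovered when the file is read back.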

Read the configuration file:

import json

with open('config.json', 'r') as file:
    loaded_data = json.load(file)

# Get max_encoder_seq_length and max_decoder_seq_length from the loaded data
max_encoder_seq_length = loaded_data["max_encoder_seq_length"]
max_decoder_seq_length = loaded_data["max_decoder_seq_length"]
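Every later step sizes its arrays from these two values, so it can help to fail early if the file is missing a key or holds an unusable number. The sketch below shows one way to do that. `load_seq_lengths` is a hypothetical helper, and the JSON string is made-up sample data standing in for config.json; the real values come from Part 2.

```python
import json

REQUIRED_KEYS = ("max_encoder_seq_length", "max_decoder_seq_length")

def load_seq_lengths(json_text):
    """Parse config JSON and return the two sequence lengths as a tuple."""
    data = json.loads(json_text)
    lengths = []
    for key in REQUIRED_KEYS:
        if key not in data:
            raise KeyError(f"config is missing '{key}'")
        value = data[key]
        if not isinstance(value, int) or value <= 0:
            raise ValueError(f"'{key}' must be a positive integer, got {value!r}")
        lengths.append(value)
    return tuple(lengths)

# Made-up sample values for illustration only.
sample = '{"max_encoder_seq_length": 30, "max_decoder_seq_length": 22}'
max_enc, max_dec = load_seq_lengths(sample)
print(max_enc, max_dec)
```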

2. Load the weight files

# Load the saved encoder and decoder models
from keras.models import load_model

encoder_model = load_model('encoder_model.h5')
decoder_model = load_model('decoder_model.h5')

3. Build the inference model

import numpy as np  # decode_sequence relies on NumPy

def decode_sequence(input_seq):
    # Encode the input as state vectors.
    states_value = encoder_model.predict(input_seq)

    # Generate empty target sequence of length 1.
    target_seq = np.zeros((1, 1, num_decoder_tokens))
    # Populate the first character of target sequence with the start character.
    target_seq[0, 0, target_token_index['\t']] = 1.
    # This target_seq serves as the decoder's first input.

    # Sampling loop for a batch of sequences
    # (to simplify, here we assume a batch of size 1).
    stop_condition = False
    decoded_sentence = ''
    while not stop_condition:
        output_tokens, h, c = decoder_model.predict([target_seq] + states_value)

        # Sample a token: argmax returns the index of the maximum value
        # along an axis, i.e. the most probable character.
        sampled_token_index = np.argmax(output_tokens[0, -1, :])
        # Look up the character for that index
        sampled_char = reverse_target_char_index[sampled_token_index]
        # and append it to the sentence.
        decoded_sentence += sampled_char

        # Exit condition: either hit max length
        # or find stop character.
        if (sampled_char == '\n' or len(decoded_sentence) > max_decoder_seq_length):
            stop_condition = True

        # Update the target sequence (of length 1): build a fresh one-hot
        # vector with the sampled token's position set to 1.0.
        target_seq = np.zeros((1, 1, num_decoder_tokens))
        target_seq[0, 0, sampled_token_index] = 1.

        # Update states with the ones the decoder just returned.
        states_value = [h, c]

    return decoded_sentence

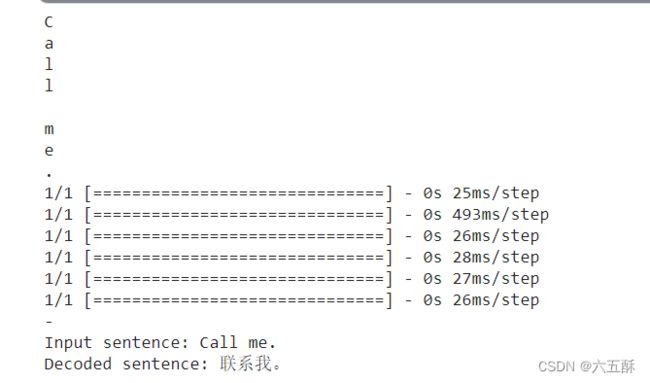
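The loop in decode_sequence is greedy decoding: at each step it takes the most probable character and feeds it back in as the next input. The sketch below reproduces that control flow without a trained model, so it can be run and inspected on its own. Everything in it is a stand-in: `fake_decoder_step` replaces `decoder_model.predict` with a fixed lookup table, the four-character vocabulary is invented, and the "state" is just a step counter.

```python
import numpy as np

# Hypothetical toy vocabulary; '\t' starts and '\n' stops a sequence.
chars = ['\t', '\n', 'a', 'b']
index_of = {ch: i for i, ch in enumerate(chars)}
max_len = 10

# Stand-in for the trained decoder: a fixed table of which character
# follows which ('\t' -> 'a' -> 'b' -> '\n').
next_char = {'\t': 'a', 'a': 'b', 'b': '\n'}

def fake_decoder_step(target_seq, state):
    """Return (probabilities, new_state) shaped like one decoder step."""
    current = chars[int(np.argmax(target_seq[0, 0, :]))]
    probs = np.full((1, 1, len(chars)), 0.1)
    probs[0, 0, index_of[next_char.get(current, '\n')]] = 0.7
    return probs, state + 1  # the "state" here is only a step counter

def greedy_decode(step_fn, state):
    target_seq = np.zeros((1, 1, len(chars)))
    target_seq[0, 0, index_of['\t']] = 1.0  # start marker
    decoded = ''
    while True:
        probs, state = step_fn(target_seq, state)
        idx = int(np.argmax(probs[0, -1, :]))  # most probable character
        decoded += chars[idx]
        if chars[idx] == '\n' or len(decoded) > max_len:
            break
        # Feed the chosen character back in as the next input.
        target_seq = np.zeros((1, 1, len(chars)))
        target_seq[0, 0, idx] = 1.0
    return decoded

result = greedy_decode(fake_decoder_step, state=0)
print(repr(result))
```

As in decode_sequence, the stop character is appended before the loop exits, so the returned string ends with `'\n'`; calling `.strip()` on it is a simple way to drop that marker before displaying the translation.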

4. Run inference

import numpy as np

input_text = "Call me."
encoder_input_data = np.zeros(
    (1, max_encoder_seq_length, num_encoder_tokens),
    dtype='float32')
for t, char in enumerate(input_text):
    print(char)
    # One-hot encode: at time step t, set the entry for this character to 1.0
    encoder_input_data[0, t, input_token_index[char]] = 1.

input_seq = encoder_input_data
decoded_sentence = decode_sequence(input_seq)
print('-')
print('Input sentence:', input_text)
print('Decoded sentence:', decoded_sentence)
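With hand-typed input, two failure cases are worth keeping in mind: the encoding loop raises a `KeyError` if the text contains a character that is not in input_words.txt, and an `IndexError` if the text is longer than max_encoder_seq_length. One possible way to make custom input more forgiving is sketched below. The helper `encode_text`, the toy vocabulary, and the choice to skip unknown characters and truncate long input are all assumptions made for illustration; rejecting such input outright may suit some applications better.

```python
import numpy as np

def encode_text(text, token_index, max_len):
    """One-hot encode text into shape (1, max_len, len(token_index)).

    Characters missing from token_index are skipped and returned
    separately; text longer than max_len is truncated.
    """
    known = [ch for ch in text if ch in token_index]
    skipped = [ch for ch in text if ch not in token_index]
    data = np.zeros((1, max_len, len(token_index)), dtype='float32')
    for t, ch in enumerate(known[:max_len]):
        data[0, t, token_index[ch]] = 1.0
    return data, skipped

# Toy vocabulary standing in for input_token_index.
toy_index = {ch: i for i, ch in enumerate(' .Calme')}
encoded, skipped = encode_text('Call me!', toy_index, max_len=6)
print(encoded.shape)
print(skipped)
```

In the real pipeline the call would look like `encode_text(input_text, input_token_index, max_encoder_seq_length)`, and the first return value could be passed to decode_sequence in place of encoder_input_data.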

The code above can be run on Kaggle: how-to-train-a-chinese-to-english-translator-iii