Paper Close Reading: CLIP

Paper link:

Learning Transferable Visual Models From Natural Language Supervision (arxiv.org)

Video link:

CLIP 论文逐段精读【论文精读】_哔哩哔哩_bilibili

Core Content

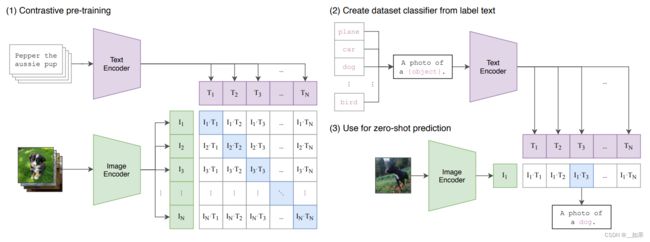

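Pre-training

The title, Learning Transferable Visual Models From Natural Language Supervision, states the goal: use supervision signals from natural language to train a visual model that transfers well

In the left part of the figure, the input is a batch of paired texts and images; for example, an image of a dog is paired with the text "pepper" (a puppy)

Each image passes through the image encoder (a ResNet or a Vision Transformer) to produce image features

Each text passes through the text encoder to produce text features

CLIP performs contrastive learning on these features (contrastive learning only requires a definition of positive and negative samples)

Here a positive sample is a matched image-text pair, so the n entries on the diagonal of the feature matrix are positives and the remaining n**2-n entries are negatives

Feature matrix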
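As a small illustration of the positive/negative split described above, the following NumPy sketch builds the pairing mask for a toy batch of n = 4 pairs. The helper name `pair_mask` is made up for this example and does not come from the paper.

```python
import numpy as np

def pair_mask(n):
    """Boolean n x n mask: True where image i and text j form a matched pair."""
    return np.eye(n, dtype=bool)

n = 4
positives = pair_mask(n)
num_pos = int(positives.sum())       # matched pairs, all on the diagonal
num_neg = int((~positives).sum())    # every off-diagonal entry is a mismatch
print(num_pos, num_neg)              # prints: 4 12
```

For a batch of n pairs this gives n positives and n**2-n negatives, which is why larger batches supply many more negatives per positive.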

Contrastive learning needs a large amount of data, so OpenAI collected a dedicated dataset of 400 million image-text pairs

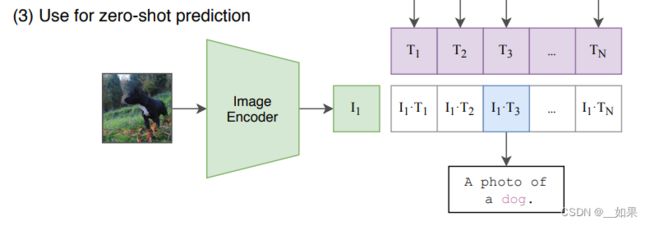

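Zero-shot inference

After pre-training, CLIP only produces image and text features. It has not been trained or fine-tuned on any classification task, so it has no classification head. The authors therefore borrow a technique from NLP: the prompt template

Taking ImageNet as an example, each class name (plane, car, dog, and so on) is turned into a sentence such as "A photo of a plane", and the sentence is passed through the pre-trained text encoder to obtain a text feature

Q: Why use a prompt template?

A: Extracting text features directly from the bare class word also works, but during pre-training each image was paired with a sentence, so using a single word hurts performance.

How the sentence is chosen also matters; later in the paper the authors propose two techniques, prompt engineering and prompt ensembling

At inference time, the image is passed through the pre-trained image encoder, and the resulting feature is compared with each text feature by cosine similarity (for normalized features, the larger the inner product, the more similar)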
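The inference procedure above can be sketched as follows. This is a minimal sketch under stated assumptions: the feature vectors are hand-written stand-ins for what the pre-trained text and image encoders would return, and the function name `zero_shot_classify` is hypothetical.

```python
import numpy as np

def l2_normalize(x):
    """Scale vectors along the last axis to unit length."""
    return x / np.linalg.norm(x, axis=-1, keepdims=True)

def zero_shot_classify(image_feature, text_features, class_names):
    """Return the class whose prompt feature has the highest cosine similarity."""
    image_feature = l2_normalize(image_feature)
    text_features = l2_normalize(text_features)
    sims = text_features @ image_feature   # cosine similarities, one per class
    return class_names[int(np.argmax(sims))], sims

class_names = ["plane", "car", "dog"]
prompts = [f"A photo of a {name}" for name in class_names]

# Stand-in features (assumption): one row per prompt, plus one image feature.
text_features = np.array([[1.0, 0.0, 0.0],
                          [0.0, 1.0, 0.0],
                          [0.0, 0.0, 1.0]])
image_feature = np.array([0.1, 0.2, 0.9])

label, sims = zero_shot_classify(image_feature, text_features, class_names)
print(prompts[class_names.index(label)])   # prints: A photo of a dog
```

Changing the label set only means changing `class_names` and re-encoding the prompts; nothing about the image side has to be retrained.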

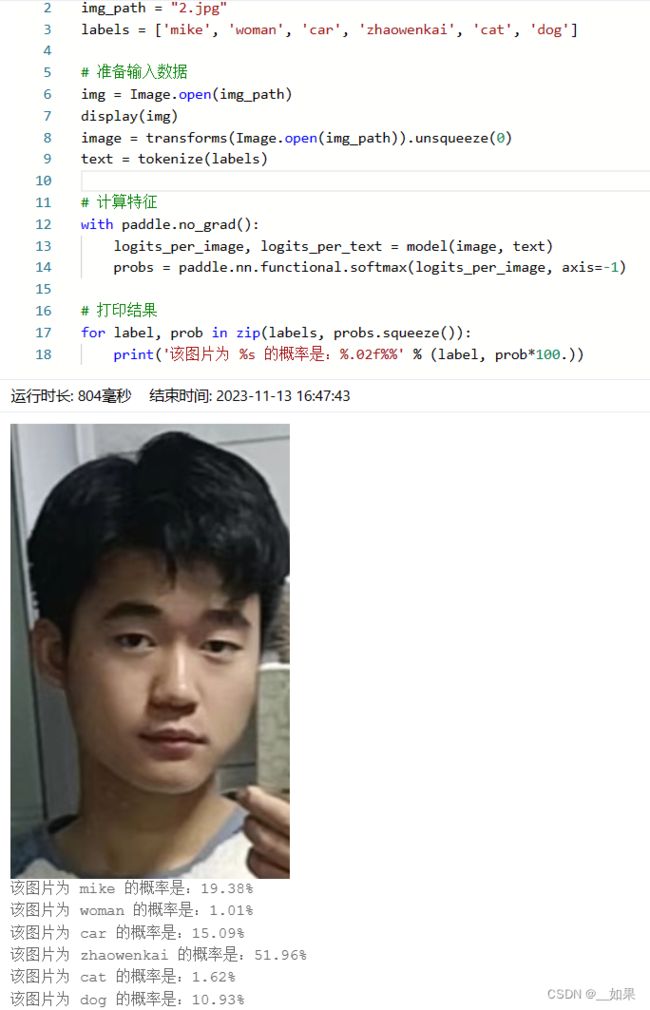

The labels here can be changed freely, which is remarkable: any words can be used with any image. Here is an example using myself

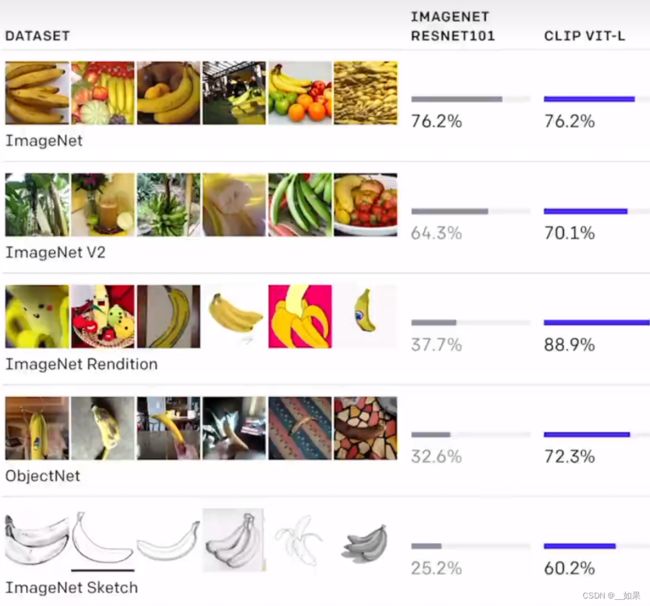

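CLIP can do more than recognize new objects. Because it ties visual semantics to textual semantics, the features it learns are strongly semantic and transfer very well

Comparison of ResNet101 and CLIP ViT-L on different datasets

Some applications built on CLIP: StyleCLIP, CLIPDraw, and CLIP combined with object detection

Overall Structure of the Paper

The paper is 48 pages long. Page 1 has the abstract; pages 1 and 2 the introduction; pages 3 and 4 mainly cover how pre-training is done; pages 6 to 18 are all experiments (how to do zero-shot inference, prompt engineering, prompt ensembling); page 19 covers CLIP's limitations; pages 20 to 24 discuss CLIP's possible broader impact (bias in the model, possible use of CLIP in surveillance video, and future work); pages 25 and 26 cover related work; page 27 is the conclusion, followed by the acknowledgments; the last ten pages are supplementary material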

Abstract

State-of-the-art computer vision systems are trained to predict a fixed set of predetermined object categories. This restricted form of supervision limits their generality and usability since additional labeled data is needed to specify any other visual concept.

Learning directly from raw text about images is a promising alternative which leverages a much broader source of supervision. We demonstrate that the simple pre-training task of predicting which caption goes with which image is an efficient and scalable way to learn SOTA image representations from scratch on a dataset of 400 million (image, text) pairs collected from the internet. After pre-training, natural language is used to reference learned visual concepts (or describe new ones) enabling zero-shot transfer of the model to downstream tasks.

We study the performance of this approach by benchmarking on over 30 different existing computer vision datasets, spanning tasks such as OCR, action recognition in videos, geo-localization, and many types of fine-grained object classification.

The model transfers non-trivially to most tasks and is often competitive with a fully supervised baseline without the need for any dataset specific training. For instance, we match the accuracy of the original ResNet-50 on ImageNet zero-shot without needing to use any of the 1.28 million training examples it was trained on.

We release our code and pre-trained model weights at https://github.com/OpenAI/CLIP.


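Summary:

CLIP transfers very well: the pre-trained model achieves good results on almost any visual classification dataset

CLIP is a zero-shot model: it achieves these results without being trained on those datasets

The authors test on more than 30 datasets, covering OCR, action recognition in videos, geo-localization, and many fine-grained classification tasks

The most striking result is on ImageNet: without training on any of the 1.28 million training images, CLIP matches the accuracy of the original ResNet-50 that was trained on them

Introduction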

Pre-training methods which learn directly from raw text have revolutionized NLP over the last few years (Dai & Le, 2015; Peters et al., 2018; Howard & Ruder, 2018; Radford et al., 2018; Devlin et al., 2018; Raffel et al., 2019).

Task-agnostic objectives such as autoregressive and masked language modeling have scaled across many orders of magnitude in compute, model capacity, and data, steadily improving capabilities. The development of “text-to-text” as a standardized input-output interface (McCann et al., 2018; Radford et al., 2019; Raffel et al., 2019) has enabled task-agnostic architectures to zero-shot transfer to downstream datasets removing the need for specialized output heads or dataset specific customization.

Flagship systems like GPT-3(Brown et al., 2020) are now competitive across many tasks with bespoke models while requiring little to no dataset specific training data.


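Summary:

Like these text-to-text NLP models, CLIP needs no training or fine-tuning on downstream tasks and no classification head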

These results suggest that the aggregate supervision accessible to modern pre-training methods within web-scale collections of text surpasses that of high-quality crowd-labeled NLP datasets.

However, in other fields such as computer vision it is still standard practice to pre-train models on crowd-labeled datasets such as ImageNet (Deng et al., 2009).

Could scalable pre-training methods which learn directly from web text result in a similar breakthrough in computer vision? Prior work is encouraging.


Summary:

In NLP, pre-training on large-scale unlabeled web text works better than relying on high-quality human-labeled datasets

Over 20 years ago Mori et al. (1999) explored improving content based image retrieval by training a model to predict the nouns and adjectives in text documents paired with images. Quattoni et al. (2007) demonstrated it was possible to learn more data efficient image representations via manifold learning in the weight space of classifiers trained to predict words in captions associated with images. Srivastava & Salakhutdinov (2012) explored deep representation learning by training multimodal Deep Boltzmann Machines on top of low-level image and text tag features. Joulin et al. (2016) modernized this line of work and demonstrated that CNNs trained to predict words in image captions learn useful image representations.

They converted the title, description, and hashtag metadata of images in the YFCC100M dataset (Thomee et al., 2016) into a bag-of-words multi-label classification task and showed that pre-training AlexNet (Krizhevsky et al., 2012) to predict these labels learned representations which performed similarly to ImageNet-based pre-training on transfer tasks.

Li et al. (2017) then extended this approach to predicting phrase n-grams in addition to individual words and demonstrated the ability of their system to zero-shot transfer to other image classification datasets by scoring target classes based on their dictionary of learned visual n-grams and predicting the one with the highest score. Adopting more recent architectures and pre-training approaches, VirTex (Desai & Johnson,2020), ICMLM (Bulent Sariyildiz et al., 2020), and ConVIRT (Zhang et al., 2020) have recently demonstrated the potential of transformer-based language modeling, masked language modeling, and contrastive objectives to learn image representations from text.


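Summary:

The discussion starts from work in 1999 and runs through work published in 2020

CLIP is closest to Li et al. (2017): both do zero-shot transfer. But in 2017 Transformers were not yet established and there was no dataset this large and this good, so that paper's results were not ideal

VirTex (Desai & Johnson, 2020), ICMLM (Bulent Sariyildiz et al., 2020), and ConVIRT (Zhang et al., 2020) are all Transformer-based and very similar to CLIP, but differ in the specifics: they use language modeling, masked language modeling, and a contrastive objective respectively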

While exciting as proofs of concept, using natural language supervision for image representation learning is still rare. This is likely because demonstrated performance on common benchmarks is much lower than alternative approaches.

For example, Li et al. (2017) reach only 11.5% accuracy on ImageNet in a zero-shot setting. This is well below the 88.4% accuracy of the current state of the art (Xie et al.,2020). It is even below the 50% accuracy of classic computer vision approaches (Deng et al., 2012).

Instead, more narrowly scoped but well-targeted uses of weak supervision have improved performance. Mahajan et al. (2018) showed that predicting ImageNet-related hashtags on Instagram images is an effective pre-training task.

When fine-tuned to ImageNet these pre-trained models increased accuracy by over 5% and improved the overall state of the art at the time.

Kolesnikov et al. (2019) and Dosovitskiy et al. (2020) have also demonstrated large gains on a broader set of transfer benchmarks by pre-training models to predict the classes of the noisily labeled JFT-300M dataset.


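Summary:

The authors reflect on why natural language supervision, although a good idea, has not been widely adopted in vision: its demonstrated benchmark performance was far below the alternatives (for example, 11.5% zero-shot ImageNet accuracy for Li et al.), which comes down to the limits of the time, namely data quality, dataset size, and insufficient compute

They then introduce another line of work that exploits weak supervision signals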

This line of work represents the current pragmatic middle ground between learning from a limited amount of supervised “gold-labels” and learning from practically unlimited amounts of raw text.

However, it is not without compromises. Both works carefully design, and in the process limit, their supervision to 1000 and 18291 classes respectively.

Natural language is able to express, and therefore supervise, a much wider set of visual concepts through its generality. Both approaches also use static softmax classifiers to perform prediction and lack a mechanism for dynamic outputs. This severely curtails their flexibility and limits their “zero-shot” capabilities.


Summary:

These weakly supervised models still rely on a static softmax classification head, so they cannot handle new classes

A crucial difference between these weakly supervised models and recent explorations of learning image representations directly from natural language is scale. While Mahajan et al. (2018) and Kolesnikov et al. (2019) trained their models for accelerator years on millions to billions of images, VirTex, ICMLM, and ConVIRT trained for accelerator days on one to two hundred thousand images.

In this work, we close this gap and study the behaviors of image classifiers trained with natural language supervision at large scale.

Enabled by the large amounts of publicly available data of this form on the internet, we create a new dataset of 400 million (image, text) pairs and demonstrate that a simplified version of ConVIRT trained from scratch, which we call CLIP, for Contrastive Language-Image Pre-training, is an efficient method of learning from natural language supervision.

We study the scalability of CLIP by training a series of eight models spanning almost 2 orders of magnitude of compute and observe that transfer performance is a smoothly predictable function of compute (Hestness et al., 2017; Kaplan et al.,2020).

We find that CLIP, similar to the GPT family, learns to perform a wide set of tasks during pre-training including OCR, geo-localization, action recognition, and many others.

We measure this by benchmarking the zero-shot transfer performance of CLIP on over 30 existing datasets and find it can be competitive with prior task-specific supervised models. We also confirm these findings with linear-probe representation learning analysis and show that CLIP outperforms the best publicly available ImageNet model while also being more computationally efficient.

We additionally find that zero-shot CLIP models are much more robust than equivalent accuracy supervised ImageNet models which suggests that zero-shot evaluation of task-agnostic models is much more representative of a model’s capability. These results have significant policy and ethical implications, which we consider in Section 7.


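Summary:

The earlier weakly supervised models were large in scale: they were trained on millions to billions of images, with training time measured in accelerator years (an accelerator is hardware such as a TPU or GPU). The three later models that resemble CLIP were trained on only one to two hundred thousand images, with training time measured in accelerator days

The three earlier models, VirTex, ICMLM, and ConVIRT, fell short not because the method was wrong but because they were not trained at scale

By collecting 400 million image-text pairs and using models as large as ViT-Large, the authors scale up both data and model, and propose CLIP (Contrastive Language-Image Pre-training), a simplified version of ConVIRT

The authors train 8 models, ranging from ResNets to Vision Transformers, and find that transfer performance improves smoothly and predictably with compute (roughly, with model size)

The authors test CLIP on more than 30 datasets, where it matches or even beats carefully designed task-specific models. Setting zero-shot inference aside, they also keep the pre-trained backbone frozen and train a classification head on the extracted features (linear probing); CLIP still outperforms the best publicly available ImageNet model while being more computationally efficient

Zero-shot CLIP is also more robust: at equal accuracy, CLIP generalizes better than supervised ImageNet models

Method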

2.1 Natural Language Supervision

At the core of our approach is the idea of learning perception from supervision contained in natural language.

As discussed in the introduction, this is not at all a new idea, however terminology used to describe work in this space is varied, even seemingly contradictory, and stated motivations are diverse. Zhang et al. (2020), Gomez et al. (2017), Joulin et al. (2016), and Desai & Johnson (2020) all introduce methods which learn visual representations from text paired with images but describe their approaches as unsupervised, self-supervised, weakly supervised, and supervised respectively. We emphasize that what is common across this line of work is not any of the details of the particular methods used but the appreciation of natural language as a training signal.

All these approaches are learning from natural language supervision. Although early work wrestled with the complexity of natural language when using topic model and n-gram representations, improvements in deep contextual representation learning suggest we now have the tools to effectively leverage this abundant source of supervision (McCann et al.,2017).


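Summary:

Core idea: use natural language supervision to train a transferable visual model

Earlier NLP techniques such as topic models and n-grams were quite complex and ill-suited to cross-modal training. With the rise of Transformers and self-supervised training, learning with deep contextual representations became possible, and NLP could finally make use of this practically inexhaustible source of text supervision.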

Learning from natural language has several potential strengths over other training methods.

It’s much easier to scale natural language supervision compared to standard crowd-sourced labeling for image classification since it does not require annotations to be in a classic “machine learning compatible format” such as the canonical 1-of-N majority vote “gold label”.

Instead, methods which work on natural language can learn passively from the supervision contained in the vast amount of text on the internet.

Learning from natural language also has an important advantage over most unsupervised or self-supervised learning approaches in that it doesn’t “just” learn a representation but also connects that representation to language which enables flexible zero-shot transfer.

In the following subsections, we detail the specific approach we settled on.


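Summary:

Training with natural language supervision has two key benefits:

(1) No further labeling is needed and the number of classes is no longer fixed. The supervision signal is text rather than a 1-of-N label, so the model's inputs and outputs are much freer

(2) The learned feature is no longer purely visual but multimodal. Because it is tied to language, zero-shot transfer becomes easy. A single-modality self-supervised model, such as contrastive learning with MoCo or masked modeling with MAE, still yields only visual features and cannot do zero-shot transfer

2.2 Creating a Sufficiently Large Dataset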

Existing work has mainly used three datasets, MS-COCO (Lin et al., 2014), Visual Genome (Krishna et al., 2017), and YFCC100M (Thomee et al., 2016). While MS-COCO and Visual Genome are high quality crowd-labeled datasets, they are small by modern standards with approximately 100,000 training photos each.

By comparison, other computer vision systems are trained on up to 3.5 billion Instagram photos(Mahajan et al., 2018). YFCC100M, at 100 million photos,is a possible alternative, but the metadata for each image is sparse and of varying quality.

Many images use automatically generated filenames like 20160716 113957.JPG as “titles” or contain “descriptions” of camera exposure settings.

After filtering to keep only images with natural language titles and/or descriptions in English, the dataset shrunk by a factor of 6 to only 15 million photos. This is approximately the same size as ImageNet.


Summary:

Existing datasets are either too small or of uneven quality, so the authors build their own

A major motivation for natural language supervision is the large quantities of data of this form available publicly on the internet. Since existing datasets do not adequately reflect this possibility, considering results only on them would underestimate the potential of this line of research.

To address this, we constructed a new dataset of 400 million (image,text) pairs collected form a variety of publicly available sources on the Internet.

To attempt to cover as broad a set of visual concepts as possible, we search for (image, text) pairs as part of the construction process whose text includes one of a set of 500,000 queries.

We approximately class balance the results by including up to 20,000 (image, text) pairs per query. The resulting dataset has a similar total word count as the WebText dataset used to train GPT-2. We refer to this dataset as WIT for WebImageText.
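The query-based construction and the per-query cap can be sketched as follows. The paper does not spell out how texts are matched against queries, so the lowercase substring match, the first-match assignment, and the function name `balance_by_query` are all assumptions made for illustration; the toy cap of 2 stands in for the 20,000 used in the paper.

```python
from collections import defaultdict

def balance_by_query(pairs, queries, max_per_query):
    """Keep (image, text) pairs whose text contains a query, capped per query."""
    kept = defaultdict(list)
    for image, text in pairs:
        lowered = text.lower()
        for query in queries:
            # Assign the pair to the first matching query that still has room.
            if query in lowered and len(kept[query]) < max_per_query:
                kept[query].append((image, text))
                break
    return dict(kept)

pairs = [
    ("img0.jpg", "A dog on the beach"),
    ("img1.jpg", "My dog sleeping"),
    ("img2.jpg", "Dog portrait"),   # dropped: the "dog" bucket is already full
    ("img3.jpg", "A red car"),
    ("img4.jpg", "IMG_0001"),       # dropped: matches no query
]
balanced = balance_by_query(pairs, ["dog", "car"], max_per_query=2)
```

The cap keeps very common queries from dominating the dataset, which is what "approximately class balance" refers to.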


2.3 Selecting an Efficient Pre-Training Method

State-of-the-art computer vision systems use very large amounts of compute. Mahajan et al. (2018) required 19 GPU years to train their ResNeXt101-32x48d and Xie et al.(2020) required 33 TPUv3 core-years to train their Noisy Student EfficientNet-L2.

When considering that both these systems were trained to predict only 1000 ImageNet classes, the task of learning an open set of visual concepts from natural language seems daunting.

In the course of our efforts, we found training efficiency was key to successfully scaling natural language supervision and we selected our final pre-training method based on this metric.


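Summary:

Earlier models took too long to train, and they only predicted 1000 classes. The authors face a much larger dataset and a harder task, learning an open set of visual concepts (all visual concepts in the open world) directly from natural language, so they need to try something new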

Our initial approach, similar to VirTex, jointly trained an image CNN and text transformer from scratch to predict the caption of an image. However, we encountered difficulties efficiently scaling this method.

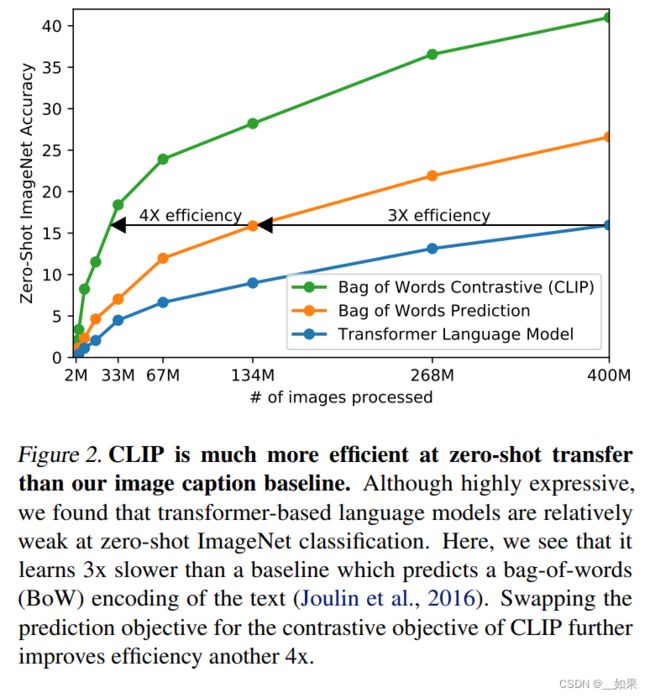

In Figure 2 we show that a 63 million parameter transformer language model, which already uses twice the compute of its ResNet-50 image encoder, learns to recognize ImageNet classes three times slower than a much simpler baseline that predicts a bag-of-words encoding of the same text.


Figure 2

Both these approaches share a key similarity. They try to predict the exact words of the text accompanying each image.

This is a difficult task due to the wide variety of descriptions, comments, and related text that co-occur with images. Recent work in contrastive representation learning for images has found that contrastive objectives can learn better representations than their equivalent predictive objective (Tian et al., 2019).

Other work has found that although generative models of images can learn high quality image representations, they require over an order of magnitude more compute than contrastive models with the same performance (Chen et al., 2020a).

Noting these findings, we explored training a system to solve the potentially easier proxy task of predicting only which text as a whole is paired with which image and not the exact words of that text.

Starting with the same bag-of-words encoding baseline, we swapped the predictive objective for a contrastive objective in Figure 2 and observed a further 4x efficiency improvement in the rate of zero-shot transfer to ImageNet.


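Summary:

Given an image, predicting its text means predicting it word by word. That task is too hard because a single image admits many different descriptions, which makes training very slow

The benefit of contrastive learning: turning the predictive task into a contrastive one, that is, only deciding whether an image and a text are paired, makes the task simpler. Figure 2 shows that switching from the predictive objective to the contrastive objective improves training efficiency by 4x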

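Pre-training pseudocode

Walkthrough of the code:

The input images have shape (n, h, w, c) and the texts have shape (n, l)

Each input passes through an encoder: the image encoder can be a ResNet or a Vision Transformer, and the text encoder can be CBOW or a Text Transformer

W_i and W_t are projection layers that learn to map each single-modality feature into the multimodal space; after L2 normalization, these are the final features used for contrastive learning

Similarity is computed with an inner product

labels are 0 to n-1, because for CLIP the positives lie on the diagonal; in MoCo, by contrast, the positive is always the first entry, so the labels are all 0

A cross-entropy loss is computed in each direction

The two losses are added and averaged. This is very common in contrastive learning; from SimCLR to DINO, methods use this kind of symmetric objective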
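The steps listed above can be sketched in runnable NumPy. This is a minimal sketch under stated assumptions: the encoders are replaced by random feature matrices, the variable names follow the walkthrough (`W_i`, `W_t`, `labels`), and the helper names and toy dimensions are chosen only for illustration.

```python
import numpy as np

def l2_normalize(x, axis=1):
    return x / np.linalg.norm(x, axis=axis, keepdims=True)

def cross_entropy(logits, labels):
    """Mean cross-entropy over the rows of logits, with integer labels."""
    shifted = logits - logits.max(axis=1, keepdims=True)   # numerical stability
    log_probs = shifted - np.log(np.exp(shifted).sum(axis=1, keepdims=True))
    return -log_probs[np.arange(len(labels)), labels].mean()

def clip_loss(I_f, T_f, W_i, W_t, t):
    """Symmetric contrastive loss from encoder features I_f [n, d_i], T_f [n, d_t]."""
    I_e = l2_normalize(I_f @ W_i)          # joint embedding, [n, d_e]
    T_e = l2_normalize(T_f @ W_t)          # joint embedding, [n, d_e]
    logits = (I_e @ T_e.T) * np.exp(t)     # scaled cosine similarities, [n, n]
    labels = np.arange(logits.shape[0])    # positives sit on the diagonal
    loss_i = cross_entropy(logits, labels)     # each image picks its text
    loss_t = cross_entropy(logits.T, labels)   # each text picks its image
    return (loss_i + loss_t) / 2

rng = np.random.default_rng(0)
n, d_i, d_t, d_e = 8, 16, 12, 4            # toy sizes (assumption)
I_f = rng.normal(size=(n, d_i))            # stand-in for image_encoder(I)
T_f = rng.normal(size=(n, d_t))            # stand-in for text_encoder(T)
W_i = rng.normal(size=(d_i, d_e))
W_t = rng.normal(size=(d_t, d_e))
loss = clip_loss(I_f, T_f, W_i, W_t, t=np.log(1 / 0.07))
```

Because the loss averages both directions, swapping the roles of images and texts leaves its value unchanged.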

Due to the large size of our pre-training dataset, over-fitting is not a major concern and the details of training CLIP are simplified compared to the implementation of Zhang et al. (2020).

We train CLIP from scratch without initializing the image encoder with ImageNet weights or the text encoder with pre-trained weights. We do not use the non-linear projection between the representation and the contrastive embedding space, a change which was introduced by Bachman et al. (2019) and popularized by Chen et al. (2020b).

We instead use only a linear projection to map from each encoder’s representation to the multi-modal embedding space.

We did not notice a difference in training efficiency between the two versions and speculate that non-linear projections may be co-adapted with details of current image only in self-supervised representation learning methods.

We also remove the text transformation function t_u which samples a single sentence at uniform from the text since many of the (image, text) pairs in CLIP's pre-training dataset are only a single sentence. We also simplify the image transformation function t_v. A random square crop from resized images is the only data augmentation used during training. Finally, the temperature parameter which controls the range of the logits in the softmax, τ, is directly optimized during training as a log-parameterized multiplicative scalar to avoid turning as a hyper-parameter.

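Summary:

The dataset is very large, so over-fitting is not a major concern

CLIP maps each single modality into the multimodal space with a linear projection rather than a non-linear one. In SimCLR, MoCo, and related work, a non-linear projection head brought gains of nearly 10 percentage points, but when training CLIP the two performed about the same; the authors suspect that non-linear projections mainly suit single-modality (image-only) self-supervised models

Because the dataset is so large, little data augmentation is needed; only a random crop is used

The models are large and hard to tune. In contrastive learning the temperature is a very important hyper-parameter that can change performance substantially, but rather than tune it the authors make it a learnable scalar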
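The learnable temperature can be sketched as below. The initial value 0.07 and the cap of 100 on the logit scale are the figures the paper gives in its training section; the plain gradient step is an assumption for illustration only (the paper trains with Adam), and the function names are hypothetical.

```python
import math

MAX_LOGIT_SCALE = 100.0        # logits are never scaled by more than 100
t = math.log(1.0 / 0.07)       # log-parameterized scalar, equivalent to temperature 0.07

def logit_scale(t):
    """Multiplier applied to cosine similarities: exp(t), capped at MAX_LOGIT_SCALE."""
    return min(math.exp(t), MAX_LOGIT_SCALE)

def update_t(t, grad, lr):
    """One plain gradient step on t, then clamp so that exp(t) <= MAX_LOGIT_SCALE."""
    return min(t - lr * grad, math.log(MAX_LOGIT_SCALE))

scale = logit_scale(t)         # about 14.29 at initialization
```

Optimizing `t` in log space keeps the multiplier `exp(t)` positive no matter what value the optimizer reaches.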

2.4 Choosing and Scaling a Model

We consider two different architectures for the image encoder.

For the first, we use ResNet-50 (He et al., 2016a)as the base architecture for the image encoder due to its widespread adoption and proven performance.

We make several modifications to the original version using the ResNetD improvements from He et al. (2019) and the antialiased rect-2 blur pooling from Zhang (2019). We also replace the global average pooling layer with an attention pooling mechanism.

The attention pooling is implemented as a single layer of “transformer-style” multi-head QKV attention where the query is conditioned on the global average-pooled representation of the image. For the second architecture, we experiment with the recently introduced Vision Transformer (ViT) (Dosovitskiy et al., 2020).

We closely follow their implementation with only the minor modification of adding an additional layer normalization to the combined patch and position embeddings before the transformer and use a slightly different initialization scheme.


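Summary:

The image encoder can be either a ResNet or a Vision Transformer, each with some modifications: the ResNet adopts the ResNet-D improvements, antialiased blur pooling, and attention pooling in place of global average pooling, while the ViT adds an extra layer normalization before the transformer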
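To make the attention pooling concrete, here is a simplified single-head sketch in NumPy. It is an illustration under stated assumptions, not the authors' implementation: the paper describes multi-head attention, and the weight names `W_q`, `W_k`, `W_v` and the toy shapes are made up for this example.

```python
import numpy as np

def softmax(x):
    e = np.exp(x - x.max())
    return e / e.sum()

def attention_pool(feature_map, W_q, W_k, W_v):
    """Pool an (h, w, c) feature map into one vector with a single attention head."""
    h, w, c = feature_map.shape
    tokens = feature_map.reshape(h * w, c)     # one token per spatial position
    query = tokens.mean(axis=0) @ W_q          # query from the global average, (d,)
    keys = tokens @ W_k                        # (h*w, d)
    values = tokens @ W_v                      # (h*w, d)
    weights = softmax(keys @ query / np.sqrt(query.shape[0]))
    return weights @ values                    # weighted sum over positions, (d,)

rng = np.random.default_rng(0)
feature_map = rng.normal(size=(3, 3, 8))
W_q, W_k, W_v = (rng.normal(size=(8, 4)) for _ in range(3))
pooled = attention_pool(feature_map, W_q, W_k, W_v)
```

If the query is all zeros, the attention weights become uniform and the result reduces to global average pooling followed by the value projection, which shows how this layer generalizes the pooling it replaces.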

The text encoder is a Transformer (Vaswani et al., 2017) with the architecture modifications described in Radford et al. (2019).

As a base size we use a 63M-parameter 12-layer 512-wide model with 8 attention heads. The transformer operates on a lower-cased byte pair encoding (BPE) representation of the text with a 49,152 vocab size (Sennrich et al., 2015). For computational efficiency, the max sequence length was capped at 76.

The text sequence is bracketed with [SOS] and [EOS] tokens and the activations of the highest layer of the transformer at the [EOS] token are treated as the feature representation of the text which is layer normalized and then linearly projected into the multi-modal embedding space.

Masked self-attention was used in the text encoder to preserve the ability to initialize with a pre-trained language model or add language modeling as an auxiliary objective, though exploration of this is left as future work.


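Summary:

The text encoder is a Transformer with some adjustments: the base model has 63M parameters, 12 layers, width 512, and 8 attention heads, and the top-layer activation at the [EOS] token is used as the text feature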
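The way the text feature is read off at the [EOS] token can be sketched as follows. This is a minimal sketch assuming the transformer's top-layer activations are already available as an array; the layer normalization omits the learned gain and bias, and the names `text_features` and `eos_positions` are hypothetical.

```python
import numpy as np

def layer_norm(x, eps=1e-5):
    """Normalize over the last axis (learned gain and bias omitted)."""
    mean = x.mean(axis=-1, keepdims=True)
    var = x.var(axis=-1, keepdims=True)
    return (x - mean) / np.sqrt(var + eps)

def text_features(hidden, eos_positions, W_t):
    """hidden: (n, l, d_t) top-layer activations; eos_positions: (n,) [EOS] indices."""
    rows = np.arange(hidden.shape[0])
    eos_hidden = hidden[rows, eos_positions]   # activation at [EOS], (n, d_t)
    return layer_norm(eos_hidden) @ W_t        # linear projection, (n, d_e)

rng = np.random.default_rng(0)
hidden = rng.normal(size=(2, 5, 8))            # 2 texts, 5 tokens, width 8 (toy sizes)
eos_positions = np.array([3, 4])               # texts of different lengths
W_t = rng.normal(size=(8, 4))
feats = text_features(hidden, eos_positions, W_t)
```

Because masked self-attention only looks backwards, the [EOS] position is the one token that has seen the whole sentence, which makes it a natural place to read the feature from.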

While previous computer vision research has often scaled models by increasing the width (Mahajan et al., 2018) or depth (He et al., 2016a) in isolation, for the ResNet image encoders we adapt the approach of Tan & Le (2019) which found that allocating additional compute across all of width, depth, and resolution outperforms only allocating it to only one dimension of the model. While Tan & Le (2019) tune the ratio of compute allocated to each dimension for their EfficientNet architecture, we use a simple baseline of allocating additional compute equally to increasing the width, depth, and resolution of the model.

For the text encoder, we only scale the width of the model to be proportional to the calculated increase in width of the ResNet and do not scale the depth at all, as we found CLIP’s performance to be less sensitive to the capacity of the text encoder.


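Summary:

The ResNet image encoders are scaled in width, depth, and resolution together, while the text encoder is scaled only in width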

2.5 Training

We train a series of 5 ResNets and 3 Vision Transformers.

For the ResNets we train a ResNet-50, a ResNet-101, and then 3 more which follow EfficientNet-style model scaling and use approximately 4x, 16x, and 64x the compute of a ResNet-50. They are denoted as RN50x4, RN50x16, and RN50x64 respectively. For the Vision Transformers we train a ViT-B/32, a ViT-B/16, and a ViT-L/14.

We train all models for 32 epochs. We use the Adam optimizer (Kingma& Ba, 2014) with decoupled weight decay regularization(Loshchilov & Hutter, 2017) applied to all weights that are not gains or biases, and decay the learning rate using a cosine schedule (Loshchilov & Hutter, 2016).

Initial hyper-parameters were set using a combination of grid searches, random search, and manual tuning on the baseline ResNet-50 model when trained for 1 epoch. Hyper-parameters were then adapted heuristically for larger models due to computational constraints.

The learnable temperature parameter τ was initialized to the equivalent of 0.07 from (Wu et al.,2018) and clipped to prevent scaling the logits by more than 100 which we found necessary to prevent training instability.

We use a very large minibatch size of 32,768. Mixed-precision (Micikevicius et al., 2017) was used to accelerate training and save memory. To save additional memory, gradient checkpointing (Griewank & Walther, 2000; Chen et al., 2016), half-precision Adam statistics (Dhariwal et al., 2020), and half-precision stochastically rounded text encoder weights were used.

The calculation of embedding similarities was also sharded with individual GPUs computing only the subset of the pairwise similarities necessary for their local batch of embeddings.

The largest ResNet model, RN50x64, took 18 days to train on 592 V100 GPUs while the largest Vision Transformer took 12 days on 256 V100 GPUs.

For the ViT-L/14 we also pre-train at a higher 336 pixel resolution for one additional epoch to boost performance similar to FixRes (Touvron et al., 2019).

We denote this model as ViT-L/14@336px. Unless otherwise specified, all results reported in this paper as “CLIP” use this model which we found to perform best.
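The figures quoted above allow a rough count of optimizer steps, and the cosine schedule can be sketched alongside it. The dataset size, batch size, and epoch count are from the paper; the step count is approximate because the dataset size is a round number, and the base learning rate, the absence of warmup, and the function name `cosine_lr` are assumptions for illustration.

```python
import math

DATASET_SIZE = 400_000_000   # (image, text) pairs
BATCH_SIZE = 32_768
EPOCHS = 32

# Approximate number of optimizer steps over the whole run.
total_steps = DATASET_SIZE * EPOCHS // BATCH_SIZE

def cosine_lr(step, total_steps, base_lr):
    """Cosine decay from base_lr at step 0 to 0 at total_steps (no warmup)."""
    progress = min(step, total_steps) / total_steps
    return 0.5 * base_lr * (1.0 + math.cos(math.pi * progress))

BASE_LR = 5e-4               # hypothetical value, for illustration only
print(total_steps)           # prints: 390625
```

With a batch this large, 32 epochs over 400 million pairs come to only about 390 thousand updates.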


Summary:

To be continued...

The Chinese translation of the paper used in these notes was taken from:

CLIP论文翻译、Learning Transferable Visual Models From Natural Language Supervision翻译_Jiliang.Li的博客-CSDN博客