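- "AI China" rankings announced: OpenBayes (贝式计算) named to the "Top 10 Most Promising Large-Model Startups"

Recently, the results of Synced's (机器之心) 2024 "AI China" annual selection were officially announced, and OpenBayes was named to the "Top 10 Most Promising Large-Model Startups" list. Synced, a professional AI media and industry service platform, launched its AI ranking, the "Synced Machine Intelligence Awards", in 2017. In the years since, as AI has advanced by leaps and bounds, Synced's annual selection has gradually become one of the industry's bellwethers, covering more fields and a wider scope with finer-grained dimensions. Synced 20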

- 论文阅读:Deep Bilateral Learning for Real-Time Image Enhancement-google-hdrnet-slicing

SetMaker

论文阅读

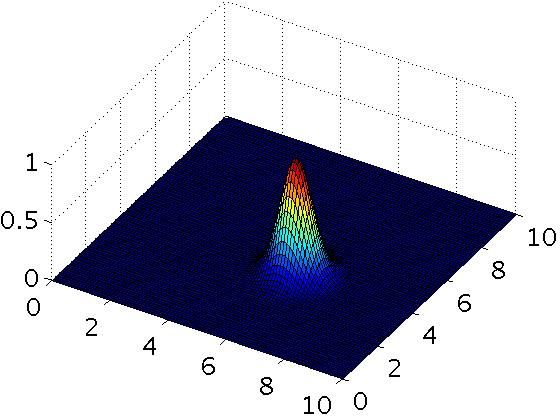

项目地址:https://gitcode.com/google/hdrnethdrnet作为超分领域的经典文章,由google提出主要用来用轻量化的方法来实现高分辨率的图像生成,hdrnet结合cnn可以让更高分辨率的图像部署在板端。如图所示,原始图像比如4k图像,首先分为两个主要模块:grid和guide。grid就是对应图上面的那一条特征提取网络,具体来说,原始图像经过下采样之后,默认256分

- 2017-SIGGRAPH-Google,MIT-(HDRNet)Deep Bilateral Learning for Real-Time Image Enhancements

WX Chen

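HDR technology, deep learning, neural networks, machine learning

A bilateral grid is essentially a 3D data structure that can preserve edge information. For a 2D image, it adds a third dimension, representing pixel intensity, to the two spatial dimensions. Slice operation (upsampling): the Bilateral Guided Upsampling paper uses a bilateral grid to accelerate image operators. The core idea of the algorithm is to downsample a high-resolution image into a bilateral grid in which each cell holds an affine transform operator for the image. The principle is that, within a region that is close in both space and range (intensity), similar input brightness values, after the operator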
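The slice step described above can be sketched in a few lines of pure Python. This is only an illustration under stated assumptions, not the HDRNet implementation: the grid stores a hypothetical `(a, b)` pair per cell for a grayscale affine map `out = a * in + b`, the pixel's own intensity is used as the guide (HDRNet learns its guide map), and the helper names `slice_grid` and `apply_grid` are made up for this example.

```python
import math


def slice_grid(grid, x, y, z):
    """Trilinearly interpolate an (a, b) pair from a bilateral grid.

    grid[i][j][k] holds one (a, b) pair; x and y are spatial grid
    coordinates and z is the coordinate along the intensity axis.
    """
    nx, ny, nz = len(grid), len(grid[0]), len(grid[0][0])
    x0, y0, z0 = math.floor(x), math.floor(y), math.floor(z)
    fx, fy, fz = x - x0, y - y0, z - z0
    a = b = 0.0
    for dx in (0, 1):
        for dy in (0, 1):
            for dz in (0, 1):
                # Weight of this corner of the enclosing grid cell.
                w = ((fx if dx else 1 - fx)
                     * (fy if dy else 1 - fy)
                     * (fz if dz else 1 - fz))
                # Clamp indices so lookups at the border stay in range.
                i = min(x0 + dx, nx - 1)
                j = min(y0 + dy, ny - 1)
                k = min(z0 + dz, nz - 1)
                ca, cb = grid[i][j][k]
                a += w * ca
                b += w * cb
    return a, b


def apply_grid(image, grid):
    """Apply per-pixel affine maps sliced from the grid to a grayscale
    image whose values lie in [0, 1]."""
    h, w = len(image), len(image[0])
    nx, ny, nz = len(grid), len(grid[0]), len(grid[0][0])
    out = []
    for r in range(h):
        row = []
        for c in range(w):
            v = image[r][c]
            # Map pixel position and intensity into grid coordinates.
            gx = r / max(h - 1, 1) * (nx - 1)
            gy = c / max(w - 1, 1) * (ny - 1)
            gz = v * (nz - 1)
            a, b = slice_grid(grid, gx, gy, gz)
            row.append(a * v + b)
        out.append(row)
    return out
```

Because the lookup also depends on intensity, two neighboring pixels on opposite sides of an edge read different cells, which is how the low-resolution grid can preserve edges at full resolution.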

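- MCP (Model Context Protocol), Advanced Part 4: Roadmap

AIQL

MCP (Model Context Protocol), MCP, ai, language model, open-source protocol, artificial intelligence

Model Context Protocol (MCP) is evolving rapidly. This chapter outlines current thinking on key priorities and future directions for the first half of 2025, though these may change significantly as the project progresses. At present, the main MCP content in this series, apart from the hands-on part (that is, the theory, side-story, and advanced parts), is wrapping up. Until there is a major official update, no further parts are expected in the short term. Remote MCP Support: our top priority is to enable remote MCP connections, allowing clients to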

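- Dufs: an open-source web file server

爱辉弟啦

linux operations, linux, operations, server, web file server, open-source software

Introduction: Dufs is a distinctive utility file server that supports static serving, uploading, searching, access control, WebDAV… GitHub - sigoden/dufs: A file server that supports static serving, uploading, searching, accessing control, webdav… Feature list: serve static files; download folders as zip files; upload files and folders (drag and drop); create/edit/search files; resumable partial uploads/down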

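- AWS GCR EKS Resource: a powerful tool for building efficient, elastic cloud-native applications

杨女嫚

AWS GCR EKS Resource: a powerful tool for building efficient, elastic cloud-native applications. eks-workshop-greater-china: AWS Workshop for Learning EKS for Greater China. Project address: https://gitcode.com/gh_mirrors/ek/eks-workshop-greater-china. In the wave of cloud computing, AWS (Amazon Web Services) has always been innovating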

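- PostgreSQL: building a vector database with the pgvector extension and running similarity queries

花千树-010

RAG, database, postgresql, AI programming

In modern machine learning and AI applications, vector similarity search is an important technique, especially when working with embedding vectors for text, images, or other data types. This article describes how to install the pgvector extension in PostgreSQL to store and retrieve vector data, and shows how to insert vectors into the database and run similarity queries from a Python script. I. Install PostgreSQL and configure the pgvector extension. 1. Install PostgreSQL. First, make sure PostgreSQL is installed. You can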
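To make "similarity query" concrete, here is a small pure-Python sketch of the ranking that an exact (unindexed) nearest-neighbor search performs. pgvector's `<->` operator computes Euclidean (L2) distance, so a query ordered by `embedding <-> query` with a `LIMIT` is conceptually equivalent to the function below. The row layout `(id, embedding)` and the function names are assumptions for illustration; no database is involved.

```python
import math


def l2_distance(u, v):
    """Euclidean distance between two equal-length vectors."""
    if len(u) != len(v):
        raise ValueError("vectors must have the same dimension")
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(u, v)))


def nearest(rows, query, k=3):
    """Return the ids of the k rows whose embeddings are closest to query.

    rows is a list of (id, embedding) tuples, standing in for a
    hypothetical table with an id column and a vector column.
    """
    ranked = sorted(rows, key=lambda row: l2_distance(row[1], query))
    return [row_id for row_id, _ in ranked[:k]]


rows = [(1, [0.0, 0.0]), (2, [1.0, 1.0]), (3, [5.0, 5.0])]
closest = nearest(rows, [0.9, 0.9], k=2)
```

A real deployment would let the database do this work, usually with an approximate index; the sketch only shows what the ordering means.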

- The HTML <blockquote> tag

新生派

html, front end

Example: a section quoted from another source: For 50 years, WWF has been protecting the future of nature. The world's leading conservation organization, WWF works in 100 countries and is supported by 1.2 million members in the United States and close to 5 million global

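- Generating nicely styled PDF files with Go

Ai 编码

Golang tutorial, golang, pdf, programming languages

Featured article recommendations: 1. JetBrains AI Assistant: a coding tool that doubles your productivity 2. Extra Icons: an icon enhancement tool for JetBrains IDEs 3. IDEA plugin recommendation: SequenceDiagram, which generates sequence diagrams automatically 4. What is the BashSupport Pro IDE plugin mainly used for? 5. A must-have IDEA plugin: usage and features of Spring Boot Helper 6. AI Assistant, yet another great code-writing tool 7. Cursor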

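- What is multimodal machine learning: the intelligent frontier of cross-sensory fusion

非凡暖阳

artificial intelligence, neural networks

In the vast world of artificial intelligence, multimodal machine learning is a frontier technology that is gradually unlocking new levels of human-computer interaction and information understanding. It goes beyond the limits of a single sensory input: by integrating visual, audio, text, and other data types, it builds a richer, more multidimensional cognitive model that gives machines comprehensive perception and understanding closer to that of humans. This article examines the definition, core principles, key techniques, challenges, and future applications of multimodal machine learning, aiming to sketch for readers this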

- Error when launching IDEA on an iMac to run a Java project (Class JavaLaunchHelper is implemented in both...One of the two will be used.)

学习时长两年半的小学生

development pitfalls, editor, java

The first time I ran IDEA on an iMac, starting a main method produced this error (objc[19374]: Class JavaLaunchHelper is implemented in both /Library/Java/JavaVirtualMachines/jdk1.8.0_121.jdk/Contents/Home/bin/java (0x10d1cb4c0) and /Library/Java/JavaVirtualMa

- pythonsvm模型优化_Python进化算法工具箱的使用(三)用进化算法优化SVM参数

weixin_39878698

pythonsvm模型优化

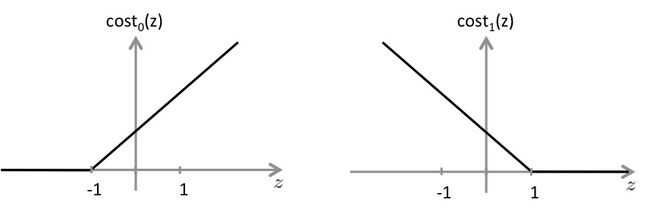

前言自从上两篇博客详细讲解了Python遗传和进化算法工具箱及其在带约束的单目标函数值优化中的应用以及利用遗传算法求解有向图的最短路径之后,我经过不断学习工具箱的官方文档以及对源码的研究,更加掌握如何利用遗传算法求解更多有趣的问题了。与前面的文章不同,本篇采用差分进化算法来优化SVM中的参数C和Gamma。(用遗传算法也可以,下面会给出效果比较)首先简单回顾一下Python高性能实用型遗传和进化算

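- LeetCode 416. Partition Equal Subset Sum (代码随想录)

meeiuliuus

#leetcode---medium, algorithms, leetcode, dynamic programming

Contents: Problem; Code (first pass, read the solution, February 23, 2024); Code (second pass, read the solution, March 10, 2024); Code (third pass, solved on my own, June 26, 2024, Go). Problem: Code (first pass, read the solution, February 23, 2024): class Solution { public: bool canPartition(vector<int>& nums) { /* because the values dp(10001, 0); int sum = accumulate(nums.begin(), nums.end(), 0); i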
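The snippet above is cut off before the dynamic-programming loop. As a hedged illustration of the standard approach to this problem (not a reconstruction of the author's C++ or Go code), the sketch below treats it as a 0/1 knapsack: the array can be split into two equal halves exactly when some subset sums to half of the total. The function name `can_partition` is chosen for this example.

```python
def can_partition(nums):
    """Return True if nums can be split into two subsets with equal sums."""
    total = sum(nums)
    if total % 2:
        return False  # an odd total can never be split evenly
    target = total // 2
    # reachable[s] is True when some subset of the numbers seen so far sums to s.
    reachable = [True] + [False] * target
    for n in nums:
        # Iterate downward so each number is used at most once.
        for s in range(target, n - 1, -1):
            if reachable[s - n]:
                reachable[s] = True
    return reachable[target]
```

For example, `can_partition([1, 5, 11, 5])` is true because `[1, 5, 5]` and `[11]` both sum to 11.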

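- LeetCode Hot 100: Matrix

cloud___fly

leetcode, matrix, algorithms

73. Set Matrix Zeroes: class Solution { public: void setZeroes(vector<vector<int>>& a) { int n = a.size(), m = a[0].size(); vector<int> r(n+10, 0); vector<int> c(m+10, 0); for (int i = 0; i [...] spiralOrder(vector<vector<int>>& a) { int n = a.size(), m = a[0].size(); int x = 0, y = 0; int sum = m*n; in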

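- 17-7 Ambitions of Vector Databases 7: PostgreSQL and pgvector

拉达曼迪斯II

AIGC learning, database management tools, AI startups, database, postgresql, artificial intelligence, machine learning, AIGC, search engine

PostgreSQL is a powerful open-source object-relational database system that has extended its capabilities beyond traditional data management by supporting vector data through the pgvector extension. This addition meets the growing need to handle high-dimensional vector data efficiently; such data is commonly used in applications such as machine learning, natural language processing (NLP), and recommender systems. https://github.com/mazzasaverio/find-your-opensource-project What is pgvector?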

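- MATLAB code implementing an SVM (support vector machine) based image segmentation system

go5463158465

MATLAB column, algorithms, deep learning, matlab, support vector machine, programming languages

clear; clc; main();
% 1. Data loading and preprocessing
function [features, labels] = prepareData(imageFolder)
% Get all image and JSON files
imgFiles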

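- Sprinting toward the Lanqiao Cup: a speed run through vector!!!!!

爱吃生蚝的于勒

Lanqiao Cup prep, Lanqiao Cup, algorithms, data structures, programming languages, C, C++, flexible array

Contents: Key points; Creation; Insert, delete, find, update; Exercise 1; Exercise 2; Exercise 3; Exercise 4; Exercise 5. Key points: The C++ STL provides a ready-made container, vector, which can also be called a variable-length array. Under the hood, vector is a sequential list that grows automatically, with insert, delete, find, and update operations already encapsulated. Creation:
const int N = 30;
vector<int> a1; // create an empty variable-length array named a1
vector<int> a2(N); // create a variable-length array of size 30 with every element 0
vector<int> a3(N, 2); // create a variable-length array of size 30 where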

- LeetCode 403. Frog Jump

fks143

leetcode

Problem: 403. Frog Jump - LeetCode. An easy O(n^2) problem. class Solution { public: bool canCross(vector<int>& stones) { int n = (int)stones.size(); vector<vector<int>> f; f.resize(n); f[0].push_back(1); int64_t temp; for (int i = 0; i [...] &t = f[i]; sort(t.begin(), t.end());
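The C++ above is truncated, so here is a hedged Python sketch of one common way to solve Frog Jump. It is not the author's code: instead of per-stone sorted vectors, it keeps, for each stone, the set of jump lengths that can land on it. It assumes the usual problem statement: stones are given in ascending order starting at position 0, and a jump of length k may be followed by k - 1, k, or k + 1.

```python
def can_cross(stones):
    """Return True if the frog can reach the last stone."""
    # jumps[pos] is the set of jump lengths with which the frog can land on pos.
    jumps = {pos: set() for pos in stones}
    jumps[stones[0]].add(0)  # the frog starts on the first stone without jumping
    for pos in stones:
        for k in jumps[pos]:
            for step in (k - 1, k, k + 1):
                # Only forward jumps that land exactly on a stone count.
                if step > 0 and pos + step in jumps:
                    jumps[pos + step].add(step)
    return bool(jumps[stones[-1]])
```

Each stone holds at most n distinct jump lengths, so the work is bounded by O(n^2), matching the complexity mentioned in the snippet.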

- LeetCode 66. Plus One

fks143

leetcode

Problem: 66. Plus One - LeetCode. Another easy one. class Solution { public: vector<int> plusOne(vector<int>& digits) { vector<int> ret; for (int i = digits.size() - 1; i >= 0; i--) { ret.push_back(digits[i]); } ret[0]++; int i = 0; while (ret[i] > 9) { if (i == digits.size() - 1)
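Since the C++ is cut off mid-loop, a short Python sketch of the same carry idea may help. This is an alternative formulation rather than the author's method: it walks the digits from the least significant end instead of reversing the array first. The name `plus_one` is chosen for this example.

```python
def plus_one(digits):
    """Add one to a non-negative integer stored as a list of decimal digits."""
    result = list(digits)  # copy so the caller's list is left untouched
    for i in range(len(result) - 1, -1, -1):
        if result[i] < 9:
            result[i] += 1
            return result  # no further carry needed
        result[i] = 0  # 9 + 1 wraps to 0 and carries into the next digit
    # Every digit was 9, so the number gains a leading 1.
    return [1] + result
```

The early return keeps the common case cheap: most inputs only touch the last digit.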

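- Lanqiao Cup past problem: Common Factor Matching (solution)

ExRoc

Lanqiao Cup, algorithms, c++

Problem link: https://www.lanqiao.cn/problems/3525/learning/ Personal rating: difficulty 2 stars (out of 5). Prerequisite: harmonic series. Overall approach: The problem statement is not rigorous: it does not say what to output when there is no solution (for example, $n$ copies of $1$), so we first assume the data guarantees a solution. Enumerate $x$ from $2$ to $10^6$ as a divisor, and for each divisor $x$ scan all multiples of $x$; in total this requires scanning $\frac{n}{2}+\frac{n}{3}+\frac{n}{4}+\cdots+\frac{n}{n}\approx n\ln n$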
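The harmonic-series bound can be checked with a small experiment. The sketch below is not a solution to the problem; it only counts how many values the "for each divisor, scan all of its multiples" loop visits, so the $n \ln n$ estimate can be compared with the real count. The function name is made up for this example.

```python
import math


def count_multiple_visits(n):
    """Count the values visited when, for every x in 2..n,
    all multiples of x up to n are scanned."""
    visits = 0
    for x in range(2, n + 1):
        for _multiple in range(x, n + 1, x):
            visits += 1
    return visits


n = 1000
visits = count_multiple_visits(n)
bound = n * math.log(n)  # the n ln n estimate from the text
```

The count stays below $n \ln n$ because $\sum_{x=2}^{n} \frac{n}{x} = n(H_n - 1)$ and $H_n - 1 < \ln n$.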

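- Lanqiao Cup past problem: Size of a Subtree (solution)

ExRoc

Lanqiao Cup, algorithms, c++

Problem link: https://www.lanqiao.cn/problems/3526/learning/ Personal rating: difficulty 2 stars (out of 5). Prerequisites: none. Overall approach: Subtract $1$ from every node number. By looking for a pattern, we find that the leftmost node in the layer below node $i$ is numbered $im+1$ and the rightmost is numbered $im+m$. Use $l, r$ to mark the smallest and largest node numbers of the subtree in the current layer, and each time move the leftmost node down to the next layer's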
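The layer-by-layer idea above can be sketched as follows. The problem statement is not quoted in the snippet, so this assumes the usual setup: a complete m-ary tree with n nodes numbered 1..n in level order, and a query for the size of the subtree rooted at node k. The function name and parameter order are illustrative.

```python
def subtree_size(n, m, k):
    """Size of the subtree rooted at node k (1-based) in a complete
    m-ary tree with n nodes numbered in level order."""
    left = right = k - 1  # switch to 0-based numbering, as in the text
    size = 0
    while left < n:
        # Nodes left..right form this layer of the subtree; clip at n - 1.
        size += min(right, n - 1) - left + 1
        # Children of node i are i*m + 1 .. i*m + m.
        left = left * m + 1
        right = right * m + m
    return size
```

For `m >= 2` the loop runs once per layer, so it takes about $\log_m n$ iterations; a chain with `m = 1` would need a direct formula instead.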

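- C# meets TensorFlow.NET: opening a new era of machine learning

墨夶

C# learning materials 1, machine learning, c#, tensorflow

In today's fast-moving world of technology, machine learning (ML) has become an important force driving innovation. From personalized recommendation systems to self-driving cars, ML applications are everywhere. For programmers used to developing in C#, integrating machine learning into their projects can seem like a challenging task. With the arrival of TensorFlow.NET, however, this is no longer difficult. Today we will explore together how to use this powerful tool to easily build, train, and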

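- [JVM] G1 GC logs explained

一棵___大树

JVM, jvm

G1 GC logs explained ⭐⭐⭐⭐⭐⭐ GitHub home page: https://github.com/A-BigTree Notes: https://github.com/A-BigTree/Code_Learning ⭐⭐⭐⭐⭐⭐ If you can, please give it a star while you're here~ Contents: G1 GC logs explained; 1 G1 GC cycle; 2 Enabling and configuring G1 logs; 3 Young GC logs; 4 Mixed GC; 5 Full GC. Prerequisite knowledge about the G1 collector: [JVM] In-depth understanding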

- Scaleph: an open data platform based on Kubernetes

尤淞渊

Scaleph: an open data platform based on Kubernetes. scaleph: Open data platform based on Flink and Kubernetes, supports web-ui click-and-drop data integration with SeaTunnel backended by Flink engine, flink online sql development backended by Flink Sql

- 7-2 Merging Linked Lists

J_北冥有鱼

It turns out that L1 and L2 are not necessarily L1》#include #include #include using namespace std; int const maxn = 100010; struct node { int first, data, next; } Node[maxn]; int main() { int h1, h2, n, num, a, d, b; vector v1, v2

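- NLP Chinese spelling detection and correction papers-04-Learning from the Dictionary

backend, java

Spelling correction series: NLP Chinese spelling detection: implementation approach; NLP Chinese spelling detection and correction: algorithm roundup; NLP English spelling algorithm: how to improve performance by a factor of 1,000,000?; NLP Chinese spelling detection and correction papers; Implementing Chinese and English spell checking and error correction in Java? But I only know how to write CRUD!; An algorithm that improves English word spelling detection performance 1000-fold?; Word spelling correction-03-leetcode edit-distance 72. LeetCode Edit Distance; NLP open-source projects: nlp-hanzi-similar (Chinese character similarity), word-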

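- C++ initializer_list: list initialization (interview notes summary)

fadtes

C++ interview notes, c++, games

Definition: std::initializer_list is a class template introduced in C++11 to support list initialization. It lets developers provide a set of values in braces {} to directly initialize containers or custom types. std::initializer_list offers a concise, elegant syntax for passing multiple values. Main uses: Initializing containers: use list initialization to assign values to a container. #include #include int main() { std::vector<int> vec = {1, 2, 3, 4, 5}; for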

- [Solved] ImportError: libnvinfer.so.8: cannot open shared object file: No such file or directory

小小小小祥

python

Problem description: After installing by following the official TensorRT installation guide (https://docs.nvidia.com/deeplearning/tensorrt/install-guide/index.html#installing-tar), I tested importing tensorrt in Python: import tensorrt. The code above fails with: Traceback (most recent call last): File “main.py”, li

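- ASPICE 4.0 leading the future of autonomous driving: characteristics and practice of machine learning models

亚远景aspice

machine learning, autonomous driving, artificial intelligence

The ASPICE 4.0 ML (machine learning) model is a set of specific standards and processes for machine learning (ML) applications in the automotive industry, particularly in automotive software development. ASPICE (Automotive SPICE) is an international standard for the development process of software-controlled systems, intended to improve the quality, efficiency, and reliability of software development. The ML part of ASPICE 4.0 further details the specific requirements and processes for machine learning in automotive software development. The following is about ASPICE 4.0-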

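- Parameters lost in Tomcat POST submissions

357029540

java, tomcat, jsp

Excerpted from http://my.oschina.net/luckyi/blog/213209

While fixing a bug yesterday I ran into a strange problem: an AJAX data submission that had always worked suddenly started failing, which affected other processing flows.

On inspection I found the page was handling a lot of data. At first I thought the submission order was wrong, but after changing it I found that was not the cause. I then removed part of the data and submitted again, and the smaller payload went through.

After restoring the larger data set and tracing the submitted FORM DATA, I found that the data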

- Adding a JSP template in MyEclipse (delete) 2008-08-18

ljy325

jsp, xml, MyEclipse

In the directory D:\Program Files\MyEclipse 6.0\myeclipse\eclipse\plugins\com.genuitec.eclipse.wizards_6.0.1.zmyeclipse601200710\templates\jsp, find Jsp.vtl, make a copy, rename it to jsp2.vtl, change its contents to the format you want, and save.

Then in D:\Progr

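- A summary of commonly used JavaScript validation scripts

eksliang

JavaScript, javaScript, form validation

When reposting, please cite the source: http://eksliang.iteye.com/blog/2098985

The validation scripts below are a summary from my past few years of development. I'm releasing them today as a way of sharing, and I still use them in my current projects. They include date validation and comparison, non-empty validation, ID card number validation, numeric validation, email validation, phone number validation, and more.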

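- Microsoft BI (4)

18289753290

Microsoft BI, SSIS

1)

Q: How do you view the output of a component in SSIS?

A: MessageBox.Show(Dts.Variables["v_lastTimestamp"].Value.ToString());

This is a variable we defined in the package.

2) When joining to the destination table, if the relationship is one-to-many, be sure to choose the unique key as the join field.

3)

Q: In SSIS, if data from multiple data sources is inserted into one destination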

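- Periodically splitting a large table to back up its data

酷的飞上天空

large data volumes

At work I ran into a database table with a fairly large amount of data; it is a log table. Normally it is never queried, but without splitting it the data piles up, and even a simple SQL statement takes several minutes to run.

Splitting tools: Linux shell plus the management commands that ship with MySQL

How it works: replace the original table with a table that has the same structure.

The Linux shell script is as follows:

=======================start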

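- Describing the essence, and teaching according to aptitude

永夜-极光

reflections, essays

Whatever I run into, I instinctively want to explore its essence and find the most vivid way to describe it.

I firmly believe that among all the descriptions and explanations of a thing, there must be one that is the most vivid, the closest to its essence, and the easiest for people to understand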

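- So lost...

随便小屋

essay

I'm a first-year graduate student this year, in what is currently the most popular major (computer science). I went to a third-tier school for my bachelor's degree, so to get a better springboard I joined the army of graduate entrance exam takers, and I was very lucky to get into graduate school (I won't name the school, for fear of damaging its reputation).

First, my background: my undergraduate major was software engineering. The main thing we studied was software development, almost no different from computer science. Because the school itself was third-tier, the teachers simply led students through some material and then let them graduate. My understanding of specialized topics is very shallow. Take the languages I studied, for example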

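- Intent and applicability of the 23 design patterns

aijuans

design patterns

Factory Method. Intent: Define an interface for creating an object, but let subclasses decide which class to instantiate. Factory Method lets a class defer instantiation to subclasses. Applicability: when a class can't anticipate the class of objects it must create; when a class wants its subclasses to specify the objects it creates; when classes delegate responsibility to one of several helper subclasses, and you want to localize the knowledge of which helper subclass is the delegate.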
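A minimal Python sketch of the Factory Method intent described above. All class names here (`Document`, `Application`, and their subclasses) are hypothetical and chosen only to illustrate the pattern: the base class calls a creation method without knowing which concrete class it will get, and each subclass decides.

```python
from abc import ABC, abstractmethod


class Document(ABC):
    """Product interface."""

    @abstractmethod
    def render(self) -> str:
        ...


class TextDocument(Document):
    def render(self) -> str:
        return "plain text"


class HtmlDocument(Document):
    def render(self) -> str:
        return "<p>html</p>"


class Application(ABC):
    """Creator: works with documents without knowing their concrete class."""

    @abstractmethod
    def create_document(self) -> Document:
        """The factory method; subclasses choose the class to instantiate."""

    def open_document(self) -> str:
        # Instantiation is deferred to whichever subclass is in use.
        return self.create_document().render()


class TextApplication(Application):
    def create_document(self) -> Document:
        return TextDocument()


class HtmlApplication(Application):
    def create_document(self) -> Document:
        return HtmlDocument()
```

`open_document` never names a concrete document class, so adding a new document type means adding one creator subclass rather than editing the base class.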

Abstr

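- synchronized and volatile in Java

aoyouzi

java, volatile, synchronized

Any discussion of thread synchronization in Java has to mention two keywords, synchronized and volatile. And talking about these two keywords leads to the JVM memory model. JVM memory is divided into main memory and working memory. Main memory is shared by all threads, while working memory is each thread's own working memory; it holds copies of some main-memory variables, and updates to these variables happen directly in working memo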

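- JS array operations and the this keyword

百合不是茶

js, array operations, this keyword

JS array operations;

I. Creating arrays:

1. Creating an array

var array = new Array(); // create an array

var array = new Array([size]); // create an array with a specified length; note that this is the length, not an upper bound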

var arrayObj = new Array([element0[, element1[, ...[, elementN]]]

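- Someone else's reflections on an Alibaba interview

bijian1013

interview, sharing, work reflections, Alibaba interview

Original post: http://greemranqq.iteye.com/blog/2007170

I have always worked on enterprise systems. Although I have kept studying technology on my own, I still felt something was lacking, so I planned to spend a few months digging deeper into Internet technologies and some fundamentals. This turned out to be rather unexpected and a bit sudden, so let me share my impressions.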

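- Itest, Taobao's testing framework

Bill_chen

spring, maven, framework, unit testing, JUnit

The Itest testing framework is a unit testing framework developed by TaoBao's testing department. It is built around JUnit 4

and combines mainstream testing frameworks such as DbUnit and Unitils; it is fairly easy to use.

I recently used it in a project. Details on using itest are as follows:

1. Add the itest framework in Maven:

<dependency>

<groupId>com.taobao.test</groupId>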

- [Java Multithreading 2] Solving the producer-consumer problem with multiple conditions

bit1129

java, multithreading

package com.tom;

import java.util.LinkedList;

import java.util.Queue;

import java.util.concurrent.ThreadLocalRandom;

import java.util.concurrent.locks.Condition;

import java.util.concurrent.loc

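- Converting Chinese characters to pinyin with pinyin4j

白糖_

pinyin4j

I once needed to convert Chinese characters to pinyin in a project and found the pinyin4j toolkit online. It is very useful. Without further ado, here is the code: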

import java.util.HashSet;

import java.util.Set;

import net.sourceforge.pinyin4j.PinyinHelper;

import net.sourceforge.pinyin

- org.hibernate.TransactionException: JDBC begin failed: solution

bozch

ssh, database, exception, DBCP

org.hibernate.TransactionException: JDBC begin failed: at org.hibernate.transaction.JDBCTransaction.begin(JDBCTransaction.java:68) at org.hibernate.impl.SessionImp

- java-Disjoint-set (union-find): merging multiple sets into sets that do not intersect

bylijinnan

java

import java.util.ArrayList;

import java.util.Arrays;

import java.util.HashMap;

import java.util.HashSet;

import java.util.Iterator;

import java.util.List;

import java.util.Map;

import java.ut
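Only the import lines of the Java program survive in this listing, so the following Python sketch illustrates the technique named in the title rather than the author's code. It uses a disjoint-set (union-find) structure with path halving to merge any input sets that share an element, so the resulting sets have no elements in common. The function name `merge_overlapping` is chosen for this example.

```python
def merge_overlapping(sets):
    """Merge sets that share elements until all results are pairwise disjoint."""
    parent = {}

    def find(x):
        parent.setdefault(x, x)
        while parent[x] != x:
            parent[x] = parent[parent[x]]  # path halving keeps trees shallow
            x = parent[x]
        return x

    def union(a, b):
        root_a, root_b = find(a), find(b)
        if root_a != root_b:
            parent[root_b] = root_a

    for group in sets:
        items = list(group)
        for item in items:
            find(item)  # register every element, including singletons
        for item in items[1:]:
            union(items[0], item)  # tie all members of a set together

    merged = {}
    for x in parent:
        merged.setdefault(find(x), set()).add(x)
    return list(merged.values())


groups = merge_overlapping([
    {"aaa", "bbb", "ccc"},
    {"bbb", "ddd"},
    {"eee", "fff"},
    {"ggg"},
    {"ddd", "hhh"},
])
```

Here the first, second, and fifth sets are chained together through `bbb` and `ddd`, so they collapse into a single set of five elements, while the other two sets stay as they are.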

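- Garbled output from Java PrintWriter

chenbowen00

java

A small program that reads and writes files produced garbled characters in the file written by PrintWriter. The fix is mainly to use the same encoding for the input and output streams.

Reading a file:

BufferedReader

Reads text from a character-input stream, buffering characters so as to provide efficient reading of characters, arrays, and lines.

The buffer size may be specified, or the default size may be used. The default is large enough for most purposes.

In general, each read request made of a Reader causes a corresponding read request to be made of the underlying character or byte stream.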

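- [Weather and Climate] Extreme climate environments

comsci

environment

What if the space environment changes abnormally... an alien civilization has not shown itself, but is merely using some kind of weather weapon to attack Earth's climate system and incite wars between Earth's nations, and after a period of preparation... weakens the overall strength of Earth's civilization as much as possible before invading......

What defensive preparations, then, should the nations of Earth make?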

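- Using ORDER BY together with UNION in Oracle

daizj

UNION, oracle, order by

When using a UNION operation, the sort clause must be placed at the very end to be correct, as follows:

ORDER BY can only be used in the last subquery of the UNION, and this ORDER BY applies to the entire result set after the union. So:

If the subqueries of the UNION have different column names, for example

SQL code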

select supplier_id, supplier_name

from suppliers

UNI

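- Unit testing read/write splitting in the zeus persistence layer

deng520159

unit testing

This article covers unit testing of zeus read/write splitting; database and table sharding is only one step away. Here is the code: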

1.ZeusMasterSlaveTest.java

package com.dengliang.zeus.webdemo.test;

import java.util.ArrayList;

import java.util.List;

import org.junit.Assert;

import org.j

- Yii: truncating strings (UTF-8) with a component

dcj3sjt126com

yii

1. Put Helper.php in the protected\components folder.

2. Usage:

Helper::truncate_utf8_string($content,20,false); // do not show an ellipsis
Helper::truncate_utf8_string($content,20); // show an ellipsis

- Installing memcache and the PHP extension

dcj3sjt126com

PHP

Install memcache: tar zxvf memcache-2.2.5.tgz cd memcache-2.2.5/ /usr/local/php/bin/phpize (?) ./configure --with-php-confi

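- Handling dates with JsonObject

feifeilinlin521

java, json, JsonOjbect, JsonArray, JSONException

I wrote this article because I hit an exception when JSON converted a date: net.sf.json.JSONException: java.lang.reflect.InvocationTargetException. The cause is that when you use a Map to receive java.sql.Date values returned from the database, the JSON conversion fails. Without further ado, here is the code.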

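- Ehcache (06): Listeners

234390216

listeners, listener, ehcache

Listeners

Ehcache has two kinds of listeners: CacheManagerEventListener, which listens to a CacheManager, and CacheEventListener, which listens to a Cache. In Ehcache, listeners are produced and take effect through their corresponding listener factories. Below we introduce these two kinds of listeners.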

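- Fixing the bug where Activiti's built-in designer won't open in Chrome 34

jackyrong

Activiti

In Activiti Modeler, the designer cannot be opened in Chrome 34, although other browsers work.

This has been confirmed as a bug; see

http://forums.activiti.org/content/activiti-modeler-doesnt-work-chrome-v34

To fix it, find

oryx.debug.js

and add at the very top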

if (!Document.

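- WeChat shared shipping address API: the ultimate fix

laotu5i0

WeChat development

I recently had to integrate WeChat's shared shipping address API and kept failing. I struggled for several days and was at my wits' end; the posts I found online were full of complaints too. I'm sharing the problems I hit and how I solved them in the hope that this can supplement WeChat's API documentation, so that developers who come later can integrate quickly instead of miserably wasting several days, or even a week, as we did. I'll skip the insults and curses; I'm a fairly civil person.

If you found this post, you have already run into all sorts of ed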

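- On talent

netkiller.github.com

work, interview, recruiting, netkiller, talent

On talent

Every month I get many calls from headhunters. Some are fairly professional, but in my view the vast majority fall well short of the name. Having dealt with headhunters a lot, I have naturally come to understand their work, including their methods; overall, the headhunting industry in China is still in its early stages.

In short: "recommend blindly and win on volume."

Current situation

Many people who work in human resources simply do not know how to find talent. Talent cannot find companies and companies cannot find talent, which is an awkward position to be in.

When a company recruits, the department that needs staff usually sets out the hiring requirements, and then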

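- Setting up a CentOS 6 server: table of contents

rensanning

centos

(1) Installing CentOS

ISO (desktop/minimal), Cloud (AWS/Alibaba Cloud), Virtualization (VMWare, VirtualBox)

Details

(2) Common Linux commands

cd, ls, rm, chmod......

Details

(3) Initial environment setup

User management, network settings, security settings......

Details

(4) Resident services (daemons)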

- [Help] mongoDB cannot update the primary key

toknowme

mongodb

Query query = new Query(); query.addCriteria(new Criteria("_id").is(o.getId()));

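- A collection of jQuery plugins for auto-loading when the page scrolls to the bottom

xp9802

jquery

Many social networking sites use infinite scrolling in place of pagination to improve the user experience: when you scroll to the bottom of a list, more content loads automatically without a click. Here are 10 recommended jQuery infinite-scroll plugins:

1. jQuery ScrollPagination

The jQuery ScrollPagination plugin is a jQuery plugin that supports loading data on infinite scroll.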

2. jQuery Screw

S