Practicing the Iceberg Data Lake, Lesson 24: A Detailed Analysis of Iceberg Metadata

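Series table of contents

Practicing the Iceberg Data Lake, Lesson 1: Getting started

Practicing the Iceberg Data Lake, Lesson 2: Iceberg's underlying data format on Hadoop

Practicing the Iceberg Data Lake, Lesson 3: Reading data from Kafka into Iceberg with SQL in the SQL client

Practicing the Iceberg Data Lake, Lesson 4: Reading data from Kafka into Iceberg with SQL in the SQL client (upgraded to Flink 1.12.7)

Practicing the Iceberg Data Lake, Lesson 5: Characteristics of the Hive catalog

Practicing the Iceberg Data Lake, Lesson 6: Fixing failures when writing from Kafka to Iceberg

Practicing the Iceberg Data Lake, Lesson 7: Writing to Iceberg in real time

Practicing the Iceberg Data Lake, Lesson 8: Integrating Hive with Iceberg

Practicing the Iceberg Data Lake, Lesson 9: Compacting small files

Practicing the Iceberg Data Lake, Lesson 10: Deleting snapshots

Practicing the Iceberg Data Lake, Lesson 11: Testing the full workflow for a partitioned table (generating data, creating the table, compaction, deleting snapshots)

Practicing the Iceberg Data Lake, Lesson 12: What is a catalog

Practicing the Iceberg Data Lake, Lesson 13: Metadata many times larger than the data files

Practicing the Iceberg Data Lake, Lesson 14: Metadata compaction (fixing metadata bloat as it grows over time)

Practicing the Iceberg Data Lake, Lesson 15: Installing Spark and integrating it with Iceberg (jersey dependency conflict)

Practicing the Iceberg Data Lake, Lesson 16: Getting to know Iceberg through Spark 3

Practicing the Iceberg Data Lake, Lesson 17: Configuration for running Iceberg with Hadoop 2.7 and Spark 3 on YARN

Practicing the Iceberg Data Lake, Lesson 18: Startup commands for interacting with Iceberg from various clients (common commands)

Practicing the Iceberg Data Lake, Lesson 19: Flink count on Iceberg returns no result

Practicing the Iceberg Data Lake, Lesson 20: Flink + Iceberg CDC scenario (version issues, test failed)

Practicing the Iceberg Data Lake, Lesson 21: Flink 1.13.5 + Iceberg 0.13.1 CDC (INSERT test succeeded, change operations failed)

Practicing the Iceberg Data Lake, Lesson 22: Flink 1.13.5 + Iceberg 0.13.1 CDC (CRUD test succeeded)

Practicing the Iceberg Data Lake, Lesson 23: Restarting flink-sql from a checkpoint

Practicing the Iceberg Data Lake, Lesson 24: A detailed analysis of Iceberg metadata

Practicing the Iceberg Data Lake, Lesson 25: The effect of inserts, deletes, and updates with Flink SQL running in the background

Practicing the Iceberg Data Lake, Lesson 26: How to configure checkpoints

Practicing the Iceberg Data Lake, Lesson 27: Flink CDC failure-restart test: resuming from the last checkpoint

Practicing the Iceberg Data Lake, Lesson 28: Deploying packages missing from public repositories to a local repository

Practicing the Iceberg Data Lake, Lesson 29: Getting a Flink jobId cleanly and efficiently

Practicing the Iceberg Data Lake, Lesson 30: mysql->iceberg, time zone issues with different clients

Practicing the Iceberg Data Lake, Lesson 31: Using the flink-streaming-platform-web tool from GitHub to manage Flink jobs and test CDC restart scenarios

Practicing the Iceberg Data Lake, Lesson 32: Persisting DDL statements through the Hive catalog

Practicing the Iceberg Data Lake, Lesson 33: Upgrading Flink to 1.14, whose built-in functions support JSON

Practicing the Iceberg Data Lake, Lesson 34: Unified streaming and batch architecture on the Iceberg data lake: streaming architecture test

Practicing the Iceberg Data Lake, Lesson 35: Unified streaming and batch architecture on the Iceberg data lake: testing whether incremental reads read all data or only the increment

Practicing the Iceberg Data Lake, Lesson 36: Unified streaming and batch architecture on the Iceberg data lake: testing whether "update mysql select from iceberg" performs incremental updates

Practicing the Iceberg Data Lake, Lesson 37: Testing ENFORCED vs. NOT ENFORCED for Iceberg tables written from Kafka

Practicing the Iceberg Data Lake, Lesson 38: Data governance with Spark SQL procedures (compacting small files, cleaning up snapshots)

Practicing the Iceberg Data Lake, Lesson 39: Analyzing data file changes before and after snapshot cleanup

Practicing the Iceberg Data Lake, Lesson 40: Iceberg operations (compacting files, compacting metadata, cleaning up old snapshots)

Practicing the Iceberg Data Lake: more contents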

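Contents

- Series table of contents

- Preface

- I. Overview of metadata management

- 1. Every write produces a snapshot

- 2. How concurrent reads and writes work

- 3. Precise, comprehensive metadata

- II. Testing how CRUD operations are recorded in Iceberg

- III. Effect on HDFS

- 1. create table

- 2. insert

- 3. Insert again

- 4. update

- IV. Metadata analysis

- 1. Start simple: version-hint.text

- 2. Analyzing the metadata.json files

- 2.1 v1.metadata.json (create table)

- 2.2 v2.metadata.json (insert)

- 2.3 v3.metadata.json (insert)

- 2.4 v4.metadata.json (update)

- 2.5 Summary of metadata.json characteristics

- 3. Analyzing the snapshot files

- 3.1 Analyzing the first snapshot

- 3.2 Analyzing the second snapshot

- 3.3 Analyzing the third snapshot (update)

- IV. Illustrated data changes

- Summary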

Preface

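I. Overview of metadata management

1. Every write produces a snapshot

Every write produces a new snapshot, and each snapshot contains the list of files that make up the table at that point.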

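2. How concurrent reads and writes work

Iceberg uses MVCC (Multi-Version Concurrency Control): by default a read uses the latest snapshot, and every write produces a new snapshot instead of modifying an existing one, so readers and writers do not interfere with each other.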
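The snapshot-plus-MVCC idea can be illustrated with a toy model. This is a minimal sketch, not Iceberg's code: `ToyTable`, `Snapshot`, and `commit` are hypothetical names, and the lock stands in for whatever atomic swap the catalog provides (for the Hadoop catalog used in this article, as far as I understand, that is an atomic rename of the next vN.metadata.json into place). Readers hold on to an immutable snapshot, and a writer whose base snapshot has gone stale gets its commit rejected and must retry.

```python
from dataclasses import dataclass
from typing import Optional, Tuple
import threading


@dataclass(frozen=True)
class Snapshot:
    """An immutable version of the table: its id, its parent, and its full file list."""
    snapshot_id: int
    parent_id: Optional[int]
    files: Tuple[str, ...]


class ToyTable:
    """Hypothetical table whose only mutable state is the current-snapshot pointer."""

    def __init__(self) -> None:
        self._lock = threading.Lock()  # stands in for the catalog's atomic swap
        self._next_id = 1
        self.current: Optional[Snapshot] = None

    def read(self) -> Optional[Snapshot]:
        # A reader pins whatever snapshot is current; later commits never modify it.
        return self.current

    def commit(self, base: Optional[Snapshot], added: Tuple[str, ...]) -> Snapshot:
        """Build a new snapshot on top of `base`; fail if someone else committed first."""
        with self._lock:
            if self.current is not base:
                raise RuntimeError("base snapshot is stale; re-read and retry")
            files = (base.files if base else ()) + added
            parent = base.snapshot_id if base else None
            new = Snapshot(self._next_id, parent, files)
            self._next_id += 1
            self.current = new  # swapping the pointer is the commit
            return new


if __name__ == "__main__":
    table = ToyTable()
    s1 = table.commit(None, ("a.parquet",))
    pinned = table.read()                  # a long-running reader sees s1
    table.commit(s1, ("b.parquet",))       # meanwhile a writer commits s2
    print(pinned.files)                    # ('a.parquet',)
    print(table.read().files)              # ('a.parquet', 'b.parquet')
```

The reader keeps seeing `('a.parquet',)` even after the second commit, which is the "reads and writes do not interfere" property described above.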

3. Precise, comprehensive metadata:

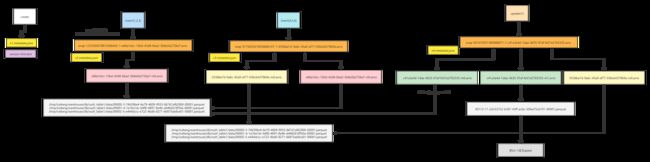

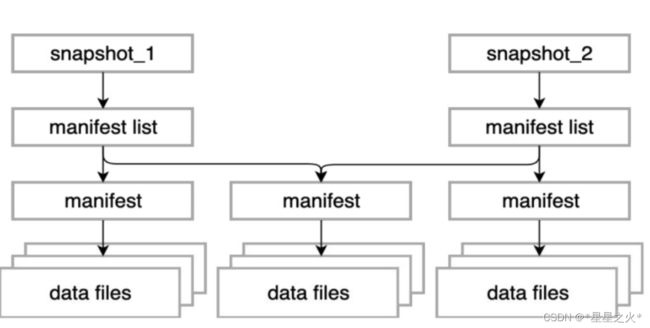

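As the figures show, Iceberg tracks three levels of metadata: snapshots, manifests, and data files. A snapshot references a set of manifests, and each manifest stores a list of data files.

Snapshot list: for each snapshot, the manifest list, the operation that produced it, detailed record and file counts, and even job information (such as spark.app.id), which makes data lineage tracking possible.

Manifest list: the partition information for each manifest.

File list: column-level statistics and partition information for each data file.

With statistics this complete, the query engine can push filter predicates down and quickly skip files that cannot match, which speeds up queries. Once you understand how Iceberg works, you can write data into an Iceberg table sorted according to your query patterns and the value distribution of the columns, and get very good file pruning.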
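To make the pruning idea concrete, here is a minimal sketch of min/max file skipping. It assumes each file carries a lower and an upper bound for the `id` column, which is what the `lower_bounds`/`upper_bounds` entries in the manifests later in this article hold. `FileStats`, `prune_eq`, and `prune_range` are hypothetical names, and real engines evaluate much richer predicates.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class FileStats:
    path: str
    lower: int  # lower bound of `id` in this file
    upper: int  # upper bound of `id` in this file


def prune_eq(files: List[FileStats], value: int) -> List[str]:
    """Files that might contain rows with id == value."""
    return [f.path for f in files if f.lower <= value <= f.upper]


def prune_range(files: List[FileStats], lo: int, hi: int) -> List[str]:
    """Files whose [lower, upper] range overlaps the query range [lo, hi]."""
    return [f.path for f in files if f.upper >= lo and f.lower <= hi]


if __name__ == "__main__":
    # Sorted writes: each file covers a narrow id range, so most files are skipped.
    sorted_files = [FileStats(f"file-{i}.parquet", i, i) for i in range(1, 7)]
    print(prune_eq(sorted_files, 4))      # ['file-4.parquet']
    # Unsorted writes: every file spans the whole range, so nothing can be skipped.
    unsorted = [FileStats("x.parquet", 1, 6), FileStats("y.parquet", 1, 6)]
    print(prune_eq(unsorted, 4))          # ['x.parquet', 'y.parquet']
```

The second case shows why writing data sorted on frequently filtered columns pays off: wide, overlapping ranges leave the engine nothing to skip.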

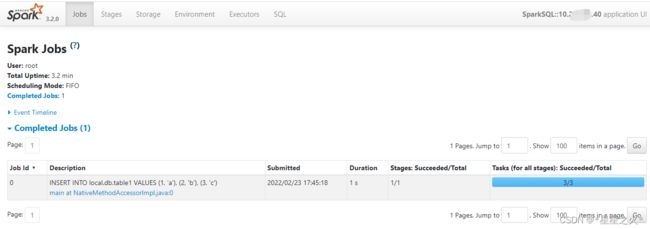

II. Testing how CRUD operations are recorded in Iceberg

CREATE TABLE local.db.xxzh_table1 (id bigint, data string) USING iceberg;

INSERT INTO local.db.xxzh_table1 VALUES (1, 'a'), (2, 'b'), (3, 'c');

INSERT INTO local.db.xxzh_table1 VALUES (4, 'd'), (5, 'e'), (6, 'f');

UPDATE local.db.xxzh_table1 SET data='apple' WHERE id=1;

DELETE FROM local.db.xxzh_table1;

The statements are run in spark-sql, started with the Iceberg 0.13.1 Spark runtime, the Iceberg SQL extensions, and a Hadoop catalog named local whose warehouse is /tmp/iceberg/warehouse:

[root@hadoop103 spark-3.2.0-bin-hadoop2.7]# bin/spark-sql --packages org.apache.iceberg:iceberg-spark-runtime-3.2_2.12:0.13.1 --conf spark.sql.extensions=org.apache.iceberg.spark.extensions.IcebergSparkSessionExtensions --conf spark.sql.catalog.spark_catalog=org.apache.iceberg.spark.SparkSessionCatalog --conf spark.sql.catalog.spark_catalog.type=hive --conf spark.sql.catalog.local=org.apache.iceberg.spark.SparkCatalog --conf spark.sql.catalog.local.type=hadoop --conf spark.sql.catalog.local.warehouse=/tmp/iceberg/warehouse

III. Effect on HDFS

1. create table

Run:

CREATE TABLE local.db.xxzh_table1 (id bigint, data string) USING iceberg;

Check the files on HDFS:

[root@hadoop103 spark-3.2.0-bin-hadoop2.7]# hadoop fs -ls -R /tmp/iceberg/warehouse/db/xxzh_table1

drwxrwx--- - root supergroup 0 2022-02-23 17:44 /tmp/iceberg/warehouse/db/xxzh_table1/metadata

-rw-r--r-- 2 root supergroup 1169 2022-02-23 17:44 /tmp/iceberg/warehouse/db/xxzh_table1/metadata/v1.metadata.json

-rw-r--r-- 2 root supergroup 1 2022-02-23 17:44 /tmp/iceberg/warehouse/db/xxzh_table1/metadata/version-hint.text

2. insert

INSERT INTO local.db.xxzh_table1 VALUES (1, 'a'), (2, 'b'), (3, 'c');

Check the files:

[root@hadoop103 spark-3.2.0-bin-hadoop2.7]# hadoop fs -ls -R /tmp/iceberg/warehouse/db/xxzh_table1

drwxrwx--- - root supergroup 0 2022-02-23 17:45 /tmp/iceberg/warehouse/db/xxzh_table1/data

-rw-r--r-- 2 root supergroup 643 2022-02-23 17:45 /tmp/iceberg/warehouse/db/xxzh_table1/data/00000-0-59d54827-c03f-47f6-a6a1-fc74e6861dcf-00001.parquet

-rw-r--r-- 2 root supergroup 643 2022-02-23 17:45 /tmp/iceberg/warehouse/db/xxzh_table1/data/00001-1-3445ca12-3b21-4795-8fd7-5124ecc16c85-00001.parquet

-rw-r--r-- 2 root supergroup 643 2022-02-23 17:45 /tmp/iceberg/warehouse/db/xxzh_table1/data/00002-2-6659369d-638d-432a-83e8-0da1b0abce4f-00001.parquet

drwxrwx--- - root supergroup 0 2022-02-23 17:45 /tmp/iceberg/warehouse/db/xxzh_table1/metadata

-rw-r--r-- 2 root supergroup 5860 2022-02-23 17:45 /tmp/iceberg/warehouse/db/xxzh_table1/metadata/e66a1dcc-15bd-43d8-bba2-5b8a5b2726a7-m0.avro

-rw-r--r-- 2 root supergroup 3754 2022-02-23 17:45 /tmp/iceberg/warehouse/db/xxzh_table1/metadata/snap-1233304278810386445-1-e66a1dcc-15bd-43d8-bba2-5b8a5b2726a7.avro

-rw-r--r-- 2 root supergroup 1169 2022-02-23 17:44 /tmp/iceberg/warehouse/db/xxzh_table1/metadata/v1.metadata.json

-rw-r--r-- 2 root supergroup 2073 2022-02-23 17:45 /tmp/iceberg/warehouse/db/xxzh_table1/metadata/v2.metadata.json

-rw-r--r-- 2 root supergroup 1 2022-02-23 17:45 /tmp/iceberg/warehouse/db/xxzh_table1/metadata/version-hint.text

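Three data files were generated, one per row. My guess is that the Spark write ran with a parallelism of 3 (the file names start with 00000, 00001, and 00002).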
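The parallelism guess can be checked against the file names. The sketch below assumes the names follow the pattern `<partitionId>-<taskId>-<uuid>-<fileCount>.parquet`. Every data file in this article's listings fits that pattern, but the pattern is inferred from these names rather than taken from Iceberg documentation, so treat it as an assumption. `parse_data_file_name` is a hypothetical helper.

```python
import re
from typing import NamedTuple

# Assumed layout: 5-digit Spark partition id, task id, a UUID, 5-digit file counter.
DATA_FILE_RE = re.compile(
    r"^(?P<partition>\d{5})-(?P<task>\d+)-(?P<uuid>.+)-(?P<count>\d{5})\.(?P<ext>\w+)$"
)


class DataFileName(NamedTuple):
    partition_id: int
    task_id: int      # in the listings it keeps growing across jobs: 0-2, then 3-5
    uuid: str
    file_count: int
    extension: str


def parse_data_file_name(name: str) -> DataFileName:
    m = DATA_FILE_RE.match(name)
    if m is None:
        raise ValueError(f"unexpected data file name: {name}")
    return DataFileName(int(m["partition"]), int(m["task"]), m["uuid"],
                        int(m["count"]), m["ext"])


if __name__ == "__main__":
    first_insert = [
        "00000-0-59d54827-c03f-47f6-a6a1-fc74e6861dcf-00001.parquet",
        "00001-1-3445ca12-3b21-4795-8fd7-5124ecc16c85-00001.parquet",
        "00002-2-6659369d-638d-432a-83e8-0da1b0abce4f-00001.parquet",
    ]
    # Three distinct partition ids suggest the write ran as three tasks.
    print(sorted({parse_data_file_name(n).partition_id for n in first_insert}))  # [0, 1, 2]
```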

3. Insert again

INSERT INTO local.db.xxzh_table1 VALUES (4, 'd'), (5, 'e'), (6, 'f');

Check the files:

[root@hadoop103 spark-3.2.0-bin-hadoop2.7]# hadoop fs -ls -R /tmp/iceberg/warehouse/db/xxzh_table1

drwxrwx--- - root supergroup 0 2022-02-23 17:48 /tmp/iceberg/warehouse/db/xxzh_table1/data

-rw-r--r-- 2 root supergroup 643 2022-02-23 17:45 /tmp/iceberg/warehouse/db/xxzh_table1/data/00000-0-59d54827-c03f-47f6-a6a1-fc74e6861dcf-00001.parquet

-rw-r--r-- 2 root supergroup 642 2022-02-23 17:48 /tmp/iceberg/warehouse/db/xxzh_table1/data/00000-3-746396e4-6e79-4609-9933-867d1af62900-00001.parquet

-rw-r--r-- 2 root supergroup 643 2022-02-23 17:45 /tmp/iceberg/warehouse/db/xxzh_table1/data/00001-1-3445ca12-3b21-4795-8fd7-5124ecc16c85-00001.parquet

-rw-r--r-- 2 root supergroup 643 2022-02-23 17:48 /tmp/iceberg/warehouse/db/xxzh_table1/data/00001-4-1a16a1dc-b8f8-4897-8e4b-d4482618f50a-00001.parquet

-rw-r--r-- 2 root supergroup 643 2022-02-23 17:45 /tmp/iceberg/warehouse/db/xxzh_table1/data/00002-2-6659369d-638d-432a-83e8-0da1b0abce4f-00001.parquet

-rw-r--r-- 2 root supergroup 643 2022-02-23 17:48 /tmp/iceberg/warehouse/db/xxzh_table1/data/00002-5-e444dccc-e722-4bd6-8271-66875ab8ce01-00001.parquet

drwxrwx--- - root supergroup 0 2022-02-23 17:48 /tmp/iceberg/warehouse/db/xxzh_table1/metadata

-rw-r--r-- 2 root supergroup 5869 2022-02-23 17:48 /tmp/iceberg/warehouse/db/xxzh_table1/metadata/0336be7d-9a6c-45a9-af77-036cb4379b9a-m0.avro

-rw-r--r-- 2 root supergroup 5860 2022-02-23 17:45 /tmp/iceberg/warehouse/db/xxzh_table1/metadata/e66a1dcc-15bd-43d8-bba2-5b8a5b2726a7-m0.avro

-rw-r--r-- 2 root supergroup 3754 2022-02-23 17:45 /tmp/iceberg/warehouse/db/xxzh_table1/metadata/snap-1233304278810386445-1-e66a1dcc-15bd-43d8-bba2-5b8a5b2726a7.avro

-rw-r--r-- 2 root supergroup 3827 2022-02-23 17:48 /tmp/iceberg/warehouse/db/xxzh_table1/metadata/snap-9179203419030846107-1-0336be7d-9a6c-45a9-af77-036cb4379b9a.avro

-rw-r--r-- 2 root supergroup 1169 2022-02-23 17:44 /tmp/iceberg/warehouse/db/xxzh_table1/metadata/v1.metadata.json

-rw-r--r-- 2 root supergroup 2073 2022-02-23 17:45 /tmp/iceberg/warehouse/db/xxzh_table1/metadata/v2.metadata.json

-rw-r--r-- 2 root supergroup 3011 2022-02-23 17:48 /tmp/iceberg/warehouse/db/xxzh_table1/metadata/v3.metadata.json

-rw-r--r-- 2 root supergroup 1 2022-02-23 17:48 /tmp/iceberg/warehouse/db/xxzh_table1/metadata/version-hint.text

4. update

spark-sql (default)> select * from local.db.xxzh_table1;

id data

1 a

2 b

3 c

4 d

5 e

6 f

Time taken: 0.159 seconds, Fetched 6 row(s)

spark-sql (default)> update local.db.xxzh_table1 set data='apple' where id=1;

Response code

Time taken: 2.394 seconds

spark-sql (default)> select * from local.db.xxzh_table1;

id data

1 apple

4 d

5 e

6 f

2 b

3 c

Time taken: 0.165 seconds, Fetched 6 row(s)

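Judging by the timestamps, the entries dated 17:50 are the result of the update just executed: one new data file, two new manifests (-m0 and -m1), a new snap-*.avro manifest list, and v4.metadata.json.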

[root@hadoop103 spark-3.2.0-bin-hadoop2.7]# hadoop fs -ls -R /tmp/iceberg/warehouse/db/xxzh_table1

drwxrwx--- - root supergroup 0 2022-02-23 17:50 /tmp/iceberg/warehouse/db/xxzh_table1/data

-rw-r--r-- 2 root supergroup 643 2022-02-23 17:45 /tmp/iceberg/warehouse/db/xxzh_table1/data/00000-0-59d54827-c03f-47f6-a6a1-fc74e6861dcf-00001.parquet

-rw-r--r-- 2 root supergroup 642 2022-02-23 17:48 /tmp/iceberg/warehouse/db/xxzh_table1/data/00000-3-746396e4-6e79-4609-9933-867d1af62900-00001.parquet

-rw-r--r-- 2 root supergroup 643 2022-02-23 17:45 /tmp/iceberg/warehouse/db/xxzh_table1/data/00001-1-3445ca12-3b21-4795-8fd7-5124ecc16c85-00001.parquet

-rw-r--r-- 2 root supergroup 643 2022-02-23 17:48 /tmp/iceberg/warehouse/db/xxzh_table1/data/00001-4-1a16a1dc-b8f8-4897-8e4b-d4482618f50a-00001.parquet

-rw-r--r-- 2 root supergroup 643 2022-02-23 17:45 /tmp/iceberg/warehouse/db/xxzh_table1/data/00002-2-6659369d-638d-432a-83e8-0da1b0abce4f-00001.parquet

-rw-r--r-- 2 root supergroup 643 2022-02-23 17:48 /tmp/iceberg/warehouse/db/xxzh_table1/data/00002-5-e444dccc-e722-4bd6-8271-66875ab8ce01-00001.parquet

-rw-r--r-- 2 root supergroup 686 2022-02-23 17:50 /tmp/iceberg/warehouse/db/xxzh_table1/data/00112-11-2d333752-b387-43ff-ac6e-30fea75cb791-00001.parquet

drwxrwx--- - root supergroup 0 2022-02-23 17:50 /tmp/iceberg/warehouse/db/xxzh_table1/metadata

-rw-r--r-- 2 root supergroup 5869 2022-02-23 17:48 /tmp/iceberg/warehouse/db/xxzh_table1/metadata/0336be7d-9a6c-45a9-af77-036cb4379b9a-m0.avro

-rw-r--r-- 2 root supergroup 5876 2022-02-23 17:50 /tmp/iceberg/warehouse/db/xxzh_table1/metadata/c41a3e4d-1dae-4635-97af-fe57e2765335-m0.avro

-rw-r--r-- 2 root supergroup 5780 2022-02-23 17:50 /tmp/iceberg/warehouse/db/xxzh_table1/metadata/c41a3e4d-1dae-4635-97af-fe57e2765335-m1.avro

-rw-r--r-- 2 root supergroup 5860 2022-02-23 17:45 /tmp/iceberg/warehouse/db/xxzh_table1/metadata/e66a1dcc-15bd-43d8-bba2-5b8a5b2726a7-m0.avro

-rw-r--r-- 2 root supergroup 3754 2022-02-23 17:45 /tmp/iceberg/warehouse/db/xxzh_table1/metadata/snap-1233304278810386445-1-e66a1dcc-15bd-43d8-bba2-5b8a5b2726a7.avro

-rw-r--r-- 2 root supergroup 3827 2022-02-23 17:48 /tmp/iceberg/warehouse/db/xxzh_table1/metadata/snap-9179203419030846107-1-0336be7d-9a6c-45a9-af77-036cb4379b9a.avro

-rw-r--r-- 2 root supergroup 3848 2022-02-23 17:50 /tmp/iceberg/warehouse/db/xxzh_table1/metadata/snap-941010551389488871-1-c41a3e4d-1dae-4635-97af-fe57e2765335.avro

-rw-r--r-- 2 root supergroup 1169 2022-02-23 17:44 /tmp/iceberg/warehouse/db/xxzh_table1/metadata/v1.metadata.json

-rw-r--r-- 2 root supergroup 2073 2022-02-23 17:45 /tmp/iceberg/warehouse/db/xxzh_table1/metadata/v2.metadata.json

-rw-r--r-- 2 root supergroup 3011 2022-02-23 17:48 /tmp/iceberg/warehouse/db/xxzh_table1/metadata/v3.metadata.json

-rw-r--r-- 2 root supergroup 4048 2022-02-23 17:50 /tmp/iceberg/warehouse/db/xxzh_table1/metadata/v4.metadata.json

-rw-r--r-- 2 root supergroup 1 2022-02-23 17:50 /tmp/iceberg/warehouse/db/xxzh_table1/metadata/version-hint.text

IV. Metadata analysis

1. Start simple: version-hint.text

The modification time of version-hint.text changes with every commit.

[root@hadoop103 spark-3.2.0-bin-hadoop2.7]# hadoop fs -cat /tmp/iceberg/warehouse/db/xxzh_table1/metadata/version-hint.text

4[root@hadoop103 spark-3.2.0-bin-hadoop2.7]#

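As the name suggests, version-hint.text holds the current metadata version number: 4 means the current metadata file is v4.metadata.json. The file has no trailing newline, which is why the shell prompt is printed right after the 4.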
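Resolving the current metadata file of a Hadoop-catalog table can be sketched as follows. The sketch assumes the table directory is on a local filesystem (for example after `hadoop fs -get`, as done later in this article) and relies only on the hint file. As far as I know, Iceberg's own Hadoop catalog has extra fallbacks for a missing or stale hint, and this sketch does not reproduce them. `current_metadata_file` is a hypothetical helper.

```python
import tempfile
from pathlib import Path


def current_metadata_file(table_dir: str) -> Path:
    """Return <table>/metadata/v<N>.metadata.json, where N comes from version-hint.text."""
    metadata_dir = Path(table_dir) / "metadata"
    # The hint holds just the version number; strip() also copes with a trailing newline.
    version = int((metadata_dir / "version-hint.text").read_text().strip())
    path = metadata_dir / f"v{version}.metadata.json"
    if not path.exists():
        raise FileNotFoundError(path)
    return path


if __name__ == "__main__":
    # Recreate the layout from this article in a temporary directory.
    with tempfile.TemporaryDirectory() as table_dir:
        metadata_dir = Path(table_dir) / "metadata"
        metadata_dir.mkdir()
        (metadata_dir / "version-hint.text").write_text("4")  # no trailing newline
        for v in range(1, 5):
            (metadata_dir / f"v{v}.metadata.json").write_text("{}")
        print(current_metadata_file(table_dir).name)  # v4.metadata.json
```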

2. Analyzing the metadata.json files

2.1 v1.metadata.json (create table)

The metadata written when the table is created:

[root@hadoop103 spark-3.2.0-bin-hadoop2.7]# hadoop fs -text /tmp/iceberg/warehouse/db/xxzh_table1/metadata/v1.metadata.json

{

"format-version" : 1,

"table-uuid" : "756090df-d197-4881-9d8d-aea0b9354077",

"location" : "/tmp/iceberg/warehouse/db/xxzh_table1",

"last-updated-ms" : 1645609485795,

"last-column-id" : 2,

"schema" : {

"type" : "struct",

"schema-id" : 0,

"fields" : [ {

"id" : 1,

"name" : "id",

"required" : false,

"type" : "long"

}, {

"id" : 2,

"name" : "data",

"required" : false,

"type" : "string"

} ]

},

"current-schema-id" : 0,

"schemas" : [ {

"type" : "struct",

"schema-id" : 0,

"fields" : [ {

"id" : 1,

"name" : "id",

"required" : false,

"type" : "long"

}, {

"id" : 2,

"name" : "data",

"required" : false,

"type" : "string"

} ]

} ],

"partition-spec" : [ ],

"default-spec-id" : 0,

"partition-specs" : [ {

"spec-id" : 0,

"fields" : [ ]

} ],

"last-partition-id" : 999,

"default-sort-order-id" : 0,

"sort-orders" : [ {

"order-id" : 0,

"fields" : [ ]

} ],

"properties" : {

"owner" : "root"

},

"current-snapshot-id" : -1,

"snapshots" : [ ],

"snapshot-log" : [ ],

"metadata-log" : [ ]

}

2.2 v2.metadata.json (insert)

This file is written by the first INSERT. The snapshots list (with its manifest-list), snapshot-log, and metadata-log are now all populated.

[root@hadoop103 spark-3.2.0-bin-hadoop2.7]# hadoop fs -text /tmp/iceberg/warehouse/db/xxzh_table1/metadata/v2.metadata.json

{

"format-version" : 1,

"table-uuid" : "756090df-d197-4881-9d8d-aea0b9354077",

"location" : "/tmp/iceberg/warehouse/db/xxzh_table1",

"last-updated-ms" : 1645609519412,

"last-column-id" : 2,

"schema" : {

"type" : "struct",

"schema-id" : 0,

"fields" : [ {

"id" : 1,

"name" : "id",

"required" : false,

"type" : "long"

}, {

"id" : 2,

"name" : "data",

"required" : false,

"type" : "string"

} ]

},

"current-schema-id" : 0,

"schemas" : [ {

"type" : "struct",

"schema-id" : 0,

"fields" : [ {

"id" : 1,

"name" : "id",

"required" : false,

"type" : "long"

}, {

"id" : 2,

"name" : "data",

"required" : false,

"type" : "string"

} ]

} ],

"partition-spec" : [ ],

"default-spec-id" : 0,

"partition-specs" : [ {

"spec-id" : 0,

"fields" : [ ]

} ],

"last-partition-id" : 999,

"default-sort-order-id" : 0,

"sort-orders" : [ {

"order-id" : 0,

"fields" : [ ]

} ],

"properties" : {

"owner" : "root"

},

"current-snapshot-id" : 1233304278810386445,

"snapshots" : [ {

"snapshot-id" : 1233304278810386445,

"timestamp-ms" : 1645609519412,

"summary" : {

"operation" : "append",

"spark.app.id" : "local-1645609363060",

"added-data-files" : "3",

"added-records" : "3",

"added-files-size" : "1929",

"changed-partition-count" : "1",

"total-records" : "3",

"total-files-size" : "1929",

"total-data-files" : "3",

"total-delete-files" : "0",

"total-position-deletes" : "0",

"total-equality-deletes" : "0"

},

"manifest-list" : "/tmp/iceberg/warehouse/db/xxzh_table1/metadata/snap-1233304278810386445-1-e66a1dcc-15bd-43d8-bba2-5b8a5b2726a7.avro",

"schema-id" : 0

} ],

"snapshot-log" : [ {

"timestamp-ms" : 1645609519412,

"snapshot-id" : 1233304278810386445

} ],

"metadata-log" : [ {

"timestamp-ms" : 1645609485795,

"metadata-file" : "/tmp/iceberg/warehouse/db/xxzh_table1/metadata/v1.metadata.json"

} ]

}
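Following the pointer from the metadata file to the current snapshot and its manifest list can be sketched as below. It works on parsed JSON like the file above, trimmed to the fields it needs. `current_snapshot` is a hypothetical helper. A `current-snapshot-id` of -1, as in v1.metadata.json, means the table has no snapshot yet.

```python
import json
from typing import Optional

# Trimmed copy of v2.metadata.json from above.
V2_METADATA = """
{
  "current-snapshot-id" : 1233304278810386445,
  "snapshots" : [ {
    "snapshot-id" : 1233304278810386445,
    "summary" : { "operation" : "append", "total-records" : "3" },
    "manifest-list" : "/tmp/iceberg/warehouse/db/xxzh_table1/metadata/snap-1233304278810386445-1-e66a1dcc-15bd-43d8-bba2-5b8a5b2726a7.avro"
  } ]
}
"""


def current_snapshot(metadata: dict) -> Optional[dict]:
    """Return the snapshot entry named by current-snapshot-id, or None for an empty table."""
    snapshot_id = metadata.get("current-snapshot-id", -1)
    if snapshot_id == -1:
        return None
    for snapshot in metadata.get("snapshots", []):
        if snapshot["snapshot-id"] == snapshot_id:
            return snapshot
    raise KeyError(f"snapshot {snapshot_id} not found in metadata")


if __name__ == "__main__":
    snap = current_snapshot(json.loads(V2_METADATA))
    print(snap["summary"]["operation"])           # append
    print(snap["manifest-list"].rsplit("/", 1)[-1])
```

Python's json module parses the 19-digit snapshot ids as exact integers. Languages that parse JSON numbers as doubles (JavaScript, for example) lose precision on ids this large.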

2.3 v3.metadata.json (insert)

[root@hadoop103 spark-3.2.0-bin-hadoop2.7]# hadoop fs -text /tmp/iceberg/warehouse/db/xxzh_table1/metadata/v3.metadata.json

{

"format-version" : 1,

"table-uuid" : "756090df-d197-4881-9d8d-aea0b9354077",

"location" : "/tmp/iceberg/warehouse/db/xxzh_table1",

"last-updated-ms" : 1645609708761,

"last-column-id" : 2,

"schema" : {

"type" : "struct",

"schema-id" : 0,

"fields" : [ {

"id" : 1,

"name" : "id",

"required" : false,

"type" : "long"

}, {

"id" : 2,

"name" : "data",

"required" : false,

"type" : "string"

} ]

},

"current-schema-id" : 0,

"schemas" : [ {

"type" : "struct",

"schema-id" : 0,

"fields" : [ {

"id" : 1,

"name" : "id",

"required" : false,

"type" : "long"

}, {

"id" : 2,

"name" : "data",

"required" : false,

"type" : "string"

} ]

} ],

"partition-spec" : [ ],

"default-spec-id" : 0,

"partition-specs" : [ {

"spec-id" : 0,

"fields" : [ ]

} ],

"last-partition-id" : 999,

"default-sort-order-id" : 0,

"sort-orders" : [ {

"order-id" : 0,

"fields" : [ ]

} ],

"properties" : {

"owner" : "root"

},

"current-snapshot-id" : 9179203419030846107,

"snapshots" : [ {

"snapshot-id" : 1233304278810386445,

"timestamp-ms" : 1645609519412,

"summary" : {

"operation" : "append",

"spark.app.id" : "local-1645609363060",

"added-data-files" : "3",

"added-records" : "3",

"added-files-size" : "1929",

"changed-partition-count" : "1",

"total-records" : "3",

"total-files-size" : "1929",

"total-data-files" : "3",

"total-delete-files" : "0",

"total-position-deletes" : "0",

"total-equality-deletes" : "0"

},

"manifest-list" : "/tmp/iceberg/warehouse/db/xxzh_table1/metadata/snap-1233304278810386445-1-e66a1dcc-15bd-43d8-bba2-5b8a5b2726a7.avro",

"schema-id" : 0

}, {

"snapshot-id" : 9179203419030846107,

"parent-snapshot-id" : 1233304278810386445,

"timestamp-ms" : 1645609708761,

"summary" : {

"operation" : "append",

"spark.app.id" : "local-1645609363060",

"added-data-files" : "3",

"added-records" : "3",

"added-files-size" : "1928",

"changed-partition-count" : "1",

"total-records" : "6",

"total-files-size" : "3857",

"total-data-files" : "6",

"total-delete-files" : "0",

"total-position-deletes" : "0",

"total-equality-deletes" : "0"

},

"manifest-list" : "/tmp/iceberg/warehouse/db/xxzh_table1/metadata/snap-9179203419030846107-1-0336be7d-9a6c-45a9-af77-036cb4379b9a.avro",

"schema-id" : 0

} ],

"snapshot-log" : [ {

"timestamp-ms" : 1645609519412,

"snapshot-id" : 1233304278810386445

}, {

"timestamp-ms" : 1645609708761,

"snapshot-id" : 9179203419030846107

} ],

"metadata-log" : [ {

"timestamp-ms" : 1645609485795,

"metadata-file" : "/tmp/iceberg/warehouse/db/xxzh_table1/metadata/v1.metadata.json"

}, {

"timestamp-ms" : 1645609519412,

"metadata-file" : "/tmp/iceberg/warehouse/db/xxzh_table1/metadata/v2.metadata.json"

} ]

}
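Every snapshot after the first records a `parent-snapshot-id`, so you can reconstruct the history of the current table state by walking those links. Below is a minimal sketch over the parsed metadata. The `lineage` helper is hypothetical, and it stops early if a parent is missing (for example because it has been expired).

```python
from typing import List, Optional


def lineage(metadata: dict, snapshot_id: Optional[int] = None) -> List[int]:
    """Snapshot ids from `snapshot_id` (default: current) back to the oldest ancestor."""
    by_id = {s["snapshot-id"]: s for s in metadata.get("snapshots", [])}
    sid = metadata.get("current-snapshot-id", -1) if snapshot_id is None else snapshot_id
    chain: List[int] = []
    while sid is not None and sid in by_id:
        chain.append(sid)
        sid = by_id[sid].get("parent-snapshot-id")  # absent for the first snapshot
    return chain


if __name__ == "__main__":
    # Trimmed copy of v3.metadata.json from above.
    v3 = {
        "current-snapshot-id": 9179203419030846107,
        "snapshots": [
            {"snapshot-id": 1233304278810386445},
            {"snapshot-id": 9179203419030846107, "parent-snapshot-id": 1233304278810386445},
        ],
    }
    print(lineage(v3))  # [9179203419030846107, 1233304278810386445]
```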

2.4 v4.metadata.json (update)

[root@hadoop103 spark-3.2.0-bin-hadoop2.7]# hadoop fs -text /tmp/iceberg/warehouse/db/xxzh_table1/metadata/v4.metadata.json

{

"format-version" : 1,

"table-uuid" : "756090df-d197-4881-9d8d-aea0b9354077",

"location" : "/tmp/iceberg/warehouse/db/xxzh_table1",

"last-updated-ms" : 1645609828216,

"last-column-id" : 2,

"schema" : {

"type" : "struct",

"schema-id" : 0,

"fields" : [ {

"id" : 1,

"name" : "id",

"required" : false,

"type" : "long"

}, {

"id" : 2,

"name" : "data",

"required" : false,

"type" : "string"

} ]

},

"current-schema-id" : 0,

"schemas" : [ {

"type" : "struct",

"schema-id" : 0,

"fields" : [ {

"id" : 1,

"name" : "id",

"required" : false,

"type" : "long"

}, {

"id" : 2,

"name" : "data",

"required" : false,

"type" : "string"

} ]

} ],

"partition-spec" : [ ],

"default-spec-id" : 0,

"partition-specs" : [ {

"spec-id" : 0,

"fields" : [ ]

} ],

"last-partition-id" : 999,

"default-sort-order-id" : 0,

"sort-orders" : [ {

"order-id" : 0,

"fields" : [ ]

} ],

"properties" : {

"owner" : "root"

},

"current-snapshot-id" : 941010551389488871,

"snapshots" : [ {

"snapshot-id" : 1233304278810386445,

"timestamp-ms" : 1645609519412,

"summary" : {

"operation" : "append",

"spark.app.id" : "local-1645609363060",

"added-data-files" : "3",

"added-records" : "3",

"added-files-size" : "1929",

"changed-partition-count" : "1",

"total-records" : "3",

"total-files-size" : "1929",

"total-data-files" : "3",

"total-delete-files" : "0",

"total-position-deletes" : "0",

"total-equality-deletes" : "0"

},

"manifest-list" : "/tmp/iceberg/warehouse/db/xxzh_table1/metadata/snap-1233304278810386445-1-e66a1dcc-15bd-43d8-bba2-5b8a5b2726a7.avro",

"schema-id" : 0

}, {

"snapshot-id" : 9179203419030846107,

"parent-snapshot-id" : 1233304278810386445,

"timestamp-ms" : 1645609708761,

"summary" : {

"operation" : "append",

"spark.app.id" : "local-1645609363060",

"added-data-files" : "3",

"added-records" : "3",

"added-files-size" : "1928",

"changed-partition-count" : "1",

"total-records" : "6",

"total-files-size" : "3857",

"total-data-files" : "6",

"total-delete-files" : "0",

"total-position-deletes" : "0",

"total-equality-deletes" : "0"

},

"manifest-list" : "/tmp/iceberg/warehouse/db/xxzh_table1/metadata/snap-9179203419030846107-1-0336be7d-9a6c-45a9-af77-036cb4379b9a.avro",

"schema-id" : 0

}, {

"snapshot-id" : 941010551389488871,

"parent-snapshot-id" : 9179203419030846107,

"timestamp-ms" : 1645609828216,

"summary" : {

"operation" : "overwrite",

"spark.app.id" : "local-1645609363060",

"added-data-files" : "1",

"deleted-data-files" : "1",

"added-records" : "1",

"deleted-records" : "1",

"added-files-size" : "686",

"removed-files-size" : "643",

"changed-partition-count" : "1",

"total-records" : "6",

"total-files-size" : "3900",

"total-data-files" : "6",

"total-delete-files" : "0",

"total-position-deletes" : "0",

"total-equality-deletes" : "0"

},

"manifest-list" : "/tmp/iceberg/warehouse/db/xxzh_table1/metadata/snap-941010551389488871-1-c41a3e4d-1dae-4635-97af-fe57e2765335.avro",

"schema-id" : 0

} ],

"snapshot-log" : [ {

"timestamp-ms" : 1645609519412,

"snapshot-id" : 1233304278810386445

}, {

"timestamp-ms" : 1645609708761,

"snapshot-id" : 9179203419030846107

}, {

"timestamp-ms" : 1645609828216,

"snapshot-id" : 941010551389488871

} ],

"metadata-log" : [ {

"timestamp-ms" : 1645609485795,

"metadata-file" : "/tmp/iceberg/warehouse/db/xxzh_table1/metadata/v1.metadata.json"

}, {

"timestamp-ms" : 1645609519412,

"metadata-file" : "/tmp/iceberg/warehouse/db/xxzh_table1/metadata/v2.metadata.json"

}, {

"timestamp-ms" : 1645609708761,

"metadata-file" : "/tmp/iceberg/warehouse/db/xxzh_table1/metadata/v3.metadata.json"

} ]

}
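In v4 the update appears as an `overwrite` snapshot. It added one data file (686 bytes) and removed one (643 bytes), presumably the file that held id=1. This table uses format-version 1, which has no delete files, so the update had to rewrite the affected data file (copy-on-write); this is consistent with total-delete-files staying 0. The removed file is still on HDFS because older snapshots still reference it. The running totals in each summary are the previous totals plus this commit's added values minus its removed values. The sketch below re-does that arithmetic to check the numbers. It does not show how Iceberg computes them internally, and `next_totals` is a hypothetical helper.

```python
from typing import Dict

# (added key, removed key) pairs as they appear in the snapshot summaries above.
DELTAS = {
    "total-records": ("added-records", "deleted-records"),
    "total-data-files": ("added-data-files", "deleted-data-files"),
    "total-files-size": ("added-files-size", "removed-files-size"),
}


def next_totals(prev: Dict[str, int], summary: Dict[str, str]) -> Dict[str, int]:
    """Previous totals plus this commit's added values minus its removed values."""
    totals = {}
    for total_key, (added_key, removed_key) in DELTAS.items():
        added = int(summary.get(added_key, "0"))      # summary values are strings
        removed = int(summary.get(removed_key, "0"))
        totals[total_key] = prev[total_key] + added - removed
    return totals


if __name__ == "__main__":
    v3_totals = {"total-records": 6, "total-data-files": 6, "total-files-size": 3857}
    update_summary = {
        "added-data-files": "1", "deleted-data-files": "1",
        "added-records": "1", "deleted-records": "1",
        "added-files-size": "686", "removed-files-size": "643",
    }
    print(next_totals(v3_totals, update_summary))
    # {'total-records': 6, 'total-data-files': 6, 'total-files-size': 3900}
```

The same arithmetic also explains v3: 1929 + 1928 = 3857 bytes after the second insert.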

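2.5 Summary of metadata.json characteristics

- Each new metadata.json carries forward everything from the earlier versions: all previous snapshots, the full snapshot-log, and a metadata-log entry for each previous metadata file.

- Querying the xxzh_table1.snapshots metadata table returns the snapshot information stored in the current metadata file: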

spark-sql (default)> select * from local.db.xxzh_table1.snapshots;

committed_at snapshot_id parent_id operation manifest_list summary

2022-02-23 17:45:19.412 1233304278810386445 NULL append /tmp/iceberg/warehouse/db/xxzh_table1/metadata/snap-1233304278810386445-1-e66a1dcc-15bd-43d8-bba2-5b8a5b2726a7.avro {"added-data-files":"3","added-files-size":"1929","added-records":"3","changed-partition-count":"1","spark.app.id":"local-1645609363060","total-data-files":"3","total-delete-files":"0","total-equality-deletes":"0","total-files-size":"1929","total-position-deletes":"0","total-records":"3"}

2022-02-23 17:48:28.761 9179203419030846107 1233304278810386445 append /tmp/iceberg/warehouse/db/xxzh_table1/metadata/snap-9179203419030846107-1-0336be7d-9a6c-45a9-af77-036cb4379b9a.avro {"added-data-files":"3","added-files-size":"1928","added-records":"3","changed-partition-count":"1","spark.app.id":"local-1645609363060","total-data-files":"6","total-delete-files":"0","total-equality-deletes":"0","total-files-size":"3857","total-position-deletes":"0","total-records":"6"}

2022-02-23 17:50:28.216 941010551389488871 9179203419030846107 overwrite /tmp/iceberg/warehouse/db/xxzh_table1/metadata/snap-941010551389488871-1-c41a3e4d-1dae-4635-97af-fe57e2765335.avro {"added-data-files":"1","added-files-size":"686","added-records":"1","changed-partition-count":"1","deleted-data-files":"1","deleted-records":"1","removed-files-size":"643","spark.app.id":"local-1645609363060","total-data-files":"6","total-delete-files":"0","total-equality-deletes":"0","total-files-size":"3900","total-position-deletes":"0","total-records":"6"}

Time taken: 0.179 seconds, Fetched 3 row(s)
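The committed_at column is the snapshot's timestamp-ms rendered in the session time zone. The values above match UTC+8 (China Standard Time), which I assume is the time zone of this cluster. The hypothetical `committed_at` helper below reproduces the conversion.

```python
from datetime import datetime, timedelta, timezone

UTC_PLUS_8 = timezone(timedelta(hours=8))  # assumed session time zone


def committed_at(timestamp_ms: int, tz: timezone = UTC_PLUS_8) -> str:
    """Format an Iceberg timestamp-ms the way the committed_at column above shows it."""
    seconds, millis = divmod(timestamp_ms, 1000)  # integer math, no float rounding
    dt = datetime.fromtimestamp(seconds, tz=tz)
    return dt.strftime("%Y-%m-%d %H:%M:%S") + f".{millis:03d}"


if __name__ == "__main__":
    for ts in (1645609519412, 1645609708761, 1645609828216):
        print(committed_at(ts))
    # 2022-02-23 17:45:19.412
    # 2022-02-23 17:48:28.761
    # 2022-02-23 17:50:28.216
```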

3. Analyzing the snapshot files

3.1 Analyzing the first snapshot

Copy the table directory from HDFS to the local machine:

[root@hadoop101 software]# hadoop fs -get /tmp/iceberg/warehouse/db/xxzh_table1 .

Inspect the first snapshot's manifest list (the snap-*.avro file) with avro-tools:

[root@hadoop101 metadata]# java -jar /opt/software/avro-tools-1.11.0.jar tojson --pretty snap-1233304278810386445-1-e66a1dcc-15bd-43d8-bba2-5b8a5b2726a7.avro


{

"manifest_path" : "/tmp/iceberg/warehouse/db/xxzh_table1/metadata/e66a1dcc-15bd-43d8-bba2-5b8a5b2726a7-m0.avro",

"manifest_length" : 5860,

"partition_spec_id" : 0,

"added_snapshot_id" : {

"long" : 1233304278810386445

},

"added_data_files_count" : {

"int" : 3

},

"existing_data_files_count" : {

"int" : 0

},

"deleted_data_files_count" : {

"int" : 0

},

"partitions" : {

"array" : [ ]

},

"added_rows_count" : {

"long" : 3

},

"existing_rows_count" : {

"long" : 0

},

"deleted_rows_count" : {

"long" : 0

}

}

The manifest list points to a single manifest file:

"manifest_path" : "/tmp/iceberg/warehouse/db/xxzh_table1/metadata/e66a1dcc-15bd-43d8-bba2-5b8a5b2726a7-m0.avro",

Inspect that manifest:

[root@hadoop101 metadata]# java -jar /opt/software/avro-tools-1.11.0.jar tojson --pretty e66a1dcc-15bd-43d8-bba2-5b8a5b2726a7-m0.avro


{

"status" : 1,

"snapshot_id" : {

"long" : 1233304278810386445

},

"data_file" : {

"file_path" : "/tmp/iceberg/warehouse/db/xxzh_table1/data/00000-0-59d54827-c03f-47f6-a6a1-fc74e6861dcf-00001.parquet",

"file_format" : "PARQUET",

"partition" : { },

"record_count" : 1,

"file_size_in_bytes" : 643,

"block_size_in_bytes" : 67108864,

"column_sizes" : {

"array" : [ {

"key" : 1,

"value" : 46

}, {

"key" : 2,

"value" : 48

} ]

},

"value_counts" : {

"array" : [ {

"key" : 1,

"value" : 1

}, {

"key" : 2,

"value" : 1

} ]

},

"null_value_counts" : {

"array" : [ {

"key" : 1,

"value" : 0

}, {

"key" : 2,

"value" : 0

} ]

},

"nan_value_counts" : {

"array" : [ ]

},

"lower_bounds" : {

"array" : [ {

"key" : 1,

"value" : "\u0001\u0000\u0000\u0000\u0000\u0000\u0000\u0000"

}, {

"key" : 2,

"value" : "a"

} ]

},

"upper_bounds" : {

"array" : [ {

"key" : 1,

"value" : "\u0001\u0000\u0000\u0000\u0000\u0000\u0000\u0000"

}, {

"key" : 2,

"value" : "a"

} ]

},

"key_metadata" : null,

"split_offsets" : {

"array" : [ 4 ]

},

"sort_order_id" : {

"int" : 0

}

}

}

{

"status" : 1,

"snapshot_id" : {

"long" : 1233304278810386445

},

"data_file" : {

"file_path" : "/tmp/iceberg/warehouse/db/xxzh_table1/data/00001-1-3445ca12-3b21-4795-8fd7-5124ecc16c85-00001.parquet",

"file_format" : "PARQUET",

"partition" : { },

"record_count" : 1,

"file_size_in_bytes" : 643,

"block_size_in_bytes" : 67108864,

"column_sizes" : {

"array" : [ {

"key" : 1,

"value" : 46

}, {

"key" : 2,

"value" : 48

} ]

},

"value_counts" : {

"array" : [ {

"key" : 1,

"value" : 1

}, {

"key" : 2,

"value" : 1

} ]

},

"null_value_counts" : {

"array" : [ {

"key" : 1,

"value" : 0

}, {

"key" : 2,

"value" : 0

} ]

},

"nan_value_counts" : {

"array" : [ ]

},

"lower_bounds" : {

"array" : [ {

"key" : 1,

"value" : "\u0002\u0000\u0000\u0000\u0000\u0000\u0000\u0000"

}, {

"key" : 2,

"value" : "b"

} ]

},

"upper_bounds" : {

"array" : [ {

"key" : 1,

"value" : "\u0002\u0000\u0000\u0000\u0000\u0000\u0000\u0000"

}, {

"key" : 2,

"value" : "b"

} ]

},

"key_metadata" : null,

"split_offsets" : {

"array" : [ 4 ]

},

"sort_order_id" : {

"int" : 0

}

}

}

{

"status" : 1,

"snapshot_id" : {

"long" : 1233304278810386445

},

"data_file" : {

"file_path" : "/tmp/iceberg/warehouse/db/xxzh_table1/data/00002-2-6659369d-638d-432a-83e8-0da1b0abce4f-00001.parquet",

"file_format" : "PARQUET",

"partition" : { },

"record_count" : 1,

"file_size_in_bytes" : 643,

"block_size_in_bytes" : 67108864,

"column_sizes" : {

"array" : [ {

"key" : 1,

"value" : 46

}, {

"key" : 2,

"value" : 48

} ]

},

"value_counts" : {

"array" : [ {

"key" : 1,

"value" : 1

}, {

"key" : 2,

"value" : 1

} ]

},

"null_value_counts" : {

"array" : [ {

"key" : 1,

"value" : 0

}, {

"key" : 2,

"value" : 0

} ]

},

"nan_value_counts" : {

"array" : [ ]

},

"lower_bounds" : {

"array" : [ {

"key" : 1,

"value" : "\u0003\u0000\u0000\u0000\u0000\u0000\u0000\u0000"

}, {

"key" : 2,

"value" : "c"

} ]

},

"upper_bounds" : {

"array" : [ {

"key" : 1,

"value" : "\u0003\u0000\u0000\u0000\u0000\u0000\u0000\u0000"

}, {

"key" : 2,

"value" : "c"

} ]

},

"key_metadata" : null,

"split_offsets" : {

"array" : [ 4 ]

},

"sort_order_id" : {

"int" : 0

}

}

}
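The keys 1 and 2 in column_sizes, lower_bounds, and the other statistics are field ids from the table schema (1 = id, 2 = data). The bounds themselves are binary. Per the Iceberg spec, a long is serialized as 8 bytes little-endian and a string as UTF-8, and as far as I can tell avro-tools prints Avro bytes as a string with one character per byte. That is why id=1 shows up as `\u0001` followed by seven `\u0000`. Below is a minimal decoding sketch that covers only the int, long, and string types. `decode_value` and `decode_bounds` are hypothetical helpers.

```python
import struct
from typing import Dict, List


def decode_value(iceberg_type: str, printed: str):
    """Decode one bound as printed by avro-tools (one char per byte, code points 0-255)."""
    raw = printed.encode("latin-1")           # back to the original bytes
    if iceberg_type == "int":
        return struct.unpack("<i", raw)[0]    # 4-byte little-endian
    if iceberg_type == "long":
        return struct.unpack("<q", raw)[0]    # 8-byte little-endian
    if iceberg_type == "string":
        return raw.decode("utf-8")
    raise NotImplementedError(f"type not covered by this sketch: {iceberg_type}")


def decode_bounds(schema_fields: List[dict], bounds: List[dict]) -> Dict[str, object]:
    """Map field-id keyed bounds to {column name: decoded value} using the schema."""
    by_id = {f["id"]: f for f in schema_fields}
    return {by_id[b["key"]]["name"]: decode_value(by_id[b["key"]]["type"], b["value"])
            for b in bounds}


if __name__ == "__main__":
    # Schema fields and lower_bounds copied from the metadata and manifest above.
    fields = [{"id": 1, "name": "id", "type": "long"},
              {"id": 2, "name": "data", "type": "string"}]
    lower = [{"key": 1, "value": "\u0001\u0000\u0000\u0000\u0000\u0000\u0000\u0000"},
             {"key": 2, "value": "a"}]
    print(decode_bounds(fields, lower))  # {'id': 1, 'data': 'a'}
```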

Let's check the three data files referenced above:

/tmp/iceberg/warehouse/db/xxzh_table1/data/00000-0-59d54827-c03f-47f6-a6a1-fc74e6861dcf-00001.parquet

/tmp/iceberg/warehouse/db/xxzh_table1/data/00001-1-3445ca12-3b21-4795-8fd7-5124ecc16c85-00001.parquet

/tmp/iceberg/warehouse/db/xxzh_table1/data/00002-2-6659369d-638d-432a-83e8-0da1b0abce4f-00001.parquet

scala> spark.read.parquet("/tmp/iceberg/warehouse/db/xxzh_table1/data/00000-0-59d54827-c03f-47f6-a6a1-fc74e6861dcf-00001.parquet").show

+---+----+

| id|data|

+---+----+

| 1| a|

+---+----+

scala> spark.read.parquet("/tmp/iceberg/warehouse/db/xxzh_table1/data/00001-1-3445ca12-3b21-4795-8fd7-5124ecc16c85-00001.parquet").show

+---+----+

| id|data|

+---+----+

| 2| b|

+---+----+

scala> spark.read.parquet("/tmp/iceberg/warehouse/db/xxzh_table1/data/00002-2-6659369d-638d-432a-83e8-0da1b0abce4f-00001.parquet").show

+---+----+

| id|data|

+---+----+

| 3| c|

+---+----+

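So the manifest records which data files belong to the snapshot and where they are, together with per-file statistics such as record counts and column bounds.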
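Every manifest entry also has a status. In the Iceberg spec, 0 means EXISTING, 1 means ADDED, and 2 means DELETED. All three entries above are 1 because snapshot 1233304278810386445 added them. Below is a minimal sketch of turning manifest entries into the snapshot's live file list. The entry dicts are simplified from the avro-tools output, the file paths are made up, and `live_files`/`live_record_count` are hypothetical helpers.

```python
from typing import List

EXISTING, ADDED, DELETED = 0, 1, 2  # manifest entry status codes from the Iceberg spec


def live_files(entries: List[dict]) -> List[str]:
    """Paths of data files that are still part of the table (not marked DELETED)."""
    return [e["data_file"]["file_path"] for e in entries if e["status"] != DELETED]


def live_record_count(entries: List[dict]) -> int:
    """Total rows across the live data files."""
    return sum(e["data_file"]["record_count"] for e in entries if e["status"] != DELETED)


if __name__ == "__main__":
    entries = [
        {"status": ADDED, "data_file": {"file_path": "data/f1.parquet", "record_count": 1}},
        {"status": ADDED, "data_file": {"file_path": "data/f2.parquet", "record_count": 1}},
        {"status": ADDED, "data_file": {"file_path": "data/f3.parquet", "record_count": 1}},
        # Hypothetical entry, like one a later rewrite would record for a removed file:
        {"status": DELETED, "data_file": {"file_path": "data/f0.parquet", "record_count": 1}},
    ]
    print(live_files(entries))         # ['data/f1.parquet', 'data/f2.parquet', 'data/f3.parquet']
    print(live_record_count(entries))  # 3
```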

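3.2 Analyzing the second snapshot

Inspect its manifest list:

Finding: it lists every manifest that makes up the table at this snapshot (currently 2), namely the new manifest written by this commit and the manifest carried over from the first snapshot.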

[root@hadoop101 metadata]# java -jar /opt/software/avro-tools-1.11.0.jar tojson --pretty snap-9179203419030846107-1-0336be7d-9a6c-45a9-af77-036cb4379b9a.avro


{

"manifest_path" : "/tmp/iceberg/warehouse/db/xxzh_table1/metadata/0336be7d-9a6c-45a9-af77-036cb4379b9a-m0.avro",

"manifest_length" : 5869,

"partition_spec_id" : 0,

"added_snapshot_id" : {

"long" : 9179203419030846107

},

"added_data_files_count" : {

"int" : 3

},

"existing_data_files_count" : {

"int" : 0

},

"deleted_data_files_count" : {

"int" : 0

},

"partitions" : {

"array" : [ ]

},

"added_rows_count" : {

"long" : 3

},

"existing_rows_count" : {

"long" : 0

},

"deleted_rows_count" : {

"long" : 0

}

}

{

"manifest_path" : "/tmp/iceberg/warehouse/db/xxzh_table1/metadata/e66a1dcc-15bd-43d8-bba2-5b8a5b2726a7-m0.avro",

"manifest_length" : 5860,

"partition_spec_id" : 0,

"added_snapshot_id" : {

"long" : 1233304278810386445

},

"added_data_files_count" : {

"int" : 3

},

"existing_data_files_count" : {

"int" : 0

},

"deleted_data_files_count" : {

"int" : 0

},

"partitions" : {

"array" : [ ]

},

"added_rows_count" : {

"long" : 3

},

"existing_rows_count" : {

"long" : 0

},

"deleted_rows_count" : {

"long" : 0

}

}
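This second manifest list shows how Iceberg avoids rewriting metadata. The manifest from the first snapshot (e66a1dcc-15bd-43d8-bba2-5b8a5b2726a7-m0.avro) is referenced again as-is, and only one new manifest was written. Summing added_rows_count and existing_rows_count over the listed manifests gives the table's row count, 3 + 3 = 6, which matches total-records. Below is a minimal sketch that works directly on avro-tools JSON, where nullable fields are wrapped like {"long": 3}. The helper names are hypothetical.

```python
from typing import List

M1 = "/tmp/iceberg/warehouse/db/xxzh_table1/metadata/e66a1dcc-15bd-43d8-bba2-5b8a5b2726a7-m0.avro"
M2 = "/tmp/iceberg/warehouse/db/xxzh_table1/metadata/0336be7d-9a6c-45a9-af77-036cb4379b9a-m0.avro"


def unwrap(value):
    """avro-tools prints union values as {"type": value}; return the bare value."""
    if isinstance(value, dict) and len(value) == 1:
        return next(iter(value.values()))
    return value


def live_rows(manifest_list: List[dict]) -> int:
    """Rows in ADDED or EXISTING entries across all manifests of a snapshot."""
    return sum(unwrap(m["added_rows_count"]) + unwrap(m["existing_rows_count"])
               for m in manifest_list)


def reused_manifests(older: List[dict], newer: List[dict]) -> List[str]:
    """Manifest files referenced by both manifest lists."""
    return sorted({m["manifest_path"] for m in older} & {m["manifest_path"] for m in newer})


if __name__ == "__main__":
    snap1 = [{"manifest_path": M1, "added_rows_count": {"long": 3}, "existing_rows_count": {"long": 0}}]
    snap2 = [{"manifest_path": M2, "added_rows_count": {"long": 3}, "existing_rows_count": {"long": 0}},
             {"manifest_path": M1, "added_rows_count": {"long": 3}, "existing_rows_count": {"long": 0}}]
    print(live_rows(snap2))                        # 6
    print(reused_manifests(snap1, snap2) == [M1])  # True
```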

Inspect the new manifest written by this commit:

[root@hadoop101 metadata]# java -jar /opt/software/avro-tools-1.11.0.jar tojson --pretty 0336be7d-9a6c-45a9-af77-036cb4379b9a-m0.avro


{

"status" : 1,

"snapshot_id" : {

"long" : 9179203419030846107

},

"data_file" : {

"file_path" : "/tmp/iceberg/warehouse/db/xxzh_table1/data/00000-3-746396e4-6e79-4609-9933-867d1af62900-00001.parquet",

"file_format" : "PARQUET",

"partition" : { },

"record_count" : 1,

"file_size_in_bytes" : 642,

"block_size_in_bytes" : 67108864,

"column_sizes" : {

"array" : [ {

"key" : 1,

"value" : 45

}, {

"key" : 2,

"value" : 48

} ]

},

"value_counts" : {

"array" : [ {

"key" : 1,

"value" : 1

}, {

"key" : 2,

"value" : 1

} ]

},

"null_value_counts" : {

"array" : [ {

"key" : 1,

"value" : 0

}, {

"key" : 2,

"value" : 0

} ]

},

"nan_value_counts" : {

"array" : [ ]

},

"lower_bounds" : {

"array" : [ {

"key" : 1,

"value" : "\u0004\u0000\u0000\u0000\u0000\u0000\u0000\u0000"

}, {

"key" : 2,

"value" : "d"

} ]

},

"upper_bounds" : {

"array" : [ {

"key" : 1,

"value" : "\u0004\u0000\u0000\u0000\u0000\u0000\u0000\u0000"

}, {

"key" : 2,

"value" : "d"

} ]

},

"key_metadata" : null,

"split_offsets" : {

"array" : [ 4 ]

},

"sort_order_id" : {

"int" : 0

}

}

}

{

"status" : 1,

"snapshot_id" : {

"long" : 9179203419030846107

},

"data_file" : {

"file_path" : "/tmp/iceberg/warehouse/db/xxzh_table1/data/00001-4-1a16a1dc-b8f8-4897-8e4b-d4482618f50a-00001.parquet",

"file_format" : "PARQUET",

"partition" : { },

"record_count" : 1,

"file_size_in_bytes" : 643,

"block_size_in_bytes" : 67108864,

"column_sizes" : {

"array" : [ {

"key" : 1,

"value" : 46

}, {

"key" : 2,

"value" : 48

} ]

},

"value_counts" : {

"array" : [ {

"key" : 1,

"value" : 1

}, {

"key" : 2,

"value" : 1

} ]

},

"null_value_counts" : {

"array" : [ {

"key" : 1,

"value" : 0

}, {

"key" : 2,

"value" : 0

} ]

},

"nan_value_counts" : {

"array" : [ ]

},

"lower_bounds" : {

"array" : [ {

"key" : 1,

"value" : "\u0005\u0000\u0000\u0000\u0000\u0000\u0000\u0000"

}, {

"key" : 2,

"value" : "e"

} ]

},

"upper_bounds" : {

"array" : [ {

"key" : 1,

"value" : "\u0005\u0000\u0000\u0000\u0000\u0000\u0000\u0000"

}, {

"key" : 2,

"value" : "e"

} ]

},

"key_metadata" : null,

"split_offsets" : {

"array" : [ 4 ]

},

"sort_order_id" : {

"int" : 0

}

}

}

{

"status" : 1,

"snapshot_id" : {

"long" : 9179203419030846107

},

"data_file" : {

"file_path" : "/tmp/iceberg/warehouse/db/xxzh_table1/data/00002-5-e444dccc-e722-4bd6-8271-66875ab8ce01-00001.parquet",

"file_format" : "PARQUET",

"partition" : { },

"record_count" : 1,

"file_size_in_bytes" : 643,

"block_size_in_bytes" : 67108864,

"column_sizes" : {

"array" : [ {

"key" : 1,

"value" : 46

}, {

"key" : 2,

"value" : 48

} ]

},

"value_counts" : {

"array" : [ {

"key" : 1,

"value" : 1

}, {

"key" : 2,

"value" : 1

} ]

},

"null_value_counts" : {

"array" : [ {

"key" : 1,

"value" : 0

}, {

"key" : 2,

"value" : 0

} ]

},

"nan_value_counts" : {

"array" : [ ]

},

"lower_bounds" : {

"array" : [ {

"key" : 1,

"value" : "\u0006\u0000\u0000\u0000\u0000\u0000\u0000\u0000"

}, {

"key" : 2,

"value" : "f"

} ]

},

"upper_bounds" : {

"array" : [ {

"key" : 1,

"value" : "\u0006\u0000\u0000\u0000\u0000\u0000\u0000\u0000"

}, {

"key" : 2,

"value" : "f"

} ]

},

"key_metadata" : null,

"split_offsets" : {

"array" : [ 4 ]

},

"sort_order_id" : {

"int" : 0

}

}

}
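The lower_bounds and upper_bounds values above look garbled because avro-tools prints raw bytes. The Iceberg table spec's single-value serialization stores a long (bigint) bound as 8 bytes in little-endian order and a string bound as its UTF-8 bytes. So the id bound of the first entry (bytes 04 00 00 00 00 00 00 00) is simply 4, which matches the data file read below. The sketch below is a minimal decoder under those two encoding rules. The object name `BoundDecoder` is hypothetical, and the ISO-8859-1 trick assumes avro-tools printed each byte as one character with code point 0-255.

```scala
import java.nio.{ByteBuffer, ByteOrder}
import java.nio.charset.StandardCharsets

object BoundDecoder {
  // A long (bigint) bound is stored as 8 bytes, little-endian.
  def decodeLong(bytes: Array[Byte]): Long =
    ByteBuffer.wrap(bytes).order(ByteOrder.LITTLE_ENDIAN).getLong

  // A string bound is stored as its UTF-8 bytes, with no length prefix.
  def decodeString(bytes: Array[Byte]): String =
    new String(bytes, StandardCharsets.UTF_8)

  // Assumption: avro-tools prints every raw byte as one character (code point 0-255),
  // so encoding the printed text as ISO-8859-1 gives back the original bytes.
  def fromAvroJsonText(text: String): Array[Byte] =
    text.getBytes(StandardCharsets.ISO_8859_1)
}

// The id bound of the first entry, shown by avro-tools as escaped bytes 04 00 00 00 00 00 00 00.
val idBound = Seq(4, 0, 0, 0, 0, 0, 0, 0).map(_.toChar).mkString
println(BoundDecoder.decodeLong(BoundDecoder.fromAvroJsonText(idBound))) // 4
println(BoundDecoder.decodeString(BoundDecoder.fromAvroJsonText("d")))   // d
```

Each file here holds a single row, so lower and upper bounds are identical. With more rows per file, the two values would bracket the column's range in that file.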

Contents of the corresponding data files:

scala> spark.read.parquet("/tmp/iceberg/warehouse/db/xxzh_table1/data/00000-3-746396e4-6e79-4609-9933-867d1af62900-00001.parquet").show

+---+----+

| id|data|

+---+----+

| 4| d|

+---+----+

scala> spark.read.parquet("/tmp/iceberg/warehouse/db/xxzh_table1/data/00001-4-1a16a1dc-b8f8-4897-8e4b-d4482618f50a-00001.parquet").show

+---+----+

| id|data|

+---+----+

| 5| e|

+---+----+

scala> spark.read.parquet("/tmp/iceberg/warehouse/db/xxzh_table1/data/00002-5-e444dccc-e722-4bd6-8271-66875ab8ce01-00001.parquet").show

+---+----+

| id|data|

+---+----+

| 6| f|

+---+----+

3.3 Analyzing the third snapshot (update)

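Observation: the manifest list of this snapshot references manifests that together cover the data of all three snapshots. This shows that a snap file (manifest list) describes the complete table state as of its snapshot, not just the change made by that commit.

An update is split into a delete plus an insert. Let's see how the snap file records them:

Records the delete: /tmp/iceberg/warehouse/db/xxzh_table1/metadata/c41a3e4d-1dae-4635-97af-fe57e2765335-m0.avro

Records the insert: /tmp/iceberg/warehouse/db/xxzh_table1/metadata/c41a3e4d-1dae-4635-97af-fe57e2765335-m1.avro

Manifest of the previous snapshot: /tmp/iceberg/warehouse/db/xxzh_table1/metadata/0336be7d-9a6c-45a9-af77-036cb4379b9a-m0.avro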

[root@hadoop101 metadata]# java -jar /opt/software/avro-tools-1.11.0.jar tojson --pretty snap-941010551389488871-1-c41a3e4d-1dae-4635-97af-fe57e2765335.avro

22/02/23 20:35:28 WARN util.NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

{

"manifest_path" : "/tmp/iceberg/warehouse/db/xxzh_table1/metadata/c41a3e4d-1dae-4635-97af-fe57e2765335-m1.avro",

"manifest_length" : 5780,

"partition_spec_id" : 0,

"added_snapshot_id" : {

"long" : 941010551389488871

},

"added_data_files_count" : {

"int" : 1

},

"existing_data_files_count" : {

"int" : 0

},

"deleted_data_files_count" : {

"int" : 0

},

"partitions" : {

"array" : [ ]

},

"added_rows_count" : {

"long" : 1

},

"existing_rows_count" : {

"long" : 0

},

"deleted_rows_count" : {

"long" : 0

}

}

{

"manifest_path" : "/tmp/iceberg/warehouse/db/xxzh_table1/metadata/0336be7d-9a6c-45a9-af77-036cb4379b9a-m0.avro",

"manifest_length" : 5869,

"partition_spec_id" : 0,

"added_snapshot_id" : {

"long" : 9179203419030846107

},

"added_data_files_count" : {

"int" : 3

},

"existing_data_files_count" : {

"int" : 0

},

"deleted_data_files_count" : {

"int" : 0

},

"partitions" : {

"array" : [ ]

},

"added_rows_count" : {

"long" : 3

},

"existing_rows_count" : {

"long" : 0

},

"deleted_rows_count" : {

"long" : 0

}

}

{

"manifest_path" : "/tmp/iceberg/warehouse/db/xxzh_table1/metadata/c41a3e4d-1dae-4635-97af-fe57e2765335-m0.avro",

"manifest_length" : 5876,

"partition_spec_id" : 0,

"added_snapshot_id" : {

"long" : 941010551389488871

},

"added_data_files_count" : {

"int" : 0

},

"existing_data_files_count" : {

"int" : 2

},

"deleted_data_files_count" : {

"int" : 1

},

"partitions" : {

"array" : [ ]

},

"added_rows_count" : {

"long" : 0

},

"existing_rows_count" : {

"long" : 2

},

"deleted_rows_count" : {

"long" : 1

}

}
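The per-manifest counters above are enough to reconstruct the size of the table at this snapshot. Entries with status ADDED or EXISTING are part of the table, while DELETED entries are only kept as history. Summing the added and existing counts over the three manifests should therefore give the current number of data files and rows. The sketch below assumes that, as in this example, the table contains only data files and no row-level delete files. The case class `ManifestSummary` is a hypothetical holder for a subset of the printed fields.

```scala
// Subset of the fields avro-tools prints for one manifest-list entry.
final case class ManifestSummary(
    manifestPath: String,
    addedSnapshotId: Long,
    addedFiles: Int,
    existingFiles: Int,
    deletedFiles: Int,
    addedRows: Long,
    existingRows: Long,
    deletedRows: Long
)

// Values copied from the manifest list of snapshot 941010551389488871 above.
val snapshot3 = Seq(
  ManifestSummary("c41a3e4d-1dae-4635-97af-fe57e2765335-m1.avro", 941010551389488871L, 1, 0, 0, 1L, 0L, 0L),
  ManifestSummary("0336be7d-9a6c-45a9-af77-036cb4379b9a-m0.avro", 9179203419030846107L, 3, 0, 0, 3L, 0L, 0L),
  ManifestSummary("c41a3e4d-1dae-4635-97af-fe57e2765335-m0.avro", 941010551389488871L, 0, 2, 1, 0L, 2L, 1L)
)

// ADDED + EXISTING entries make up the table; DELETED entries are not counted.
val liveFiles = snapshot3.map(m => m.addedFiles + m.existingFiles).sum
val liveRows = snapshot3.map(m => m.addedRows + m.existingRows).sum

// Snapshots that wrote the manifests referenced here (the 0336be7d manifest is reused as-is).
val writers = snapshot3.map(_.addedSnapshotId).distinct

println(s"live data files = $liveFiles, live rows = $liveRows") // live data files = 6, live rows = 6
```

Six rows is exactly the table after the update: ids 1 (now apple) through 6.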

Contents of the insert manifest c41a3e4d-1dae-4635-97af-fe57e2765335-m1.avro:

[root@hadoop101 metadata]# java -jar /opt/software/avro-tools-1.11.0.jar tojson --pretty c41a3e4d-1dae-4635-97af-fe57e2765335-m1.avro

22/02/23 20:37:36 WARN util.NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

{

"status" : 1,

"snapshot_id" : {

"long" : 941010551389488871

},

"data_file" : {

"file_path" : "/tmp/iceberg/warehouse/db/xxzh_table1/data/00112-11-2d333752-b387-43ff-ac6e-30fea75cb791-00001.parquet",

"file_format" : "PARQUET",

"partition" : { },

"record_count" : 1,

"file_size_in_bytes" : 686,

"block_size_in_bytes" : 67108864,

"column_sizes" : {

"array" : [ {

"key" : 1,

"value" : 52

}, {

"key" : 2,

"value" : 56

} ]

},

"value_counts" : {

"array" : [ {

"key" : 1,

"value" : 1

}, {

"key" : 2,

"value" : 1

} ]

},

"null_value_counts" : {

"array" : [ {

"key" : 1,

"value" : 0

}, {

"key" : 2,

"value" : 0

} ]

},

"nan_value_counts" : {

"array" : [ ]

},

"lower_bounds" : {

"array" : [ {

"key" : 1,

"value" : "\u0001\u0000\u0000\u0000\u0000\u0000\u0000\u0000"

}, {

"key" : 2,

"value" : "apple"

} ]

},

"upper_bounds" : {

"array" : [ {

"key" : 1,

"value" : "\u0001\u0000\u0000\u0000\u0000\u0000\u0000\u0000"

}, {

"key" : 2,

"value" : "apple"

} ]

},

"key_metadata" : null,

"split_offsets" : {

"array" : [ 4 ]

},

"sort_order_id" : {

"int" : 0

}

}

}

Data file contents (the update changed the data of id=1 to apple):

scala> spark.read.parquet("/tmp/iceberg/warehouse/db/xxzh_table1/data/00112-11-2d333752-b387-43ff-ac6e-30fea75cb791-00001.parquet").show

+---+-----+

| id| data|

+---+-----+

| 1|apple|

+---+-----+

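So where is the delete half of the update? Let's go back and see how it is stored.

It turns out that the snap file lists each manifest_path together with the number of files and rows added to and deleted from that manifest. For example, for

c41a3e4d-1dae-4635-97af-fe57e2765335-m0.avro

"deleted_rows_count" : {

"long" : 1

}

But how does Iceberg record which specific row was deleted?

Let's look at the contents of this file: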

[root@hadoop101 metadata]# java -jar /opt/software/avro-tools-1.11.0.jar tojson --pretty c41a3e4d-1dae-4635-97af-fe57e2765335-m0.avro

22/02/24 10:37:36 WARN util.NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

{

"status" : 2,

"snapshot_id" : {

"long" : 941010551389488871

},

"data_file" : {

"file_path" : "/tmp/iceberg/warehouse/db/xxzh_table1/data/00000-0-59d54827-c03f-47f6-a6a1-fc74e6861dcf-00001.parquet",

"file_format" : "PARQUET",

"partition" : { },

"record_count" : 1,

"file_size_in_bytes" : 643,

"block_size_in_bytes" : 67108864,

"column_sizes" : {

"array" : [ {

"key" : 1,

"value" : 46

}, {

"key" : 2,

"value" : 48

} ]

},

"value_counts" : {

"array" : [ {

"key" : 1,

"value" : 1

}, {

"key" : 2,

"value" : 1

} ]

},

"null_value_counts" : {

"array" : [ {

"key" : 1,

"value" : 0

}, {

"key" : 2,

"value" : 0

} ]

},

"nan_value_counts" : {

"array" : [ ]

},

"lower_bounds" : {

"array" : [ {

"key" : 1,

"value" : "\u0001\u0000\u0000\u0000\u0000\u0000\u0000\u0000"

}, {

"key" : 2,

"value" : "a"

} ]

},

"upper_bounds" : {

"array" : [ {

"key" : 1,

"value" : "\u0001\u0000\u0000\u0000\u0000\u0000\u0000\u0000"

}, {

"key" : 2,

"value" : "a"

} ]

},

"key_metadata" : null,

"split_offsets" : {

"array" : [ 4 ]

},

"sort_order_id" : {

"int" : 0

}

}

}

{

"status" : 0,

"snapshot_id" : {

"long" : 1233304278810386445

},

"data_file" : {

"file_path" : "/tmp/iceberg/warehouse/db/xxzh_table1/data/00001-1-3445ca12-3b21-4795-8fd7-5124ecc16c85-00001.parquet",

"file_format" : "PARQUET",

"partition" : { },

"record_count" : 1,

"file_size_in_bytes" : 643,

"block_size_in_bytes" : 67108864,

"column_sizes" : {

"array" : [ {

"key" : 1,

"value" : 46

}, {

"key" : 2,

"value" : 48

} ]

},

"value_counts" : {

"array" : [ {

"key" : 1,

"value" : 1

}, {

"key" : 2,

"value" : 1

} ]

},

"null_value_counts" : {

"array" : [ {

"key" : 1,

"value" : 0

}, {

"key" : 2,

"value" : 0

} ]

},

"nan_value_counts" : {

"array" : [ ]

},

"lower_bounds" : {

"array" : [ {

"key" : 1,

"value" : "\u0002\u0000\u0000\u0000\u0000\u0000\u0000\u0000"

}, {

"key" : 2,

"value" : "b"

} ]

},

"upper_bounds" : {

"array" : [ {

"key" : 1,

"value" : "\u0002\u0000\u0000\u0000\u0000\u0000\u0000\u0000"

}, {

"key" : 2,

"value" : "b"

} ]

},

"key_metadata" : null,

"split_offsets" : {

"array" : [ 4 ]

},

"sort_order_id" : {

"int" : 0

}

}

}

{

"status" : 0,

"snapshot_id" : {

"long" : 1233304278810386445

},

"data_file" : {

"file_path" : "/tmp/iceberg/warehouse/db/xxzh_table1/data/00002-2-6659369d-638d-432a-83e8-0da1b0abce4f-00001.parquet",

"file_format" : "PARQUET",

"partition" : { },

"record_count" : 1,

"file_size_in_bytes" : 643,

"block_size_in_bytes" : 67108864,

"column_sizes" : {

"array" : [ {

"key" : 1,

"value" : 46

}, {

"key" : 2,

"value" : 48

} ]

},

"value_counts" : {

"array" : [ {

"key" : 1,

"value" : 1

}, {

"key" : 2,

"value" : 1

} ]

},

"null_value_counts" : {

"array" : [ {

"key" : 1,

"value" : 0

}, {

"key" : 2,

"value" : 0

} ]

},

"nan_value_counts" : {

"array" : [ ]

},

"lower_bounds" : {

"array" : [ {

"key" : 1,

"value" : "\u0003\u0000\u0000\u0000\u0000\u0000\u0000\u0000"

}, {

"key" : 2,

"value" : "c"

} ]

},

"upper_bounds" : {

"array" : [ {

"key" : 1,

"value" : "\u0003\u0000\u0000\u0000\u0000\u0000\u0000\u0000"

}, {

"key" : 2,

"value" : "c"

} ]

},

"key_metadata" : null,

"split_offsets" : {

"array" : [ 4 ]

},

"sort_order_id" : {

"int" : 0

}

}

}
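The status field of each entry above uses the codes from the Iceberg table spec: 0 = EXISTING, 1 = ADDED, 2 = DELETED. The DELETED entry carries the id of the snapshot that removed it (941010551389488871). The two EXISTING entries keep the id of the snapshot that originally added them (1233304278810386445). The sketch below tallies these entries by status. It shows that the summary printed in the snap file (0 added, 2 existing, 1 deleted, in both files and rows) is just that tally. `ManifestEntry` and `countOf` are hypothetical helpers, not Iceberg API.

```scala
// Manifest entry status codes defined by the Iceberg table spec.
val Existing = 0
val Added = 1
val Deleted = 2

final case class ManifestEntry(status: Int, snapshotId: Long, filePath: String, recordCount: Long)

// Entries of c41a3e4d-1dae-4635-97af-fe57e2765335-m0.avro, copied from the dump above.
val m0 = Seq(
  ManifestEntry(Deleted, 941010551389488871L, "00000-0-59d54827-c03f-47f6-a6a1-fc74e6861dcf-00001.parquet", 1L),
  ManifestEntry(Existing, 1233304278810386445L, "00001-1-3445ca12-3b21-4795-8fd7-5124ecc16c85-00001.parquet", 1L),
  ManifestEntry(Existing, 1233304278810386445L, "00002-2-6659369d-638d-432a-83e8-0da1b0abce4f-00001.parquet", 1L)
)

// (number of files, number of rows) with the given status: what the manifest list summarizes.
def countOf(status: Int): (Int, Long) = {
  val es = m0.filter(_.status == status)
  (es.size, es.map(_.recordCount).sum)
}

println(s"added=${countOf(Added)} existing=${countOf(Existing)} deleted=${countOf(Deleted)}")
// added=(0,0) existing=(2,2) deleted=(1,1)

// A reader of this manifest keeps only the entries that are not DELETED.
val liveFiles = m0.filter(_.status != Deleted).map(_.filePath)
```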

Look at the data files it points to. They are clearly the files written by the first insert:

00000-0-59d54827-c03f-47f6-a6a1-fc74e6861dcf-00001.parquet

00001-1-3445ca12-3b21-4795-8fd7-5124ecc16c85-00001.parquet

00002-2-6659369d-638d-432a-83e8-0da1b0abce4f-00001.parquet

Reading them back:

scala> spark.read.parquet("/tmp/iceberg/warehouse/db/xxzh_table1/data/00000-0-59d54827-c03f-47f6-a6a1-fc74e6861dcf-00001.parquet").show

+---+----+

| id|data|

+---+----+

| 1| a|

+---+----+

scala> spark.read.parquet("/tmp/iceberg/warehouse/db/xxzh_table1/data/00001-1-3445ca12-3b21-4795-8fd7-5124ecc16c85-00001.parquet").show

+---+----+

| id|data|

+---+----+

| 2| b|

+---+----+

scala> spark.read.parquet("/tmp/iceberg/warehouse/db/xxzh_table1/data/00002-2-6659369d-638d-432a-83e8-0da1b0abce4f-00001.parquet").show

+---+----+

| id|data|

+---+----+

| 3| c|

+---+----+

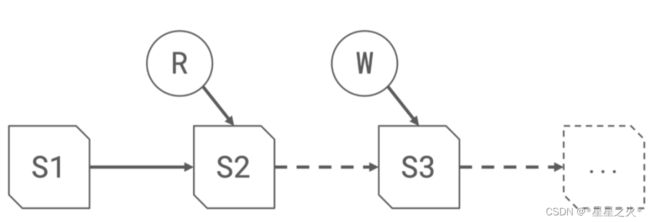

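IV. Data changes illustrated

Conclusions:

- The delete rewrites the manifest that held the affected file, and every data file in that manifest is recorded again, not only the updated one. The removed file gets status 2 (DELETED), and the unchanged files are carried over with status 0 (EXISTING).

- The metadata does not record which row changed, and the original data file is not modified in place. Instead, the whole file that contained id=1 is marked DELETED and the updated row is written to a new data file. Because every file in this example holds exactly one row, dropping that file removes exactly that row.

- Duplicate inserts are also accepted. How would Iceberg know that such rows need to be merged?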
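The first two conclusions describe copy-on-write at file granularity. A file containing a matching row is dropped as a whole, and its rows, with the update applied, are written to a new file. The sketch below models that behaviour in memory so the DELETED / ADDED / EXISTING split can be checked against the dumps above. `Row`, `DataFile`, `CommitResult`, `copyOnWriteUpdate` and the file names are hypothetical. The sketch also simplifies by writing all rewritten rows into one new file, whereas a real Spark job may write several.

```scala
// Hypothetical in-memory model of immutable data files.
final case class Row(id: Long, data: String)
final case class DataFile(path: String, rows: Seq[Row])

// Files grouped by the manifest status they would get in the new snapshot.
final case class CommitResult(deleted: Seq[DataFile], added: Seq[DataFile], existing: Seq[DataFile])

// Copy-on-write UPDATE: a file with any matching row is dropped as a whole (DELETED),
// all of its rows are rewritten into one new file with the update applied (ADDED),
// and files without a matching row are left untouched (EXISTING).
def copyOnWriteUpdate(files: Seq[DataFile], matches: Row => Boolean,
                      update: Row => Row, newPath: String): CommitResult = {
  val (touched, untouched) = files.partition(_.rows.exists(matches))
  val rewritten = touched.flatMap(_.rows).map(r => if (matches(r)) update(r) else r)
  val added = if (touched.isEmpty) Seq.empty[DataFile] else Seq(DataFile(newPath, rewritten))
  CommitResult(touched, added, untouched)
}

// The first insert wrote one row per file: (1,a), (2,b), (3,c).
val before = Seq(
  DataFile("file-1.parquet", Seq(Row(1, "a"))),
  DataFile("file-2.parquet", Seq(Row(2, "b"))),
  DataFile("file-3.parquet", Seq(Row(3, "c")))
)

// Equivalent of: UPDATE ... SET data = 'apple' WHERE id = 1
val result = copyOnWriteUpdate(before, _.id == 1, _.copy(data = "apple"), "file-4.parquet")
println(result.deleted.map(_.path)) // List(file-1.parquet)
```

The original `before` files are never mutated. The update only changes which files the new snapshot's manifests mark as live, which is why the old data file with (1, a) is still on disk.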

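Summary

A manifest list corresponds to one snap file. The snap file records several manifest files: those added by this commit, those rewritten by this commit, and the manifest added by the previous snapshot. Each manifest file in turn records multiple data files.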
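The hierarchy in the summary can be walked in a few lines: snapshot, then manifest list (snap file), then manifests, then data files, skipping DELETED entries. The sketch below encodes snapshot 941010551389488871 with the file names from the dumps above and lists the data files a reader would scan. The case classes and `liveDataFiles` are hypothetical illustrations of the structure, not Iceberg's Java API.

```scala
// Minimal model: snapshot -> manifest list (snap-*.avro) -> manifests -> data files.
final case class Entry(status: Int, dataFile: String) // 0 EXISTING, 1 ADDED, 2 DELETED
final case class Manifest(path: String, entries: Seq[Entry])
final case class Snapshot(id: Long, manifestList: Seq[Manifest])

// Walk the tree and keep every data file whose entry is not DELETED.
def liveDataFiles(s: Snapshot): Seq[String] =
  s.manifestList.flatMap(_.entries).filter(_.status != 2).map(_.dataFile)

// Snapshot 941010551389488871 as recorded in the metadata files above.
val snap3 = Snapshot(941010551389488871L, Seq(
  Manifest("c41a3e4d-1dae-4635-97af-fe57e2765335-m1.avro", Seq(
    Entry(1, "00112-11-2d333752-b387-43ff-ac6e-30fea75cb791-00001.parquet"))),
  Manifest("0336be7d-9a6c-45a9-af77-036cb4379b9a-m0.avro", Seq(
    Entry(1, "00000-3-746396e4-6e79-4609-9933-867d1af62900-00001.parquet"),
    Entry(1, "00001-4-1a16a1dc-b8f8-4897-8e4b-d4482618f50a-00001.parquet"),
    Entry(1, "00002-5-e444dccc-e722-4bd6-8271-66875ab8ce01-00001.parquet"))),
  Manifest("c41a3e4d-1dae-4635-97af-fe57e2765335-m0.avro", Seq(
    Entry(2, "00000-0-59d54827-c03f-47f6-a6a1-fc74e6861dcf-00001.parquet"),
    Entry(0, "00001-1-3445ca12-3b21-4795-8fd7-5124ecc16c85-00001.parquet"),
    Entry(0, "00002-2-6659369d-638d-432a-83e8-0da1b0abce4f-00001.parquet")))
))

// Six files: the rewritten id=1 row, three from the second insert, two left from the first.
liveDataFiles(snap3).foreach(println)
```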