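Pitfalls when installing OpenCV 4 (14 Lectures on Visual SLAM, ch8)

In ch8 of the second edition of 14 Lectures on Visual SLAM, the LK optical flow code is new. It differs from the first edition and uses OpenCV 4.

If OpenCV 4 is not installed but CMakeLists.txt contains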

find_package(OpenCV 4 REQUIRED)

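then CMake looks for a version 4 package, cannot find one, and fails.

So OpenCV 4 has to be installed as well (in my case alongside existing OpenCV 3 and OpenCV 2 installations).

The installation and configuration of OpenCV 4 differ from OpenCV 3 in a few places.

For the OpenCV 3 installation, see my other blog post: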

https://blog.csdn.net/weixin_44684139/article/details/104837210

0. Preparation

This means installing the dependency packages:

sudo apt-get install build-essential libgtk2.0-dev libgtk-3-dev libavcodec-dev libavformat-dev libjpeg-dev libswscale-dev libtiff5-dev

sudo apt install python3-dev python3-numpy

sudo apt install libgstreamer-plugins-base1.0-dev libgstreamer1.0-dev

sudo apt install libpng-dev libopenexr-dev libtiff-dev libwebp-dev

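Before installing, it is still worth running locate to check whether a package is already present.

Note that python3-numpy (or python3-dev) is usually missing on machines set up mainly for ROS, so it needs to be installed.

If a later step fails and reports a missing dependency, just come back here and install it.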
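As a rough alternative to checking with locate, the sketch below asks dpkg which of the packages are not installed yet. The function name missing_pkgs is my own, and it assumes a Debian/Ubuntu system where dpkg-query is available; the package names are the ones from the apt commands above.

```shell
# Print every package from the argument list that dpkg does not report as installed.
# missing_pkgs is a hypothetical helper name, not part of any tool.
missing_pkgs() {
  local pkg
  for pkg in "$@"; do
    # dpkg-query prints "install ok installed" for a fully installed package
    if ! dpkg-query -W -f='${Status}' "$pkg" 2>/dev/null | grep -q "install ok installed"; then
      echo "$pkg"
    fi
  done
}

# Package names taken from the apt commands in this section
missing_pkgs build-essential libgtk2.0-dev libgtk-3-dev libavcodec-dev \
  libavformat-dev libjpeg-dev libswscale-dev libtiff5-dev \
  python3-dev python3-numpy \
  libgstreamer-plugins-base1.0-dev libgstreamer1.0-dev \
  libpng-dev libopenexr-dev libtiff-dev libwebp-dev
```

Anything it prints still needs an apt install; an empty output means dpkg considers all of them installed.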

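1. Installing OpenCV 4

1. Download the OpenCV 4.1.2 source package (the original post links to it here).

If you can reach the upstream site directly, you can also just find it online yourself.

2. Extract it, enter the extracted folder, and open a terminal there.

Enter the following (but read the notes below first):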

mkdir build

cd build

cmake -D CMAKE_BUILD_TYPE=Release -D OPENCV_GENERATE_PKGCONFIG=ON -D CMAKE_INSTALL_PREFIX=/usr/local/opencv4 ..

make -j4

sudo make install

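Note ①: compared with the OpenCV 3 install there is one extra option, -D OPENCV_GENERATE_PKGCONFIG=ON, which generates the pkg-config file.

Note ②: because the versions have to coexist, OpenCV 4 is installed into its own prefix, next to the earlier OpenCV 3 install.

The prefix is /usr/local/opencv4, alongside /usr/local/opencv3.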
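After sudo make install, one way to confirm that the pkg-config file from note ① was generated is to query it. This is only a sketch: the helper name opencv4_version is mine, and it assumes the file ends up at <prefix>/lib/pkgconfig/opencv4.pc, which is the usual location on Ubuntu but may be lib64 on other systems.

```shell
# Print the version recorded in <prefix>/lib/pkgconfig/opencv4.pc, or "not found".
# opencv4_version is a hypothetical helper name.
opencv4_version() {
  local prefix="$1"
  # PKG_CONFIG_LIBDIR replaces the default search path, so only this prefix
  # is consulted and a coexisting OpenCV elsewhere cannot answer instead
  PKG_CONFIG_LIBDIR="$prefix/lib/pkgconfig" pkg-config --modversion opencv4 2>/dev/null \
    || echo "not found"
}

opencv4_version /usr/local/opencv4
```

If this prints "not found", either the install has not run yet, the .pc file is in a different directory, or OPENCV_GENERATE_PKGCONFIG was not switched on.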

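Important

Partway through, one package downloads far too slowly: ippicv_2019_lnx_intel64_general_20180723.tgz

So press Ctrl+C to interrupt first.

We download it manually for offline use (the original post links to the download here).

Once the package is downloaded, configure it as follows (the original post links to a guide here).

In short: in the OpenCV 4.1.2 folder that was downloaded and extracted at the start, open opencv4.1.2/3rdparty/ippicv/ippicv.cmake and change the quoted string on line 47 to the form "file:~/Downloads/", substituting the directory where you put ippicv_2019_lnx_intel64_general_20180723.tgz (an absolute path is the safe choice). Mine is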

"file:/home/mjy/slambook2/3rdparty/opencv4/ippicv/"

With this change the package is "downloaded" from the local directory. This step is also different from OpenCV 3.
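The same edit can be scripted instead of done by hand. The sketch below assumes the quoted string in ippicv.cmake is an https URL ending in /ippicv/ (please check your copy of the file first, since I am matching on that pattern rather than on line 47). The function name patch_ippicv_url is made up for this example, and sed keeps a .bak backup of the original file.

```shell
# Replace the quoted https download URL in ippicv.cmake with a local file: URL.
# patch_ippicv_url is a hypothetical helper; $1 = path to ippicv.cmake,
# $2 = absolute directory that holds the .tgz (without trailing slash).
patch_ippicv_url() {
  local cmake_file="$1" local_dir="$2"
  sed -i.bak "s|\"https://[^\"]*/ippicv/\"|\"file:${local_dir}/\"|" "$cmake_file"
}

# Example call, using the path from this post (uncomment and adjust to your own):
# patch_ippicv_url opencv4.1.2/3rdparty/ippicv/ippicv.cmake /home/mjy/slambook2/3rdparty/opencv4/ippicv
```

After patching, rerun the cmake command from the build directory so the package is picked up from the local path.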

2. Checking and testing

1. Check

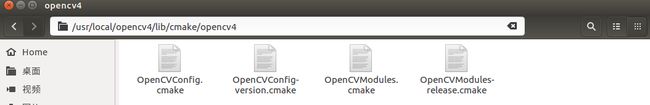

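First check that OpenCVConfig.cmake was installed correctly.

Run locate OpenCVConfig.cmake to see where it is. Here it turns out to be in /usr/local/opencv4/lib/cmake/opencv4

So go to that path: /usr/local/opencv4/lib/cmake/opencv4

If these files are there, find_package can find the package, so that part is settled. All that remains is setting the path.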
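The check can also be done without locate (whose database may be stale right after installing). A minimal sketch, assuming the two files CMake needs are named OpenCVConfig.cmake and OpenCVConfig-version.cmake; has_opencv_config is a helper name I made up.

```shell
# Succeed only if the directory contains the CMake package files for OpenCV.
# has_opencv_config is a hypothetical helper name.
has_opencv_config() {
  local dir="$1"
  [ -f "$dir/OpenCVConfig.cmake" ] && [ -f "$dir/OpenCVConfig-version.cmake" ]
}

if has_opencv_config /usr/local/opencv4/lib/cmake/opencv4; then
  echo "OpenCV 4 CMake config found"
else
  echo "OpenCV 4 CMake config missing"
fi
```

The directory passed in is the same one that later goes into OpenCV_DIR.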

2. Test

First set up CMakeLists.txt as follows:

cmake_minimum_required(VERSION 2.8)
project(ch8)

set(CMAKE_BUILD_TYPE "Release")
add_definitions("-DENABLE_SSE")
set(CMAKE_CXX_FLAGS "-std=c++11 ${SSE_FLAGS} -g -O3 -march=native")

set(OpenCV_DIR "/usr/local/opencv4/lib/cmake/opencv4")
find_package(OpenCV 4 REQUIRED)
find_package(Sophus REQUIRED)
find_package(Pangolin REQUIRED)

include_directories(
        ${OpenCV_INCLUDE_DIRS}
        ${G2O_INCLUDE_DIRS}
        ${Sophus_INCLUDE_DIRS}
        "/usr/local/include/eigen3"
        ${Pangolin_INCLUDE_DIRS}
)

add_executable(optical_flow optical_flow.cpp)
target_link_libraries(optical_flow ${OpenCV_LIBS})

# add_executable(direct_method direct_method.cpp)
# target_link_libraries(direct_method ${OpenCV_LIBS} ${Pangolin_LIBRARIES})

The part to focus on is the OpenCV 4 configuration:

set(OpenCV_DIR "/usr/local/opencv4/lib/cmake/opencv4")

find_package(OpenCV 4 REQUIRED)

This path matches the one found with locate earlier.

Build it.

If the code still fails with

error: ‘CV_GRAY2BGR’ was not declared in this scope cv::cvtColor(img2, img2_single, CV_GRAY2BGR);

then CV_GRAY2BGR needs to be updated to COLOR_GRAY2BGR.
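If the old constant appears in several places, the rename can be applied in one go. The sketch below is only an illustration: rename_gray2bgr is a made-up helper, and it writes the namespaced form cv::COLOR_GRAY2BGR, which is the same constant and also compiles in files without using namespace cv. sed leaves a .bak copy of the original file.

```shell
# Replace every CV_GRAY2BGR in a source file with cv::COLOR_GRAY2BGR.
# rename_gray2bgr is a hypothetical helper; $1 = path to the .cpp file.
rename_gray2bgr() {
  local src="$1"
  sed -i.bak 's/CV_GRAY2BGR/cv::COLOR_GRAY2BGR/g' "$src"
}

# Example call (uncomment and point it at your own file):
# rename_gray2bgr optical_flow.cpp
```

Other CV_-prefixed conversion codes that trigger the same error can be handled the same way, one constant at a time.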

The test code follows (the LK optical flow from ch8 of Dr. Gao's book):

//
// Created by Xiang on 2017/12/19.
//

#include <opencv2/opencv.hpp>
#include <algorithm>
#include <chrono>
#include <cmath>
#include <functional>
#include <iostream>
#include <string>
#include <vector>
#include <Eigen/Core>
#include <Eigen/Dense>

using namespace std;
using namespace cv;

string file_1 = "/home/mjy/slambook2/ch8/LK1.png";  // first image
string file_2 = "/home/mjy/slambook2/ch8/LK2.png";  // second image

/// Optical flow tracker and interface
class OpticalFlowTracker {
public:
    OpticalFlowTracker(
        const Mat &img1_,
        const Mat &img2_,
        const vector<KeyPoint> &kp1_,
        vector<KeyPoint> &kp2_,
        vector<bool> &success_,
        bool inverse_ = true, bool has_initial_ = false) :
        img1(img1_), img2(img2_), kp1(kp1_), kp2(kp2_), success(success_), inverse(inverse_),
        has_initial(has_initial_) {}

    void calculateOpticalFlow(const Range &range);

private:
    const Mat &img1;
    const Mat &img2;
    const vector<KeyPoint> &kp1;
    vector<KeyPoint> &kp2;
    vector<bool> &success;
    bool inverse = true;
    bool has_initial = false;
};

/**
 * single level optical flow
 * @param [in] img1 the first image
 * @param [in] img2 the second image
 * @param [in] kp1 keypoints in img1
 * @param [in|out] kp2 keypoints in img2, if empty, use initial guess in kp1
 * @param [out] success true if a keypoint is tracked successfully
 * @param [in] inverse use inverse formulation?
 * @param [in] has_initial_guess set true if kp2 already holds an initial guess
 */
void OpticalFlowSingleLevel(
    const Mat &img1,
    const Mat &img2,
    const vector<KeyPoint> &kp1,
    vector<KeyPoint> &kp2,
    vector<bool> &success,
    bool inverse = false,
    bool has_initial_guess = false
);

/**
 * multi level optical flow, scale of pyramid is set to 2 by default
 * the image pyramid will be created inside the function
 * @param [in] img1 the first pyramid
 * @param [in] img2 the second pyramid
 * @param [in] kp1 keypoints in img1
 * @param [out] kp2 keypoints in img2
 * @param [out] success true if a keypoint is tracked successfully
 * @param [in] inverse set true to enable inverse formulation
 */
void OpticalFlowMultiLevel(
    const Mat &img1,
    const Mat &img2,
    const vector<KeyPoint> &kp1,
    vector<KeyPoint> &kp2,
    vector<bool> &success,
    bool inverse = false
);

/**
 * get a gray scale value from reference image (bi-linear interpolated)
 * @param img
 * @param x
 * @param y
 * @return the interpolated value of this pixel
 */
inline float GetPixelValue(const cv::Mat &img, float x, float y) {
    // boundary check: clamp the sample point into the image
    if (x < 0) x = 0;
    if (y < 0) y = 0;
    if (x >= img.cols) x = img.cols - 1;
    if (y >= img.rows) y = img.rows - 1;
    int x0 = int(x), y0 = int(y);
    // clamp the right/bottom neighbours so the last column/row is never read past
    int x1 = std::min(x0 + 1, img.cols - 1);
    int y1 = std::min(y0 + 1, img.rows - 1);
    float xx = x - x0;
    float yy = y - y0;
    return float(
        (1 - xx) * (1 - yy) * img.at<uchar>(y0, x0) +
        xx * (1 - yy) * img.at<uchar>(y0, x1) +
        (1 - xx) * yy * img.at<uchar>(y1, x0) +
        xx * yy * img.at<uchar>(y1, x1)
    );
}

int main(int argc, char **argv) {
    // images, note they are CV_8UC1, not CV_8UC3
    Mat img1 = imread(file_1, 0);
    Mat img2 = imread(file_2, 0);
    if (img1.empty() || img2.empty()) {
        cerr << "cannot load " << file_1 << " or " << file_2 << endl;
        return 1;
    }

    // key points, using GFTT here.
    vector<KeyPoint> kp1;
    Ptr<GFTTDetector> detector = GFTTDetector::create(500, 0.01, 20);  // maximum 500 keypoints
    detector->detect(img1, kp1);

    // now lets track these key points in the second image
    // first use single level LK in the validation picture
    vector<KeyPoint> kp2_single;
    vector<bool> success_single;
    OpticalFlowSingleLevel(img1, img2, kp1, kp2_single, success_single);

    // then test multi-level LK
    vector<KeyPoint> kp2_multi;
    vector<bool> success_multi;
    chrono::steady_clock::time_point t1 = chrono::steady_clock::now();
    OpticalFlowMultiLevel(img1, img2, kp1, kp2_multi, success_multi, true);
    chrono::steady_clock::time_point t2 = chrono::steady_clock::now();
    auto time_used = chrono::duration_cast<chrono::duration<double>>(t2 - t1);
    cout << "optical flow by gauss-newton: " << time_used.count() << endl;

    // use opencv's flow for validation
    vector<Point2f> pt1, pt2;
    for (auto &kp: kp1) pt1.push_back(kp.pt);
    vector<uchar> status;
    vector<float> error;
    t1 = chrono::steady_clock::now();
    cv::calcOpticalFlowPyrLK(img1, img2, pt1, pt2, status, error);
    t2 = chrono::steady_clock::now();
    time_used = chrono::duration_cast<chrono::duration<double>>(t2 - t1);
    cout << "optical flow by opencv: " << time_used.count() << endl;

    // plot the differences of those functions
    Mat img2_single;
    cv::cvtColor(img2, img2_single, COLOR_GRAY2BGR);
    for (size_t i = 0; i < kp2_single.size(); i++) {
        if (success_single[i]) {
            cv::circle(img2_single, kp2_single[i].pt, 2, cv::Scalar(0, 250, 0), 2);
            cv::line(img2_single, kp1[i].pt, kp2_single[i].pt, cv::Scalar(0, 250, 0));
        }
    }

    Mat img2_multi;
    cv::cvtColor(img2, img2_multi, COLOR_GRAY2BGR);
    for (size_t i = 0; i < kp2_multi.size(); i++) {
        if (success_multi[i]) {
            cv::circle(img2_multi, kp2_multi[i].pt, 2, cv::Scalar(0, 250, 0), 2);
            cv::line(img2_multi, kp1[i].pt, kp2_multi[i].pt, cv::Scalar(0, 250, 0));
        }
    }

    Mat img2_CV;
    cv::cvtColor(img2, img2_CV, COLOR_GRAY2BGR);
    for (size_t i = 0; i < pt2.size(); i++) {
        if (status[i]) {
            cv::circle(img2_CV, pt2[i], 2, cv::Scalar(0, 250, 0), 2);
            cv::line(img2_CV, pt1[i], pt2[i], cv::Scalar(0, 250, 0));
        }
    }

    cv::imshow("tracked single level", img2_single);
    cv::imshow("tracked multi level", img2_multi);
    cv::imshow("tracked by opencv", img2_CV);
    cv::waitKey(0);

    return 0;
}

void OpticalFlowSingleLevel(
    const Mat &img1,
    const Mat &img2,
    const vector<KeyPoint> &kp1,
    vector<KeyPoint> &kp2,
    vector<bool> &success,
    bool inverse, bool has_initial) {
    kp2.resize(kp1.size());
    success.resize(kp1.size());
    OpticalFlowTracker tracker(img1, img2, kp1, kp2, success, inverse, has_initial);
    parallel_for_(Range(0, kp1.size()),
                  std::bind(&OpticalFlowTracker::calculateOpticalFlow, &tracker, placeholders::_1));
}

void OpticalFlowTracker::calculateOpticalFlow(const Range &range) {
    // parameters
    int half_patch_size = 4;
    int iterations = 10;
    for (int i = range.start; i < range.end; i++) {
        auto kp = kp1[i];
        double dx = 0, dy = 0;  // dx, dy need to be estimated
        if (has_initial) {
            dx = kp2[i].pt.x - kp.pt.x;
            dy = kp2[i].pt.y - kp.pt.y;
        }

        double cost = 0, lastCost = 0;
        bool succ = true;  // indicate if this point succeeded

        // Gauss-Newton iterations
        Eigen::Matrix2d H = Eigen::Matrix2d::Zero();  // hessian
        Eigen::Vector2d b = Eigen::Vector2d::Zero();  // bias
        Eigen::Vector2d J;  // jacobian
        for (int iter = 0; iter < iterations; iter++) {
            if (inverse == false) {
                H = Eigen::Matrix2d::Zero();
                b = Eigen::Vector2d::Zero();
            } else {
                // only reset b
                b = Eigen::Vector2d::Zero();
            }

            cost = 0;

            // compute cost and jacobian
            for (int x = -half_patch_size; x < half_patch_size; x++)
                for (int y = -half_patch_size; y < half_patch_size; y++) {
                    double error = GetPixelValue(img1, kp.pt.x + x, kp.pt.y + y) -
                                   GetPixelValue(img2, kp.pt.x + x + dx, kp.pt.y + y + dy);
                    // Jacobian
                    if (inverse == false) {
                        J = -1.0 * Eigen::Vector2d(
                            0.5 * (GetPixelValue(img2, kp.pt.x + dx + x + 1, kp.pt.y + dy + y) -
                                   GetPixelValue(img2, kp.pt.x + dx + x - 1, kp.pt.y + dy + y)),
                            0.5 * (GetPixelValue(img2, kp.pt.x + dx + x, kp.pt.y + dy + y + 1) -
                                   GetPixelValue(img2, kp.pt.x + dx + x, kp.pt.y + dy + y - 1))
                        );
                    } else {
                        // in inverse mode J is taken from img1, so it does not change when
                        // dx, dy is updated and H from the first iteration can be reused.
                        // J is still recomputed for each pixel here: it is not cached, and
                        // skipping this after iter 0 would reuse the last pixel's J for b.
                        J = -1.0 * Eigen::Vector2d(
                            0.5 * (GetPixelValue(img1, kp.pt.x + x + 1, kp.pt.y + y) -
                                   GetPixelValue(img1, kp.pt.x + x - 1, kp.pt.y + y)),
                            0.5 * (GetPixelValue(img1, kp.pt.x + x, kp.pt.y + y + 1) -
                                   GetPixelValue(img1, kp.pt.x + x, kp.pt.y + y - 1))
                        );
                    }
                    // compute H, b and set cost;
                    b += -error * J;
                    cost += error * error;
                    if (inverse == false || iter == 0) {
                        // also update H
                        H += J * J.transpose();
                    }
                }

            // compute update
            Eigen::Vector2d update = H.ldlt().solve(b);

            if (std::isnan(update[0])) {
                // sometimes occurred when we have a black or white patch and H is irreversible
                cout << "update is nan" << endl;
                succ = false;
                break;
            }

            if (iter > 0 && cost > lastCost) {
                break;
            }

            // update dx, dy
            dx += update[0];
            dy += update[1];
            lastCost = cost;
            succ = true;

            if (update.norm() < 1e-2) {
                // converge
                break;
            }
        }

        success[i] = succ;

        // set kp2
        kp2[i].pt = kp.pt + Point2f(dx, dy);
    }
}

void OpticalFlowMultiLevel(
    const Mat &img1,
    const Mat &img2,
    const vector<KeyPoint> &kp1,
    vector<KeyPoint> &kp2,
    vector<bool> &success,
    bool inverse) {

    // parameters
    int pyramids = 4;
    double pyramid_scale = 0.5;
    double scales[] = {1.0, 0.5, 0.25, 0.125};

    // create pyramids
    chrono::steady_clock::time_point t1 = chrono::steady_clock::now();
    vector<Mat> pyr1, pyr2;  // image pyramids
    for (int i = 0; i < pyramids; i++) {
        if (i == 0) {
            pyr1.push_back(img1);
            pyr2.push_back(img2);
        } else {
            Mat img1_pyr, img2_pyr;
            cv::resize(pyr1[i - 1], img1_pyr,
                       cv::Size(pyr1[i - 1].cols * pyramid_scale, pyr1[i - 1].rows * pyramid_scale));
            cv::resize(pyr2[i - 1], img2_pyr,
                       cv::Size(pyr2[i - 1].cols * pyramid_scale, pyr2[i - 1].rows * pyramid_scale));
            pyr1.push_back(img1_pyr);
            pyr2.push_back(img2_pyr);
        }
    }
    chrono::steady_clock::time_point t2 = chrono::steady_clock::now();
    auto time_used = chrono::duration_cast<chrono::duration<double>>(t2 - t1);
    cout << "build pyramid time: " << time_used.count() << endl;

    // coarse-to-fine LK tracking in pyramids
    vector<KeyPoint> kp1_pyr, kp2_pyr;
    for (auto &kp: kp1) {
        auto kp_top = kp;
        kp_top.pt *= scales[pyramids - 1];
        kp1_pyr.push_back(kp_top);
        kp2_pyr.push_back(kp_top);
    }

    for (int level = pyramids - 1; level >= 0; level--) {
        // from coarse to fine
        success.clear();
        t1 = chrono::steady_clock::now();
        OpticalFlowSingleLevel(pyr1[level], pyr2[level], kp1_pyr, kp2_pyr, success, inverse, true);
        t2 = chrono::steady_clock::now();
        auto time_used = chrono::duration_cast<chrono::duration<double>>(t2 - t1);
        cout << "track pyr " << level << " cost time: " << time_used.count() << endl;

        if (level > 0) {
            for (auto &kp: kp1_pyr)
                kp.pt /= pyramid_scale;
            for (auto &kp: kp2_pyr)
                kp.pt /= pyramid_scale;
        }
    }

    for (auto &kp: kp2_pyr)
        kp2.push_back(kp);
}