Kinect+OpenNI学习笔记之8(Robert Walter手部提取代码的分析)

前言

一般情况下,手势识别的第一步就是先手势定位,即手势所在部位的提取。本文是基于kinect来提取手势识别的,即先通过kinect找出人体的轮廓,然后定位轮廓中与手部有关的点,在该点的周围提取出满足一定要求的区域,对该区域进行滤波后得到的区域就是手部了。然后利用凸包和凹陷的数学几何方法,画出手指和手指凹陷处的点,以及手的轮廓线,并在图像中显示出来。文章所有代码都是网友Robert Walter提供的,它的代码下载网站为:http://dl.dropbox.com/u/5505209/FingertipTuio3d.zip

本人因为要做这方面的研究,所有本文只是读了他的代码,并稍加分析了下。

开发环境:OpenNI+OpenCV

实验说明

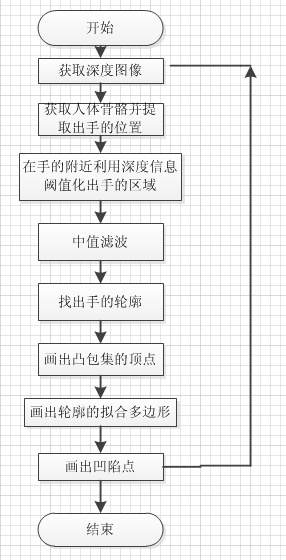

手势定位和提取功能的实现主要是依靠OpenNI和OpenCV的实现,定位部分依靠OpenNI的人体骨架跟踪功能,手部的提取依靠OpenCV中一些与轮廓有关的函数。整个实验的流程图如下:

手部提取时用到的几个OpenCV函数解释如下:

void convexHull(InputArray points, OutputArray hull, bool clockwise=false, bool returnPoints=true )

该函数的作用是找到输入点集points的凸包集合,参数1为输入的点集;参数2为是与输出凸包集合有关的,它是一个向量,如果向量元素的类型为整型,则表示其为凸包集合的点在原始输入集合点的下标索引,如果向量的数据类型为Point型,则表示其为凸包的点集;参数3表示输出凸包集合的方向,为true时表示顺时针方向输出;参数4表示是否输出凸包的集合中的点坐标,这个只有在参数2的类型为Mat型的时候有效,如果参数2为vector类型,则根据vector中元素类型来选择输出凸包到底是点的坐标还是原始输入点的索引,也就是说此时的参数4没有作用。

void convexityDefects(InputArray contour, InputArray convexhull, OutputArray convexityDefects)

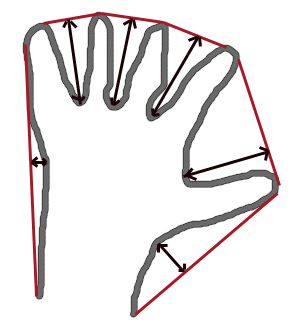

该函数的作用是对输入的轮廓contour,凸包集合来检测其轮廓的凸型缺陷,一个凸型缺陷结构体包括4个元素,缺陷起点坐标,缺陷终点坐标,缺陷中离凸包线距离最远的点的坐标,以及此时最远的距离。参数3即其输出的凸型缺陷结构体向量。

其凸型缺陷的示意图如下所示:

void findContours(InputOutputArray image, OutputArrayOfArrays contours, OutputArray hierarchy, int mode, int method, Point offset=Point()

该函数是找到输入图像image的轮廓,存储在contours中。输入的图像类型必须要求是8位单通道的二值图像,只要是非0元素都被看成是1,只要是0元素就被看做是0;参数hierarchy存储的是每个轮廓的拓扑信息,参数method表示轮廓提取的模式;参数4表示轮廓提取的近似方法,即怎么保存轮廓信息;参数5表示轮廓可移动的位移。

void *memcpy(void *dest, const void *src, size_t n);

该函数的作用是从源src所指的内存地址的起始位置开始拷贝n个字节到目标dest所指的内存地址的起始位置中,主要不要把源地址和目的地址的顺序搞反了。

实验结果

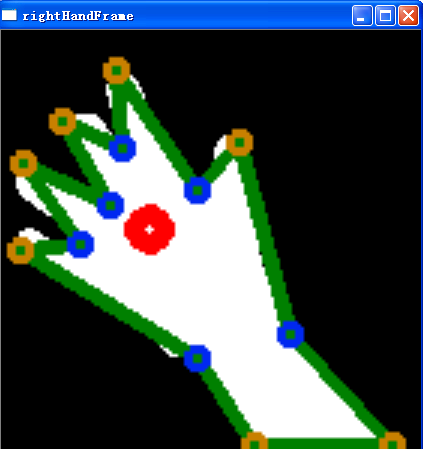

在进行手部的提取时,因为要先提取出人体全身的骨骼,再定位手的坐标,所以人必须先处于站立状态,一旦人体骨骼提取成功后,可以改为坐立姿态,其手部提取并显示的一张图如下所示:

网友Robert Walter把它的演示视频放在这里: http://www.youtube.com/watch?v=lCuItHQEgEQ&feature=player_embedded

实验主要部分代码和注释(参考资料中有工程代码下载地址):

main.cpp:

#include "SkeletonSensor.h" // openCV #include <opencv/highgui.h> #include <opencv/cv.h> using namespace cv; #include <iostream> using namespace std; // globals SkeletonSensor* sensor; const unsigned int XRES = 640; const unsigned int YRES = 480; const float DEPTH_SCALE_FACTOR = 255./4096.; // defines the value about which thresholding occurs const unsigned int BIN_THRESH_OFFSET = 5; // defines the value about witch the region of interest is extracted const unsigned int ROI_OFFSET = 70; // median blur factor const unsigned int MEDIAN_BLUR_K = 5; // grasping threshold const double GRASPING_THRESH = 0.9; // colors const Scalar COLOR_BLUE = Scalar(240,40,0); const Scalar COLOR_DARK_GREEN = Scalar(0, 128, 0); const Scalar COLOR_LIGHT_GREEN = Scalar(0,255,0); const Scalar COLOR_YELLOW = Scalar(0,128,200); const Scalar COLOR_RED = Scalar(0,0,255); // returns true if the hand is near the sensor area bool handApproachingDisplayPerimeter(float x, float y) { return (x > (XRES - ROI_OFFSET)) || (x < (ROI_OFFSET)) || (y > (YRES - ROI_OFFSET)) || (y < (ROI_OFFSET)); } // conversion from cvConvexityDefect struct ConvexityDefect { Point start; Point end; Point depth_point; float depth; }; // Thanks to Jose Manuel Cabrera for part of this C++ wrapper function //Convexity為凸的意思,Defect為缺陷的意思,hull為殼的意思 //貌似這個函數在opencv中已經被實現了 void findConvexityDefects(vector<Point>& contour, vector<int>& hull, vector<ConvexityDefect>& convexDefects) { if(hull.size() > 0 && contour.size() > 0) { CvSeq* contourPoints; CvSeq* defects; CvMemStorage* storage; CvMemStorage* strDefects; CvMemStorage* contourStr; CvConvexityDefect *defectArray = 0; strDefects = cvCreateMemStorage(); defects = cvCreateSeq( CV_SEQ_KIND_GENERIC|CV_32SC2, sizeof(CvSeq),sizeof(CvPoint), strDefects ); //We transform our vector<Point> into a CvSeq* object of CvPoint. contourStr = cvCreateMemStorage(); contourPoints = cvCreateSeq(CV_SEQ_KIND_GENERIC|CV_32SC2, sizeof(CvSeq), sizeof(CvPoint), contourStr); for(int i = 0; i < (int)contour.size(); i++) { CvPoint cp = {contour[i].x, contour[i].y}; cvSeqPush(contourPoints, &cp); } //Now, we do the same thing with the hull index int count = (int) hull.size(); //int hullK[count]; int* hullK = (int*) malloc(count*sizeof(int)); for(int i = 0; i < count; i++) { hullK[i] = hull.at(i); } CvMat hullMat = cvMat(1, count, CV_32SC1, hullK); // calculate convexity defects storage = cvCreateMemStorage(0); defects = cvConvexityDefects(contourPoints, &hullMat, storage); defectArray = (CvConvexityDefect*)malloc(sizeof(CvConvexityDefect)*defects->total); cvCvtSeqToArray(defects, defectArray, CV_WHOLE_SEQ); //printf("DefectArray %i %i\n",defectArray->end->x, defectArray->end->y); //We store defects points in the convexDefects parameter. for(int i = 0; i<defects->total; i++){ ConvexityDefect def; def.start = Point(defectArray[i].start->x, defectArray[i].start->y); def.end = Point(defectArray[i].end->x, defectArray[i].end->y); def.depth_point = Point(defectArray[i].depth_point->x, defectArray[i].depth_point->y); def.depth = defectArray[i].depth; convexDefects.push_back(def); } // release memory cvReleaseMemStorage(&contourStr); cvReleaseMemStorage(&strDefects); cvReleaseMemStorage(&storage); } } int main(int argc, char** argv) { // initialize the kinect sensor = new SkeletonSensor(); sensor->initialize(); sensor->setPointModeToProjective(); Mat depthRaw(YRES, XRES, CV_16UC1); Mat depthShow(YRES, XRES, CV_8UC1); Mat handDebug; // this vector holds the displayed images of the hands vector<Mat> debugFrames; // rectangle used to extract hand regions from depth map Rect roi; roi.width = ROI_OFFSET*2; roi.height = ROI_OFFSET*2; namedWindow("depthFrame", CV_WINDOW_AUTOSIZE); namedWindow("leftHandFrame", CV_WINDOW_AUTOSIZE); namedWindow("rightHandFrame", CV_WINDOW_AUTOSIZE); int key = 0; while(key != 27 && key != 'q') { sensor->waitForDeviceUpdateOnUser(); // update 16 bit depth matrix //參數3後面乘以2是因為kinect獲得的深度數據是1個像素2字節的 memcpy(depthRaw.data, sensor->getDepthData(), XRES*YRES*2); //轉換成8位深度圖 depthRaw.convertTo(depthShow, CV_8U, DEPTH_SCALE_FACTOR); for(int handI = 0; handI < 2; handI++) { int handDepth; if(sensor->getNumTrackedUsers() > 0) { //Skeleton是包含15個人體骨骼節點的結構體 Skeleton skel = sensor->getSkeleton(sensor->getUID(0)); //struct SkeletonPoint //{ // float x, y, z, confidence; //}; SkeletonPoint hand; if( handI == 0) hand = skel.leftHand;//hand中保存左手點的座標 else hand = skel.rightHand;//hand中保存有手點的座標 if(hand.confidence == 1.0)//手部的置信度为1 { handDepth = hand.z * (DEPTH_SCALE_FACTOR);//轉換為8bit后的深度值 //handApproachingDisplayPerimeter返回為真是說明,手的位置已經越界 if(!handApproachingDisplayPerimeter(hand.x, hand.y)) { roi.x = hand.x - ROI_OFFSET; //截取出感興趣的區域roi,其區域大小由經驗值來設定 roi.y = hand.y - ROI_OFFSET; } } } else handDepth = -1; // extract hand from image Mat handCpy(depthShow, roi); //handCpy只是與depthShow共用數據內存而已 Mat handMat = handCpy.clone(); //真正的擁有自己的內存區域 // binary threshold if(handDepth != -1) //BIN_THRESH_OFFSET == 5; //手部的閾值化,檢測到手部后,根據手部前後的深度信息來提取手的輪廓,此時,handMat就是0和1的矩陣了 handMat = (handMat > (handDepth - BIN_THRESH_OFFSET)) & (handMat < (handDepth + BIN_THRESH_OFFSET)); // last pre-filtering step, apply median blur medianBlur(handMat, handMat, MEDIAN_BLUR_K); // create debug image of thresholded hand and cvt to RGB so hints show in color handDebug = handMat.clone(); debugFrames.push_back(handDebug); //CV_GRAY2RGB表示3个通道的值是一样的 cvtColor(debugFrames[handI], debugFrames[handI], CV_GRAY2RGB); std::vector< std::vector<Point> > contours; //提取全部轮廓信息到contours,轮廓信息采用水平,垂直对角线的末断点存储。 findContours(handMat, contours, CV_RETR_LIST, CV_CHAIN_APPROX_SIMPLE); //下面就是画多边形和点 if (contours.size()) { for (int i = 0; i < contours.size(); i++) { vector<Point> contour = contours[i]; //将vector转换成Mat型,此时的Mat还是列向量,只不过是2个通道的列向量而已 Mat contourMat = Mat(contour); //返回轮廓的面积 double cArea = contourArea(contourMat); if(cArea > 2000) // likely the hand { //找到轮廓的中心点 Scalar center = mean(contourMat); Point centerPoint = Point(center.val[0], center.val[1]); // approximate the contour by a simple curve vector<Point> approxCurve; //求出轮廓的封闭的曲线,保存在approxCurve,轮廓和封闭曲线直接的最大距离为10 approxPolyDP(contourMat, approxCurve, 10, true); vector< vector<Point> > debugContourV; debugContourV.push_back(approxCurve); //在参数1中画出轮廓参数2,参数2必须是轮廓的集合,所以参数2是 //vector< vector<Point> >类型 //深绿色代表近似多边形 drawContours(debugFrames[handI], debugContourV, 0, COLOR_DARK_GREEN, 3); vector<int> hull; //找出近似曲线的凸包集合,集合hull中存储的是轮廓中凸包点的下标 convexHull(Mat(approxCurve), hull, false, false); // draw the hull points for(int j = 0; j < hull.size(); j++) { int index = hull[j]; //凸顶点用黄色表示 circle(debugFrames[handI], approxCurve[index], 3, COLOR_YELLOW, 2); } // find convexity defects vector<ConvexityDefect> convexDefects; findConvexityDefects(approxCurve, hull, convexDefects); printf("Number of defects: %d.\n", (int) convexDefects.size()); for(int j = 0; j < convexDefects.size(); j++) { //缺陷点用蓝色表示 circle(debugFrames[handI], convexDefects[j].depth_point, 3, COLOR_BLUE, 2); } // assemble point set of convex hull //将凸包集以点的坐标形式保存下来 vector<Point> hullPoints; for(int k = 0; k < hull.size(); k++) { int curveIndex = hull[k]; Point p = approxCurve[curveIndex]; hullPoints.push_back(p); } // area of hull and curve double hullArea = contourArea(Mat(hullPoints)); double curveArea = contourArea(Mat(approxCurve)); double handRatio = curveArea/hullArea; // hand is grasping //GRASPING_THRESH == 0.9 if(handRatio > GRASPING_THRESH) //握拳表示绿色 circle(debugFrames[handI], centerPoint, 5, COLOR_LIGHT_GREEN, 5); else //一般情况下手张开其中心点是显示红色 circle(debugFrames[handI], centerPoint, 5, COLOR_RED, 5); } } // contour conditional } // hands loop } imshow("depthFrame", depthShow); //debugFrames只保存2帧图像 if(debugFrames.size() >= 2 ) { //长和宽的尺寸都扩大3倍 resize(debugFrames[0], debugFrames[0], Size(), 3, 3); resize(debugFrames[1], debugFrames[1], Size(), 3, 3); imshow("leftHandFrame", debugFrames[0]); imshow("rightHandFrame", debugFrames[1]); debugFrames.clear(); } key = waitKey(10); } delete sensor; return 0; }

skeletonSensor.h:

#ifndef SKELETON_SENSOR_H #define SKELETON_SENSOR_H #include <XnCppWrapper.h> #include <vector> // A 3D point with the confidence of the point's location. confidence_ > 0.5 is good struct SkeletonPoint { float x, y, z, confidence; }; struct Skeleton { SkeletonPoint head; SkeletonPoint neck; SkeletonPoint rightShoulder; SkeletonPoint leftShoulder; SkeletonPoint rightElbow; SkeletonPoint leftElbow; SkeletonPoint rightHand; SkeletonPoint leftHand; SkeletonPoint rightHip; SkeletonPoint leftHip; SkeletonPoint rightKnee; SkeletonPoint leftKnee; SkeletonPoint rightFoot; SkeletonPoint leftFoot; SkeletonPoint torso; }; // SkeletonSensor: A wrapper for OpenNI Skeleton tracking devices // // Requires the OpenNI + NITE framework installation and the device driver // Tracks users within the device FOV, and assists in collection of user joints data class SkeletonSensor { public: SkeletonSensor(); ~SkeletonSensor(); // set up the device resolution and data generators int initialize(); // non-blocking wait for new data on the device void waitForDeviceUpdateOnUser(); // update vector of tracked users void updateTrackedUsers(); // return true if UID is among the tracked users bool isTracking(const unsigned int uid); // returns skeleton of specified user Skeleton getSkeleton(const unsigned int uid); // returns vector of skeletons for all users std::vector<Skeleton> getSkeletons(); // get number of tracked users unsigned int getNumTrackedUsers(); // map tracked user index to UID unsigned int getUID(const unsigned int index); // change point mode void setPointModeToProjective(); void setPointModeToReal(); // get depth and image data const XnDepthPixel* getDepthData(); const XnDepthPixel* getWritableDepthData(){}; const XnUInt8* getImageData(); const XnLabel* getLabels(); private: xn::Context context_; xn::DepthGenerator depthG_; xn::UserGenerator userG_; xn::ImageGenerator imageG_; std::vector<unsigned int> trackedUsers_; // current list of hands //std::list<XnPoint3D> handCursors; bool pointModeProjective_; // on user detection and calibration, call specified functions int setCalibrationPoseCallbacks(); // joint to point conversion, considers point mode void convertXnJointsToPoints(XnSkeletonJointPosition* const j, SkeletonPoint* const p, unsigned int numPoints); // callback functions for user and skeleton calibration events static void XN_CALLBACK_TYPE newUserCallback(xn::UserGenerator& generator, XnUserID nId, void* pCookie); static void XN_CALLBACK_TYPE lostUserCallback(xn::UserGenerator& generator, XnUserID nId, void* pCookie); static void XN_CALLBACK_TYPE calibrationStartCallback(xn::SkeletonCapability& capability, XnUserID nId, void* pCookie); static void XN_CALLBACK_TYPE calibrationCompleteCallback(xn::SkeletonCapability& capability, XnUserID nId, XnCalibrationStatus eStatus, void* pCookie); static void XN_CALLBACK_TYPE poseDetectedCallback(xn::PoseDetectionCapability& capability, const XnChar* strPose, XnUserID nId, void* pCookie); }; #endif

skeletonSensor.cpp:

#include "SkeletonSensor.h" #include "log.h" inline int CHECK_RC(const unsigned int rc, const char* const description) { if(rc != XN_STATUS_OK) { put_flog(LOG_ERROR, "%s failed: %s", description, xnGetStatusString(rc)); return -1; } return 0; } SkeletonSensor::SkeletonSensor() { pointModeProjective_ = false; } SkeletonSensor::~SkeletonSensor() { context_.Shutdown(); } int SkeletonSensor::initialize() { context_.Init(); XnStatus rc = XN_STATUS_OK; // create depth and user generators rc = depthG_.Create(context_); if(CHECK_RC(rc, "Create depth generator") == -1) return -1; rc = userG_.Create(context_); if(CHECK_RC(rc, "Create user generator") == -1) return -1; rc = imageG_.Create(context_); if(CHECK_RC(rc, "Create image generator") == -1) return -1; XnMapOutputMode mapMode; depthG_.GetMapOutputMode(mapMode); // for now, make output map VGA resolution at 30 FPS mapMode.nXRes = XN_VGA_X_RES; mapMode.nYRes = XN_VGA_Y_RES; mapMode.nFPS = 30; depthG_.SetMapOutputMode(mapMode); imageG_.SetMapOutputMode(mapMode); // turn on device mirroring if(depthG_.IsCapabilitySupported("Mirror") == true) { rc = depthG_.GetMirrorCap().SetMirror(true); CHECK_RC(rc, "Setting Image Mirroring on depthG"); } // turn on device mirroring if(imageG_.IsCapabilitySupported("Mirror") == true) { rc = imageG_.GetMirrorCap().SetMirror(true); CHECK_RC(rc, "Setting Image Mirroring on imageG"); } // make sure the user points are reported from the POV of the depth generator userG_.GetAlternativeViewPointCap().SetViewPoint(depthG_); depthG_.GetAlternativeViewPointCap().SetViewPoint(imageG_); // set smoothing factor userG_.GetSkeletonCap().SetSmoothing(0.9); // start data streams context_.StartGeneratingAll(); // setup callbacks setCalibrationPoseCallbacks(); return 0; } void SkeletonSensor::waitForDeviceUpdateOnUser() { context_.WaitOneUpdateAll(userG_); updateTrackedUsers(); } void SkeletonSensor::updateTrackedUsers() { XnUserID users[64]; XnUInt16 nUsers = userG_.GetNumberOfUsers(); trackedUsers_.clear(); userG_.GetUsers(users, nUsers); for(int i = 0; i < nUsers; i++) { if(userG_.GetSkeletonCap().IsTracking(users[i])) { trackedUsers_.push_back(users[i]); } } } bool SkeletonSensor::isTracking(const unsigned int uid) { return userG_.GetSkeletonCap().IsTracking(uid); } Skeleton SkeletonSensor::getSkeleton(const unsigned int uid) { Skeleton result; // not tracking user if(!userG_.GetSkeletonCap().IsTracking(uid)) return result; // Array of available joints const unsigned int nJoints = 15; XnSkeletonJoint joints[nJoints] = { XN_SKEL_HEAD, XN_SKEL_NECK, XN_SKEL_RIGHT_SHOULDER, XN_SKEL_LEFT_SHOULDER, XN_SKEL_RIGHT_ELBOW, XN_SKEL_LEFT_ELBOW, XN_SKEL_RIGHT_HAND, XN_SKEL_LEFT_HAND, XN_SKEL_RIGHT_HIP, XN_SKEL_LEFT_HIP, XN_SKEL_RIGHT_KNEE, XN_SKEL_LEFT_KNEE, XN_SKEL_RIGHT_FOOT, XN_SKEL_LEFT_FOOT, XN_SKEL_TORSO }; // holds the joint position components XnSkeletonJointPosition positions[nJoints]; for (unsigned int i = 0; i < nJoints; i++) { userG_.GetSkeletonCap().GetSkeletonJointPosition(uid, joints[i], *(positions+i)); } SkeletonPoint points[15]; convertXnJointsToPoints(positions, points, nJoints); result.head = points[0]; result.neck = points[1]; result.rightShoulder = points[2]; result.leftShoulder = points[3]; result.rightElbow = points[4]; result.leftElbow = points[5]; result.rightHand = points[6]; result.leftHand = points[7]; result.rightHip = points[8]; result.leftHip = points[9]; result.rightKnee = points[10]; result.leftKnee = points[11]; result.rightFoot = points[12]; result.leftFoot = points[13]; result.torso = points[14]; return result; } std::vector<Skeleton> SkeletonSensor::getSkeletons() { std::vector<Skeleton> skeletons; for(unsigned int i = 0; i < getNumTrackedUsers(); i++) { Skeleton s = getSkeleton(trackedUsers_[i]); skeletons.push_back(s); } return skeletons; } unsigned int SkeletonSensor::getNumTrackedUsers() { return trackedUsers_.size(); } unsigned int SkeletonSensor::getUID(const unsigned int index) { return trackedUsers_[index]; } void SkeletonSensor::setPointModeToProjective() { pointModeProjective_ = true; } void SkeletonSensor::setPointModeToReal() { pointModeProjective_ = false; } const XnDepthPixel* SkeletonSensor::getDepthData() { return depthG_.GetDepthMap(); } const XnUInt8* SkeletonSensor::getImageData() { return imageG_.GetImageMap(); } const XnLabel* SkeletonSensor::getLabels() { xn::SceneMetaData sceneMD; userG_.GetUserPixels(0, sceneMD); return sceneMD.Data(); } int SkeletonSensor::setCalibrationPoseCallbacks() { XnCallbackHandle hUserCallbacks, hCalibrationStart, hCalibrationComplete; userG_.RegisterUserCallbacks(newUserCallback, lostUserCallback, this, hUserCallbacks); userG_.GetSkeletonCap().RegisterToCalibrationStart(calibrationStartCallback, this, hCalibrationStart); userG_.GetSkeletonCap().RegisterToCalibrationComplete(calibrationCompleteCallback, this, hCalibrationComplete); // turn on tracking of all joints userG_.GetSkeletonCap().SetSkeletonProfile(XN_SKEL_PROFILE_ALL); return 0; } void SkeletonSensor::convertXnJointsToPoints(XnSkeletonJointPosition* const joints, SkeletonPoint* const points, unsigned int numPoints) { XnPoint3D xpt; for(unsigned int i = 0; i < numPoints; i++) { xpt = joints[i].position; if(pointModeProjective_) depthG_.ConvertRealWorldToProjective(1, &xpt, &xpt); points[i].confidence = joints[i].fConfidence; points[i].x = xpt.X; points[i].y = xpt.Y; points[i].z = xpt.Z; } } void XN_CALLBACK_TYPE SkeletonSensor::newUserCallback(xn::UserGenerator& generator, XnUserID nId, void* pCookie) { put_flog(LOG_DEBUG, "New user %d, auto-calibrating", nId); SkeletonSensor* sensor = (SkeletonSensor*) pCookie; sensor->userG_.GetSkeletonCap().RequestCalibration(nId, true); } void XN_CALLBACK_TYPE SkeletonSensor::lostUserCallback(xn::UserGenerator& generator, XnUserID nId, void* pCookie) { put_flog(LOG_DEBUG, "Lost user %d", nId); } void XN_CALLBACK_TYPE SkeletonSensor::calibrationStartCallback(xn::SkeletonCapability& capability, XnUserID nId, void* pCookie) { put_flog(LOG_DEBUG, "Calibration started for user %d", nId); } void XN_CALLBACK_TYPE SkeletonSensor::calibrationCompleteCallback(xn::SkeletonCapability& capability, XnUserID nId, XnCalibrationStatus eStatus, void* pCookie) { SkeletonSensor* sensor = (SkeletonSensor*) pCookie; if(eStatus == XN_CALIBRATION_STATUS_OK) { put_flog(LOG_DEBUG, "Calibration completed: start tracking user %d", nId); sensor->userG_.GetSkeletonCap().StartTracking(nId); } else { put_flog(LOG_DEBUG, "Calibration failed for user %d", nId); sensor->userG_.GetSkeletonCap().RequestCalibration(nId, true); } } void XN_CALLBACK_TYPE SkeletonSensor::poseDetectedCallback(xn::PoseDetectionCapability& capability, const XnChar* strPose, XnUserID nId, void* pCookie) { put_flog(LOG_DEBUG, "Pose detected for user %d", nId); SkeletonSensor* sensor = (SkeletonSensor*) pCookie; sensor->userG_.GetPoseDetectionCap().StopPoseDetection(nId); sensor->userG_.GetSkeletonCap().RequestCalibration(nId, true); }

实验总结:通过本次实验,可以学会初步结合OpenCV和OpenNI来简单的提取手部所在的区域。

参考资料:

heresy博文:http://kheresy.wordpress.com/2012/08/23/hand-processing-with-openni/

Robert Walter工程代码下载:http://dl.dropbox.com/u/5505209/FingertipTuio3d.zip

附录:

听网友说本文提供的工程代码下载地址失效了,故现在提供本人以前测试的工程:http://download.csdn.net/detail/wuweigreat/5101183