Kinect Learning Notes (4): The Various Data Streams

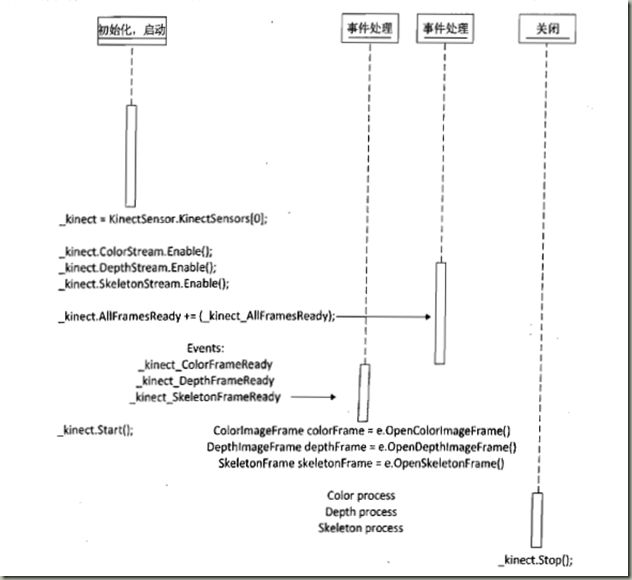

I. A flowchart of Kinect development

1. A simple Kinect program consists of the following parts:

(1) Select the Kinect sensor to use

```csharp
_kinect = KinectSensor.KinectSensors[0];
```

(2) Enable the data streams you need

```csharp
_kinect.DepthStream.Enable();
_kinect.ColorStream.Enable();
_kinect.SkeletonStream.Enable();
```

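(3) Register event handlers

This is where the main processing logic actually lives.

A handy trick: Visual Studio's code snippets can generate event-handling code for you. For example, after typing `_kinect.DepthFrameReady +=`, press Tab twice and VS generates the corresponding event handler method.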
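The `+=` registration in step (3) is ordinary C# event syntax. The sketch below illustrates the pattern without any hardware; `FakeSensor`, `FrameReady` and `EventDemo` are hypothetical names that are not part of the Kinect SDK. The handler has the same `(object sender, TEventArgs e)` shape as the methods the Tab-Tab snippet generates.

```csharp
using System;

// Hypothetical stand-in for KinectSensor: it only raises an event,
// so the "+=" registration pattern can be tried without a device.
public class FakeSensor
{
    public event EventHandler<EventArgs> FrameReady;

    // Simulates the sensor delivering one frame to every registered handler.
    public void RaiseFrame()
    {
        FrameReady?.Invoke(this, EventArgs.Empty);
    }
}

public static class EventDemo
{
    public static int FramesHandled;

    // Handler with the (object sender, TEventArgs e) signature.
    public static void Sensor_FrameReady(object sender, EventArgs e)
    {
        FramesHandled++;
    }

    public static int Run()
    {
        FramesHandled = 0;
        var sensor = new FakeSensor();
        sensor.FrameReady += Sensor_FrameReady; // register
        sensor.RaiseFrame();
        sensor.RaiseFrame();
        sensor.FrameReady -= Sensor_FrameReady; // unregister
        sensor.RaiseFrame();                    // no handler runs for this one
        return FramesHandled;
    }
}
```

`EventDemo.Run()` returns 2: only the frames raised while the handler was registered are counted.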

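II. Initializing and starting the Kinect device

The code is below. Remember to declare a private member variable `_kinect` and to call `startKinect()` from the `MainWindow()` constructor.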

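```csharp
// Requires: using Microsoft.Kinect; using System.Windows;
KinectSensor _kinect;

private void startKinect()
{
    if (KinectSensor.KinectSensors.Count > 0)
    {
        // Select the first Kinect device
        _kinect = KinectSensor.KinectSensors[0];
        MessageBox.Show("Current Kinect status: " + _kinect.Status);

        // Initial setup: enable the color image, depth image and skeleton tracking
        _kinect.ColorStream.Enable(ColorImageFormat.RgbResolution640x480Fps30);
        _kinect.DepthStream.Enable(DepthImageFormat.Resolution640x480Fps30);
        _kinect.SkeletonStream.Enable();

        // Register the event; AllFramesReady keeps the color, depth and
        // skeleton frames synchronized with each other
        _kinect.AllFramesReady += _kinect_AllFramesReady;

        // Start the Kinect
        _kinect.Start();
    }
    else
    {
        MessageBox.Show("No Kinect device was found");
    }
}

void _kinect_AllFramesReady(object sender, AllFramesReadyEventArgs e)
{
    // Frame processing is filled in by the following sections
}
```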

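III. Processing the color image stream

1. Add an Image control to the MainWindow window and name it `ImageCamera` (the code below refers to it by that name).

2. Add the following code to the `_kinect_AllFramesReady` event handler: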

```csharp
// Requires: using System.Windows.Media; using System.Windows.Media.Imaging;
// Display the color camera image
using (ColorImageFrame colorFrame = e.OpenColorImageFrame())
{
    if (colorFrame == null)
    {
        return;
    }
    byte[] pixels = new byte[colorFrame.PixelDataLength];
    colorFrame.CopyPixelDataTo(pixels);
    // A Bgr32 pixel occupies 4 bytes
    int stride = colorFrame.Width * 4;
    ImageCamera.Source = BitmapSource.Create(colorFrame.Width, colorFrame.Height,
        96, 96, PixelFormats.Bgr32, null, pixels, stride);
}
```

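In Bgr32, each pixel occupies 4 bytes (32 bits): blue, green and red at 8 bits each, followed by one unused byte. The alpha (transparency) channel belongs to `Bgra32`; `Bgr32` ignores the fourth byte.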
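To make the byte order concrete, here is a minimal sketch that writes one pixel into a Bgr32 buffer. `Bgr32Layout` and `WritePixel` are hypothetical helpers; the offsets 0, 1 and 2 for blue, green and red are the same values the depth section later stores in `BlueIndex`, `GreenIndex` and `RedIndex`.

```csharp
public static class Bgr32Layout
{
    // Hypothetical helper: 4 bytes per pixel (B, G, R, unused)
    public const int BytesPerPixel = 4;

    // Writes one pixel into a Bgr32 buffer starting at byte `offset`.
    public static void WritePixel(byte[] pixels, int offset, byte r, byte g, byte b)
    {
        pixels[offset + 0] = b; // byte 0: blue
        pixels[offset + 1] = g; // byte 1: green
        pixels[offset + 2] = r; // byte 2: red
        // byte 3 is left untouched; Bgr32 ignores it
    }
}
```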

`BitmapSource.Create` turns the flat, one-dimensional pixel array into a two-dimensional image.

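`stride` is the row step of the image: the number of bytes occupied by one row of pixels, which here is the width of the camera frame multiplied by 4.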
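Because the pixel array is one-dimensional, the byte offset of pixel (x, y) follows directly from the stride. A small sketch, assuming a 4-byte-per-pixel format such as Bgr32 (`StrideMath` is a hypothetical name):

```csharp
public static class StrideMath
{
    // Bytes per row for a 4-byte-per-pixel format such as Bgr32.
    public static int Stride(int width) => width * 4;

    // Byte offset of pixel (x, y): skip y full rows, then x pixels.
    public static int Offset(int x, int y, int width) => y * Stride(width) + x * 4;
}
```

For a 640×480 frame this gives a stride of 2560 bytes and a buffer of 2560 × 480 = 1,228,800 bytes.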

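The two `96` arguments are the horizontal and vertical DPI. They do not make the image sharper; they tell WPF how large to draw the bitmap. 96 DPI is the Windows standard, at which one image pixel equals one WPF device-independent unit, so it is the usual choice.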
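As a rough check of what the DPI arguments do: WPF measures layout in device-independent units of 1/96 inch, so a bitmap's natural size in those units is its pixel count scaled by 96 / DPI. A sketch (`DpiMath` is a hypothetical helper):

```csharp
public static class DpiMath
{
    // Natural size, in WPF device-independent units (1/96 inch),
    // of a bitmap that is `pixels` wide and tagged with `dpi`.
    public static double LogicalSize(int pixels, double dpi) => pixels * 96.0 / dpi;
}
```

A 640-pixel-wide frame tagged 96 DPI is 640 units wide; tagged 192 DPI it is 320 units wide, with identical pixel data.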

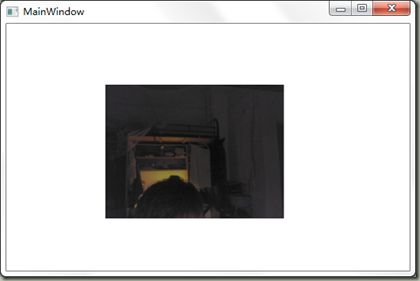

3. Result

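IV. Capturing depth data

1. Define the valid depth range (distances in millimeters) and the byte offset of each color channel.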

```csharp
// Valid depth range in millimeters
const float MaxDepthDistance = 4095;
const float MinDepthDistance = 850;
const float MaxDepthDistanceOffest = MaxDepthDistance - MinDepthDistance;

// Byte offsets of each channel within a Bgr32 pixel
private const int RedIndex = 2;
private const int GreenIndex = 1;
private const int BlueIndex = 0;
```
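The conversion code below reads two values from each raw depth sample. In Kinect SDK 1.x the low 3 bits of each 16-bit sample hold the player index and the upper 13 bits hold the distance in millimeters; the SDK exposes these as `DepthImageFrame.PlayerIndexBitmask` (7) and `DepthImageFrame.PlayerIndexBitmaskWidth` (3). A standalone sketch of the unpacking, with those two values copied locally so it runs without the SDK (`DepthPacking` is a hypothetical name):

```csharp
public static class DepthPacking
{
    // Local copies of DepthImageFrame.PlayerIndexBitmaskWidth / PlayerIndexBitmask
    const int PlayerIndexBitmaskWidth = 3;
    const int PlayerIndexBitmask = 7;

    // Low 3 bits: player index (0 = no player)
    public static int Player(short raw) => raw & PlayerIndexBitmask;

    // Remaining bits: distance in millimeters
    public static int DepthMillimeters(short raw) => raw >> PlayerIndexBitmaskWidth;
}
```

Reading the raw sample directly as a distance, without the shift, makes every value 8 times too large.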

2. Code

```csharp
// Requires: using System.Windows.Media; using System.Windows.Media.Imaging;

// Hypothetical switch (not in the original post):
// true = color bands by distance, false = grayscale intensity
private bool showDepthBands = false;

private byte[] convertDepthFrameToColorFrame(DepthImageFrame depthFrame)
{
    short[] rawDepthData = new short[depthFrame.PixelDataLength];
    depthFrame.CopyPixelDataTo(rawDepthData);

    byte[] pixels = new byte[depthFrame.Height * depthFrame.Width * 4];

    for (int depthIndex = 0, colorIndex = 0;
         depthIndex < rawDepthData.Length && colorIndex < pixels.Length;
         depthIndex++, colorIndex += 4)
    {
        // Low 3 bits: player index; remaining bits: distance in millimeters
        int player = rawDepthData[depthIndex] & DepthImageFrame.PlayerIndexBitmask;
        int depth = rawDepthData[depthIndex] >> DepthImageFrame.PlayerIndexBitmaskWidth;

        if (showDepthBands)
        {
            // Blue up to 900 mm, green from 900 to 2000 mm, red from 2000 mm on
            byte b = 0, g = 0, r = 0;
            if (depth <= 900) b = 255;
            else if (depth < 2000) g = 255;
            else r = 255;
            pixels[colorIndex + BlueIndex] = b;
            pixels[colorIndex + GreenIndex] = g;
            pixels[colorIndex + RedIndex] = r;
        }
        else
        {
            // Grayscale intensity derived from the distance
            // (CalculateIntensityFromDepth is not shown in this post)
            byte intensity = CalculateIntensityFromDepth(depth);
            pixels[colorIndex + BlueIndex] = intensity;
            pixels[colorIndex + GreenIndex] = intensity;
            pixels[colorIndex + RedIndex] = intensity;
        }

        // Pixels that belong to a player are painted light green
        if (player > 0)
        {
            pixels[colorIndex + BlueIndex] = Colors.LightGreen.B;
            pixels[colorIndex + GreenIndex] = Colors.LightGreen.G;
            pixels[colorIndex + RedIndex] = Colors.LightGreen.R;
        }
    }
    return pixels;
}

void _kinect_AllFramesReady(object sender, AllFramesReadyEventArgs e)
{
    // Display the color camera image
    using (ColorImageFrame colorFrame = e.OpenColorImageFrame())
    {
        // Skip only the color part when no color frame is available,
        // so the depth image below is still updated
        if (colorFrame != null)
        {
            byte[] pixels = new byte[colorFrame.PixelDataLength];
            colorFrame.CopyPixelDataTo(pixels);
            int stride = colorFrame.Width * 4; // 4 bytes per Bgr32 pixel
            ImageCamera.Source = BitmapSource.Create(colorFrame.Width, colorFrame.Height,
                96, 96, PixelFormats.Bgr32, null, pixels, stride);
        }
    }

    // Display the depth image; imageDepth is a second Image control on MainWindow
    using (DepthImageFrame depthFrame = e.OpenDepthImageFrame())
    {
        if (depthFrame == null)
        {
            return;
        }
        byte[] pixels = convertDepthFrameToColorFrame(depthFrame);
        int stride = depthFrame.Width * 4;
        imageDepth.Source = BitmapSource.Create(depthFrame.Width, depthFrame.Height,
            96, 96, PixelFormats.Bgr32, null, pixels, stride);
    }
}
```
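The depth code calls `CalculateIntensityFromDepth`, which the post does not show. Below is a hypothetical version, assuming a linear mapping built on the constants from step 1: distances at or below `MinDepthDistance` become white (255) and distances at or beyond `MaxDepthDistance` become black (0). The clamp is an addition of this sketch so that out-of-range distances cannot wrap around when cast to `byte`.

```csharp
using System;

public static class DepthIntensity
{
    // Same constants as step 1 (millimeters)
    const float MaxDepthDistance = 4095;
    const float MinDepthDistance = 850;
    const float MaxDepthDistanceOffest = MaxDepthDistance - MinDepthDistance;

    // Hypothetical helper: nearer = brighter, linear between the two limits.
    public static byte CalculateIntensityFromDepth(int distance)
    {
        // Clamp the distance into [0, MaxDepthDistanceOffest] above the minimum
        float clamped = Math.Min(Math.Max(distance - MinDepthDistance, 0f), MaxDepthDistanceOffest);
        return (byte)(255 - (255 * clamped / MaxDepthDistanceOffest));
    }
}
```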

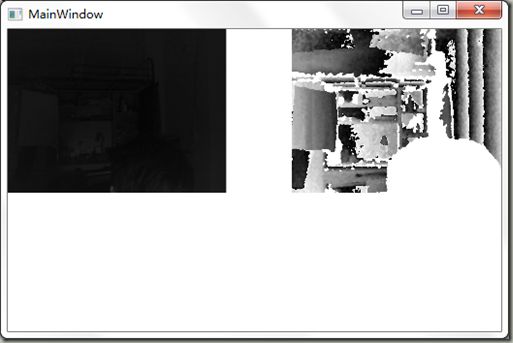

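3. Result

V. Skeleton tracking

1. First comment out the `AllFramesReady` registration from before, then register a color-stream event and a skeleton event instead (`_kinect.ColorFrameReady += _kinect_ColorFrameReady;` and `_kinect.SkeletonFrameReady += _kinect_SkeletonFrameReady;`), and add the following code: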

```csharp
// Requires: using System.Linq; using System.Windows; using System.Windows.Controls;
//           using System.Windows.Media; using System.Windows.Media.Imaging;
// ellipseHead is an Ellipse placed on a Canvas that overlays ImageCamera
private Skeleton[] skeletons;

void _kinect_SkeletonFrameReady(object sender, SkeletonFrameReadyEventArgs e)
{
    bool isSkeletonDataReady = false;
    using (SkeletonFrame skeletonFrame = e.OpenSkeletonFrame())
    {
        if (skeletonFrame != null)
        {
            skeletons = new Skeleton[skeletonFrame.SkeletonArrayLength];
            skeletonFrame.CopySkeletonDataTo(skeletons);
            isSkeletonDataReady = true;
        }
    }

    if (isSkeletonDataReady)
    {
        // Use the first fully tracked skeleton, if any
        Skeleton currentSkeleton = (from s in skeletons
                                    where s.TrackingState == SkeletonTrackingState.Tracked
                                    select s).FirstOrDefault();
        if (currentSkeleton != null)
        {
            lockHeadWithSpot(currentSkeleton);
        }
    }
}

// Moves ellipseHead to the head joint's position in the color image
void lockHeadWithSpot(Skeleton s)
{
    Joint head = s.Joints[JointType.Head];
    // Map the 3-D skeleton position to color-image pixel coordinates.
    // SDK 1.6+ marks this method obsolete in favor of
    // _kinect.CoordinateMapper.MapSkeletonPointToColorPoint.
    ColorImagePoint colorPoint = _kinect.MapSkeletonPointToColor(head.Position, _kinect.ColorStream.Format);
    // Scale from color-frame pixels to the size of ImageCamera
    // (Width/Height must be set in XAML; otherwise they are NaN)
    Point p = new Point(
        (int)(ImageCamera.Width * colorPoint.X / _kinect.ColorStream.FrameWidth),
        (int)(ImageCamera.Height * colorPoint.Y / _kinect.ColorStream.FrameHeight));
    Canvas.SetLeft(ellipseHead, p.X);
    Canvas.SetTop(ellipseHead, p.Y); // Y is measured from the top of the Canvas
}

void _kinect_ColorFrameReady(object sender, ColorImageFrameReadyEventArgs e)
{
    using (ColorImageFrame colorFrame = e.OpenColorImageFrame())
    {
        if (colorFrame == null)
        {
            return;
        }
        byte[] pixels = new byte[colorFrame.PixelDataLength];
        colorFrame.CopyPixelDataTo(pixels);
        // A Bgr32 pixel occupies 4 bytes
        int stride = colorFrame.Width * 4;
        ImageCamera.Source = BitmapSource.Create(colorFrame.Width, colorFrame.Height,
            96, 96, PixelFormats.Bgr32, null, pixels, stride);
    }
}
```
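The scaling in `lockHeadWithSpot` is plain proportion arithmetic, and `Canvas.SetLeft`/`Canvas.SetTop` position an element by its top-left corner. A minimal sketch of both calculations; `OverlayMath`, `ToControl` and `CenterOffset` are hypothetical helpers, and centering the ellipse on the joint is a suggested refinement rather than part of the original code.

```csharp
public static class OverlayMath
{
    // Scales a coordinate from color-frame pixels to the control's size.
    public static double ToControl(int framePos, int frameSize, double controlSize)
        => controlSize * framePos / frameSize;

    // Shifts a position by half the marker size so the marker's center,
    // not its top-left corner, lands on the joint.
    public static double CenterOffset(double pos, double markerSize) => pos - markerSize / 2;
}
```

For example, x = 320 in a 640-pixel-wide frame shown in a 320-unit-wide control maps to 160; a 20-unit ellipse would then be placed at 150 to center it there.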

2. Result

VI. Shutting down the Kinect device

```csharp
private void stopKinect()
{
    if (_kinect != null)
    {
        if (_kinect.Status == KinectStatus.Connected)
        {
            _kinect.Stop();
        }
    }
}
```